TECHNICAL ASSET FINGERPRINT

82488db31cbc23b32104f271

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

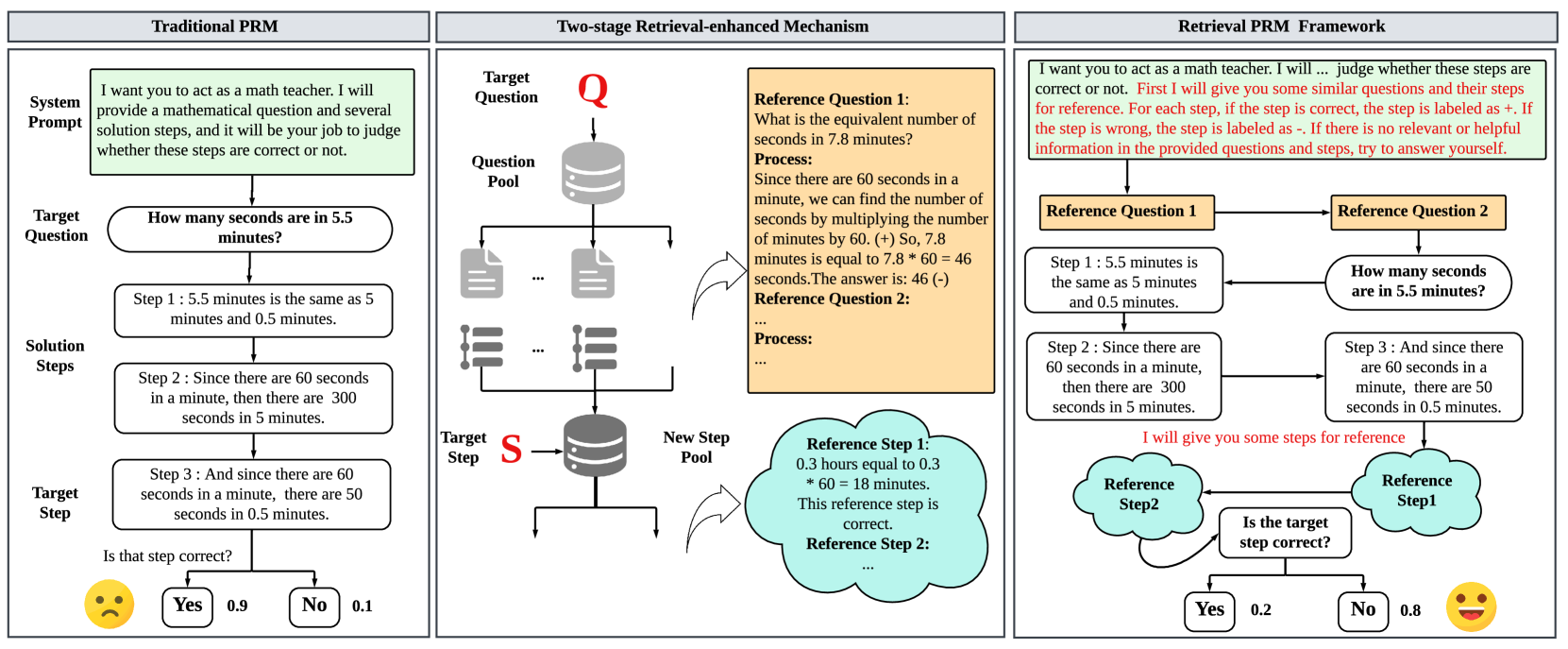

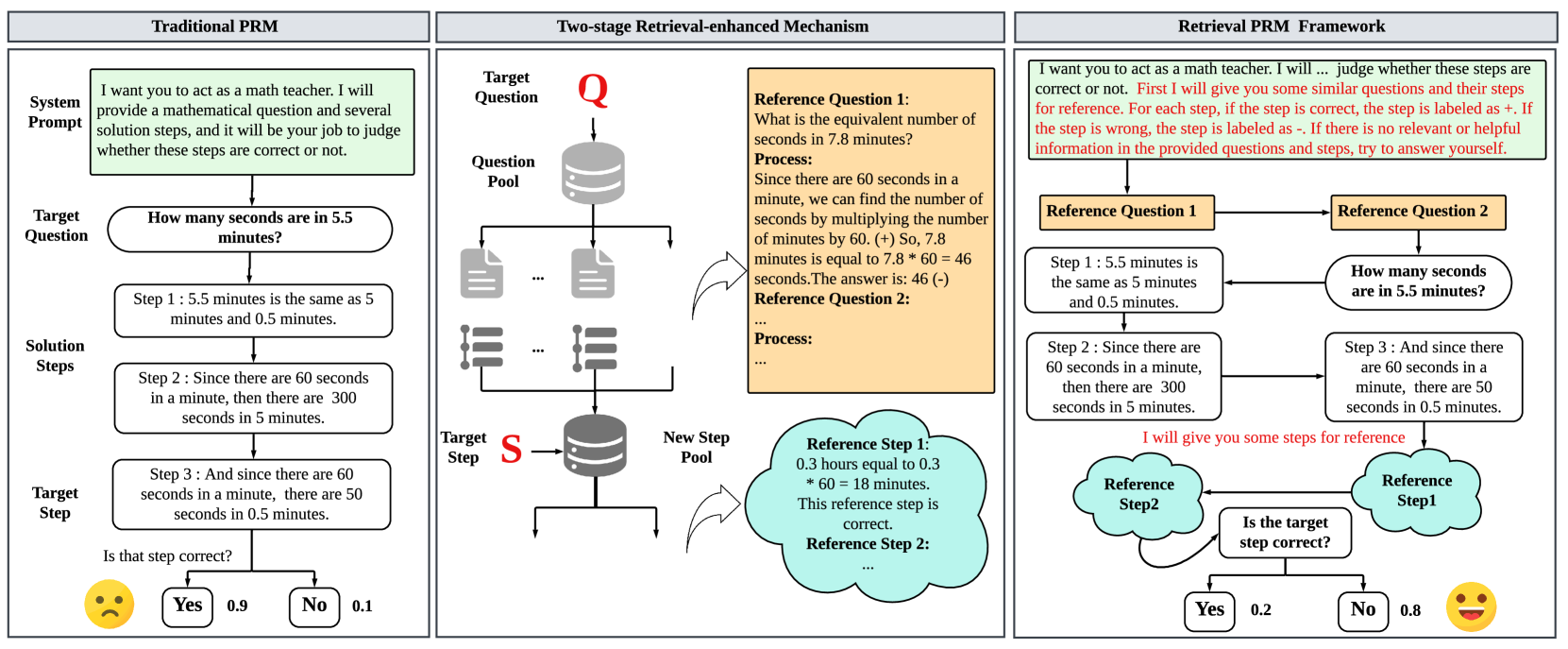

## Flowchart: Comparison of PRM Frameworks

### Overview

The image presents a comparative diagram of three different Process Reward Model (PRM) frameworks: Traditional PRM, Two-stage Retrieval-enhanced Mechanism, and Retrieval PRM Framework. Each framework is illustrated as a flowchart, outlining the process from the initial prompt to the final evaluation.

### Components/Axes

**1. Traditional PRM (Left Panel):**

* **Title:** Traditional PRM

* **System Prompt:** "I want you to act as a math teacher. I will provide a mathematical question and several solution steps, and it will be your job to judge whether these steps are correct or not."

* **Target Question:** "How many seconds are in 5.5 minutes?"

* **Solution Steps:**

* Step 1: "5.5 minutes is the same as 5 minutes and 0.5 minutes."

* Step 2: "Since there are 60 seconds in a minute, then there are 300 seconds in 5 minutes."

* Step 3: "And since there are 60 seconds in a minute, there are 50 seconds in 0.5 minutes."

* **Target Step:** "Is that step correct?"

* **Decision Outcomes:**

* Yes: 0.9 (associated with a sad face emoji)

* No: 0.1

**2. Two-stage Retrieval-enhanced Mechanism (Middle Panel):**

* **Title:** Two-stage Retrieval-enhanced Mechanism

* **Target Question:** Labeled as "Q" in red.

* **Question Pool:** Represented by a database icon, followed by a series of document icons.

* **Target Step:** Labeled as "S" in red.

* **New Step Pool:** Represented by a database icon.

* **Reference Question 1:**

* "What is the equivalent number of seconds in 7.8 minutes?"

* Process: "Since there are 60 seconds in a minute, we can find the number of seconds by multiplying the number of minutes by 60. (+) So, 7.8 minutes is equal to 7.8 * 60 = 46 seconds. The answer is: 46 (-)"

* **Reference Question 2:**

* Process: "..."

* **Reference Step 1:**

* "0.3 hours equal to 0.3 * 60 = 18 minutes. This reference step is correct."

* **Reference Step 2:** "..."

**3. Retrieval PRM Framework (Right Panel):**

* **Title:** Retrieval PRM Framework

* **System Prompt:** "I want you to act as a math teacher. I will... judge whether these steps are correct or not. First I will give you some similar questions and their steps for reference. For each step, if the step is correct, the step is labeled as +. If the step is wrong, the step is labeled as -. If there is no relevant or helpful information in the provided questions and steps, try to answer yourself."

* **Reference Question 1:**

* Step 1: "5.5 minutes is the same as 5 minutes and 0.5 minutes."

* Step 2: "Since there are 60 seconds in a minute, then there are 300 seconds in 5 minutes."

* **Reference Question 2:**

* **Target Question:** "How many seconds are in 5.5 minutes?"

* Step 3: "And since there are 60 seconds in a minute, there are 50 seconds in 0.5 minutes."

* **Additional Text:** "I will give you some steps for reference"

* **Reference Step 2**

* **Reference Step 1**

* **Target Step:** "Is the target step correct?"

* **Decision Outcomes:**

* Yes: 0.2 (associated with a happy face emoji)

* No: 0.8

### Detailed Analysis

**Traditional PRM:**

* The system is given a direct question and a series of solution steps.

* The system must evaluate the correctness of the provided steps.

* The model assigns 0.9 to "Yes", i.e., it judges the target step (Step 3) correct. Step 3 is actually wrong (0.5 minutes is 30 seconds, not 50), so the sad face emoji marks this as a failed judgment.
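A quick arithmetic check shows why the target step should be rejected. This is a minimal sketch; the helper name `seconds_in` and the two "claim" variables are illustrative and do not come from the figure, which only states the step texts.

```python
SECONDS_PER_MINUTE = 60


def seconds_in(minutes: float) -> float:
    """Convert a duration in minutes to seconds."""
    return minutes * SECONDS_PER_MINUTE


# Values asserted by the solution steps in the figure (hypothetical variable names).
step2_claim = 300  # "there are 300 seconds in 5 minutes"
step3_claim = 50   # "there are 50 seconds in 0.5 minutes"

print(seconds_in(5) == step2_claim)    # Step 2 holds
print(seconds_in(0.5) == step3_claim)  # Step 3 fails: 0.5 minutes is 30 seconds
print(seconds_in(5.5))                 # the correct total for the target question
```

Step 2 checks out, while Step 3 does not, so a correct PRM judgment for the target step is "No".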

**Two-stage Retrieval-enhanced Mechanism:**

* This framework involves retrieving relevant information from a question pool and a step pool.

* Reference questions and steps are used to aid in the problem-solving process.

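* Reference Question 1 contains an arithmetic error (7.8 * 60 = 468, not 46); the figure labels this step (-), so it serves as a deliberately incorrect reference example.

* Reference Step 1 (0.3 * 60 = 18 minutes) is labeled correct.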
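Because each reference step carries a (+) or (-) marker, a retrieved solution can be split into labeled steps before it is shown to the model. The sketch below assumes every step ends with exactly one such marker, as in the Reference Question 1 text; the function name `parse_labeled_process` is hypothetical.

```python
import re


def parse_labeled_process(process: str) -> list[tuple[str, bool]]:
    """Split a reference solution into (step_text, is_correct) pairs.

    Assumes each step is terminated by a "(+)" or "(-)" marker, as in the figure.
    """
    steps = []
    # Non-greedy step text, optional whitespace, then the parenthesized label.
    for text, mark in re.findall(r"(.+?)\s*\(([+-])\)", process):
        steps.append((text.strip(), mark == "+"))
    return steps


process = (
    "Since there are 60 seconds in a minute, we can find the number of seconds "
    "by multiplying the number of minutes by 60. (+) "
    "So, 7.8 minutes is equal to 7.8 * 60 = 46 seconds. The answer is: 46 (-)"
)

for text, ok in parse_labeled_process(process):
    print("+" if ok else "-", text)
```

The method step comes back as correct and the "46 seconds" step as incorrect, matching the labels in the figure.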

**Retrieval PRM Framework:**

* This framework also uses reference questions and steps.

* The system is provided with a target question and must determine the correctness of the steps.

* The model assigns 0.8 to "No", i.e., it correctly judges the target step (Step 3) to be wrong; the happy face emoji marks this as a successful judgment.

### Key Observations

* The Traditional PRM and Retrieval PRM Frameworks both involve evaluating the correctness of solution steps.

* The Two-stage Retrieval-enhanced Mechanism incorporates a retrieval process to gather relevant information.

* Reference Question 1 in the Two-stage Retrieval-enhanced Mechanism contains an arithmetic error (7.8 * 60 = 46), which the figure labels (-).

* The confidence levels in the "Yes/No" decisions differ between the Traditional PRM and Retrieval PRM Frameworks.

### Interpretation

The diagram contrasts a PRM that judges a step using only the question and its solution steps (Traditional PRM) with one that is also given retrieved reference questions and reference steps (Retrieval PRM Framework), supplied by the Two-stage Retrieval-enhanced Mechanism. The incorrect step in Reference Question 1 is explicitly labeled (-), so references carry correctness labels the model can use rather than being assumed correct. On the same incorrect target step, the Traditional PRM wrongly answers "Yes" (0.9) while the Retrieval PRM Framework correctly answers "No" (0.8); the sad and happy emojis mark which judgment is wrong and which is right.

EXPERT: gemini-2.5-flash-lite-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash-lite

## Diagrams: Comparison of Three Problem-Solving Frameworks

### Overview

This image presents a comparative analysis of three frameworks for judging mathematical solution steps: "Traditional PRM," "Two-stage Retrieval-enhanced Mechanism," and "Retrieval PRM Framework." Each framework addresses a mathematical target question by providing a system prompt, a target question, and a series of solution steps. The diagrams illustrate the flow of information and decision-making within each framework, highlighting differences in how solution steps are verified.

### Components/Axes

The image is divided into three main vertical sections, each representing one of the frameworks. Within each section, the following common elements are observed:

* **System Prompt:** A text box at the top, defining the role of the system and the task.

* **Target Question:** A rounded rectangle containing the specific question to be solved.

* **Solution Steps:** A series of rounded rectangles detailing the steps taken to solve the problem.

* **Target Step/Verification:** A final step or decision point, often accompanied by a question and a binary outcome (Yes/No) with associated probabilities.

**Framework 1: Traditional PRM**

* **System Prompt:** A green text box containing the text: "I want you to act as a math teacher. I will provide a mathematical question and several solution steps, and it will be your job to judge whether these steps are correct or not."

* **Target Question:** A rounded rectangle with the text: "How many seconds are in 5.5 minutes?"

* **Solution Steps:**

* Step 1: A rounded rectangle with the text: "Step 1: 5.5 minutes is the same as 5 minutes and 0.5 minutes."

* Step 2: A rounded rectangle with the text: "Step 2: Since there are 60 seconds in a minute, then there are 300 seconds in 5 minutes."

* Step 3: A rounded rectangle with the text: "Step 3: And since there are 60 seconds in a minute, there are 50 seconds in 0.5 minutes."

* **Target Step/Verification:**

* A question: "Is that step correct?"

* Two branches labeled "Yes" and "No."

* Associated values: "Yes" has a value of "0.9," and "No" has a value of "0.1."

* A sad yellow emoji is positioned to the left of the "Yes/No" branches.

**Framework 2: Two-stage Retrieval-enhanced Mechanism**

* **Target Question:** Labeled "Q" with an arrow pointing down to a database icon.

* **Question Pool:** Represented by a database icon, with arrows pointing to multiple document icons (representing retrieved questions).

* **Reference Question 1:** An orange text box containing:

* "Reference Question 1:"

* "What is the equivalent number of seconds in 7.8 minutes?"

* "Process:"

* "Since there are 60 seconds in a minute, we can find the number of seconds by multiplying the number of minutes by 60. (+) So, 7.8 minutes is equal to 7.8 * 60 = 46 seconds. The answer is: 46 (-)"

* "Reference Question 2:"

* "Process:"

* "..."

* **Document Icons:** Multiple document icons with ellipses (...) indicating a pool of questions.

* **List Icons:** Multiple list icons with ellipses (...) indicating a pool of solution steps.

* **Target Step:** Labeled "S" with an arrow pointing to a database icon labeled "New Step Pool."

* **New Step Pool:** A database icon representing a pool of new steps.

* **Reference Step 1:** A light blue cloud shape containing:

* "Reference Step 1:"

* "0.3 hours equal to 0.3"

* "* 60 = 18 minutes."

* "This reference step is correct."

* "Reference Step 2:"

* "..."

* **Arrows:** Indicate the flow from the target question to the question pool, then to retrieved documents and lists, and finally to the target step and new step pool. A curved arrow connects the document/list icons to the "Reference Question 1" text box.

**Framework 3: Retrieval PRM Framework**

* **System Prompt:** A green text box containing the text: "I want you to act as a math teacher. I will ... judge whether these steps are correct or not. First I will give you some similar questions and their steps for reference. For each step, if the step is correct, the step is labeled as +. If the step is wrong, the step is labeled as -. If there is no relevant or helpful information in the provided questions and steps, try to answer yourself."

* **Reference Question 1:** An orange text box.

* **Reference Question 2:** An orange text box. An arrow connects "Reference Question 1" to "Reference Question 2."

* **Target Question:** A rounded rectangle with the text: "How many seconds are in 5.5 minutes?" An arrow points from "Reference Question 2" to this target question.

* **Solution Steps:**

* Step 1: A rounded rectangle with the text: "Step 1: 5.5 minutes is the same as 5 minutes and 0.5 minutes." An arrow connects this to "Reference Question 1."

* Step 2: A rounded rectangle with the text: "Step 2: Since there are 60 seconds in a minute, then there are 300 seconds in 5 minutes." An arrow connects this to "Step 1."

* Step 3: A rounded rectangle with the text: "Step 3: And since there are 60 seconds in a minute, there are 50 seconds in 0.5 minutes." An arrow connects this to "Step 2."

* **Reference Steps:** Two light blue cloud shapes labeled "Reference Step2" and "Reference Step1." Arrows connect "Step 3" to "Reference Step1" and "Reference Step2" to "Step 3."

* **Verification:**

* A question: "Is the target step correct?"

* Two branches labeled "Yes" and "No."

* Associated values: "Yes" has a value of "0.2," and "No" has a value of "0.8."

* A happy yellow emoji is positioned to the right of the "Yes/No" branches.

* **Textual Annotation:** A red text annotation below "Step 3" and above the "Reference Step2" cloud reads: "I will give you some steps for reference."

### Detailed Analysis or Content Details

**Framework 1: Traditional PRM**

This framework presents the target question and its solution steps directly to the model for judgment. The solution steps provided are:

1. Decomposition of 5.5 minutes into 5 minutes and 0.5 minutes.

2. Calculation of seconds in 5 minutes (300 seconds).

3. Calculation of seconds in 0.5 minutes (stated as 50 seconds, which is wrong: 0.5 * 60 = 30).

The final step asks for a binary judgment of Step 3 ("Is that step correct?"). The model assigns a high probability to "Yes" (0.9) and a low probability to "No" (0.1), so it accepts a step that is actually wrong; the sad emoji marks this as an incorrect judgment.

**Framework 2: Two-stage Retrieval-enhanced Mechanism**

This framework introduces a retrieval mechanism.

* The "Target Question" is processed, leading to a "Question Pool."

* From the "Question Pool," relevant questions are retrieved, exemplified by "Reference Question 1" (calculating seconds in 7.8 minutes). Its process has two labeled steps: the method step is marked (+), and the computation "7.8 * 60 = 46 seconds" is marked (-) because the correct value is 468.

* In parallel, the "Target Step" (S) is used to query a "New Step Pool" from which reference steps are retrieved.

* A "Reference Step 1" is shown, which is deemed "correct." This step appears to be an example of a correct step within the system.

The diagram implies a two-stage process: similar questions are retrieved using the target question, and similar steps are retrieved using the target step. The curved arrow from the retrieved documents/lists to the "Reference Question 1" box suggests that the retrieved items populate the reference boxes.
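The two retrieval stages can be sketched as two top-k similarity searches, one keyed on the target question (Q) and one on the target step (S). The figure does not say which similarity measure is used, so this sketch swaps in a simple bag-of-words cosine similarity as a stand-in for a real text embedding; the pools, the helper names, and the `k` values are all hypothetical.

```python
import math
import re
from collections import Counter


def bow(text: str) -> Counter:
    """Bag-of-words vector: lowercase words and decimal numbers such as "5.5"."""
    return Counter(re.findall(r"[a-z]+|\d+(?:\.\d+)?", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k(query: str, pool: list[str], k: int) -> list[str]:
    """Return the k pool entries most similar to the query."""
    q = bow(query)
    return sorted(pool, key=lambda doc: cosine(q, bow(doc)), reverse=True)[:k]


# Hypothetical pools; the real Question Pool and New Step Pool contents are not given.
question_pool = [
    "What is the equivalent number of seconds in 7.8 minutes?",
    "What is the area of a circle with radius 3?",
    "How many minutes are in 0.3 hours?",
]
step_pool = [
    "0.3 hours equal to 0.3 * 60 = 18 minutes.",
    "The area is pi * 3^2 = 9 pi.",
]

target_question = "How many seconds are in 5.5 minutes?"
target_step = "And since there are 60 seconds in a minute, there are 50 seconds in 0.5 minutes."

# Stage 1: retrieve reference questions with the target question (Q).
ref_questions = top_k(target_question, question_pool, k=2)
# Stage 2: retrieve reference steps with the target step (S).
ref_steps = top_k(target_step, step_pool, k=1)

print(ref_questions)
print(ref_steps)
```

Keying the second stage on the step rather than the question lets a step about hours-to-minutes conversion be retrieved even though its parent question differs from the target question.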

**Framework 3: Retrieval PRM Framework**

This framework combines retrieval with a more explicit system prompt for judging correctness.

* The "System Prompt" clearly defines how steps will be labeled (+ for correct, - for wrong).

* "Reference Question 1" and "Reference Question 2" are provided, with "Reference Question 1" being linked to "Step 1" of the target problem.

* The "Target Question" is "How many seconds are in 5.5 minutes?"

* The "Solution Steps" (Step 1, Step 2, Step 3) are presented sequentially, with arrows indicating their dependency.

* "Reference Step1" and "Reference Step2" are provided as examples. The annotation "I will give you some steps for reference" clarifies their purpose.

* The verification stage ("Is the target step correct?") assigns a high probability to "No" (0.8) and a low probability to "Yes" (0.2), with a happy emoji. Since Step 3 is indeed wrong (0.5 minutes is 30 seconds), this is the correct judgment, in contrast to Framework 1's acceptance of the same step.
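The Retrieval PRM input can be pictured as one prompt that places labeled reference questions before the target question and reference steps before the final judgment. This sketch follows the top-to-bottom order of the right panel, but the exact layout, the function name `build_retrieval_prm_prompt`, and the abbreviated system prompt (only the visible retrieval instructions, since the figure elides part of it) are assumptions.

```python
def build_retrieval_prm_prompt(
    system_prompt: str,
    ref_questions: list[tuple[str, list[tuple[str, bool]]]],
    target_question: str,
    prior_steps: list[str],
    target_step: str,
    ref_steps: list[str],
) -> str:
    """Assemble a Retrieval PRM style prompt (layout is an illustrative assumption)."""
    lines = [system_prompt, ""]
    for i, (question, steps) in enumerate(ref_questions, start=1):
        # Each reference step carries the + / - label described in the system prompt.
        process = " ".join(f"{text} ({'+' if ok else '-'})" for text, ok in steps)
        lines += [f"Reference Question {i}: {question}", f"Process: {process}", ""]
    lines.append(f"Target Question: {target_question}")
    # The target step is the last step of the solution being judged.
    for i, step in enumerate(prior_steps + [target_step], start=1):
        lines.append(f"Step {i}: {step}")
    lines += ["", "I will give you some steps for reference."]
    for i, step in enumerate(ref_steps, start=1):
        lines.append(f"Reference Step {i}: {step}")
    lines += ["", "Is the target step correct?"]
    return "\n".join(lines)


prompt = build_retrieval_prm_prompt(
    system_prompt=(
        "First I will give you some similar questions and their steps for reference. "
        "For each step, if the step is correct, the step is labeled as +. "
        "If the step is wrong, the step is labeled as -."
    ),
    ref_questions=[(
        "What is the equivalent number of seconds in 7.8 minutes?",
        [
            ("Since there are 60 seconds in a minute, we can find the number of "
             "seconds by multiplying the number of minutes by 60.", True),
            ("So, 7.8 minutes is equal to 7.8 * 60 = 46 seconds.", False),
        ],
    )],
    target_question="How many seconds are in 5.5 minutes?",
    prior_steps=[
        "5.5 minutes is the same as 5 minutes and 0.5 minutes.",
        "Since there are 60 seconds in a minute, then there are 300 seconds in 5 minutes.",
    ],
    target_step="And since there are 60 seconds in a minute, there are 50 seconds in 0.5 minutes.",
    ref_steps=["0.3 hours equal to 0.3 * 60 = 18 minutes. This reference step is correct."],
)
print(prompt)
```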

### Key Observations

* **Varying Confidence in the Target Step:** For the same target step (Step 3), Framework 1 assigns 0.9 to "Yes," while Framework 3 assigns only 0.2 to "Yes" and 0.8 to "No."

* **Role of Reference Information:** Frameworks 2 and 3 explicitly use "Reference Questions" and "Reference Steps" to aid verification, whereas Framework 1 does not.

* **Complexity of Mechanisms:** Frameworks 2 and 3 appear more complex, involving retrieval mechanisms and explicit guidance on step evaluation.

* **Emoji Usage:** The emojis are easiest to read as marking whether each framework's judgment is right. Framework 1's sad emoji accompanies a high "Yes" probability for a step that is actually wrong, and Framework 3's happy emoji accompanies a high "No" probability for the same wrong step.
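Under that reading, each emoji is simply the result of comparing the framework's verdict with the true label of Step 3. A minimal sketch, assuming a 0.5 decision threshold on P("Yes") (the figure does not state a threshold) and a hypothetical helper name:

```python
def judgment_is_correct(p_yes: float, step_is_correct: bool, threshold: float = 0.5) -> bool:
    """Return True when the PRM's Yes/No verdict matches the ground-truth step label."""
    predicted_correct = p_yes >= threshold
    return predicted_correct == step_is_correct


# Ground truth for Step 3: it claims 50 seconds, but 0.5 minutes is 30 seconds.
step3_is_correct = 0.5 * 60 == 50

print(judgment_is_correct(0.9, step3_is_correct))  # Traditional PRM: wrong verdict (sad face)
print(judgment_is_correct(0.2, step3_is_correct))  # Retrieval PRM: right verdict (happy face)
```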

### Interpretation

These diagrams illustrate different approaches to a problem-solving task, likely within a Natural Language Processing or AI context, where a system is tasked with evaluating mathematical steps.

* **Traditional PRM (Framework 1)** represents the baseline. It directly presents a problem and its solution steps, then asks for a judgment. Its high "Yes" probability (0.9) for Step 3, which wrongly states that 0.5 minutes is 50 seconds, is a confident error, and the sad emoji marks it as such.

* **Two-stage Retrieval-enhanced Mechanism (Framework 2)** introduces the concept of retrieving relevant information (questions and steps) from a pool. This suggests a more sophisticated approach where the system leverages external knowledge to assist in solving the target problem. The diagram highlights the flow of information from the target question to retrieval and then to the generation or evaluation of steps.

* **Retrieval PRM Framework (Framework 3)** builds upon the retrieval idea and provides a more detailed system prompt for evaluation. The critical difference is its verdict on the same target step: 0.2 "Yes" and 0.8 "No." Because Step 3 is intentionally flawed, this is the correct judgment, and the happy emoji signals that the framework identified the error.

In essence, the diagrams demonstrate an evolution from a direct, less informed approach (Framework 1) to more advanced, retrieval-augmented, and critically evaluative methods (Frameworks 2 and 3). Framework 3, in particular, seems to emphasize the system's role in identifying errors, as indicated by the high probability of "No" for the target step. The comparison highlights the trade-offs between simplicity and the sophistication of leveraging external knowledge and detailed evaluation criteria for problem-solving.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

## Diagram: Retrieval PRM Framework for Mathematical Step Verification

### Overview

This diagram illustrates three different frameworks for judging mathematical solution steps: Traditional PRM (Process Reward Model), a Two-stage Retrieval-enhanced Mechanism, and a Retrieval PRM Framework. It focuses on how each framework handles a target question ("How many seconds are in 5.5 minutes?") and assesses the correctness of solution steps. The diagram uses flowcharts to depict the process within each framework, and includes example questions, steps, and confidence scores.

### Components/Axes

The diagram is divided into three main columns, each representing a different framework. Each column is further divided into sections for "System Prompt", "Target Question", "Solution Steps", and "Target Step". There are also visual elements like question pools, step pools, and decision points (Yes/No). Confidence scores are represented by bars with numerical values.

### Detailed Analysis or Content Details

**1. Traditional PRM (Left Column)**

* **System Prompt:** "I want you to act as a math teacher. I will provide a mathematical question and several solution steps, and it will be your job to judge whether these steps are correct or not."

* **Target Question:** "How many seconds are in 5.5 minutes?"

* **Solution Steps:**

* Step 1: "5.5 minutes is the same as 5 minutes and 0.5 minutes."

* Step 2: "Since there are 60 seconds in a minute, then there are 300 seconds in 5 minutes."

* Step 3: "And since there are 60 seconds in a minute, there are 50 seconds in 0.5 minutes."

* **Target Step:** "Is that step correct?"

* **Confidence Score:** A bar graph shows a confidence score of approximately 0.9 (90%) for "Yes" and 0.1 (10%) for "No".

**2. Two-stage Retrieval-enhanced Mechanism (Middle Column)**

* **System Prompt:** Not explicitly shown, but implied to be related to retrieval.

* **Target Question:** Represented by a "Q" icon, leading to a "Question Pool".

* **Reference Question 1:** "What is the equivalent number of seconds in 7.8 minutes?"

* **Process:** "Since there are 60 seconds in a minute, we can find the number of seconds by multiplying the number of minutes by 60. (+) So, 7.8 minutes is equal to 7.8 * 60 = 46 seconds. The answer is: 46 (-)"

* **Reference Question 2:** "Process:" (the rest is elided with "..." in the figure).

* **Target Step:** Represented by an "S" icon, leading to a "New Step Pool".

* **Reference Step 1:** "0.3 hours equal to 0.3 * 60 = 18 minutes." - Correct.

* **Reference Step 2:** (elided with "..." in the figure).

**3. Retrieval PRM Framework (Right Column)**

* **System Prompt:** "I want you to act as a math teacher. I will... judge whether these steps are correct or not. First I will give you some similar questions and their steps for reference. For each step, if the step is correct, the step is labeled as +. If the step is wrong, the step is labeled as -. If there is no relevant or helpful information in the provided questions and steps, try to answer yourself."

* **Target Question:** "How many seconds are in 5.5 minutes?"

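* **Reference Questions:** "Reference Question 1" and "Reference Question 2" boxes appear above the target question; an arrow links Reference Question 1 to Step 1.

* **Solution Steps:**

* Step 1: "5.5 minutes is the same as 5 minutes and 0.5 minutes."

* Step 2: "Since there are 60 seconds in a minute, then there are 300 seconds in 5 minutes."

* Step 3: "And since there are 60 seconds in a minute, there are 50 seconds in 0.5 minutes."

* **Annotation:** "I will give you some steps for reference," followed by "Reference Step 1" and "Reference Step 2" clouds.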

* **Decision Point:** "Is the target step correct?"

* **Confidence Score:** A bar graph shows a confidence score of approximately 0.2 (20%) for "Yes" and 0.8 (80%) for "No".

### Key Observations

* The Traditional PRM framework assigns a high probability (90%) to "Yes" for the target step (Step 3), even though that step is wrong.

* The Retrieval PRM Framework assigns 80% to "No" for the same step, which is the correct judgment.

* The Two-stage Retrieval-enhanced Mechanism includes reference questions and steps, suggesting a process of comparison and validation.

* The diagram highlights the importance of providing reference material for evaluating the correctness of solution steps.

* The confidence scores vary significantly between the frameworks, indicating different levels of certainty in the solution.

### Interpretation

The diagram demonstrates a progression from a simple prompting approach to a retrieval-enhanced one. The Traditional PRM relies solely on the model's internal knowledge, while the Retrieval PRM Framework also uses external information (reference questions and steps) to assess correctness. The Two-stage Retrieval-enhanced Mechanism appears to be the component that retrieves this context for the model.

The differing confidence scores suggest that the retrieval-enhanced framework is more critical in its assessment of solution steps and can identify an error that the Traditional PRM overlooks. Step 3 claims 50 seconds in 0.5 minutes, whereas the correct value is 30, so the Retrieval PRM Framework's 80% "No" is the right answer rather than a sign of weak or irrelevant references.

The diagram highlights the potential benefit of retrieval augmentation for making step-level verification of mathematical reasoning more accurate and reliable. It also suggests that the quality and relevance of the retrieved information are crucial to the success of such systems. The "+" and "-" labels on reference steps mark each step as correct or incorrect, so the model receives both positive and negative examples. The "..." in some boxes are elisions in the figure, likely indicating that further reference questions and steps are omitted for space.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## [Diagram]: Comparison of Three Process Reward Model (PRM) Frameworks for Mathematical Reasoning

### Overview

The image is a technical diagram comparing three different architectural approaches for evaluating the correctness of mathematical solution steps. It is divided into three distinct panels, each illustrating a different framework: "Traditional PRM" (left), "Two-stage Retrieval-enhanced Mechanism" (center), and "Retrieval PRM Framework" (right). The diagram uses flowcharts, text boxes, arrows, and icons to explain the workflow and components of each system.

### Components/Axes

The diagram is organized into three vertical panels, each with a title bar at the top.

**Panel 1: Traditional PRM (Left)**

* **Title:** "Traditional PRM"

* **Components (Top to Bottom):**

1. **System Prompt (Green Box):** "I want you to act as a math teacher. I will provide a mathematical question and several solution steps, and it will be your job to judge whether these steps are correct or not."

2. **Target Question (Oval):** "How many seconds are in 5.5 minutes?"

3. **Solution Steps (Three Rectangles in Sequence):**

* "Step 1 : 5.5 minutes is the same as 5 minutes and 0.5 minutes."

* "Step 2 : Since there are 60 seconds in a minute, then there are 300 seconds in 5 minutes."

* "Step 3 : And since there are 60 seconds in a minute, there are 50 seconds in 0.5 minutes."

4. **Target Step (Oval):** "Is that step correct?" (Pointing to Step 3)

5. **Judgment Output:** A sad face emoji (😞) next to two boxes: "Yes" with a value of "0.9" and "No" with a value of "0.1".

**Panel 2: Two-stage Retrieval-enhanced Mechanism (Center)**

* **Title:** "Two-stage Retrieval-enhanced Mechanism"

* **Components:**

1. **Input:** "Target Question" labeled with a red "Q" pointing to a "Question Pool" database icon.

2. **Question Retrieval:** The Question Pool connects to multiple document icons, representing retrieved similar questions.

3. **Reference Question Box (Yellow):** Contains an example.

* **Header:** "Reference Question 1: What is the equivalent number of seconds in 7.8 minutes?"

* **Process:** "Since there are 60 seconds in a minute, we can find the number of seconds by multiplying the number of minutes by 60. (+) So, 7.8 minutes is equal to 7.8 * 60 = 46 seconds. The answer is: 46 (-)"

* **Note:** "Reference Question 2: Process: ..."

4. **Step Retrieval:** "Target Step" labeled with a red "S" points to a "New Step Pool" database icon.

5. **Reference Step Cloud (Light Blue):** Contains an example.

* "Reference Step 1: 0.3 hours equal to 0.3 * 60 = 18 minutes. This reference step is correct."

* "Reference Step 2: ..."

**Panel 3: Retrieval PRM Framework (Right)**

* **Title:** "Retrieval PRM Framework"

* **Components:**

1. **System Prompt (Green Box):** "I want you to act as a math teacher. I will ... judge whether these steps are correct or not. **First I will give you some similar questions and their steps for reference. For each step, if the step is correct, the step is labeled as +. If the step is wrong, the step is labeled as -. If there is no relevant or helpful information in the provided questions and steps, try to answer yourself.**"

2. **Reference Flow:**

* "Reference Question 1" (Yellow Box) points to "Reference Question 2" (Yellow Box).

* "Reference Question 1" also points down to "Step 1 : 5.5 minutes is the same as 5 minutes and 0.5 minutes." (White Box).

* "Reference Question 2" points down to the "Target Question" oval: "How many seconds are in 5.5 minutes?"

3. **Step Evaluation Flow:**

* The "Step 1" box points to "Step 2 : Since there are 60 seconds in a minute, then there are 300 seconds in 5 minutes." (White Box).

* "Step 2" points to "Step 3 : And since there are 60 seconds in a minute, there are 50 seconds in 0.5 minutes." (White Box).

* A red text note states: "I will give you some steps for reference".

* Two light blue clouds labeled "Reference Step2" and "Reference Step1" point to a decision box.

4. **Judgment:** The decision box "Is the target step correct?" (referring to Step 3) leads to:

* "Yes" with a value of "0.2"

* "No" with a value of "0.8"

* A happy face emoji (😊) is shown next to the "No" outcome.

### Detailed Analysis

* **Traditional PRM Flow:** A linear process. A system prompt defines the task. A target question is presented with its solution steps. The model must judge a specific target step (Step 3) without any reference material. The output is a probability distribution (Yes: 0.9, No: 0.1); since Step 3 is wrong (0.5 minutes is 30 seconds, not 50), this is an incorrect judgment, which the sad emoji marks.

* **Two-stage Retrieval Flow:** A parallel, retrieval-based process. It first retrieves similar questions from a pool based on the target question (Q). It then retrieves similar steps from a separate pool based on the target step (S). These retrieved items ("Reference Question" and "Reference Step") are provided as context, containing their own processes and correctness labels (+/-).

* **Retrieval PRM Framework Flow:** An integrated process that combines retrieval and judgment. The system prompt is modified to instruct the model to use provided references. It shows a chain where reference questions and their steps are provided alongside the target question and its steps. The model is explicitly given "Reference Steps" to aid in judging the target step. The output probability (Yes: 0.2, No: 0.8) with a happy emoji suggests a more confident and correct judgment ("No") for the same target step (Step 3) compared to the Traditional PRM.
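The figure does not say how the Yes/No probabilities are produced. One common approach for an LLM judge is to renormalize the model's scores for the "Yes" and "No" answer tokens with a two-way softmax; the sketch below assumes that approach, and the logits are hypothetical values chosen only so the output matches the Retrieval PRM panel.

```python
import math


def yes_no_probabilities(logit_yes: float, logit_no: float) -> tuple[float, float]:
    """Two-way softmax over the "Yes" and "No" token logits."""
    m = max(logit_yes, logit_no)  # subtract the max for numerical stability
    e_yes = math.exp(logit_yes - m)
    e_no = math.exp(logit_no - m)
    total = e_yes + e_no
    return e_yes / total, e_no / total


# Hypothetical logits: "No" is favored by a factor of 4, giving a 0.2 / 0.8 split.
p_yes, p_no = yes_no_probabilities(0.0, math.log(4.0))
print(round(p_yes, 2), round(p_no, 2))
```

Normalizing over only the two answer tokens keeps the pair summing to 1, which matches the way each panel reports complementary "Yes" and "No" values.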

### Key Observations

1. **Evolution of Context:** The core difference is the amount of contextual information provided to the judge. Traditional PRM uses none, the Two-stage Mechanism retrieves it separately, and the Retrieval PRM Framework integrates it directly into the prompt.

2. **Judgment Confidence:** For the identical target step ("Step 3: ... there are 50 seconds in 0.5 minutes"), which is wrong, the Traditional PRM assigns a high probability (0.9) to "Yes" (an incorrect judgment), while the Retrieval PRM Framework assigns a high probability (0.8) to "No" (a correct judgment). This visually demonstrates the claimed improvement of the retrieval-enhanced approach.

3. **Prompt Engineering:** The system prompt in the Retrieval PRM Framework is significantly more detailed, explicitly instructing the model on how to use the provided reference examples and their labels (+/-).

4. **Visual Cues:** The use of emojis (😞 vs. 😊) provides an immediate, non-numerical indicator of the perceived quality or correctness of the model's output in each framework.

### Interpretation

This diagram argues for the superiority of retrieval-augmented methods in training Process Reward Models for mathematical reasoning. The **Traditional PRM** is depicted as limited, making judgments in a vacuum, which can lead to confident errors (as shown by the high "Yes" probability for a wrong step). The **Two-stage Retrieval-enhanced Mechanism** introduces the concept of gathering relevant external knowledge (similar questions and steps) but presents it as a separate, preparatory stage.

The **Retrieval PRM Framework** is presented as the most advanced solution. It seamlessly integrates the retrieved references into the model's context window, transforming the task from pure judgment to **judgment-by-analogy**. The model is no longer just a math teacher but a teacher with a textbook of solved examples open in front of them. The dramatic shift in the probability distribution for the same step (from 0.9/0.1 to 0.2/0.8) is the central piece of evidence, suggesting that providing relevant, labeled reference cases significantly improves the model's ability to discern correct reasoning steps. The diagram implies that this approach leads to more reliable and accurate reward signals for training reasoning models.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Diagram: Comparison of PRM Frameworks

### Overview

The image compares three problem-solving frameworks: **Traditional PRM**, **Two-stage Retrieval-enhanced Mechanism**, and **Retrieval PRM Framework**. Each framework is structured with system prompts, target questions, solution steps, and feedback mechanisms.

### Components/Axes

1. **Traditional PRM**

- **System Prompt**: "I want you to act as a math teacher..."

- **Target Question**: "How many seconds are in 5.5 minutes?"

- **Solution Steps**:

- Step 1: 5.5 minutes = 5 minutes + 0.5 minutes.

- Step 2: 5 minutes = 300 seconds.

- Step 3: 0.5 minutes = 50 seconds (incorrect; the correct value is 30 seconds).

- **Target Step**: "Is that step correct?" with feedback (Yes: 0.9, No: 0.1).

2. **Two-stage Retrieval-enhanced Mechanism**

- **Question Pool**: Contains reference questions (e.g., "What is the equivalent number of seconds in 7.8 minutes?").

- **Reference Questions**:

- Reference Question 1: Process converts 7.8 minutes to seconds; its final step states 7.8 × 60 = 46 seconds and is labeled (-), since the correct value is 468 seconds.

- Reference Question 2: Placeholder for additional examples.

- **New Step Pool**: Stores validated steps (e.g., "0.3 hours = 18 minutes").

3. **Retrieval PRM Framework**

- **Reference Questions**:

- Reference Question 1: A retrieved similar question, placed above the target question ("How many seconds are in 5.5 minutes?").

- Reference Question 2: Placeholder for additional examples.

- **Reference Steps**:

- Reference Step 1: "0.3 hours = 18 minutes" (correct).

- Reference Step 2: Placeholder for additional steps.

- **Feedback**: Probabilities (Yes: 0.2, No: 0.8) with a happy face (😊) marking the correct judgment; the Traditional PRM panel instead shows a sad face (😞) for its incorrect judgment.
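A step pool of the kind described above can be sketched by flattening labeled reference solutions into standalone step entries. The "This reference step is correct." suffix follows the wording shown in the figure; the "incorrect" wording for (-) steps and both function names are assumptions for illustration.

```python
def to_reference_step(step_text: str, is_correct: bool) -> str:
    """Render a labeled step in the figure's "This reference step is ..." style."""
    verdict = "correct" if is_correct else "incorrect"  # "incorrect" wording is assumed
    return f"{step_text} This reference step is {verdict}."


def build_step_pool(labeled_solutions: list[list[tuple[str, bool]]]) -> list[str]:
    """Flatten labeled reference solutions into one pool of reference steps."""
    return [
        to_reference_step(text, ok)
        for steps in labeled_solutions
        for text, ok in steps
    ]


pool = build_step_pool([
    [("0.3 hours equal to 0.3 * 60 = 18 minutes.", True)],
    [("So, 7.8 minutes is equal to 7.8 * 60 = 46 seconds.", False)],
])
for entry in pool:
    print(entry)
```

Keeping incorrect steps in the pool, rather than filtering them out, preserves the negative examples that the (-) label is meant to convey.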

### Detailed Analysis

- **Traditional PRM**:

- The target step (Step 3) is wrong: 0.5 minutes is 30 seconds, so 5.5 minutes is 330 seconds in total.

- Feedback shows high confidence that the step is correct (Yes: 0.9), which is an incorrect judgment.

- **Two-stage Retrieval-enhanced Mechanism**:

- Uses a database of reference questions to guide problem-solving.

- Example process: 7.8 minutes × 60, with the result stated as 46 seconds and labeled (-) (the correct value is 468 seconds).

- **Retrieval PRM Framework**:

- Integrates reference steps (e.g., "0.3 hours = 18 minutes") to validate target steps.

- Feedback uses emojis and probabilities to indicate correctness.

### Key Observations

1. **Feedback Mechanisms**:

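- Both the Traditional PRM and the Retrieval PRM Framework output Yes/No probabilities for the target step.

- Both panels carry an emoji: a sad face for the Traditional PRM's wrong judgment and a happy face for the Retrieval PRM Framework's correct one.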

2. **Reference Utilization**:

- Two-stage and Retrieval frameworks leverage reference questions/steps to enhance accuracy.

3. **Step Validation**:

- The Retrieval PRM Framework explicitly cross-references steps (e.g., "Is the target step correct?").

### Interpretation

- **Traditional PRM** relies on direct computation without external references, which may limit adaptability.

- **Retrieval-enhanced frameworks** aim to improve robustness by integrating labeled reference data; in the figure, the Retrieval PRM Framework correctly rejects the wrong Step 3 (50 seconds instead of 30) that the Traditional PRM accepts.

- The emojis are figure annotations indicating whether each framework's judgment is right, not a feedback mechanism of the framework itself.

- The Two-stage mechanism’s question pool and new step pool indicate a modular design for scalable problem-solving.

## Additional Notes

- **Language**: All text is in English.

- **Missing Data**: No numerical trends or charts are present; the diagram focuses on structural and procedural comparisons.

- **Spatial Grounding**:

- **Traditional PRM**: Left section with linear flow (System Prompt → Target Question → Solution Steps → Feedback).

- **Two-stage Retrieval**: Central section with bidirectional flow (Question Pool ↔ Reference Questions ↔ New Step Pool).

- **Retrieval PRM**: Right section with reference-question-driven feedback loop.

DECODING INTELLIGENCE...