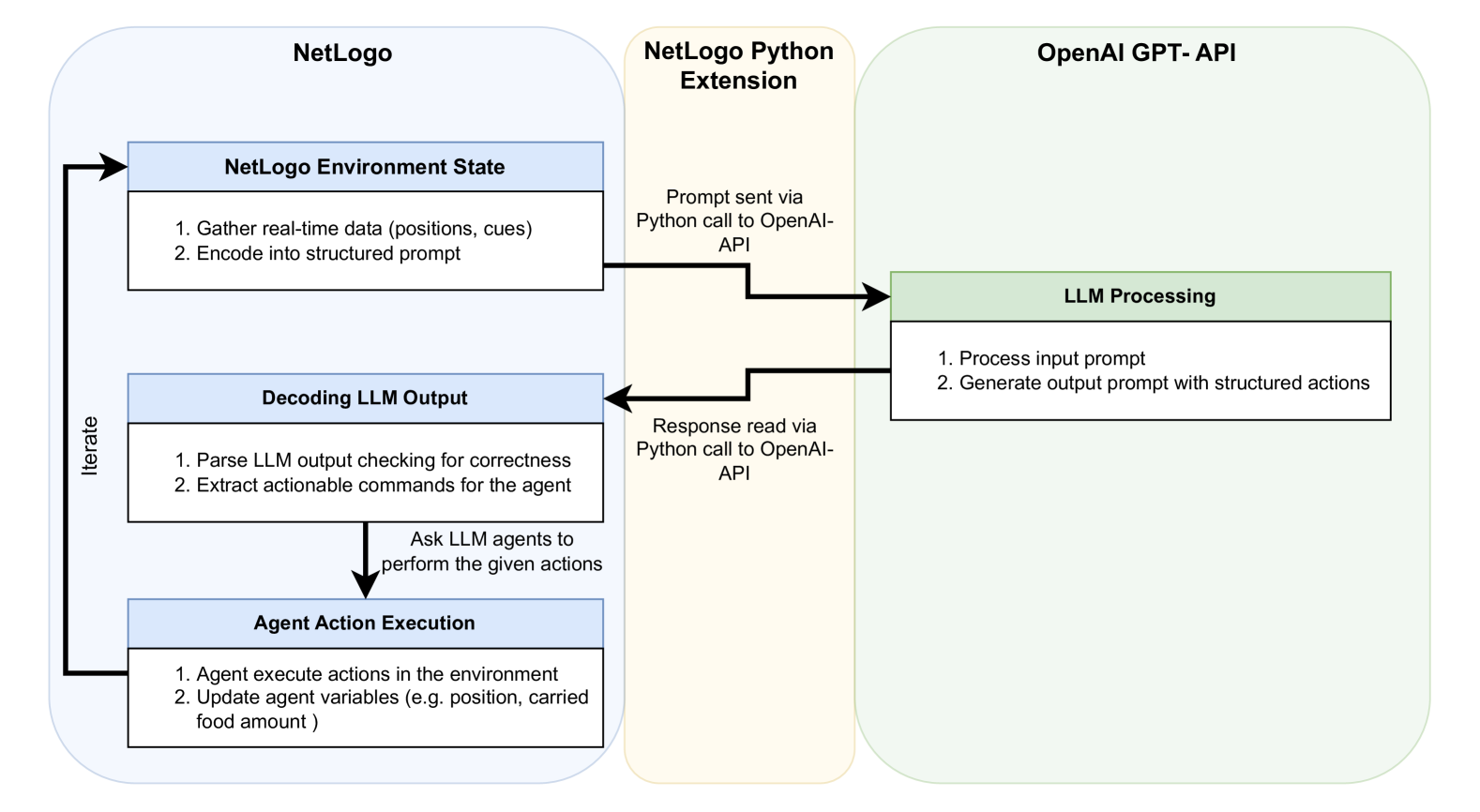

## System Diagram: NetLogo-OpenAI Integration

### Overview

The image is a system diagram illustrating the interaction between NetLogo, a NetLogo Python Extension, and the OpenAI GPT-API. It shows the flow of information and actions between these components, highlighting how NetLogo uses the OpenAI API for LLM processing to control agent behavior.

### Components

* **NetLogo (Left)**: Enclosed in a rounded rectangle. Contains three main blocks:
    * NetLogo Environment State:
        * "1. Gather real-time data (positions, cues)"
        * "2. Encode into structured prompt"
    * Decoding LLM Output:
        * "1. Parse LLM output checking for correctness"
        * "2. Extract actionable commands for the agent"
    * Agent Action Execution:
        * "1. Agent execute actions in the environment"
        * "2. Update agent variables (e.g. position, carried food amount)"
    * An "Iterate" arrow connects Agent Action Execution back to NetLogo Environment State.
    * An arrow points from "Decoding LLM Output" to "Agent Action Execution" with the text "Ask LLM agents to perform the given actions".
* **NetLogo Python Extension (Center)**: Enclosed in a rounded rectangle.
    * "Prompt sent via Python call to OpenAI-API" - connects to LLM Processing
    * "Response read via Python call to OpenAI-API" - connects from LLM Processing
* **OpenAI GPT-API (Right)**: Enclosed in a rounded rectangle. Contains one main block:
    * LLM Processing:
        * "1. Process input prompt"
        * "2. Generate output prompt with structured actions"
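The "Encode into structured prompt" step in the NetLogo block could be sketched as follows. The diagram does not show the actual prompt format, so the function name `encode_prompt`, the state fields, the cue names, and the action list are all hypothetical and chosen only for illustration.

```python
import json


def encode_prompt(agent_id, position, cues, allowed_actions):
    """Turn one agent's observed state into a structured text prompt.

    Hypothetical format: the diagram only says that positions and cues
    are gathered and encoded, not how.
    """
    state = {
        "agent": agent_id,
        "position": {"x": position[0], "y": position[1]},
        "cues": cues,
    }
    return (
        "You control an agent in a NetLogo simulation.\n"
        f"Current state (JSON): {json.dumps(state, sort_keys=True)}\n"
        f"Allowed actions: {', '.join(allowed_actions)}\n"
        'Reply with JSON only, e.g. {"action": "<one allowed action>"}.'
    )


# Example values are made up for the sketch.
prompt = encode_prompt(
    agent_id=3,
    position=(4, -2),
    cues={"food_scent": 0.7, "carrying_food": False},
    allowed_actions=["move-forward", "turn-left", "turn-right", "pick-up-food"],
)
print(prompt)
```

Asking for a JSON-only reply from a fixed action list is one way to make the later "Parse LLM output checking for correctness" step straightforward; other structured formats would work as well.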

### Detailed Analysis

1. **NetLogo Environment State**:
    * Gathers real-time data, including agent positions and environmental cues.
    * Encodes this data into a structured prompt for the LLM.
2. **NetLogo Python Extension**:
    * Facilitates communication between NetLogo and the OpenAI API.
    * Sends the prompt to the OpenAI API using a Python call.
    * Receives the response from the OpenAI API using a Python call.
3. **OpenAI GPT-API**:
    * LLM Processing:
        * Processes the input prompt received from NetLogo.
        * Generates an output containing structured actions for the agents.
4. **Decoding LLM Output**:
    * Parses the LLM output, checking for correctness.
    * Extracts actionable commands for the agents.
5. **Agent Action Execution**:
    * Agents execute the actions in the environment based on the LLM's commands.
    * Agent variables, such as position and carried food amount, are updated.
6. **Iteration**:
    * The cycle then repeats: the updated agent variables and environment become the input to the next "NetLogo Environment State" step.
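The decode, execute, and iterate steps above can be sketched in plain Python with a scripted stand-in for the LLM. This is a toy model under stated assumptions, not the system's actual code: the action names, the JSON reply format, the grid-world agent, and the choice to skip a step when the reply fails the correctness check are all hypothetical.

```python
import json

# Hypothetical action vocabulary; the diagram does not list the actual commands.
ALLOWED_ACTIONS = {"move-forward", "turn-left", "turn-right", "pick-up-food"}
# Unit steps for headings 0..3 (north, east, south, west) on a toy grid.
HEADINGS = [(0, 1), (1, 0), (0, -1), (-1, 0)]


def decode_action(raw_output):
    """Parse the LLM reply; return a valid action name, or None if the check fails."""
    try:
        action = json.loads(raw_output).get("action")
    except (TypeError, ValueError, AttributeError):
        return None  # not JSON, or JSON that is not an object
    if isinstance(action, str) and action in ALLOWED_ACTIONS:
        return action
    return None


def apply_action(agent, action):
    """Execute one action and update the agent's variables in place."""
    if action == "move-forward":
        dx, dy = HEADINGS[agent["heading"]]
        agent["x"] += dx
        agent["y"] += dy
    elif action == "turn-left":
        agent["heading"] = (agent["heading"] - 1) % 4
    elif action == "turn-right":
        agent["heading"] = (agent["heading"] + 1) % 4
    elif action == "pick-up-food":
        agent["carried_food"] += 1
    # action is None: the reply was rejected, so the agent does nothing this step.


def run(agent, llm, steps):
    """Iterate: encode state, query the LLM, decode the reply, execute the action."""
    for _ in range(steps):
        prompt = json.dumps(agent)        # simplified state encoding
        raw_output = llm(prompt)          # in the real system: the OpenAI API call
        apply_action(agent, decode_action(raw_output))
    return agent


# Scripted replies stand in for the LLM: two valid, two that fail the check.
scripted = iter([
    '{"action": "move-forward"}',
    "not json",
    '{"action": "fly"}',
    '{"action": "pick-up-food"}',
])
agent = {"x": 0, "y": 0, "heading": 0, "carried_food": 0}
run(agent, lambda prompt: next(scripted), steps=4)
print(agent)
```

In this run the malformed reply and the unknown action are both rejected, so only the first and last replies change the agent's state. Validating against a closed action set keeps free-form LLM text from reaching the simulation.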

### Key Observations

* The diagram illustrates a closed-loop system where NetLogo uses the OpenAI API to control agent behavior based on real-time environmental data.

* The NetLogo Python Extension acts as a bridge between NetLogo and the OpenAI API.

* The "Iterate" loop indicates a continuous process of data gathering, prompt generation, LLM processing, action execution, and environment updating.
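The bridge role of the Python side can be illustrated with a small wrapper around the OpenAI chat completions call. This is a sketch under assumptions: the function name `ask_llm`, the default model name, and the single-message prompt layout are not taken from the diagram. The `FakeClient` class is a hypothetical offline stand-in so the example runs without network access or an API key.

```python
from types import SimpleNamespace


def ask_llm(prompt, client=None, model="gpt-4o-mini"):
    """Send one prompt to the chat completions endpoint and return the reply text.

    The model name is an assumption; the diagram only says "OpenAI GPT-API".
    """
    if client is None:
        # Real client: needs the `openai` package and OPENAI_API_KEY set.
        from openai import OpenAI
        client = OpenAI()
    response = client.chat.completions.create(
        model=model,
        messages=[{"role": "user", "content": prompt}],
    )
    return response.choices[0].message.content


class FakeClient:
    """Offline stand-in that mimics the shape of the client and its response."""

    def __init__(self, reply):
        message = SimpleNamespace(content=reply)
        self._response = SimpleNamespace(choices=[SimpleNamespace(message=message)])
        self.chat = SimpleNamespace(
            completions=SimpleNamespace(create=self._create)
        )

    def _create(self, model, messages):
        return self._response


# Dry run without contacting the API.
reply = ask_llm("state: ...", client=FakeClient('{"action": "turn-left"}'))
print(reply)
```

On the NetLogo side, a function like this would presumably be invoked through the Python extension, for example with its `py:runresult` primitive, and the returned string handed to the decoding step.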

### Interpretation

The diagram shows NetLogo delegating agent decision-making to an LLM through the OpenAI GPT-API. Because each prompt is built from real-time simulation data, the LLM's actions reflect the agents' current positions and environmental cues. The iterative loop supports continuous adaptation: every cycle re-prompts the LLM with the updated state. This makes the setup suitable for complex simulations in which agent behavior responds to a changing environment.