## Diagram: NetLogo-OpenAI API Interaction Flow

### Overview

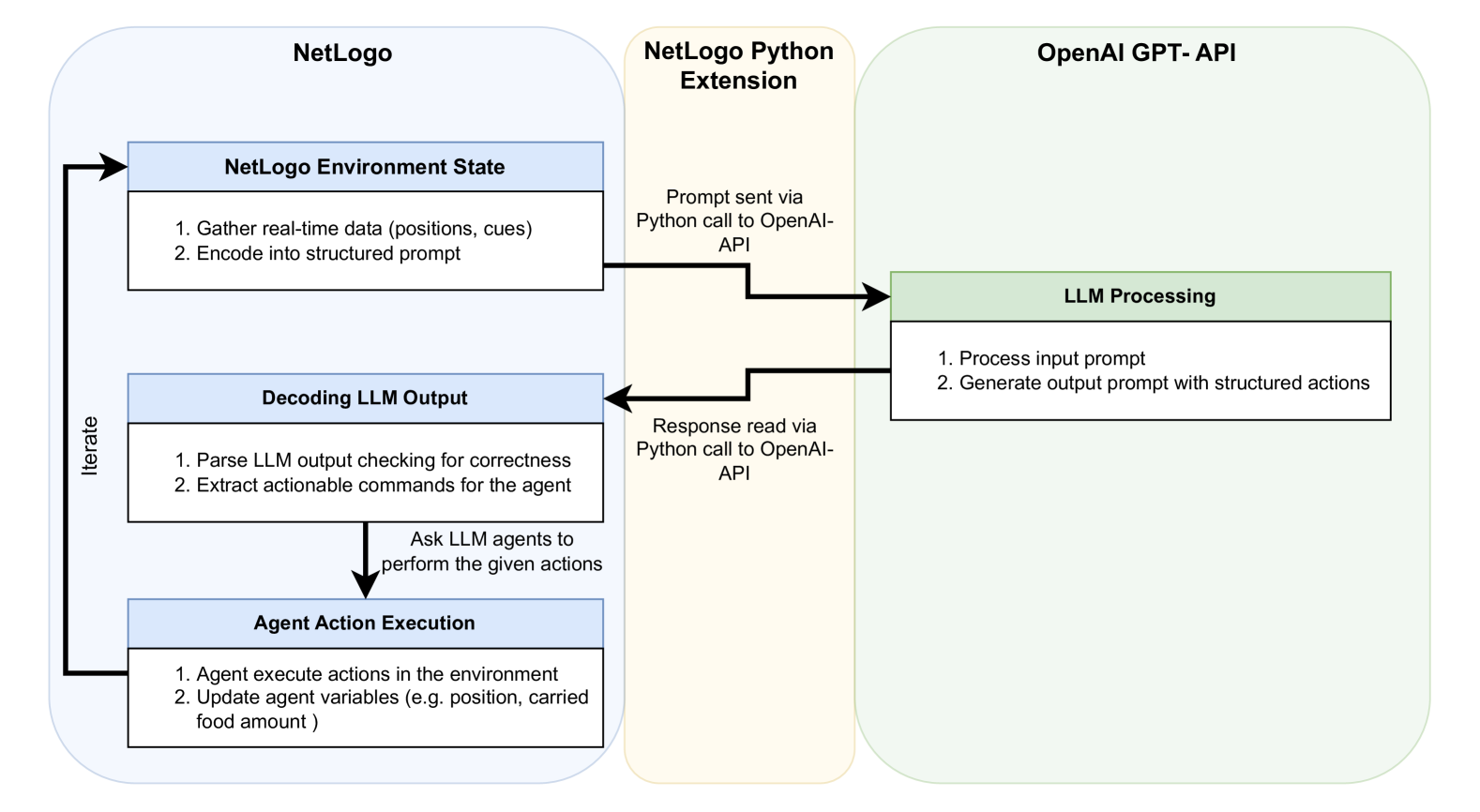

This diagram illustrates the interaction flow between NetLogo, the NetLogo Python extension, and the OpenAI GPT-API. It depicts a cyclical process: NetLogo gathers environment data and encodes it as a prompt, the Python extension sends the prompt to the OpenAI API and reads the response, and NetLogo decodes the returned actions and executes them in the environment. A looping arrow indicates that the process is iterative.

### Components

The diagram consists of three main blocks representing the three components: NetLogo, NetLogo Python Extension, and OpenAI GPT-API. Arrows indicate the flow of information between these components. Text boxes within each block describe the actions performed by that component. A label "Iterate" is placed alongside the looping arrow.

### Content Details

**NetLogo Block (Left):**

* **NetLogo Environment State:** This is the initial state.

1. "Gather real-time data (positions, cues)"

2. "Encode into structured prompt"

* **Decoding LLM Output:**

1. "Parse LLM output checking for correctness"

2. "Extract actionable commands for the agent"

* **Agent Action Execution:**

1. "Agent execute actions in the environment"

2. "Update agent variables (e.g., position, carried food amount)"
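The "Encode into structured prompt" step in the NetLogo block could look roughly like the sketch below. The diagram does not show the actual prompt format, so the field names (`position`, `heading`, `food_ahead`, `carried_food`), the wording of the instructions, and the function name `encode_prompt` are all assumptions made for illustration.

```python
import json


def encode_prompt(agent_state: dict) -> str:
    """Turn a snapshot of one agent's surroundings into a structured prompt.

    The state keys and the instruction text are hypothetical; the diagram
    only says that positions and cues are gathered and encoded.
    """
    # sort_keys keeps the prompt identical for identical states.
    state_json = json.dumps(agent_state, sort_keys=True)
    return (
        "You control one agent in a NetLogo simulation.\n"
        f"Current state (JSON): {state_json}\n"
        'Reply with JSON only, for example {"action": "forward", "amount": 1}.'
    )


# Hypothetical snapshot of one agent.
state = {"position": [3, -2], "heading": 90, "food_ahead": True, "carried_food": 0}
prompt = encode_prompt(state)
print(prompt)
```

Asking for a JSON-only reply is one way to make the later decoding step simpler; a different structured format would work equally well as long as the parser expects the same one.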

**NetLogo Python Extension Block (Center):**

* "Prompt sent via Python call to OpenAI-API"

* "Response read via Python call to OpenAI-API"

* "Ask LLM agents to perform the given actions"
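The Python side of the "Prompt sent" and "Response read" steps might be sketched as follows. This is not the authors' code: `ask_llm`, `openai_send`, and `fake_send` are hypothetical names, and the model name is a placeholder because the diagram does not say which model is used. The transport uses the chat completions call from the official `openai` Python package (v1 or later) and is kept behind a plain callable so the rest of the sketch runs offline. From NetLogo, a function like this would typically be reached through the `py` extension, for example by passing the prompt with `py:set` and reading the reply with `py:runresult`.

```python
from typing import Callable


def ask_llm(prompt: str, send: Callable[[str], str]) -> str:
    """Send one prompt through `send` and return the raw text reply."""
    reply = send(prompt)
    # The API can return no text; fail loudly rather than pass junk to NetLogo.
    if not isinstance(reply, str) or not reply.strip():
        raise ValueError("empty or non-text reply from the LLM")
    return reply.strip()


def openai_send(prompt: str, model: str = "gpt-4o-mini") -> str:
    """One possible transport (assumed, not shown in the diagram)."""
    from openai import OpenAI  # imported lazily so the sketch runs without it

    client = OpenAI()  # reads OPENAI_API_KEY from the environment
    response = client.chat.completions.create(
        model=model,  # placeholder model name
        messages=[{"role": "user", "content": prompt}],
    )
    return response.choices[0].message.content


def fake_send(prompt: str) -> str:
    """Offline stand-in for the API, useful for testing the simulation loop."""
    return '{"action": "forward", "amount": 1}'


reply = ask_llm("Current state (JSON): {}", fake_send)
print(reply)
```

Swapping `fake_send` for `openai_send` is the only change needed to hit the real API, which keeps simulation debugging separate from network and billing concerns.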

**OpenAI GPT-API Block (Right):**

* **LLM Processing:**

1. "Process input prompt"

2. "Generate output prompt with structured actions"

**Flow Arrows:**

* A blue arrow originates from "NetLogo Environment State" and points to "Prompt sent via Python call to OpenAI-API".

* A blue arrow originates from "Response read via Python call to OpenAI-API" and points to "Decoding LLM Output".

* A looping arrow labeled "Iterate" connects "Decoding LLM Output" back to "NetLogo Environment State".
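Taken together, the arrows describe one cycle of gather, send, decode, and execute that repeats every step. A toy version of that loop, with a stub in place of the LLM and a one-dimensional "environment", is sketched below. Everything here (`step`, `stub_llm`, the `x` coordinate, the JSON reply shape) is hypothetical and only meant to show the control flow.

```python
import json


def stub_llm(prompt: str) -> str:
    """Stand-in for the OpenAI call; always asks the agent to move forward."""
    return '{"action": "forward", "amount": 1}'


def step(state: dict, llm) -> dict:
    """Run one cycle of the loop and return the updated state."""
    prompt = "State: " + json.dumps(state)   # gather and encode
    command = json.loads(llm(prompt))         # send, read, and decode
    new_state = dict(state)
    if command["action"] == "forward":        # execute and update variables
        new_state["x"] += command["amount"]
    return new_state


state = {"x": 0}
for tick in range(3):                         # the "Iterate" arrow
    state = step(state, stub_llm)
print(state)
```

Each cycle builds a fresh prompt from the current state, so in this sketch the only thing carried across iterations is the environment itself.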

### Key Observations

The diagram highlights a closed-loop system. The NetLogo environment provides input to the OpenAI API, which processes it and returns actions. These actions are then executed in NetLogo, updating the environment state, and the cycle repeats. The Python extension acts as a bridge between NetLogo and the OpenAI API. The emphasis on parsing and correctness checking in the "Decoding LLM Output" block suggests a need for robust error handling.
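The correctness check in the "Decoding LLM Output" block could be implemented along these lines. The action vocabulary, the `amount` field, and the fallback of staying put are assumptions for illustration; the diagram only states that output is parsed and checked before commands are extracted.

```python
import json

# Hypothetical action vocabulary; the diagram does not list the real one.
ALLOWED_ACTIONS = {"forward", "turn_left", "turn_right", "pick_up", "drop"}
FALLBACK = {"action": "stay", "amount": 0}


def parse_action(raw: str) -> dict:
    """Validate one LLM reply and return a safe command for the agent."""
    try:
        data = json.loads(raw)
    except (json.JSONDecodeError, TypeError):
        return dict(FALLBACK)  # not JSON at all
    if not isinstance(data, dict):
        return dict(FALLBACK)  # JSON, but not an object
    action = data.get("action")
    amount = data.get("amount", 1)
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        return dict(FALLBACK)  # unknown or malformed action
    if isinstance(amount, bool) or not isinstance(amount, (int, float)) or amount < 0:
        return dict(FALLBACK)  # amount must be a non-negative number
    return {"action": action, "amount": amount}


print(parse_action('{"action": "forward", "amount": 2}'))
print(parse_action("I think the agent should go north!"))
```

Falling back to a harmless default keeps the simulation running when a reply is malformed; re-prompting the model or logging the failure are equally reasonable alternatives.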

### Interpretation

This diagram represents a system for integrating large language models (LLMs), such as those provided by OpenAI, into a NetLogo simulation. NetLogo is used to build agent-based models, and the OpenAI API supplies the agents' decision-making. NetLogo provides the environment and basic agent functionality, while the LLM acts as the "brain" for the agents, letting them respond to changing conditions with more sophisticated behavior than fixed rules would allow.

The iterative loop means the LLM is re-prompted with the updated environment state on each cycle, so agent behavior can adapt as conditions change. The diagram does not show any training or fine-tuning step, and it does not indicate whether earlier steps are carried forward in the prompt, so it should not be read as the model itself learning over time. The explicit "checking for correctness" step indicates a concern for the reliability and validity of the LLM's output, which matters because a malformed or invalid command could compromise the simulation. Using a Python extension is a common way to interface NetLogo with external APIs.