## Flowchart Diagram: NetLogo-OpenAI Integration Workflow

### Overview

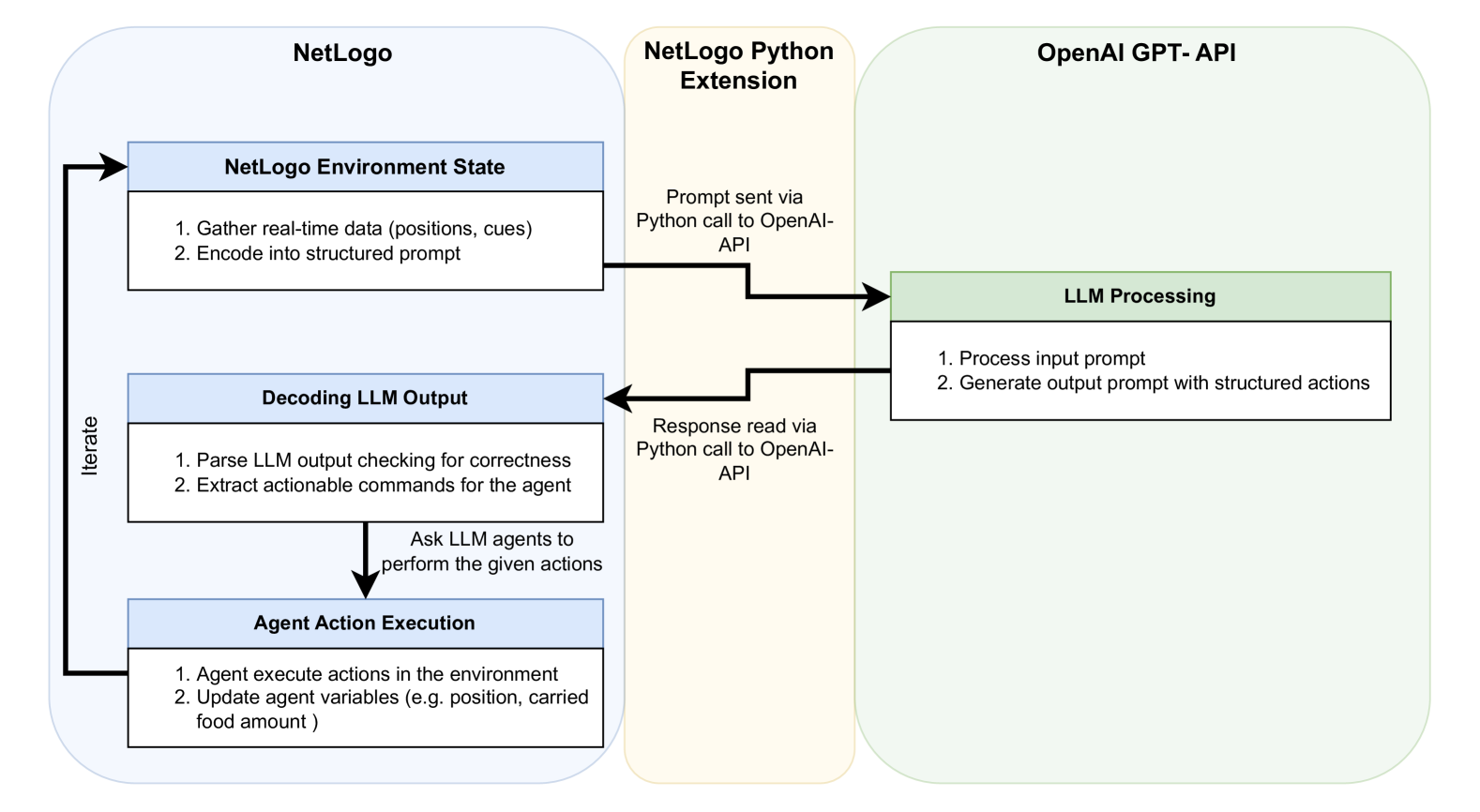

The diagram illustrates a cyclical workflow integrating NetLogo, a Python extension, and the OpenAI GPT-API. It depicts how real-time environmental data is processed through an LLM (Large Language Model) to generate agent actions in a simulated environment. The flow involves data encoding/decoding, API interactions, and iterative agent behavior updates.

### Components

1. **NetLogo Section (Blue Boxes)**:

   - **NetLogo Environment State**:
     - Step 1: Gather real-time data (positions, cues)
     - Step 2: Encode into structured prompt

   - **Decoding LLM Output**:
     - Step 1: Parse LLM output for correctness
     - Step 2: Extract actionable commands

   - **Agent Action Execution**:
     - Step 1: Execute actions in environment
     - Step 2: Update agent variables (e.g., position, carried food amount)

2. **NetLogo Python Extension (Orange Box)**:

   - **Prompt Sent via Python Call to OpenAI API**
   - **Response Read via Python Call to OpenAI API**

3. **OpenAI GPT-API (Green Box)**:

   - **LLM Processing**:
     - Step 1: Process input prompt
     - Step 2: Generate output prompt with structured actions

**Flow Arrows**:

- NetLogo → Python Extension (prompt sending)

- Python Extension → OpenAI API (prompt submission)

- OpenAI API → Python Extension (response retrieval)

- Python Extension → NetLogo (response returned for decoding)

- NetLogo → NetLogo (iterative loop)
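
The first half of the cycle (gather state, encode it, send it through the Python bridge) can be sketched as follows. This is a minimal illustration, not the system's actual code: the diagram does not specify the prompt wording or the reply format, so the JSON layout and the names `encode_state`, `query_llm`, and `fake_send` are assumptions. The API call is replaced by a stub so the example runs offline.

```python
import json


def encode_state(agent_id, position, cues, carried_food):
    """Build a structured prompt from one agent's observed state.

    The field names and the requested JSON reply format are assumptions
    made for this sketch; the diagram only says "structured prompt".
    """
    state = {
        "agent": agent_id,
        "position": list(position),
        "cues": cues,
        "carried_food": carried_food,
    }
    return (
        "You control an agent in a simulation.\n"
        f"State: {json.dumps(state, sort_keys=True)}\n"
        'Reply with JSON of the form {"action": "<name>"}.'
    )


def query_llm(prompt, send):
    """Bridge step: submit the prompt and return the raw text reply.

    `send` is any callable taking a prompt string and returning a string.
    In a real setup it would wrap the call to the OpenAI API.
    """
    return send(prompt)


def fake_send(prompt):
    """Hypothetical stand-in for the API call, used to keep this offline."""
    return '{"action": "move-north"}'


prompt = encode_state(7, (3, 4), {"food_scent": 0.8}, 0)
reply = query_llm(prompt, fake_send)
```

Passing the sender in as a parameter keeps the encoding logic testable without network access; swapping `fake_send` for a function that calls the real API would leave the rest unchanged.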

### Detailed Analysis

- **NetLogo Environment State**: Starts each cycle by collecting positional and contextual data (positions, cues), then formats it into a structured prompt for the LLM.

- **Decoding LLM Output**: Validates the LLM's response for structural integrity before extracting executable commands (e.g., "move north," "collect food").

- **Agent Action Execution**: Implements commands in the NetLogo environment, updating agent states (e.g., position changes, food inventory).

- **Python Extension**: Acts as a bridge: it sends NetLogo's structured prompts to the OpenAI API through Python calls and reads the responses back so that NetLogo can decode them.

- **OpenAI GPT-API**: Processes prompts to generate actionable outputs, which are then relayed back through the Python extension.
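
The decoding step described above (check the reply for correctness, then extract a command) might look like the sketch below. It assumes the LLM was asked to reply with a JSON object containing an `action` field and that the simulation accepts a fixed set of action names; both the format and the names in `ALLOWED_ACTIONS` are hypothetical, since the diagram does not list them.

```python
import json

# Hypothetical action vocabulary; the diagram does not enumerate the commands.
ALLOWED_ACTIONS = {
    "move-north",
    "move-south",
    "move-east",
    "move-west",
    "collect-food",
}


def decode_reply(raw):
    """Return a validated action name, or None if the reply is unusable."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None  # reply was not valid JSON
    if not isinstance(data, dict):
        return None  # valid JSON, but not an object
    action = data.get("action")
    if isinstance(action, str) and action in ALLOWED_ACTIONS:
        return action
    return None  # missing, non-string, or unknown action
```

Returning `None` for any malformed reply lets the caller decide what to do with a bad response, for example skipping the agent's turn or re-sending the prompt.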

### Key Observations

1. **Cyclical Workflow**: The system operates in a closed loop, with NetLogo continuously updating the environment state based on agent actions.

2. **Structured Prompts**: Both the prompt and the response are explicitly structured so that LLM output is actionable; the diagram labels the LLM's second step "Generate output prompt with structured actions".

3. **Validation Step**: The decoding phase includes a correctness check, suggesting a focus on reliability in LLM outputs.

4. **Variable Updates**: Agent variables (position, food) are explicitly tied to environmental interactions, indicating a dynamic simulation.
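
The closed loop and the variable updates noted above can be illustrated with a small simulation. This is a sketch under stated assumptions: the `Agent` fields, the grid moves, and the `scripted_policy` function are hypothetical, and the policy stands in for the whole encode, API call, decode chain so that the example stays deterministic.

```python
from dataclasses import dataclass


@dataclass
class Agent:
    x: int = 0
    y: int = 0
    carried_food: int = 0


# Hypothetical mapping from action names to grid offsets.
MOVES = {
    "move-north": (0, 1),
    "move-south": (0, -1),
    "move-east": (1, 0),
    "move-west": (-1, 0),
}


def apply_action(agent, action, food_patches):
    """Execute one action and update the agent's variables in place."""
    if action in MOVES:
        dx, dy = MOVES[action]
        agent.x += dx
        agent.y += dy
    elif action == "collect-food" and (agent.x, agent.y) in food_patches:
        food_patches.remove((agent.x, agent.y))
        agent.carried_food += 1
    # Any other value (including None from a failed decode) is a no-op.


def run_loop(agent, food_patches, policy, ticks):
    """Repeat the cycle: observe state, choose an action, execute it."""
    for _ in range(ticks):
        action = policy(agent, food_patches)  # stands in for encode -> API -> decode
        apply_action(agent, action, food_patches)
    return agent


def scripted_policy(agent, food_patches):
    """Deterministic stand-in for the LLM: collect if on food, else go north."""
    if (agent.x, agent.y) in food_patches:
        return "collect-food"
    return "move-north"


patches = {(0, 2)}
agent = run_loop(Agent(), patches, scripted_policy, ticks=3)
```

After three ticks the agent has moved north twice and collected the food at `(0, 2)`, so its updated state feeds directly into whatever prompt the next cycle would build.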

### Interpretation

This workflow demonstrates a hybrid system where NetLogo's agent-based modeling is augmented by LLM capabilities via the OpenAI API. The Python extension serves as a critical intermediary, enabling bidirectional communication between NetLogo and the API. The structured prompts and validation steps suggest an emphasis on robustness, ensuring that LLM-generated commands align with the simulation's requirements. The iterative nature of the loop implies real-time adaptability, allowing agents to respond dynamically to environmental changes. The explicit mention of "carried food amount" as an updated variable hints at resource management as a core simulation mechanic.