## Flowchart: QA Dataset Processing Pipeline for Hallucination Detection

### Overview

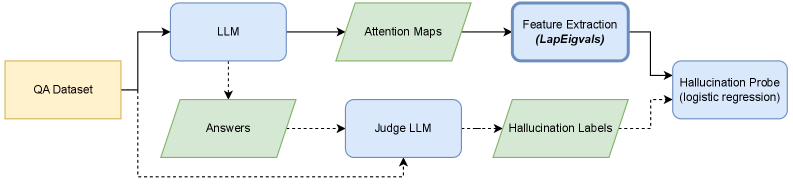

The flowchart shows a pipeline for detecting hallucinations in the answers a Large Language Model (LLM) gives to questions from a QA (Question Answering) dataset. It combines three components: spectral features extracted from the LLM's attention maps (LapEigvals), a logistic-regression classifier (the hallucination probe), and a separate Judge LLM that labels each answer as hallucinated or not.

### Components

1. **Input**: QA Dataset (rectangular yellow box, leftmost node).

2. **Core Components**:

- **LLM (Large Language Model)**: Blue rectangle, central node.

- **Attention Maps**: Green parallelogram, produced by the LLM's attention layers while it generates answers.

- **Feature Extraction (LapEigvals)**: Blue rectangle, processes attention maps.

- **Hallucination Probe (logistic regression)**: Blue rectangle, final classification step.

- **Answers**: Green parallelogram, outputs from LLM.

- **Judge LLM**: Blue rectangle, evaluates answers for hallucination labels.

- **Hallucination Labels**: Green parallelogram, outputs from Judge LLM.

3. **Flow Direction**:

- Solid arrows indicate direct data flow.

- Dashed arrows represent indirect relationships, as opposed to the direct data flow of the solid arrows.

### Detailed Analysis

- **QA Dataset → LLM**: The pipeline begins with a QA dataset fed into an LLM to generate answers.

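- **LLM → Attention Maps**: While generating answers, the LLM produces attention maps: per-layer, per-head matrices of attention weights recording how strongly each token attends to earlier tokens.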

- **Attention Maps → Feature Extraction (LapEigvals)**: Each attention map is treated as the weighted adjacency matrix of a graph over tokens, and the eigenvalues of that graph's Laplacian (LapEigvals) are extracted as features.

- **Feature Extraction → Hallucination Probe**: Features are input into a logistic regression model to predict hallucination likelihood.

- **Answers → Judge LLM**: Generated answers are evaluated by a separate Judge LLM to assign hallucination labels.

- **Judge LLM → Hallucination Labels**: Labels (e.g., "hallucinated" or "non-hallucinated") are generated.

- **Hallucination Labels → Hallucination Probe**: The labels serve as training targets for the logistic regression model and can also be used to evaluate it.
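The attention-to-features step can be illustrated with a minimal sketch. It is not the method's reference implementation and rests on stated assumptions: the attention map is causal (lower-triangular), the Laplacian is built as L = D - A with D holding the column sums (in-degrees) of A, and only the k largest eigenvalues per map are kept. Because causal masking makes A lower-triangular, L is lower-triangular too, so its eigenvalues are simply its diagonal entries and no eigensolver is needed. The degree definition, the choice of k, and the function names `laplacian_eigvals` and `lapeigvals_features` are hypothetical.

```python
from typing import List

Matrix = List[List[float]]


def laplacian_eigvals(attn: Matrix) -> List[float]:
    """Eigenvalues of L = D - A for one causal attention map A.

    Assumption: D is diagonal and holds the column sums (in-degrees) of A.
    Since A is lower-triangular (token i attends only to tokens j <= i),
    L is lower-triangular and its eigenvalues are its diagonal entries.
    """
    n = len(attn)
    for i, row in enumerate(attn):
        if len(row) != n:
            raise ValueError("attention map must be square")
        if any(row[j] != 0.0 for j in range(i + 1, n)):
            raise ValueError("attention map must be causal (lower-triangular)")
    # In-degree of token j: total attention it receives from all tokens.
    in_degree = [sum(attn[i][j] for i in range(n)) for j in range(n)]
    return [in_degree[j] - attn[j][j] for j in range(n)]


def lapeigvals_features(attn_maps: List[Matrix], k: int) -> List[float]:
    """Concatenate the top-k Laplacian eigenvalues of each attention map.

    attn_maps holds one matrix per (layer, head). Maps with fewer than k
    tokens are zero-padded so every answer yields a fixed-length vector.
    """
    features: List[float] = []
    for attn in attn_maps:
        top = sorted(laplacian_eigvals(attn), reverse=True)[:k]
        features.extend(top + [0.0] * (k - len(top)))
    return features
```

For the 3-token map `[[1.0, 0.0, 0.0], [0.5, 0.5, 0.0], [0.2, 0.3, 0.5]]`, the in-degrees are 1.7, 0.8 and 0.5, so `laplacian_eigvals` returns approximately `[0.7, 0.3, 0.0]`. Using row sums as the degree instead would give D = I for softmax attention, reducing every eigenvalue to 1 - a_ii; the in-degree choice also retains how much later tokens attend to each token.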

### Key Observations

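- **Dual Pathway Architecture**: One pathway derives label-free features from the LLM's internals (Attention Maps → LapEigvals); the other derives labels by having a Judge LLM grade the generated answers. The two pathways meet at the Hallucination Probe, which is trained in a supervised way on the judge's labels.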

- **Logistic Regression as Final Classifier**: Because the Hallucination Probe is a logistic regression, it outputs a probability that an answer is hallucinated, which can be thresholded into a binary decision.

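- **Direction of Supervision**: None of the flows listed above leads from the Hallucination Probe or the Judge LLM back to the answering LLM, so the dashed arrows are more plausibly a training-time signal (labels supervising the probe) than a loop that iteratively refines the LLM.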
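The Hallucination Probe is an ordinary logistic regression. As a minimal, dependency-free sketch, assuming each feature vector is a plain list of floats (for example, LapEigvals features) and each label is 1 for hallucinated and 0 otherwise, it can be fit by batch gradient descent on the mean log-loss. The learning rate, epoch count, and the names `train_probe` and `predict_proba` are illustrative choices, not values from the source; in practice a library implementation such as scikit-learn's `LogisticRegression` would normally be used.

```python
import math
from typing import List, Tuple


def sigmoid(z: float) -> float:
    # Numerically stable logistic function (avoids overflow in exp).
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def predict_proba(weights: List[float], bias: float, x: List[float]) -> float:
    """Probability that the answer with feature vector x is hallucinated."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


def train_probe(
    X: List[List[float]], y: List[int], lr: float = 0.5, epochs: int = 2000
) -> Tuple[List[float], float]:
    """Fit logistic regression by batch gradient descent on mean log-loss."""
    n, d = len(X), len(X[0])
    weights, bias = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * d, 0.0
        for x, label in zip(X, y):
            # Gradient of the log-loss w.r.t. the logit is (p - label).
            err = predict_proba(weights, bias, x) - label
            for j in range(d):
                grad_w[j] += err * x[j]
            grad_b += err
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias
```

Plain gradient descent is used only to keep the sketch self-contained. On linearly separable data the unregularized weights keep growing without converging, which is one reason library implementations such as scikit-learn's apply L2 regularization by default.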

### Interpretation

This pipeline demonstrates a hybrid approach to hallucination detection in QA systems:

1. **Attention Maps** show how the LLM distributes attention across tokens while answering; their Laplacian eigenvalues condense this structure into a compact set of features.

2. **Judge LLM** serves as a proxy for ground truth: it labels each answer, and those labels are used to train the logistic regression model. Any mistakes the judge makes therefore become label noise for the probe.

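3. **Logistic Regression** (Hallucination Probe) takes the LapEigvals features as inputs and the Judge LLM's labels as targets. Once trained, it predicts hallucination from attention-derived features alone, without querying the judge, and its linear form keeps it cheap to run and easy to inspect.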
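The division of labor in points 2 and 3 (the judge supplies targets, the probe consumes features) can be sketched as a dataset-assembly step. This is a hypothetical illustration: it assumes the QA dataset provides a reference answer, that an answer judged incorrect counts as hallucinated, and that the judge is any function from a prompt string to a reply string, so a real LLM client could be substituted. The prompt wording, the verdict keywords, and the names `JUDGE_TEMPLATE`, `parse_verdict`, and `build_training_set` are all assumptions, not details from the source.

```python
from typing import Callable, List, Optional, Sequence, Tuple

# Hypothetical judge prompt; the source does not specify its wording.
JUDGE_TEMPLATE = (
    "Question: {question}\n"
    "Reference answer: {reference}\n"
    "Model answer: {answer}\n"
    "Is the model answer correct? Reply with CORRECT or INCORRECT."
)


def parse_verdict(reply: str) -> Optional[int]:
    """Map a judge reply to a label: 1 = hallucinated, 0 = not, None = unclear."""
    text = reply.strip().upper()
    if text.startswith("INCORRECT"):
        return 1
    if text.startswith("CORRECT"):
        return 0
    return None


def build_training_set(
    examples: Sequence[Tuple[str, str, str, List[float]]],
    judge: Callable[[str], str],
) -> Tuple[List[List[float]], List[int]]:
    """Pair each answer's attention features with the judge's label.

    Each example is (question, reference, answer, features).
    """
    X: List[List[float]] = []
    y: List[int] = []
    for question, reference, answer, features in examples:
        prompt = JUDGE_TEMPLATE.format(
            question=question, reference=reference, answer=answer
        )
        label = parse_verdict(judge(prompt))
        if label is None:
            continue  # drop examples the judge did not label clearly
        X.append(features)
        y.append(label)
    return X, y
```

The returned `X` and `y` can be passed to any logistic-regression trainer. Unparseable verdicts are dropped rather than guessed, so judge failures shrink the training set instead of injecting arbitrary labels; at inference time only the features are needed and the judge drops out of the loop.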

The pipeline thus treats hallucination detection as supervised learning over the LLM's internal signals: an LLM judge supplies labels during training, and a lightweight probe over attention-derived spectral features produces a probabilistic hallucination score at inference time.