## Line Chart: Model Performance on Math Problems

### Overview

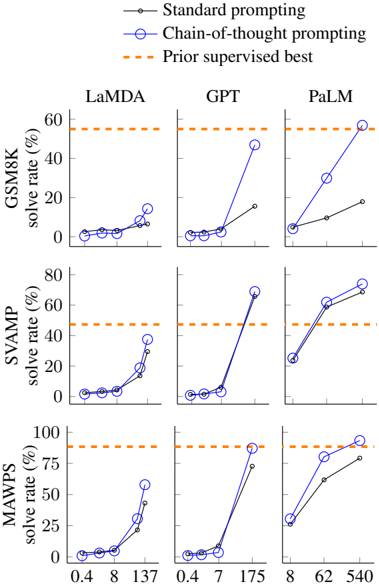

The image presents a 3×3 grid of line charts comparing the performance of three large language model families (LaMDA, GPT, and PaLM) on three math word problem datasets (GSM8K, SVAMP, and MAWPS). Performance is measured by "solve rate" (the percentage of problems solved correctly). Each chart compares "Standard prompting" with "Chain-of-thought prompting" and marks a "Prior supervised best" level as a reference.

### Components/Axes

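* **X-axis:** Represents model size. The tick labels read 0.4, 8, 137, 7, 175, 62, and 540; because this sequence is not monotonic, the ticks evidently differ per model column (the analysis below references 8 and 137 for LaMDA, 7 and 175 for GPT, and 62 and 540 for PaLM). The units are not explicitly stated, but the values most likely denote parameter counts in billions.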

* **Y-axis:** Represents "solve rate (%)", ranging from 0% to 100%.

* **Datasets:** GSM8K, SVAMP, and MAWPS are displayed as rows.

* **Models:** LaMDA, GPT, and PaLM are displayed as columns.

* **Legend:**

  * Black line: "Standard prompting"

  * Blue line with circle markers: "Chain-of-thought prompting"

  * Orange dashed line: "Prior supervised best"

### Detailed Analysis

**GSM8K Dataset:**

* **LaMDA:** The "Standard prompting" line (black) remains relatively flat, starting at approximately 5% and ending around 10%. The "Chain-of-thought prompting" line (blue) starts at approximately 5%, rises to around 20% at x=8, then plateaus around 20-25%. The "Prior supervised best" (orange dashed) is at approximately 55%.

* **GPT:** The "Standard prompting" line (black) starts at approximately 5%, rises to around 10% at x=7, then plateaus. The "Chain-of-thought prompting" line (blue) starts at approximately 5%, rises sharply to around 45% at x=7, then continues to approximately 50% at x=175. The "Prior supervised best" (orange dashed) is at approximately 55%.

* **PaLM:** The "Standard prompting" line (black) starts at approximately 5%, rises to around 15% at x=62, then plateaus. The "Chain-of-thought prompting" line (blue) starts at approximately 5%, rises sharply to around 40% at x=62, then continues to approximately 45% at x=540. The "Prior supervised best" (orange dashed) is at approximately 55%.

**SVAMP Dataset:**

* **LaMDA:** The "Standard prompting" line (black) remains flat around 5-10%. The "Chain-of-thought prompting" line (blue) starts at approximately 5%, rises to around 40% at x=8, then continues to approximately 45% at x=137. The "Prior supervised best" (orange dashed) is at approximately 60%.

* **GPT:** The "Standard prompting" line (black) remains flat around 5-10%. The "Chain-of-thought prompting" line (blue) starts at approximately 5%, rises sharply to around 60% at x=7, then plateaus. The "Prior supervised best" (orange dashed) is at approximately 60%.

* **PaLM:** The "Standard prompting" line (black) remains flat around 5-10%. The "Chain-of-thought prompting" line (blue) starts at approximately 5%, rises to around 55% at x=62, then continues to approximately 60% at x=540. The "Prior supervised best" (orange dashed) is at approximately 60%.

**MAWPS Dataset:**

* **LaMDA:** The "Standard prompting" line (black) starts at approximately 5%, rises to around 25% at x=137. The "Chain-of-thought prompting" line (blue) starts at approximately 5%, rises sharply to around 75% at x=8, then continues to approximately 80% at x=137. The "Prior supervised best" (orange dashed) is at approximately 75%.

* **GPT:** The "Standard prompting" line (black) starts at approximately 5%, rises to around 30% at x=175. The "Chain-of-thought prompting" line (blue) starts at approximately 5%, rises sharply to around 90% at x=7, then continues to approximately 95% at x=175. The "Prior supervised best" (orange dashed) is at approximately 75%.

* **PaLM:** The "Standard prompting" line (black) starts at approximately 5%, rises to around 40% at x=540. The "Chain-of-thought prompting" line (blue) starts at approximately 5%, rises sharply to around 75% at x=62, then continues to approximately 80% at x=540. The "Prior supervised best" (orange dashed) is at approximately 75%.
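The end-of-curve readings above can be checked against the "Prior supervised best" line programmatically. The following is a minimal sketch, not part of the original figure: the dictionary names and the helper `panels_matching_prior_best` are hypothetical, the numbers are the approximate values quoted in this section, and a quoted range such as "20-25%" is replaced by its midpoint as an assumption.

```python
# Approximate chain-of-thought solve rates (%) at the largest model size,
# taken from the descriptions in this section. Ranges ("20-25%") are
# replaced by their midpoint, which is an assumption of this sketch.
PRIOR_BEST = {"GSM8K": 55.0, "SVAMP": 60.0, "MAWPS": 75.0}

COT_FINAL = {
    ("GSM8K", "LaMDA"): 22.5,
    ("GSM8K", "GPT"): 50.0,
    ("GSM8K", "PaLM"): 45.0,
    ("SVAMP", "LaMDA"): 45.0,
    ("SVAMP", "GPT"): 60.0,
    ("SVAMP", "PaLM"): 60.0,
    ("MAWPS", "LaMDA"): 80.0,
    ("MAWPS", "GPT"): 95.0,
    ("MAWPS", "PaLM"): 80.0,
}


def panels_matching_prior_best(cot, prior):
    """Return the (dataset, model) panels whose final chain-of-thought
    solve rate is at or above the prior supervised best for that dataset."""
    return sorted(key for key, rate in cot.items() if rate >= prior[key[0]])


matched = panels_matching_prior_best(COT_FINAL, PRIOR_BEST)
for dataset, model in matched:
    margin = COT_FINAL[(dataset, model)] - PRIOR_BEST[dataset]
    print(f"{dataset:6s} {model:6s} margin over prior best: {margin:+.1f} points")
```

Because every input is an eyeballed reading from a chart, the margins are only indicative; differences of a few points should not be treated as significant.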

### Key Observations

* "Chain-of-thought prompting" outperforms "Standard prompting" at the larger model sizes for every model and dataset; at the smallest size, both methods start at roughly 5%.

* Performance generally increases with model size (larger x-values), particularly for "Chain-of-thought prompting".

* The "Prior supervised best" level is not reached on GSM8K (GPT comes closest at roughly 50% versus 55%), is matched by GPT and PaLM on SVAMP (about 60%), and is matched or exceeded by all three models on MAWPS (about 80-95% versus 75%).

* GPT and PaLM show more dramatic improvements with "Chain-of-thought prompting" than LaMDA.

* The MAWPS dataset shows the largest performance gains from "Chain-of-thought prompting".
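The gain comparisons in the observations above can be reproduced from the approximate readings in this section. This is a minimal sketch under stated assumptions: the name `FINAL_RATES` and the helper `mean_gain_by` are hypothetical, values are the end-of-curve readings at the largest model size, and quoted ranges ("5-10%", "20-25%") are replaced by their midpoints.

```python
from collections import defaultdict

# (dataset, model) -> (standard, chain_of_thought) solve rates in percent,
# read at the largest model size. Midpoints stand in for quoted ranges,
# which is an assumption of this sketch.
FINAL_RATES = {
    ("GSM8K", "LaMDA"): (10.0, 22.5),
    ("GSM8K", "GPT"): (10.0, 50.0),
    ("GSM8K", "PaLM"): (15.0, 45.0),
    ("SVAMP", "LaMDA"): (7.5, 45.0),
    ("SVAMP", "GPT"): (7.5, 60.0),
    ("SVAMP", "PaLM"): (7.5, 60.0),
    ("MAWPS", "LaMDA"): (25.0, 80.0),
    ("MAWPS", "GPT"): (30.0, 95.0),
    ("MAWPS", "PaLM"): (40.0, 80.0),
}


def mean_gain_by(rates, index):
    """Average (chain-of-thought minus standard) gain in percentage points,
    grouped by dataset (index=0) or by model (index=1)."""
    groups = defaultdict(list)
    for key, (standard, cot) in rates.items():
        groups[key[index]].append(cot - standard)
    return {name: sum(gains) / len(gains) for name, gains in groups.items()}


gain_by_dataset = mean_gain_by(FINAL_RATES, 0)
gain_by_model = mean_gain_by(FINAL_RATES, 1)

for label, table in (("dataset", gain_by_dataset), ("model", gain_by_model)):
    for name, gain in sorted(table.items(), key=lambda item: -item[1]):
        print(f"mean gain by {label}: {name:6s} {gain:5.1f} points")
```

With these readings, MAWPS has the largest mean gain and LaMDA the smallest, which matches the observations above. The ranking between GPT and PaLM depends on readings that differ by only a few points, so it should be treated with caution.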

### Interpretation

The data suggests that "Chain-of-thought prompting" is an effective technique for improving the performance of large language models on math problems. The gains appear for every model and dataset shown, which indicates that the effect is not specific to one dataset or one model family. The gains also grow with model size: at the smallest sizes both prompting methods sit near 5%, while at larger sizes the chain-of-thought curves pull well ahead, suggesting that larger models are better able to exploit the technique. Relative to the "Prior supervised best" line, the results are mixed: the models fall short on GSM8K, roughly match it on SVAMP (GPT and PaLM), and match or exceed it on MAWPS. Chain-of-thought prompting is therefore competitive with prior supervised results on some datasets, while GSM8K leaves clear room for improvement. The differences between model families may reflect architecture, training data, or scale; the charts alone cannot separate these factors. The particularly large gains on MAWPS may indicate that this dataset benefits more from step-by-step reasoning than the others.