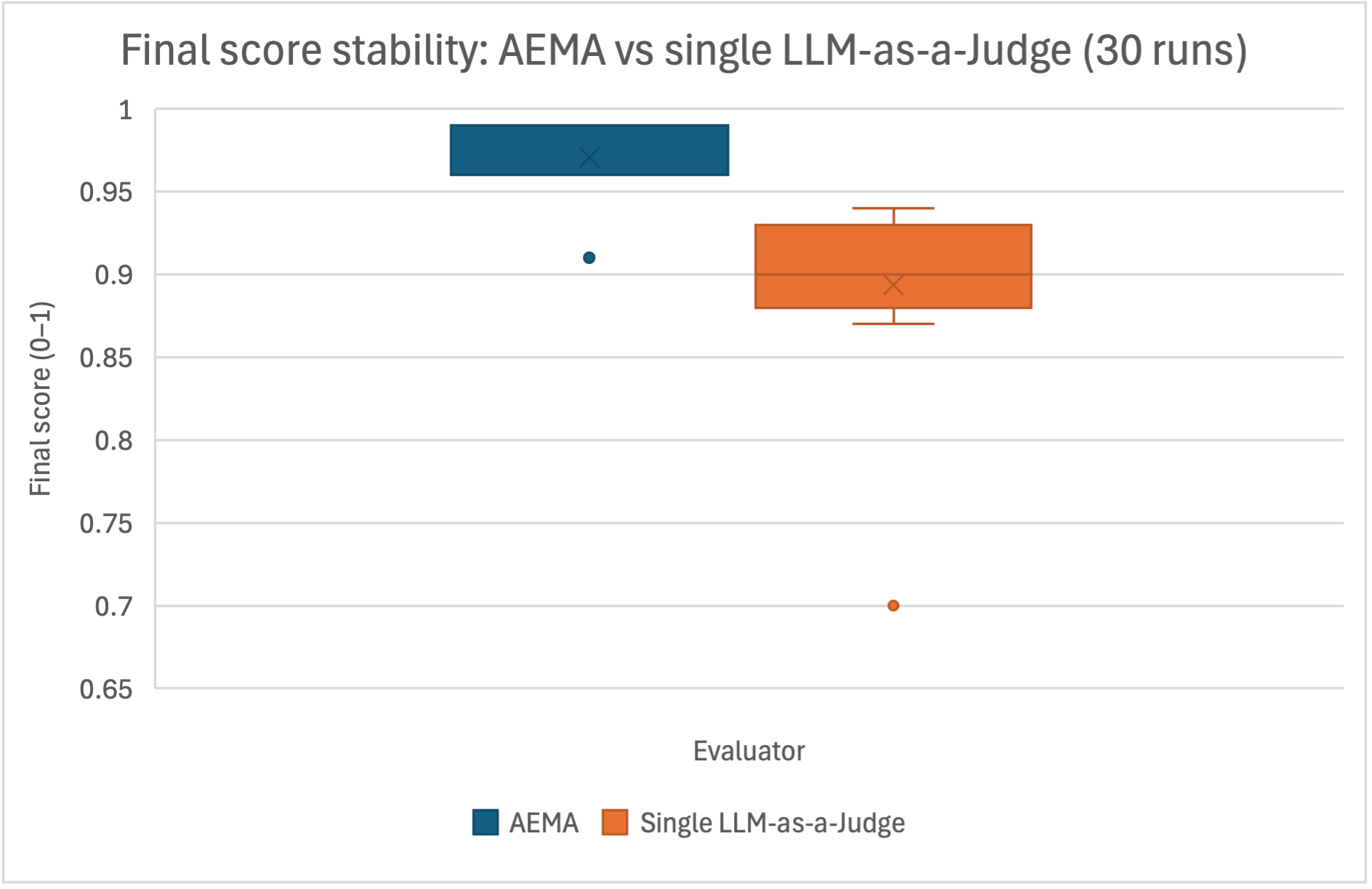

## Box Plot: Final Score Stability Comparison

### Overview

This image is a box plot comparing the stability of final scores (on a 0-1 scale) between two evaluation methods, "AEMA" and "Single LLM-as-a-Judge," across 30 runs. The chart shows the distribution, central tendency, and variability of the scores for each method.

### Components/Axes

* **Chart Title:** "Final score stability: AEMA vs single LLM-as-a-Judge (30 runs)"

* **Y-Axis:**
  * **Label:** "Final score (0-1)"
  * **Scale:** Linear scale from 0.65 to 1.0, with major gridlines at intervals of 0.05 (0.65, 0.7, 0.75, 0.8, 0.85, 0.9, 0.95, 1).

* **X-Axis:**
  * **Label:** "Evaluator"
  * **Categories:** Two categories are plotted: "AEMA" (left) and "Single LLM-as-a-Judge" (right).

* **Legend:** Located at the bottom center of the chart.
  * A blue square is labeled "AEMA".
  * An orange square is labeled "Single LLM-as-a-Judge".

* **Data Series:** Two box plots, one for each evaluator, colored according to the legend.

### Detailed Analysis

**1. AEMA (Blue Box Plot - Left)**

* **Visual Trend:** The distribution is extremely compressed and located at the very top of the score range, indicating high consistency and high scores.

* **Components & Approximate Values:**
  * **Median (Horizontal Line within Box):** Approximately 0.97.
  * **Interquartile Range (IQR - The Box):** The box is very narrow. The lower quartile (Q1, bottom of the box) is approximately 0.96. The upper quartile (Q3, top of the box) is approximately 0.98.
  * **Whiskers:** The whiskers are not visibly extended beyond the box, suggesting the minimum and maximum values (excluding outliers) are very close to the quartiles.
  * **Outliers:** One outlier point is visible below the box, at approximately 0.91.
  * **Mean (Marked with an 'x'):** The 'x' is positioned within the box, near the median, at approximately 0.97.
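
The chart does not state which whisker rule it uses. A common convention, and the default in tools such as matplotlib's `boxplot` (`whis=1.5`), is Tukey's rule: points more than 1.5 × IQR beyond the quartiles are drawn as outliers. Assuming that rule, the sketch below checks the reported outliers against the approximate quartiles read off the chart. The function names are illustrative, and the inputs are visual estimates rather than the underlying run data.

```python
def tukey_fences(q1: float, q3: float, k: float = 1.5) -> tuple[float, float]:
    """Return the (lower, upper) Tukey fences; points outside them are plotted as outliers."""
    iqr = q3 - q1
    return q1 - k * iqr, q3 + k * iqr


def is_outlier(value: float, q1: float, q3: float, k: float = 1.5) -> bool:
    """True if `value` lies outside the Tukey fences built from Q1 and Q3."""
    lower, upper = tukey_fences(q1, q3, k)
    return value < lower or value > upper


# Approximate quartiles read off the chart (assumed, not the raw run data).
lower, upper = tukey_fences(0.96, 0.98)
print(f"AEMA fences: {lower:.3f} to {upper:.3f}")  # prints "AEMA fences: 0.930 to 1.010"
print("AEMA 0.91 is an outlier:", is_outlier(0.91, 0.96, 0.98))
print("Single 0.70 is an outlier:", is_outlier(0.70, 0.88, 0.93))
```

With Q1 ≈ 0.96 and Q3 ≈ 0.98, the lower fence is about 0.93, so the AEMA point at ~0.91 falls outside it, matching the single outlier drawn below the box. The Single LLM-as-a-Judge quartiles (0.88, 0.93) give a lower fence of about 0.805, which places the ~0.70 run well outside its whiskers.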

**2. Single LLM-as-a-Judge (Orange Box Plot - Right)**

* **Visual Trend:** The distribution shows considerably more spread (variability) than AEMA, and its scores are centered lower on the scale. The box is taller, and the whiskers are longer.

* **Components & Approximate Values:**
  * **Median (Horizontal Line within Box):** Approximately 0.90.
  * **Interquartile Range (IQR - The Box):** The box spans from a lower quartile (Q1) of approximately 0.88 to an upper quartile (Q3) of approximately 0.93.
  * **Whiskers:** The upper whisker extends to a maximum value (excluding outliers) of approximately 0.94. The lower whisker extends to a minimum value (excluding outliers) of approximately 0.87.
  * **Outliers:** One outlier point is visible far below the lower whisker, at approximately 0.70.
  * **Mean (Marked with an 'x'):** The 'x' is positioned slightly below the median line, at approximately 0.895.
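
A mean sitting slightly below the median is what a single low run would produce. The back-of-the-envelope check below assumes, hypothetically (the chart does not report it), that the 29 non-outlier runs average about the median of 0.90 and that the remaining run scores ~0.70. The helper name is illustrative.

```python
def mean_with_one_outlier(typical_mean: float, n_runs: int, outlier: float) -> float:
    """Mean of n_runs scores when n_runs - 1 of them average `typical_mean`
    and the remaining run scores `outlier`."""
    return ((n_runs - 1) * typical_mean + outlier) / n_runs


# Hypothetical inputs: 30 runs, the 29 typical runs assumed to average ~0.90.
mean = mean_with_one_outlier(typical_mean=0.90, n_runs=30, outlier=0.70)
print(f"mean: {mean:.4f}, shift: {mean - 0.90:+.4f}")  # prints "mean: 0.8933, shift: -0.0067"
```

A shift of roughly 0.007 brings the mean to about 0.893, close to the ~0.895 read off the chart, so the outlier alone plausibly accounts for the gap between mean and median. This is a consistency check under an assumed input, not a reconstruction of the actual scores.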

### Key Observations

1. **Stability Contrast:** AEMA shows markedly better score stability. Its IQR (approx. 0.02) is roughly 2.5 times narrower than that of the Single LLM-as-a-Judge (approx. 0.05), i.e., less than half as wide, though well short of an order of magnitude.

2. **Performance Level:** AEMA is not only more stable but also has a higher central tendency (median ~0.97 vs. ~0.90).

3. **Outliers:** Each method produced one outlier. AEMA's outlier (~0.91) is still a high score that merely falls outside its own tight cluster. The Single LLM-as-a-Judge produced a severe low outlier (~0.70), indicating a run with markedly degraded performance.

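4. **Distribution Shape:** The Single LLM-as-a-Judge's median (~0.90) is closer to its Q1 (~0.88) than to its Q3 (~0.93), suggesting a slight positive skew within the IQR (scores are more concentrated toward the lower end of the box). The mean (~0.895) nonetheless sits slightly below the median, consistent with the single low outlier pulling the mean down.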
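
The quartile arithmetic behind observations 1 and 4 can be checked from the approximate values read off the chart. The sketch computes the IQR ratio and Bowley's quartile skewness coefficient, (Q3 + Q1 - 2·median) / (Q3 - Q1), a standard skewness measure that uses only the box. The inputs are chart estimates, not raw data, and the helper names are illustrative.

```python
import math


def iqr(q1: float, q3: float) -> float:
    """Interquartile range: the height of the box."""
    return q3 - q1


def bowley_skewness(q1: float, median: float, q3: float) -> float:
    """Quartile (Bowley) skewness in [-1, 1]; positive when the median sits nearer Q1."""
    return (q3 + q1 - 2 * median) / (q3 - q1)


# Approximate (Q1, median, Q3) values read off the chart, not raw data.
aema = (0.96, 0.97, 0.98)
single = (0.88, 0.90, 0.93)

ratio = iqr(single[0], single[2]) / iqr(aema[0], aema[2])
print(f"IQR ratio: {ratio:.1f}x ({math.log10(ratio):.2f} orders of magnitude)")
print(f"Single-judge quartile skewness: {bowley_skewness(*single):.2f}")
```

A ratio of about 2.5 (roughly 0.4 orders of magnitude) is well short of the tenfold gap an order of magnitude would imply, and a quartile skewness of about 0.2 confirms a mild positive skew inside the Single LLM-as-a-Judge box. AEMA's roughly symmetric quartiles give a skewness near zero.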

### Interpretation

This chart provides strong evidence that the **AEMA evaluation method is markedly more reliable and consistent** than using a Single LLM-as-a-Judge. The tight clustering of AEMA scores near the top of the scale (median ~0.97) suggests that it produces highly reproducible results across runs. In contrast, the Single LLM-as-a-Judge exhibits substantial volatility, with a wider spread of scores and the potential for sharply degraded runs (as evidenced by the outlier at ~0.70).

For practical application, this implies that relying on a single LLM run for evaluation introduces substantial noise and risk. AEMA, presumably by aggregating or stabilizing judgments, mitigates this instability, leading to more trustworthy and actionable scores. The data argue for methods like AEMA in any rigorous evaluation pipeline where consistency is critical.
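
The interpretation guesses that AEMA stabilizes scores by aggregating judgments. The figure does not describe AEMA's mechanism, so the sketch below is a hypothetical toy model, not AEMA itself: each run's score is the mean of `judges_per_run` independent judgments, each an assumed true score of 0.90 plus Gaussian noise (assumed standard deviation 0.05), clipped to the 0-1 scale. Under independence, averaging k judgments shrinks the run-to-run standard deviation by roughly 1/√k.

```python
import random
import statistics


def simulate_run_scores(n_runs: int, judges_per_run: int, true_score: float = 0.90,
                        noise_sd: float = 0.05, seed: int = 0) -> list[float]:
    """Hypothetical toy model: each run's final score is the mean of
    `judges_per_run` independent judgments, each equal to `true_score` plus
    Gaussian noise, clipped to the 0-1 scale."""
    rng = random.Random(seed)
    scores = []
    for _ in range(n_runs):
        judgments = [min(1.0, max(0.0, rng.gauss(true_score, noise_sd)))
                     for _ in range(judges_per_run)]
        scores.append(statistics.fmean(judgments))
    return scores


single = simulate_run_scores(n_runs=30, judges_per_run=1)
aggregated = simulate_run_scores(n_runs=30, judges_per_run=5)
print(f"single-judge spread (stdev): {statistics.stdev(single):.3f}")
print(f"5-judge mean spread (stdev): {statistics.stdev(aggregated):.3f}")
```

With five independent judgments per run, the spread should fall to roughly 45% of the single-judge spread (1/√5 ≈ 0.45). If the judgments share correlated errors, for example the same model and prompt reused, averaging shrinks the spread less.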