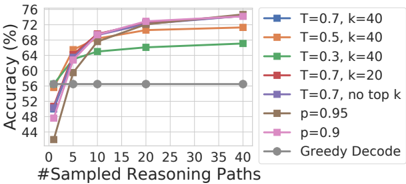

## Line Chart: Accuracy vs. Number of Sampled Reasoning Paths for Various Decoding Strategies

### Overview

The image is a line chart comparing the accuracy of different text generation decoding strategies as the number of sampled reasoning paths increases. Accuracy improves with more sampled paths for every sampling-based strategy, while the Greedy Decode baseline stays flat. The strategies differ in where they start, how quickly they improve, and where they plateau.

### Components/Axes

* **Chart Type:** Multi-line chart with markers.

* **X-Axis:**

  * **Label:** `#Sampled Reasoning Paths`

  * **Scale:** Linear, from 0 to 40.

  * **Major Ticks/Markers:** 0, 5, 10, 20, 30, 40.

* **Y-Axis:**

  * **Label:** `Accuracy (%)`

  * **Scale:** Linear, from 44 to 76.

  * **Major Ticks:** 44, 48, 52, 56, 60, 64, 68, 72, 76.

* **Legend:** Positioned on the right side, outside the main plot area. It lists 8 distinct data series with corresponding colors and marker styles.

1. `T=0.7, k=40` (Blue line, square marker)

2. `T=0.5, k=40` (Orange line, square marker)

3. `T=0.3, k=40` (Green line, square marker)

4. `T=0.7, k=20` (Red line, square marker)

5. `T=0.7, no top k` (Purple line, square marker)

6. `p=0.95` (Brown line, square marker)

7. `p=0.9` (Pink line, square marker)

8. `Greedy Decode` (Gray line, circle marker)
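The legend parameters correspond to common token-sampling controls: temperature `T`, top-k truncation `k`, and the nucleus (top-p) threshold `p`. The chart does not say how these were implemented, so the following is only a minimal sketch of one conventional way to apply them to a vector of logits. The function names `filtered_distribution`, `greedy_token`, and `sample_token` are hypothetical and chosen for illustration.

```python
import math
import random


def filtered_distribution(logits, temperature=1.0, top_k=None, top_p=None):
    """Token probabilities after temperature scaling and optional truncation.

    Illustrative only: a conventional formulation, not the chart's source code.
    """
    if temperature <= 0:
        raise ValueError("temperature must be positive; use greedy_token instead")

    # Temperature scaling followed by a numerically stable softmax.
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    probs = [e / total for e in exps]

    # Token indices ranked from most to least likely.
    keep = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)

    # Top-k: keep only the k most likely tokens.
    if top_k is not None:
        keep = keep[:top_k]

    # Nucleus (top-p): keep the smallest prefix whose mass reaches top_p.
    if top_p is not None:
        cumulative, nucleus = 0.0, []
        for i in keep:
            nucleus.append(i)
            cumulative += probs[i]
            if cumulative >= top_p:
                break
        keep = nucleus

    # Renormalize over the surviving tokens; everything else gets zero.
    kept = set(keep)
    kept_mass = sum(probs[i] for i in keep)
    return [probs[i] / kept_mass if i in kept else 0.0 for i in range(len(probs))]


def greedy_token(logits):
    """Greedy decoding: always pick the single most likely token."""
    return max(range(len(logits)), key=lambda i: logits[i])


def sample_token(logits, rng, **kwargs):
    """Draw one token index from the filtered distribution."""
    probs = filtered_distribution(logits, **kwargs)
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]
```

Under this sketch, a series such as `T=0.7, k=40` would correspond to `sample_token(logits, rng, temperature=0.7, top_k=40)`, and `Greedy Decode` to `greedy_token(logits)`. Lowering the temperature concentrates probability on the top token, which reduces the diversity of sampled paths.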

### Detailed Analysis

The chart plots accuracy (%) against the number of sampled reasoning paths (0, 5, 10, 20, 30, 40) for each decoding strategy. Below is an analysis of each series, including its visual trend and approximate data points.

**Trend Verification & Data Points (Approximate):**

1. **`T=0.7, k=40` (Blue, Square):**

* **Trend:** Steep initial rise, then plateaus at a high level.

* **Points:** (0, ~56%), (5, ~68%), (10, ~70%), (20, ~72%), (30, ~73%), (40, ~74%).

2. **`T=0.5, k=40` (Orange, Square):**

* **Trend:** Very similar to the blue line, slightly lower final accuracy.

* **Points:** (0, ~56%), (5, ~67%), (10, ~69%), (20, ~71%), (30, ~72%), (40, ~73%).

3. **`T=0.3, k=40` (Green, Square):**

* **Trend:** Rises more slowly and plateaus at a significantly lower accuracy than the T=0.5/0.7 lines.

* **Points:** (0, ~56%), (5, ~62%), (10, ~64%), (20, ~65%), (30, ~66%), (40, ~66%).

4. **`T=0.7, k=20` (Red, Square):**

* **Trend:** Follows a path very close to the `T=0.7, k=40` line, nearly indistinguishable at many points.

* **Points:** (0, ~56%), (5, ~68%), (10, ~70%), (20, ~72%), (30, ~73%), (40, ~74%).

5. **`T=0.7, no top k` (Purple, Square):**

* **Trend:** Starts lower than the top-k variants but rises to meet them at higher path counts.

* **Points:** (0, ~48%), (5, ~64%), (10, ~68%), (20, ~71%), (30, ~72%), (40, ~73%).

6. **`p=0.95` (Brown, Square):**

* **Trend:** Starts very low, rises sharply, and converges with the top-performing group.

* **Points:** (0, ~44%), (5, ~64%), (10, ~69%), (20, ~72%), (30, ~73%), (40, ~74%).

7. **`p=0.9` (Pink, Square):**

* **Trend:** Nearly identical to the `p=0.95` line.

* **Points:** (0, ~46%), (5, ~64%), (10, ~69%), (20, ~72%), (30, ~73%), (40, ~74%).

8. **`Greedy Decode` (Gray, Circle):**

* **Trend:** **Flat line.** Accuracy does not change with the number of sampled reasoning paths.

* **Points:** Constant at approximately 56% across all x-values (0, 5, 10, 20, 30, 40).

### Key Observations

1. **Performance Ceiling:** Every sampling-based series except `T=0.3, k=40` converges to roughly 71-74% accuracy once 20 or more reasoning paths are sampled. `T=0.3, k=40` plateaus lower, at about 66%.

2. **Greedy Decode Baseline:** Greedy Decode serves as a flat baseline at ~56% accuracy. Because taking the most likely token at each step is deterministic, it always yields the same single reasoning path and cannot benefit from additional samples.

3. **Temperature (T) Impact:** With top-k sampling (k=40), the lower temperature (T=0.3) plateaus at about 66% at 40 paths, compared with ~73% for T=0.5 and ~74% for T=0.7.

4. **Top-k (k) Impact:** For T=0.7, reducing k from 40 to 20 (`T=0.7, k=20`) has a negligible effect: the two curves share the same approximate values at every path count. Removing top-k entirely (`T=0.7, no top k`) lowers accuracy at low path counts (~48% vs. ~56% at 0 paths) but closes to within about one point from 20 paths onward.

5. **Nucleus Sampling (p):** Both p=0.9 and p=0.95 perform nearly identically, starting from the lowest points on the chart (~46% and ~44%) but matching the top group (~72-74%) from 20 paths onward.

6. **Diminishing Returns:** For all improving methods, the largest gains occur between 0 and 10 sampled paths (roughly 8 to 25 points, depending on the series). The improvement from 20 to 40 paths is marginal, at about 1-2 points.
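The observations above can be checked against the approximate data points listed in the Detailed Analysis. The sketch below transcribes those values (read off the chart, so each is only accurate to about a point) and computes the early gain (0 to 10 paths), the late gain (20 to 40 paths), and the margin over Greedy Decode at 40 paths. The names `PATHS`, `ACCURACY`, `gain`, and `summarize` are illustrative.

```python
# Approximate accuracy (%) per series, transcribed from the chart description.
PATHS = [0, 5, 10, 20, 30, 40]
ACCURACY = {
    "T=0.7, k=40": [56, 68, 70, 72, 73, 74],
    "T=0.5, k=40": [56, 67, 69, 71, 72, 73],
    "T=0.3, k=40": [56, 62, 64, 65, 66, 66],
    "T=0.7, k=20": [56, 68, 70, 72, 73, 74],
    "T=0.7, no top k": [48, 64, 68, 71, 72, 73],
    "p=0.95": [44, 64, 69, 72, 73, 74],
    "p=0.9": [46, 64, 69, 72, 73, 74],
    "Greedy Decode": [56, 56, 56, 56, 56, 56],
}


def gain(series, start, end):
    """Accuracy change (percentage points) between two path counts."""
    values = ACCURACY[series]
    return values[PATHS.index(end)] - values[PATHS.index(start)]


def summarize():
    """Early gain, late gain, and margin over greedy at 40 paths, per series."""
    greedy_final = ACCURACY["Greedy Decode"][-1]
    return {
        name: {
            "early": gain(name, 0, 10),
            "late": gain(name, 20, 40),
            "vs_greedy": values[-1] - greedy_final,
        }
        for name, values in ACCURACY.items()
    }


if __name__ == "__main__":
    for name, row in summarize().items():
        print(f"{name:16s} early={row['early']:+3d} late={row['late']:+3d} "
              f"vs_greedy={row['vs_greedy']:+3d}")
```

With these approximate values, no series gains more than 2 points between 20 and 40 paths, while early gains range from 8 points (`T=0.3, k=40`) to 25 points (`p=0.95`).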

### Interpretation

This chart provides a technical comparison of decoding strategies for tasks requiring reasoning (e.g., chain-of-thought or self-consistency prompting). The data suggests:

* **Sampling is Crucial:** Methods that sample multiple reasoning paths and aggregate results (likely via majority vote) outperform the deterministic Greedy Decode baseline by roughly 10 to 18 points at 40 paths. This is consistent with the "self-consistency" paradigm.

* **Robustness of High Temperature:** Higher temperatures (0.5, 0.7) with top-k sampling (k=40) reach a higher plateau on this task than a low temperature (0.3), plausibly because they produce more diverse reasoning paths, so the aggregated answer is less likely to repeat a single path's error.

* **Efficiency vs. Performance:** There is a clear trade-off. Using 10-20 sampled paths captures most of the potential accuracy gain. Going to 40 paths yields only slight improvements at the cost of linearly increased computation.

* **Method Equivalence at Scale:** With sufficient sampling (≥20 paths), the specific choice between top-k at T=0.7 (k=20 or 40) and nucleus sampling (p=0.9/0.95; the legend does not state a temperature for these series) becomes less critical, as they all converge to a similar high-performance plateau. The starting point (accuracy at 0 paths) varies by up to about 12 points, but the gap closes with more samples.
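The aggregation step mentioned above is described only as "likely via majority vote", so the following is a minimal sketch under that assumption. It presumes each sampled reasoning path has already been reduced to a final answer string; `majority_vote` and `self_consistent_answer` are hypothetical helper names, and `sample_answer` stands in for whatever model call produces one sampled answer.

```python
from collections import Counter


def majority_vote(answers):
    """Return the most frequent final answer.

    Ties go to the answer that appeared first, because Counter.most_common
    orders equal counts by first occurrence.
    """
    if not answers:
        raise ValueError("need at least one sampled answer")
    return Counter(answers).most_common(1)[0][0]


def self_consistent_answer(sample_answer, num_paths):
    """Sample `num_paths` answers and aggregate them by majority vote.

    `sample_answer` is an assumed zero-argument callable that runs one
    sampled reasoning path and returns its final answer.
    """
    answers = [sample_answer() for _ in range(num_paths)]
    return majority_vote(answers)
```

For example, `majority_vote(["18", "18", "26", "18", "24"])` returns `"18"`. Cost grows linearly with `num_paths` because each path is a separate generation, which is the trade-off described under Efficiency vs. Performance.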

**In essence, the chart demonstrates that for complex reasoning tasks, investing computational resources into sampling and aggregating multiple diverse reasoning paths is a highly effective strategy, with diminishing returns beyond a certain point (≈20 paths).**