## Grouped Bar Chart: Prediction Flip Rate by Dataset and Model

### Overview

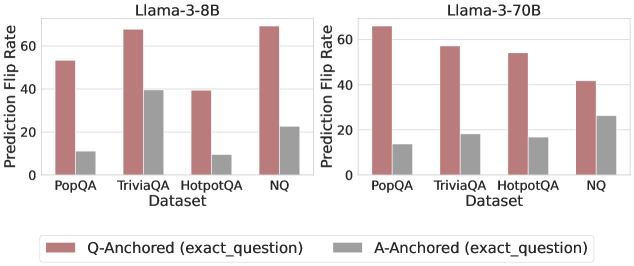

The image displays two side-by-side grouped bar charts comparing the "Prediction Flip Rate" for two different large language models (Llama-3-8B and Llama-3-70B) across four question-answering datasets. The charts evaluate the stability of model predictions under two different anchoring conditions.

### Components/Axes

* **Chart Titles (Top Center):**
  * Left Chart: `Llama-3-8B`
  * Right Chart: `Llama-3-70B`
* **Y-Axis (Left Side of Each Chart):**
  * Label: `Prediction Flip Rate`
  * Scale: starts at 0, with major tick marks at 0, 20, 40, and 60. Several of the estimated values below exceed 60, so the plotted range presumably extends above the top labeled tick.
* **X-Axis (Bottom of Each Chart):**
  * Label: `Dataset`
  * Categories (from left to right): `PopQA`, `TriviaQA`, `HotpotQA`, `NQ`
* **Legend (Bottom Center, spanning both charts):**
  * A red/brown square labeled: `Q-Anchored (exact_question)`
  * A gray square labeled: `A-Anchored (exact_question)`
* **Data Series:** Each dataset category has two bars, one for each anchoring condition, placed side-by-side.

### Detailed Analysis

**Llama-3-8B Chart (Left Panel):**

* **Trend Verification:** For all four datasets, the Q-Anchored (red/brown) bar is substantially taller than the A-Anchored (gray) bar, indicating a higher prediction flip rate under the Q-Anchored condition.

* **Data Points (Approximate Values):**
  * **PopQA:** Q-Anchored ≈ 53, A-Anchored ≈ 10
  * **TriviaQA:** Q-Anchored ≈ 68, A-Anchored ≈ 39
  * **HotpotQA:** Q-Anchored ≈ 39, A-Anchored ≈ 9
  * **NQ:** Q-Anchored ≈ 69, A-Anchored ≈ 22

**Llama-3-70B Chart (Right Panel):**

* **Trend Verification:** The same pattern holds: Q-Anchored bars are consistently taller than A-Anchored bars across all datasets. Relative to the 8B model, however, the bar heights do not shift uniformly downward: the Q-Anchored bars are lower on `TriviaQA` and `NQ` but higher on `PopQA` and `HotpotQA`.

* **Data Points (Approximate Values):**
  * **PopQA:** Q-Anchored ≈ 66, A-Anchored ≈ 14
  * **TriviaQA:** Q-Anchored ≈ 57, A-Anchored ≈ 18
  * **HotpotQA:** Q-Anchored ≈ 54, A-Anchored ≈ 17
  * **NQ:** Q-Anchored ≈ 42, A-Anchored ≈ 26
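
The approximate values above can be tabulated to check the Trend Verification claims numerically. The sketch below is plain Python; `FLIP_RATES` and `anchoring_gaps` are illustrative names introduced here, and the numbers are eyeball estimates read off the bars, not the underlying experimental data. It computes the Q-Anchored minus A-Anchored gap for each model and dataset.

```python
# Approximate bar heights estimated from the figure: (Q-Anchored, A-Anchored).
# These are visual readings of the chart, not the source experiment's data.
FLIP_RATES = {
    "Llama-3-8B": {
        "PopQA": (53, 10),
        "TriviaQA": (68, 39),
        "HotpotQA": (39, 9),
        "NQ": (69, 22),
    },
    "Llama-3-70B": {
        "PopQA": (66, 14),
        "TriviaQA": (57, 18),
        "HotpotQA": (54, 17),
        "NQ": (42, 26),
    },
}


def anchoring_gaps(rates):
    """Return {model: {dataset: Q-Anchored rate minus A-Anchored rate}}."""
    return {
        model: {dataset: q - a for dataset, (q, a) in per_dataset.items()}
        for model, per_dataset in rates.items()
    }


gaps = anchoring_gaps(FLIP_RATES)
for model, per_dataset in gaps.items():
    print(model, per_dataset)
```

Every gap comes out positive, consistent with both Trend Verification bullets; with these estimates the smallest gap is 70B on `NQ` (about 16 points) and the largest is 70B on `PopQA` (about 52 points). Because the inputs are read off a chart, differences of a few points should not be over-interpreted.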

### Key Observations

1. **Consistent Anchoring Effect:** Across both model sizes and all four datasets, the `Q-Anchored` condition yields a substantially higher prediction flip rate than the `A-Anchored` condition.

2. **Dataset Variability:** The magnitude of the flip rate varies by dataset. For example, in the 8B model `TriviaQA` and `NQ` show the highest Q-Anchored flip rates (≈68 and ≈69), while `HotpotQA` shows the lowest flip rates under both anchoring conditions (≈39 and ≈9).

3. **Model Size Comparison:** Scale does not uniformly reduce flip rates. Under Q-Anchoring, the Llama-3-70B model is lower than the 8B model on `TriviaQA` (≈57 vs. ≈68) and `NQ` (≈42 vs. ≈69) but higher on `PopQA` (≈66 vs. ≈53) and `HotpotQA` (≈54 vs. ≈39). Under A-Anchoring, it is lower only on `TriviaQA` (≈18 vs. ≈39).

4. **Relative Stability:** Seven of the eight A-Anchored bars fall below 30; the exception is the 8B model on `TriviaQA` (≈39). This suggests that predictions are more stable under the A-Anchored condition.
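
The model-size and stability observations can be checked against the same estimates. The sketch below is plain Python using the approximate bar heights from the Detailed Analysis; `FLIP_RATES`, `scale_deltas`, and `mean` are hypothetical helper names, not part of the original analysis. It computes the per-dataset change from Llama-3-8B to Llama-3-70B for each condition, the cross-dataset means, and the A-Anchored bars that are not below 30.

```python
# Approximate bar heights estimated from the figure: (Q-Anchored, A-Anchored).
# These are visual readings of the chart, not the source experiment's data.
FLIP_RATES = {
    "Llama-3-8B": {
        "PopQA": (53, 10),
        "TriviaQA": (68, 39),
        "HotpotQA": (39, 9),
        "NQ": (69, 22),
    },
    "Llama-3-70B": {
        "PopQA": (66, 14),
        "TriviaQA": (57, 18),
        "HotpotQA": (54, 17),
        "NQ": (42, 26),
    },
}
Q, A = 0, 1  # tuple positions of the two anchoring conditions


def scale_deltas(rates, condition):
    """Per-dataset flip rate of Llama-3-70B minus Llama-3-8B for one condition."""
    small, large = rates["Llama-3-8B"], rates["Llama-3-70B"]
    return {ds: large[ds][condition] - small[ds][condition] for ds in small}


def mean(values):
    """Arithmetic mean of a non-empty list of numbers."""
    return sum(values) / len(values)


q_delta = scale_deltas(FLIP_RATES, Q)
a_delta = scale_deltas(FLIP_RATES, A)
print("Q-Anchored, 70B minus 8B:", q_delta)
print("A-Anchored, 70B minus 8B:", a_delta)

for model, per_dataset in FLIP_RATES.items():
    q_mean = mean([pair[Q] for pair in per_dataset.values()])
    a_mean = mean([pair[A] for pair in per_dataset.values()])
    print(f"{model}: mean Q-Anchored = {q_mean:.2f}, mean A-Anchored = {a_mean:.2f}")

# A-Anchored bars that are NOT below 30 (exceptions to the "mostly below 30" pattern).
at_or_above_30 = [
    (model, ds, pair[A])
    for model, per_dataset in FLIP_RATES.items()
    for ds, pair in per_dataset.items()
    if pair[A] >= 30
]
print("A-Anchored >= 30:", at_or_above_30)
```

With these estimates, the Q-Anchored change from 8B to 70B is +13 on `PopQA`, -11 on `TriviaQA`, +15 on `HotpotQA`, and -27 on `NQ`, while the cross-dataset Q-Anchored means differ by only 2.5 points (57.25 vs. 54.75). The scale effect is therefore mixed rather than uniform, and the only A-Anchored bar at or above 30 is the 8B model on `TriviaQA`.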

### Interpretation

These data suggest that the **format of the prompt substantially influences the stability of a large language model's predictions**. Under the `Q-Anchored` condition, the model's output is more volatile and prone to "flipping" (changing) than under the `A-Anchored` condition, which the figure suggests corresponds to a more constrained, answer-oriented prompt format.

One possible reading is that the `Q-Anchored` condition introduces more interpretive ambiguity or contextual variability for the model, leading to less deterministic outputs. In contrast, the `A-Anchored` condition (likely meaning the prompt is structured around providing an answer) constrains the model's response space, leading to greater consistency.

The **anomaly** is that the larger model is not uniformly more stable: under Q-Anchoring, the 70B model's flip rate is higher than the 8B model's on `PopQA` and `HotpotQA`, even though it is lower on `TriviaQA` and `NQ`. This indicates that dataset-specific characteristics can interact with model scale in non-linear ways, and suggests that prompt engineering for stability may need to be tailored to both the model and the specific knowledge domain (dataset).