TECHNICAL ASSET FINGERPRINT

837475acf8b16c5f2a40bcbf


FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Line Charts: Model Performance on Math Problems

### Overview

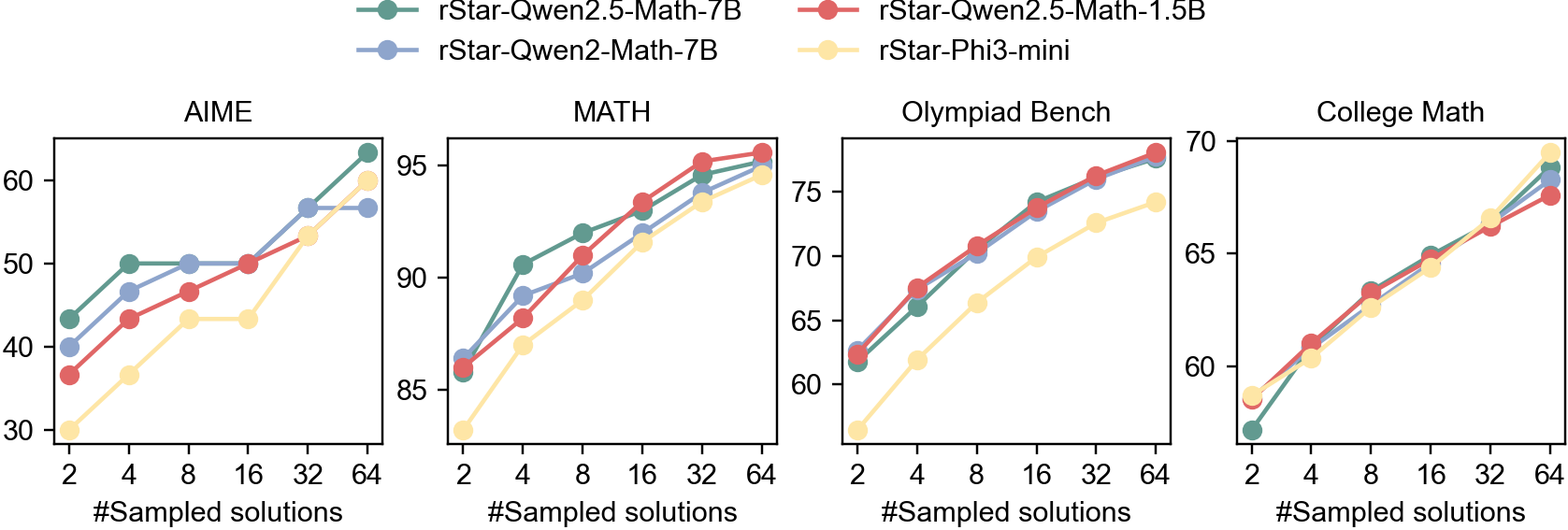

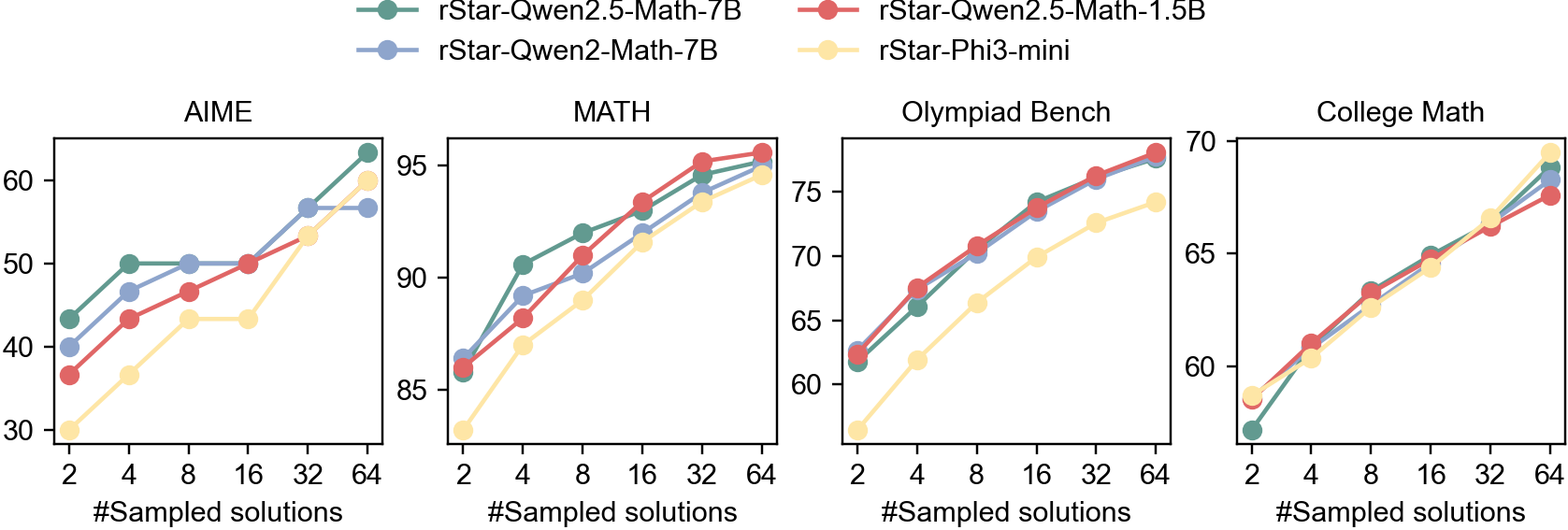

The image contains four line charts comparing the performance of four different language models (rStar-Qwen2.5-Math-7B, rStar-Qwen2-Math-7B, rStar-Qwen2.5-Math-1.5B, and rStar-Phi3-mini) on four different math problem datasets: AIME, MATH, Olympiad Bench, and College Math. The x-axis represents the number of sampled solutions (2, 4, 8, 16, 32, 64), and the y-axis represents the performance score.

### Components/Axes

* **Legend (Top):**

* Green: rStar-Qwen2.5-Math-7B

* Blue: rStar-Qwen2-Math-7B

* Red: rStar-Qwen2.5-Math-1.5B

* Yellow: rStar-Phi3-mini

* **X-axis (Horizontal):** "#Sampled solutions" with markers at 2, 4, 8, 16, 32, and 64.

* **Y-axis (Vertical):** Performance score. The scale varies for each chart.

* AIME: 30 to 60

* MATH: 85 to 95

* Olympiad Bench: 60 to 75

* College Math: 60 to 70

* **Chart Titles:** AIME, MATH, Olympiad Bench, College Math

### Detailed Analysis

**1. AIME**

* **rStar-Qwen2.5-Math-7B (Green):** Starts at approximately 42, rises to about 50 at 4 sampled solutions, stays near 50 through 16 sampled solutions, and then rises to approximately 63 at 64 sampled solutions.

* **rStar-Qwen2-Math-7B (Blue):** Starts at approximately 40, rises to about 49 at 4 sampled solutions, stays near 50 through 32 sampled solutions, and then rises to approximately 57 at 64 sampled solutions.

* **rStar-Qwen2.5-Math-1.5B (Red):** Starts at approximately 37, rises to about 47 at 4 sampled solutions, levels off near 50 through 16 sampled solutions, and then rises to approximately 58 at 64 sampled solutions.

* **rStar-Phi3-mini (Yellow):** Starts at approximately 32, rises to about 37 at 4 and 42 at 8 sampled solutions, stays near 43 through 16 sampled solutions, and then rises to approximately 55 at 64 sampled solutions.

**2. MATH**

* **rStar-Qwen2.5-Math-7B (Green):** Starts at approximately 86, increases to 91 at 4 sampled solutions, increases to 94 at 16 sampled solutions, and then increases to approximately 96 at 64 sampled solutions.

* **rStar-Qwen2-Math-7B (Blue):** Starts at approximately 87, increases to 89 at 4 sampled solutions, increases to 92 at 16 sampled solutions, and then increases to approximately 95 at 64 sampled solutions.

* **rStar-Qwen2.5-Math-1.5B (Red):** Starts at approximately 87, increases to 91 at 4 sampled solutions, increases to 94 at 16 sampled solutions, and then increases to approximately 96 at 64 sampled solutions.

* **rStar-Phi3-mini (Yellow):** Starts at approximately 83, increases to 88 at 4 sampled solutions, increases to 91 at 16 sampled solutions, and then increases to approximately 94 at 64 sampled solutions.

**3. Olympiad Bench**

* **rStar-Qwen2.5-Math-7B (Green):** Starts at approximately 62, increases to 67 at 4 sampled solutions, increases to 72 at 16 sampled solutions, and then increases to approximately 77 at 64 sampled solutions.

* **rStar-Qwen2-Math-7B (Blue):** Starts at approximately 62, increases to 68 at 4 sampled solutions, increases to 73 at 16 sampled solutions, and then increases to approximately 76 at 64 sampled solutions.

* **rStar-Qwen2.5-Math-1.5B (Red):** Starts at approximately 62, increases to 68 at 4 sampled solutions, increases to 74 at 16 sampled solutions, and then increases to approximately 77 at 64 sampled solutions.

* **rStar-Phi3-mini (Yellow):** Starts at approximately 58, increases to 62 at 4 sampled solutions, increases to 67 at 16 sampled solutions, and then increases to approximately 74 at 64 sampled solutions.

**4. College Math**

* **rStar-Qwen2.5-Math-7B (Green):** Starts at approximately 58, increases to 63 at 4 sampled solutions, increases to 66 at 16 sampled solutions, and then increases to approximately 69 at 64 sampled solutions.

* **rStar-Qwen2-Math-7B (Blue):** Starts at approximately 58, increases to 63 at 4 sampled solutions, increases to 66 at 16 sampled solutions, and then increases to approximately 68 at 64 sampled solutions.

* **rStar-Qwen2.5-Math-1.5B (Red):** Starts at approximately 58, increases to 63 at 4 sampled solutions, increases to 66 at 16 sampled solutions, and then increases to approximately 68 at 64 sampled solutions.

* **rStar-Phi3-mini (Yellow):** Starts at approximately 57, increases to 61 at 4 sampled solutions, increases to 64 at 16 sampled solutions, and then increases to approximately 67 at 64 sampled solutions.

### Key Observations

* All models generally improve in performance as the number of sampled solutions increases across all datasets.

* The rStar-Qwen2.5-Math-7B model (Green) generally performs the best or close to the best across all datasets.

* The rStar-Phi3-mini model (Yellow) generally performs the worst across all datasets, but still shows improvement with more sampled solutions.

* The performance difference between models is more pronounced on the AIME dataset compared to the other datasets.

### Interpretation

The data suggests that increasing the number of sampled solutions generally improves the performance of language models on math problem-solving tasks. The rStar-Qwen2.5-Math-7B model appears to be the most effective among the models tested, while the rStar-Phi3-mini model is the least effective. AIME appears to be the most challenging dataset: its scores are the lowest of the four charts (roughly 32 to 63), and the gap between models is widest there. The models converge towards similar performance levels on the MATH, Olympiad Bench, and College Math datasets, especially at higher numbers of sampled solutions. This could indicate that these datasets are less sensitive to differences between the models or that the models are approaching a performance ceiling on these tasks.
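The claim that models differ most on AIME can be checked against the approximate readings listed in the Detailed Analysis above. The following is a minimal sketch, not part of the original analysis: the dictionary `scores_at_64` and the helper `model_spread` are illustrative names, and the numbers are the visual estimates quoted above rather than exact chart values.

```python
# Approximate scores at 64 sampled solutions, copied from the readings above.
# Order per benchmark: Qwen2.5-Math-7B, Qwen2-Math-7B, Qwen2.5-Math-1.5B, Phi3-mini.
scores_at_64 = {
    "AIME": [63, 57, 58, 55],
    "MATH": [96, 95, 96, 94],
    "Olympiad Bench": [77, 76, 77, 74],
    "College Math": [69, 68, 68, 67],
}


def model_spread(scores):
    """Gap between the best and worst model on one benchmark."""
    return max(scores) - min(scores)


spreads = {name: model_spread(vals) for name, vals in scores_at_64.items()}
widest = max(spreads, key=spreads.get)

for name, gap in spreads.items():
    print(f"{name}: spread of about {gap} points")
print(f"Widest gap between models: {widest}")
```

With these estimates the AIME spread is about 8 points, against 2 to 3 points on the other three benchmarks, which is consistent with the observation above.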


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Line Chart: Performance of Language Models on Various Benchmarks

### Overview

The image presents four line charts, each depicting the performance of different language models across varying numbers of sampled solutions. The charts compare the performance of rStar-Qwen2.5-Math-7B (grey line), rStar-Qwen2-Math-7B (blue line), rStar-Qwen2.5-Math-1.5B (green line), and rStar-Phi3-mini (orange line) on four distinct benchmarks: AIME, MATH, Olympiad Bench, and College Math. The x-axis represents the number of sampled solutions, ranging from 2 to 64, while the y-axis represents the performance score.

### Components/Axes

* **X-axis Label (all charts):** "#Sampled solutions"

* **Y-axis Label (all charts):** Performance Score (scales vary per chart)

* **Legend (top-right of the entire image):**

* rStar-Qwen2.5-Math-7B (Grey line)

* rStar-Qwen2-Math-7B (Blue line)

* rStar-Qwen2.5-Math-1.5B (Green line)

* rStar-Phi3-mini (Orange line)

* **Chart Titles (top-center of each chart):** AIME, MATH, Olympiad Bench, College Math.

### Detailed Analysis

**AIME Chart:**

* The grey line (rStar-Qwen2.5-Math-7B) shows an upward trend, starting at approximately 34 at 2 sampled solutions and reaching around 62 at 64 sampled solutions.

* The blue line (rStar-Qwen2-Math-7B) begins at approximately 38 at 2 sampled solutions, rises sharply to around 58 at 16 sampled solutions, and plateaus around 62 at 64 sampled solutions.

* The green line (rStar-Qwen2.5-Math-1.5B) starts at around 32 at 2 sampled solutions, increases steadily to approximately 54 at 32 sampled solutions, and reaches around 58 at 64 sampled solutions.

* The orange line (rStar-Phi3-mini) begins at approximately 30 at 2 sampled solutions, rises to around 45 at 16 sampled solutions, and reaches approximately 52 at 64 sampled solutions.

**MATH Chart:**

* The grey line (rStar-Qwen2.5-Math-7B) shows a strong upward trend, starting at approximately 86 at 2 sampled solutions and reaching around 96 at 64 sampled solutions.

* The blue line (rStar-Qwen2-Math-7B) begins at approximately 84 at 2 sampled solutions, rises rapidly to around 94 at 16 sampled solutions, and plateaus around 96 at 64 sampled solutions.

* The green line (rStar-Qwen2.5-Math-1.5B) starts at around 82 at 2 sampled solutions, increases steadily to approximately 92 at 32 sampled solutions, and reaches around 94 at 64 sampled solutions.

* The orange line (rStar-Phi3-mini) begins at approximately 80 at 2 sampled solutions, rises to around 88 at 16 sampled solutions, and reaches approximately 92 at 64 sampled solutions.

**Olympiad Bench Chart:**

* The grey line (rStar-Qwen2.5-Math-7B) shows an upward trend, starting at approximately 58 at 2 sampled solutions and reaching around 74 at 64 sampled solutions.

* The blue line (rStar-Qwen2-Math-7B) begins at approximately 60 at 2 sampled solutions, rises to around 70 at 16 sampled solutions, and plateaus around 72 at 64 sampled solutions.

* The green line (rStar-Qwen2.5-Math-1.5B) starts at around 56 at 2 sampled solutions, increases steadily to approximately 68 at 32 sampled solutions, and reaches around 70 at 64 sampled solutions.

* The orange line (rStar-Phi3-mini) begins at approximately 54 at 2 sampled solutions, rises to around 64 at 16 sampled solutions, and reaches approximately 67 at 64 sampled solutions.

**College Math Chart:**

* The grey line (rStar-Qwen2.5-Math-7B) shows an upward trend, starting at approximately 60 at 2 sampled solutions and reaching around 70 at 64 sampled solutions.

* The blue line (rStar-Qwen2-Math-7B) begins at approximately 58 at 2 sampled solutions, rises to around 66 at 16 sampled solutions, and plateaus around 68 at 64 sampled solutions.

* The green line (rStar-Qwen2.5-Math-1.5B) starts at around 56 at 2 sampled solutions, increases steadily to approximately 65 at 32 sampled solutions, and reaches around 67 at 64 sampled solutions.

* The orange line (rStar-Phi3-mini) begins at approximately 54 at 2 sampled solutions, rises to around 62 at 16 sampled solutions, and reaches approximately 65 at 64 sampled solutions.

### Key Observations

* Across all benchmarks, increasing the number of sampled solutions generally improves performance for all models.

* rStar-Qwen2.5-Math-7B consistently performs the best, followed closely by rStar-Qwen2-Math-7B.

* rStar-Phi3-mini consistently performs the worst across all benchmarks.

* The performance gains from increasing sampled solutions diminish as the number of solutions increases, particularly for rStar-Qwen2-Math-7B.

* The MATH benchmark shows the highest overall performance scores, while AIME shows the lowest.

### Interpretation

The data shows how increasing the number of sampled solutions affects the performance of different language models across several mathematical benchmarks. The consistent lead of rStar-Qwen2.5-Math-7B suggests that model size and architecture play a significant role in problem-solving capability. The diminishing returns observed at higher sample counts point to saturation: beyond a certain number of samples, additional solutions add little. The differences in scores across benchmarks reflect the varying difficulty of the tasks. Taken together, the results suggest that performance depends on both model capacity and the number of sampled solutions, so the two need to be balanced. The consistently lower performance of rStar-Phi3-mini may indicate limitations in its architecture or training data compared to the other models.
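The diminishing-returns observation can be made concrete by measuring the score gain per doubling of the sample count. The sketch below uses only the three approximate readings given above for rStar-Qwen2-Math-7B (at 2, 16, and 64 sampled solutions). The names `blue_readings` and `gain_per_doubling` are illustrative, and normalising by doublings is an assumption made here because the x-axis values are powers of two.

```python
import math

# (sampled solutions, approximate score) for rStar-Qwen2-Math-7B,
# taken from the per-chart descriptions above.
blue_readings = {
    "AIME": [(2, 38), (16, 58), (64, 62)],
    "MATH": [(2, 84), (16, 94), (64, 96)],
    "Olympiad Bench": [(2, 60), (16, 70), (64, 72)],
    "College Math": [(2, 58), (16, 66), (64, 68)],
}


def gain_per_doubling(first, second):
    """Average score gain for each doubling of the sample count."""
    (n0, s0), (n1, s1) = first, second
    doublings = math.log2(n1 / n0)
    return (s1 - s0) / doublings


gains = {}
for benchmark, points in blue_readings.items():
    early = gain_per_doubling(points[0], points[1])  # 2 -> 16 samples
    late = gain_per_doubling(points[1], points[2])   # 16 -> 64 samples
    gains[benchmark] = (early, late)
    print(f"{benchmark}: {early:.2f} per doubling early, {late:.2f} late")
```

On every benchmark the early gain per doubling exceeds the late gain under these estimates, which matches the plateau described for this model.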


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Charts (Subplots): Performance of Four Models Across Mathematics Benchmarks with Increasing Sampled Solutions

### Overview

The image contains four horizontally arranged line charts (subplots), each representing a distinct mathematics benchmark: **AIME**, **MATH**, **Olympiad Bench**, and **College Math**. Each chart plots the performance (y-axis) of four models against the number of sampled solutions (x-axis, values: 2, 4, 8, 16, 32, 64). Models are distinguished by color:

- Green: *rStar-Qwen2.5-Math-7B*

- Red: *rStar-Qwen2.5-Math-1.5B*

- Blue: *rStar-Qwen2-Math-7B*

- Yellow: *rStar-Phi3-mini*

### Components/Axes

- **Legend**: Positioned at the top, listing four models with corresponding colors (green, red, blue, yellow).

- **X-axis (all charts)**: Labeled *“#Sampled solutions”* with tick marks at 2, 4, 8, 16, 32, 64.

- **Y-axis (per chart)**:

- *AIME*: Range ~30–60 (performance metric, e.g., accuracy).

- *MATH*: Range ~85–95.

- *Olympiad Bench*: Range ~60–75.

- *College Math*: Range ~60–70.

### Detailed Analysis (Per Chart)

#### 1. AIME Chart

- **Trend**: All models show increasing performance with more sampled solutions.

- **Data Points (approximate)**:

- Green (*rStar-Qwen2.5-Math-7B*): ~43 (2), ~50 (4), ~50 (8), ~50 (16), ~57 (32), ~62 (64).

- Red (*rStar-Qwen2.5-Math-1.5B*): ~37 (2), ~43 (4), ~47 (8), ~50 (16), ~53 (32), ~58 (64).

- Blue (*rStar-Qwen2-Math-7B*): ~40 (2), ~47 (4), ~50 (8), ~50 (16), ~57 (32), ~57 (64).

- Yellow (*rStar-Phi3-mini*): ~30 (2), ~37 (4), ~43 (8), ~43 (16), ~53 (32), ~60 (64).
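The AIME data points above can be turned into step-by-step changes to see where each curve rises and where it stays flat. This is a minimal sketch built only on the approximate values listed above. The names `aime_readings` and `step_changes` are illustrative and do not come from the source figure.

```python
# Approximate AIME readings at 2, 4, 8, 16, 32, 64 sampled solutions,
# copied from the list above (visual estimates, not exact values).
aime_readings = {
    "rStar-Qwen2.5-Math-7B": [43, 50, 50, 50, 57, 62],
    "rStar-Qwen2.5-Math-1.5B": [37, 43, 47, 50, 53, 58],
    "rStar-Qwen2-Math-7B": [40, 47, 50, 50, 57, 57],
    "rStar-Phi3-mini": [30, 37, 43, 43, 53, 60],
}


def step_changes(series):
    """Score change between each pair of consecutive sample counts."""
    return [b - a for a, b in zip(series, series[1:])]


aime_steps = {model: step_changes(vals) for model, vals in aime_readings.items()}

for model, steps in aime_steps.items():
    flat = sum(1 for s in steps if s == 0)
    print(f"{model}: steps={steps}, flat segments={flat}")
```

No step is negative in these estimates, so every curve is non-decreasing, but several curves include flat segments rather than rising at every sample count.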

#### 2. MATH Chart

- **Trend**: All models show increasing performance, converging at higher sampled solutions.

- **Data Points (approximate)**:

- Green: ~86 (2), ~90 (4), ~92 (8), ~93 (16), ~94 (32), ~95 (64).

- Red: ~86 (2), ~88 (4), ~91 (8), ~93 (16), ~94 (32), ~95 (64).

- Blue: ~86 (2), ~89 (4), ~91 (8), ~93 (16), ~94 (32), ~95 (64).

- Yellow: ~83 (2), ~87 (4), ~89 (8), ~91 (16), ~93 (32), ~94 (64).

#### 3. Olympiad Bench Chart

- **Trend**: All models show increasing performance, with green, red, blue converging at higher sampled solutions.

- **Data Points (approximate)**:

- Green: ~62 (2), ~66 (4), ~70 (8), ~73 (16), ~74 (32), ~75 (64).

- Red: ~63 (2), ~67 (4), ~70 (8), ~73 (16), ~74 (32), ~75 (64).

- Blue: ~62 (2), ~66 (4), ~70 (8), ~73 (16), ~74 (32), ~75 (64).

- Yellow: ~58 (2), ~62 (4), ~66 (8), ~70 (16), ~72 (32), ~73 (64).

#### 4. College Math Chart

- **Trend**: All models show increasing performance, with yellow (*rStar-Phi3-mini*) slightly outperforming others at 64 sampled solutions.

- **Data Points (approximate)**:

- Green: ~58 (2), ~61 (4), ~63 (8), ~65 (16), ~67 (32), ~69 (64).

- Red: ~59 (2), ~61 (4), ~63 (8), ~65 (16), ~67 (32), ~68 (64).

- Blue: ~59 (2), ~61 (4), ~63 (8), ~65 (16), ~67 (32), ~69 (64).

- Yellow: ~59 (2), ~61 (4), ~63 (8), ~65 (16), ~67 (32), ~70 (64).

### Key Observations

- **Performance Trend**: All models improve with more sampled solutions across all benchmarks, indicating that increasing sampled solutions enhances performance.

- **Model Comparison**:

- In *AIME*, *rStar-Phi3-mini* (yellow) starts lowest but rises to match/exceed others at 64.

- In *MATH*, all models converge to similar high performance at 64.

- In *Olympiad Bench*, green, red, blue converge, while yellow lags slightly.

- In *College Math*, yellow (*rStar-Phi3-mini*) slightly outperforms others at 64.

- **Consistency**: Green (*rStar-Qwen2.5-Math-7B*) and red (*rStar-Qwen2.5-Math-1.5B*) often produce nearly identical readings, suggesting that the 1.5B variant gives up little relative to the 7B variant on these benchmarks.

### Interpretation

The charts demonstrate that increasing sampled solutions (from 2 to 64) consistently improves performance across all models and benchmarks. This suggests that sampling more solutions (e.g., in reasoning/generation tasks) enhances outcomes, likely due to increased diversity/quality of solutions. Convergence at higher sampled solutions (e.g., *MATH*, *Olympiad Bench*) implies diminishing returns or a performance ceiling. The slight outperformance of *rStar-Phi3-mini* in *College Math* at 64 may indicate its strength in that benchmark, while *rStar-Qwen2.5-Math* variants show strong cross-benchmark performance. This data informs how model size (7B vs. 1.5B) and architecture (Qwen2.5-Math vs. Phi3-mini) interact with sampling strategies in mathematical reasoning tasks.

(Note: All values are approximate, based on visual estimation of the charts.)
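The convergence described for *MATH* and *Olympiad Bench* can be checked by computing the gap between the best and worst model at each sample count. The sketch below uses the approximate data points listed in the Detailed Analysis. The variable and function names are illustrative, and the result is only as reliable as the visual estimates it is built on.

```python
sample_counts = [2, 4, 8, 16, 32, 64]

# One row per model (green, red, blue, yellow), one column per sample count.
math_readings = [
    [86, 90, 92, 93, 94, 95],
    [86, 88, 91, 93, 94, 95],
    [86, 89, 91, 93, 94, 95],
    [83, 87, 89, 91, 93, 94],
]
olympiad_readings = [
    [62, 66, 70, 73, 74, 75],
    [63, 67, 70, 73, 74, 75],
    [62, 66, 70, 73, 74, 75],
    [58, 62, 66, 70, 72, 73],
]


def spread_by_sample_count(curves):
    """Best-minus-worst score across models at each sample count."""
    return [max(column) - min(column) for column in zip(*curves)]


math_spread = spread_by_sample_count(math_readings)
olympiad_spread = spread_by_sample_count(olympiad_readings)

for n, m, o in zip(sample_counts, math_spread, olympiad_spread):
    print(f"{n:>2} samples: MATH spread {m}, Olympiad Bench spread {o}")
```

In both benchmarks the spread shrinks as the sample count grows, which is what convergence at higher sample counts would look like in these estimates.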


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graphs: Model Performance Across Benchmarks

### Overview

The image contains four line graphs comparing the performance of four AI models (rStar-Qwen2.5-Math-7B, rStar-Qwen2.5-Math-1.5B, rStar-Qwen2-Math-7B, and rStar-Phi3-mini) across four benchmarks: AIME, MATH, Olympiad Bench, and College Math. Each graph plots performance metrics (y-axis) against the number of sampled solutions (x-axis: 2, 4, 8, 16, 32, 64). The legend is positioned at the top, with colors mapped to models.

### Components/Axes

- **X-axis**: "#Sampled solutions" (values: 2, 4, 8, 16, 32, 64) across all graphs.

- **Y-axes**:

- AIME: 30–60

- MATH: 85–95

- Olympiad Bench: 60–75

- College Math: 55–70

- **Legend**: Top of the image, mapping colors to models:

- Green: rStar-Qwen2.5-Math-7B

- Red: rStar-Qwen2.5-Math-1.5B

- Blue: rStar-Qwen2-Math-7B

- Yellow: rStar-Phi3-mini

### Detailed Analysis

#### AIME

- **Green (rStar-Qwen2.5-Math-7B)**: Starts at ~40 (2 samples), rises to ~60 (64 samples).

- **Red (rStar-Qwen2.5-Math-1.5B)**: Starts at ~35, peaks at ~60.

- **Blue (rStar-Qwen2-Math-7B)**: Starts at ~40, plateaus at ~58.

- **Yellow (rStar-Phi3-mini)**: Starts at ~30, rises to ~60.

#### MATH

- **Green (rStar-Qwen2.5-Math-7B)**: Starts at ~85, peaks at ~95.

- **Red (rStar-Qwen2.5-Math-1.5B)**: Starts at ~80, peaks at ~95.

- **Blue (rStar-Qwen2-Math-7B)**: Starts at ~85, peaks at ~93.

- **Yellow (rStar-Phi3-mini)**: Starts at ~80, peaks at ~92.

#### Olympiad Bench

- **Green (rStar-Qwen2.5-Math-7B)**: Starts at ~60, peaks at ~75.

- **Red (rStar-Qwen2.5-Math-1.5B)**: Starts at ~65, peaks at ~75.

- **Blue (rStar-Qwen2-Math-7B)**: Starts at ~60, peaks at ~74.

- **Yellow (rStar-Phi3-mini)**: Starts at ~55, peaks at ~70.

#### College Math

- **Green (rStar-Qwen2.5-Math-7B)**: Starts at ~55, peaks at ~70.

- **Red (rStar-Qwen2.5-Math-1.5B)**: Starts at ~58, peaks at ~70.

- **Blue (rStar-Qwen2-Math-7B)**: Starts at ~55, peaks at ~69.

- **Yellow (rStar-Phi3-mini)**: Starts at ~50, peaks at ~70.

### Key Observations

1. **Performance Trends**: All models improve with more sampled solutions, but the rate of improvement varies.

2. **Model Size Impact**:

- 7B models (green/blue) generally outperform smaller models (red/yellow) in MATH and Olympiad Bench.

- Phi3-mini (yellow) shows the steepest improvement in AIME and College Math.

3. **Benchmark-Specific Patterns**:

- **MATH**: 7B models achieve near-peak performance early (e.g., ~95 by 16 samples).

- **Olympiad Bench**: Phi3-mini closes the gap with larger models by 64 samples.

- **College Math**: All models converge to similar performance (~65–70) at 64 samples.
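The observation that Phi3-mini improves most steeply on AIME and College Math follows directly from the start and peak readings in the Detailed Analysis. The sketch below recomputes it. The structure `start_peak` and the helper `largest_gain` are illustrative names, and the values are the approximate readings quoted above.

```python
# (start, peak) readings per model, approximate, from the Detailed Analysis.
start_peak = {
    "AIME": {
        "rStar-Qwen2.5-Math-7B": (40, 60),
        "rStar-Qwen2.5-Math-1.5B": (35, 60),
        "rStar-Qwen2-Math-7B": (40, 58),
        "rStar-Phi3-mini": (30, 60),
    },
    "College Math": {
        "rStar-Qwen2.5-Math-7B": (55, 70),
        "rStar-Qwen2.5-Math-1.5B": (58, 70),
        "rStar-Qwen2-Math-7B": (55, 69),
        "rStar-Phi3-mini": (50, 70),
    },
}


def largest_gain(models):
    """Return the model with the biggest start-to-peak gain and that gain."""
    gains = {name: peak - start for name, (start, peak) in models.items()}
    best = max(gains, key=gains.get)
    return best, gains[best]


steepest = {bench: largest_gain(models) for bench, models in start_peak.items()}
for bench, (model, gain) in steepest.items():
    print(f"{bench}: largest gain is {model} (+{gain} points)")
```

With these readings Phi3-mini gains about 30 points on AIME and about 20 on College Math, more than any other model in either chart.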

### Interpretation

The data suggests that the larger 7B models hold an edge on benchmarks such as MATH. The smaller models (1.5B and Phi3-mini) need more samples to reach comparable scores, but they keep improving as the sample count grows. The Olympiad Bench and College Math charts highlight the value of solution diversity, as Phi3-mini improves substantially with more samples. Model size and sampling strategy therefore appear to be interdependent factors when optimizing performance across tasks.
