
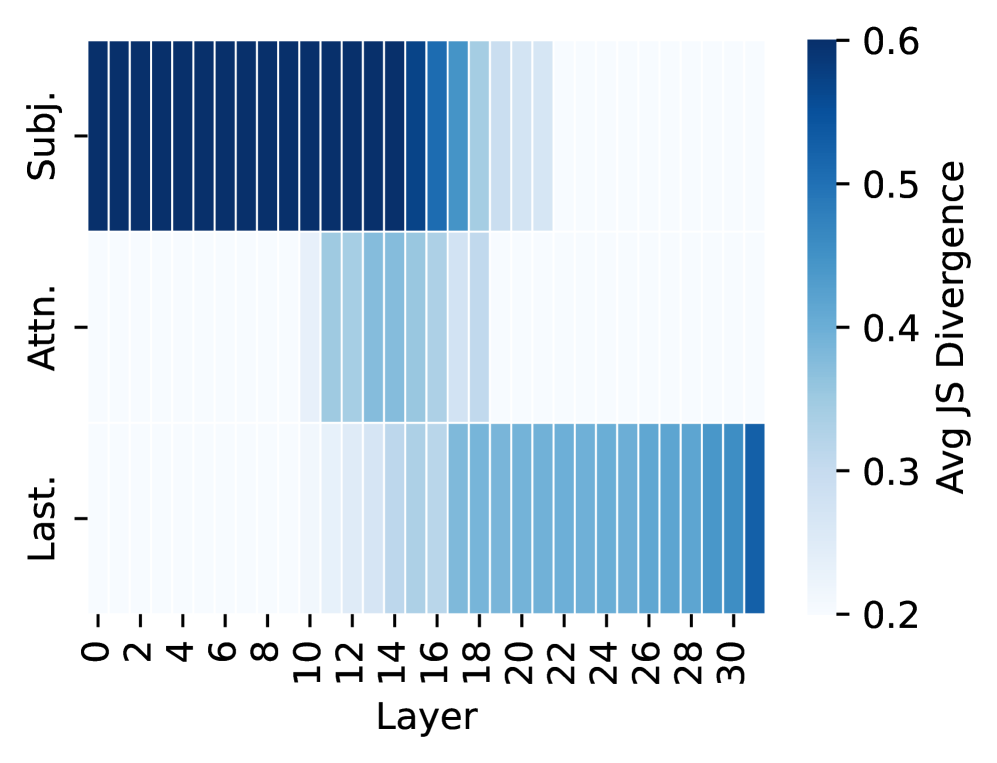

## Heatmap: Average Jensen-Shannon Divergence Across Model Layers and Components

### Overview

The image is a heatmap visualizing the "Avg JS Divergence" (Average Jensen-Shannon Divergence) across different layers of a model (likely a neural network) for three distinct components or metrics. The divergence is represented by a color gradient, with darker blues indicating higher divergence values.

### Components/Axes

* **X-Axis (Horizontal):** Labeled **"Layer"**. It represents model layers, numbered from **0 to 30** in increments of 2 (0, 2, 4, ..., 30).

* **Y-Axis (Vertical):** Lists three categorical components:

    1. **Subj.** (Top row)

    2. **Attn.** (Middle row)

    3. **Last.** (Bottom row)

* **Color Scale/Legend:** Positioned on the **right side** of the chart. It is a vertical color bar labeled **"Avg JS Divergence"**.

    * The scale ranges from **0.2** (lightest blue/white) to **0.6** (darkest blue).

    * Intermediate marked values are **0.3, 0.4, and 0.5**.

### Detailed Analysis

The heatmap displays a 3x16 grid of colored cells (3 rows for components, 16 columns for the even-numbered layers 0-30). The color intensity in each cell corresponds to the Avg JS Divergence value for that specific component at that layer.

**Trend Verification & Data Point Extraction:**

1. **Row: "Subj." (Top)**

    * **Visual Trend:** Starts with very high divergence through the first half of the layers (0-14), then decreases sharply in the later layers.

    * **Data Points (Approximate):**

        * Layers 0-14: Consistently very dark blue, indicating divergence values at or near the maximum of **~0.6**.

        * Layer 16: Color lightens noticeably to a medium blue, **~0.45**.

        * Layers 18-22: Continues to lighten, reaching values around **~0.3**.

        * Layers 24-30: Becomes very light blue/white, indicating low divergence values of **~0.2 to 0.25**.

2. **Row: "Attn." (Middle)**

    * **Visual Trend:** Shows generally low divergence across all layers, with a subtle, localized increase in the middle layers.

    * **Data Points (Approximate):**

        * Layers 0-8: Very light blue/white, divergence **~0.2**.

        * Layers 10-18: A band of light-to-medium blue appears, peaking around layers 12-16 at **~0.3 to 0.35**.

        * Layers 20-30: Returns to very light blue, divergence **~0.2**.

3. **Row: "Last." (Bottom)**

    * **Visual Trend:** Shows the inverse pattern of "Subj.": divergence stays very low through layer 14 and increases steadily from layer 16 onward.

    * **Data Points (Approximate):**

        * Layers 0-14: Very light blue/white, divergence **~0.2**.

        * Layer 16: Begins to darken to a light blue, **~0.25**.

        * Layers 18-24: Progressively darkens, reaching values of **~0.35 to 0.4**.

        * Layers 26-30: Becomes a solid medium blue, indicating divergence values of **~0.45 to 0.5**.

### Key Observations

* **Inverse Relationship:** There is a clear inverse relationship between the "Subj." and "Last." components across the model depth. High early-layer divergence in "Subj." corresponds to low divergence in "Last.", and vice-versa in later layers.

* **"Attn." Stability:** The "Attn." component maintains a relatively low and stable divergence profile, with only a minor, transient increase in the middle layers (10-18).

* **Layer Transition Zone:** Layers 14-18 appear to be a critical transition zone where the divergence profiles for "Subj." and "Last." begin their significant shifts.
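The transition zone can be sanity-checked against the approximate readings listed under Detailed Analysis. The sketch below is only an illustration: the values are visual estimates, not measured data. Where the description gives a range (for example ~0.2 to 0.25), the midpoint is used, which is an assumption. The helper `expand` and the variable names are hypothetical.

```python
def expand(segments):
    """Expand (first_layer, last_layer, value) segments into {layer: value}
    for the even-numbered layers shown in the heatmap."""
    return {
        layer: value
        for first, last, value in segments
        for layer in range(first, last + 1, 2)
    }

# Approximate readings from the description; range midpoints are an assumption.
subj = expand([(0, 14, 0.60), (16, 16, 0.45), (18, 22, 0.30), (24, 30, 0.225)])
last = expand([(0, 14, 0.20), (16, 16, 0.25), (18, 24, 0.375), (26, 30, 0.475)])

layers = sorted(subj)

# First layer where each row leaves its early-layer value.
subj_departs = next(l for l in layers if subj[l] < subj[0])
last_departs = next(l for l in layers if last[l] > last[0])

# First layer where "Last." exceeds "Subj.".
crossover = next(l for l in layers if last[l] > subj[l])

print(subj_departs, last_departs, crossover)
```

With these estimated readings, both rows first leave their early-layer value at layer 16, and "Last." overtakes "Subj." at layer 18, which falls inside the 14-18 window described above.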

### Interpretation

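This heatmap likely analyzes the internal dynamics of a deep learning model, such as a Transformer. Jensen-Shannon divergence is a symmetric, bounded measure of the difference between two probability distributions; it is zero when the distributions are identical and grows as they differ.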
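For reference, a minimal sketch of Jensen-Shannon divergence for two discrete distributions follows. It uses base-2 logarithms, so the result lies between 0 and 1; the logarithm base and the distributions actually compared in the figure are not stated in the source, so this choice is an assumption. The function names are illustrative.

```python
import math

def kl_divergence(p, q):
    """KL(p || q) in bits; terms where p_i == 0 contribute nothing."""
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def js_divergence(p, q):
    """Jensen-Shannon divergence: the average KL divergence of p and q
    from their midpoint mixture m = (p + q) / 2."""
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]
    return 0.5 * kl_divergence(p, m) + 0.5 * kl_divergence(q, m)

identical = js_divergence([0.5, 0.5], [0.5, 0.5])  # same distribution
disjoint = js_divergence([1.0, 0.0], [0.0, 1.0])   # no overlapping support
print(identical, disjoint)
```

Because the mixture `m` is nonzero wherever `p` or `q` is nonzero, the divergence stays finite even when the two distributions have disjoint support, unlike plain KL divergence.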

* **What the data suggests:** The "Subj." component (possibly related to subject representation or early feature extraction) shows high divergence in the first half of the layers, which falls to near the bottom of the scale in deeper layers. Conversely, the "Last." component (potentially the final layer output or a high-level representation) starts with low divergence and becomes increasingly divergent in deeper layers. Low divergence means only that the compared distributions are similar; it does not imply that they are uniform.

* **How elements relate:** The model's processing appears to follow a pattern where early layers handle diverse, low-level features ("Subj."), while later layers consolidate this information into more specific, high-level representations ("Last."). The "Attn." component (possibly the attention mechanism) stays near the bottom of the scale throughout, with only a modest mid-layer rise, suggesting its role is more about modulating information flow than creating highly divergent representations itself.

* **Notable pattern:** The most striking finding is the clean, complementary hand-off of divergence from the "Subj." to the "Last." component as data flows through the network layers. This could indicate a successful hierarchical feature learning process.