## Heatmap: Average JS Divergence Across Layers and Categories

### Overview

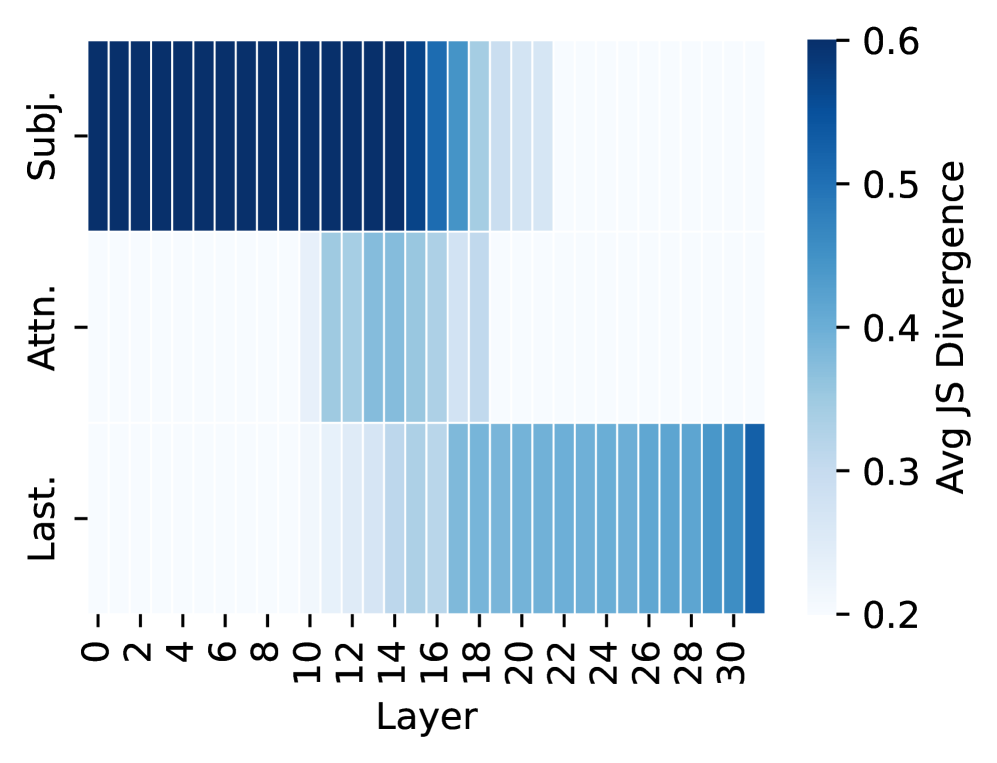

The heatmap shows the average Jensen-Shannon (JS) divergence for three categories ("Subj.", "Attn.", "Last.") across 31 layers (0–30). Color intensity encodes divergence magnitude: darker blue indicates higher values (up to 0.6) and lighter blue indicates lower values (down to 0.2).
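For reference, the JS divergence between two discrete distributions P and Q is the average of their KL divergences to the mixture M = (P + Q) / 2. The sketch below is a minimal pure-Python version. The figure does not state the logarithm base or what the average is taken over, so the natural log and the function names here are assumptions.

```python
import math

def kl_divergence(p, q):
    """KL(p || q) in nats; terms with p_i == 0 contribute nothing."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def js_divergence(p, q):
    """Jensen-Shannon divergence between two discrete distributions (natural log)."""
    if len(p) != len(q):
        raise ValueError("distributions must have the same length")
    # Mixture distribution; m_i > 0 whenever p_i > 0 or q_i > 0.
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]
    return 0.5 * kl_divergence(p, m) + 0.5 * kl_divergence(q, m)

def average_js_divergence(pairs):
    """Mean JS divergence over a non-empty list of (p, q) pairs (assumed averaging scheme)."""
    values = [js_divergence(p, q) for p, q in pairs]
    return sum(values) / len(values)
```

With the natural log, JS divergence is bounded above by ln 2 (about 0.693); with log base 2 the bound is 1. A color scale topping out at 0.6 is compatible with either convention.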

### Components/Axes

- **Y-Axis (Categories)**:
  - "Subj." (Subject)
  - "Attn." (Attention)
  - "Last." (Last)
- **X-Axis (Layers)**:
  - Layer indices from 0 to 30, with tick labels in increments of 2.
- **Color Bar (Legend)**:
  - Label: "Avg JS Divergence"
  - Scale: 0.2 (lightest blue) to 0.6 (darkest blue).

### Detailed Analysis

- **Subj. (Subject)**:
  - Layers 0–14: Dark blue (0.5–0.6 divergence).
  - Layers 16–18: Medium blue (0.4–0.5).
  - Layers 20–30: Light blue (0.2–0.3).
- **Attn. (Attention)**:
  - Layers 12–18: Medium blue (0.4–0.5).
  - Layers 20–30: Light blue (0.2–0.3).
- **Last. (Last)**:
  - Layers 20–30: Medium to dark blue (0.3–0.6).
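The ranges listed above can be transcribed into a small lookup table, which also makes it easy to see which cells the description leaves out. This is only a sketch: the names `DESCRIBED_RANGES`, `described_range`, and `undescribed_layers` are hypothetical, and the values are the approximate color-band readings from this section, not the underlying data.

```python
# Ranges transcribed from the description: (first_layer, last_layer, low, high).
# Layer spans the description does not cover are deliberately left out.
DESCRIBED_RANGES = {
    "Subj.": [(0, 14, 0.5, 0.6), (16, 18, 0.4, 0.5), (20, 30, 0.2, 0.3)],
    "Attn.": [(12, 18, 0.4, 0.5), (20, 30, 0.2, 0.3)],
    "Last.": [(20, 30, 0.3, 0.6)],
}

def described_range(category, layer):
    """Return the (low, high) band described for a cell, or None if none is given."""
    for first, last, low, high in DESCRIBED_RANGES[category]:
        if first <= layer <= last:
            return (low, high)
    return None

def undescribed_layers(category, layers=range(0, 31, 2)):
    """List the tick-labeled layers that have no described value for a category."""
    return [layer for layer in layers if described_range(category, layer) is None]
```

Calling `undescribed_layers("Attn.")` returns layers 0 through 10, and `undescribed_layers("Last.")` returns layers 0 through 18: the description gives no values for those cells, so they should be read from the figure itself.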

### Key Observations

1. **Subj.** shows its highest divergence (0.5–0.6) in early layers (0–14), falls to 0.4–0.5 in layers 16–18, and reaches 0.2–0.3 from layer 20 onward.

2. **Attn.** peaks in mid-layers (12–18) at 0.4–0.5, then declines to 0.2–0.3 from layer 20 onward.

3. **Last.** exhibits increasing divergence from layer 20 onward, reaching the highest values (0.5–0.6) in later layers.

4. The color gradient aligns with the legend: darker blues correspond to higher divergence values.

### Interpretation

The heatmap suggests a dynamic shift in divergence patterns across layers:

- **Early layers (0–14)**: "Subj." dominates with high divergence (0.5–0.6), possibly indicating an initial focus on subject-specific features.

- **Mid-layers (12–18)**: "Attn." reaches its peak (0.4–0.5), suggesting that attention mechanisms engage most strongly in this phase. Note that this band overlaps the end of the early-layer band.

- **Later layers (20–30)**: "Last." dominates, with divergence rising as high as 0.5–0.6, potentially reflecting final processing or output generation.

Taken together, the pattern is consistent with a staged computation: subject-related divergence is concentrated in early layers, attention-related divergence in mid-layers, and divergence at the last position in the final layers, possibly due to decision-making or output refinement.