## Diagram: Mask Entity Prediction Pipeline

### Overview

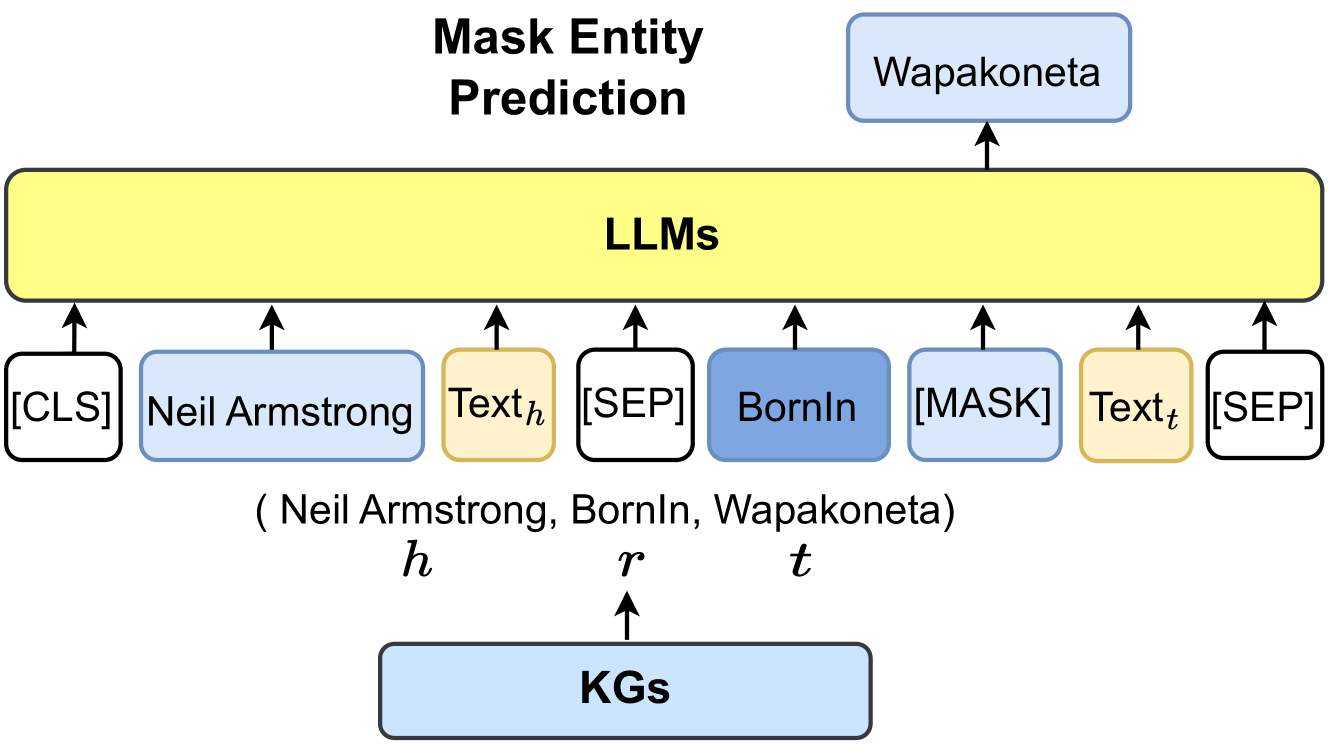

The diagram illustrates a masked entity prediction pipeline that combines Large Language Models (LLMs) with Knowledge Graphs (KGs). Input text containing a masked entity is passed through an LLM, and a KG is then used to help resolve the masked entity.

### Components

1. **Input Sequence**:

- `[CLS]`: Special classification token placed at the start of the sequence

- `Neil Armstrong`: Name of the head entity

- `Text_h`: Text associated with the head entity (`h`), placed before the first `[SEP]`

- `[SEP]`: Separator token

- `BornIn`: Relation type (property)

- `[MASK]`: Masked token to be predicted

- `Text_t`: Text associated with the tail entity (`t`), placed after the masked token

- `[SEP]`: Final separator token, marking the end of the sequence

2. **Processing**:

- `LLMs`: Large Language Models block (yellow)

- `KGs`: Knowledge Graphs block (blue)

3. **Output**:

- `Wapakoneta`: Predicted entity name

- Arrows indicate flow from input → LLM → KG → final prediction

4. **Variables**:

- `h`: Head entity (Neil Armstrong)

- `r`: Relation (BornIn)

- `t`: Tail entity (Wapakoneta)
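The input layout listed above can be sketched as a small Python function that turns a triple `(h, r, t)` into the masked sequence, hiding the tail entity behind `[MASK]` so it can serve as the prediction target. This is a minimal sketch under stated assumptions: the names `Triple` and `build_masked_input` are hypothetical, the segment order simply follows the diagram, and each segment is kept whole rather than split into the subword tokens a real model's tokenizer would produce.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Triple:
    """A knowledge triple (h, r, t). Hypothetical helper type for illustration."""
    head: str      # h, e.g. "Neil Armstrong"
    relation: str  # r, e.g. "BornIn"
    tail: str      # t, e.g. "Wapakoneta"


def build_masked_input(triple: Triple, text_h: str, text_t: str) -> list[str]:
    """Lay out the segments in the order shown in the diagram.

    The tail entity is replaced by [MASK]; triple.tail is deliberately left
    out of the sequence so it can be used as the prediction target.
    Segments are not tokenized into subwords here (simplifying assumption).
    """
    return [
        "[CLS]",
        triple.head,
        text_h,
        "[SEP]",
        triple.relation,
        "[MASK]",
        text_t,
        "[SEP]",
    ]


triple = Triple("Neil Armstrong", "BornIn", "Wapakoneta")
sequence = build_masked_input(triple, "Text_h", "Text_t")
mask_position = sequence.index("[MASK]")
print(" ".join(sequence))
print("target:", triple.tail, "at position", mask_position)
```

Keeping the tail out of the sequence mirrors the fill-in-the-blank setup: the model sees `h`, `r`, and the surrounding text, and is scored on recovering `t` at the `[MASK]` position.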

### Detailed Analysis

- **Input Structure**: The input follows the BERT-style format used by encoder transformers, delimited by `[CLS]` and `[SEP]` tokens. The masked token `[MASK]` is positioned between the relation `BornIn` and the tail-side text `Text_t`.

- **LLM Processing**: The LLM block processes the entire sequence, including the masked token, to predict the missing entity.

- **KG Integration**: The predicted entity (`Wapakoneta`) is linked to the KG, suggesting post-processing validation or enrichment using KG data.
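The LLM processing step can be illustrated by how a masked language model picks a filler: it produces a score (logit) for each candidate at the `[MASK]` position, normalizes the scores with a softmax, and takes the most probable one. The sketch below assumes each candidate entity is a single unit (real models usually score subword tokens, and a name like "Wapakoneta" may span several), and the logit values are hypothetical, not output from any real model.

```python
import math


def softmax(logits: dict[str, float]) -> dict[str, float]:
    """Numerically stable softmax over a set of candidate fillers."""
    peak = max(logits.values())  # subtract the max to avoid overflow in exp
    exps = {cand: math.exp(score - peak) for cand, score in logits.items()}
    total = sum(exps.values())
    return {cand: e / total for cand, e in exps.items()}


def predict_mask(logits: dict[str, float]) -> tuple[str, float]:
    """Pick the most probable filler for [MASK] and return it with its probability."""
    probs = softmax(logits)
    best = max(probs, key=probs.get)
    return best, probs[best]


# Hypothetical scores at the [MASK] position; illustrative values only.
mask_logits = {"Wapakoneta": 4.0, "Cleveland": 2.5, "Houston": 1.0}
prediction, confidence = predict_mask(mask_logits)
print(f"[MASK] -> {prediction} (p = {confidence:.3f})")
```

The probability returned alongside the prediction is what a downstream KG step could use to decide how much to trust the LLM's answer.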

### Key Observations

1. The pipeline explicitly models the task of completing a knowledge triple `(h, r, t)` where `h = Neil Armstrong`, `r = BornIn`, and `t = Wapakoneta`.

2. The use of `[MASK]` indicates a fill-in-the-blank prediction task common in masked language modeling (MLM).

3. The KG block acts as a downstream component, likely used to verify or enhance the LLM's prediction.
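One way the KG could act as a downstream verifier is to check whether the completed triple `(h, r, t)` exists in the graph and fall back to a KG fact when it does not. The sketch below models the KG as a plain set of triples; the toy `KG` contents, the `resolve` function, and its three status labels are hypothetical choices for illustration, not a mechanism confirmed by the diagram.

```python
# A triple is (h, r, t); the KG is modeled as a plain set of triples.
Triple = tuple[str, str, str]

# Hypothetical toy KG holding only the fact shown in the diagram.
KG: set[Triple] = {("Neil Armstrong", "BornIn", "Wapakoneta")}


def known_tails(head: str, relation: str, kg: set[Triple]) -> list[str]:
    """All tails the KG records for (head, relation), sorted for determinism."""
    return sorted(t for h, r, t in kg if h == head and r == relation)


def resolve(head: str, relation: str, llm_guess: str, kg: set[Triple]) -> tuple[str, str]:
    """Use the KG as a downstream check on the LLM's filler for [MASK].

    "verified":   the KG contains (head, relation, llm_guess)
    "corrected":  the KG disagrees, so its first recorded tail is returned
    "unverified": the KG has no fact for (head, relation); keep the LLM guess
    """
    if (head, relation, llm_guess) in kg:
        return llm_guess, "verified"
    tails = known_tails(head, relation, kg)
    if tails:
        return tails[0], "corrected"
    return llm_guess, "unverified"


print(resolve("Neil Armstrong", "BornIn", "Wapakoneta", KG))
print(resolve("Neil Armstrong", "BornIn", "Cleveland", KG))
```

The "unverified" branch matters in practice because KGs are incomplete: when the graph has no fact for `(h, r)`, the LLM prediction is the only evidence available.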

### Interpretation

This diagram represents a hybrid NLP-KG system where:

- LLMs generate entity predictions through contextual understanding

- KGs provide structured knowledge to resolve ambiguous or complex entities

- The pipeline mirrors knowledge graph completion (link prediction), where a missing tail entity is inferred from a known head entity and relation

The architecture suggests that, while LLMs can predict entities from textual context alone, integrating KGs can improve accuracy by drawing on structured world knowledge. For example, the system might arrive at "Wapakoneta" as Neil Armstrong's birthplace by combining textual patterns learned by the LLM with KG facts about his biography.
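The idea of combining LLM predictions with KG facts can be made concrete with a simple re-ranking rule: interpolate each candidate's LLM probability with a binary signal for whether the KG supports the triple. Linear interpolation is a swapped-in fusion technique chosen for clarity, not the method shown in the diagram; the function `rerank`, the weight `lam`, and the LLM probabilities below are all hypothetical.

```python
def rerank(
    llm_probs: dict[str, float],
    head: str,
    relation: str,
    kg: set[tuple[str, str, str]],
    lam: float = 0.5,
) -> list[tuple[str, float]]:
    """Score each candidate c as (1 - lam) * P_LLM(c) + lam * 1[(h, r, c) in KG].

    lam = 0 trusts the LLM alone; lam = 1 trusts the KG alone.
    """
    if not 0.0 <= lam <= 1.0:
        raise ValueError("lam must lie in [0, 1]")
    scored = [
        (cand, (1.0 - lam) * p + lam * (1.0 if (head, relation, cand) in kg else 0.0))
        for cand, p in llm_probs.items()
    ]
    # Highest combined score first.
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


kg = {("Neil Armstrong", "BornIn", "Wapakoneta")}
# Hypothetical LLM distribution in which textual patterns favor the wrong city.
llm_probs = {"Cleveland": 0.6, "Wapakoneta": 0.3, "Houston": 0.1}

print(rerank(llm_probs, "Neil Armstrong", "BornIn", kg, lam=0.0)[0][0])
print(rerank(llm_probs, "Neil Armstrong", "BornIn", kg, lam=0.5)[0][0])
```

With `lam=0.0` the ranking follows the LLM alone and "Cleveland" comes first; with `lam=0.5` the KG-supported candidate scores 0.5 × 0.3 + 0.5 = 0.65 versus 0.3 for "Cleveland", so "Wapakoneta" moves to the top. Choosing `lam` trades off trust in textual context against trust in the (possibly incomplete) KG.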