## Line Chart: Phi-3-mini-4k-Chat Training Loss

### Overview

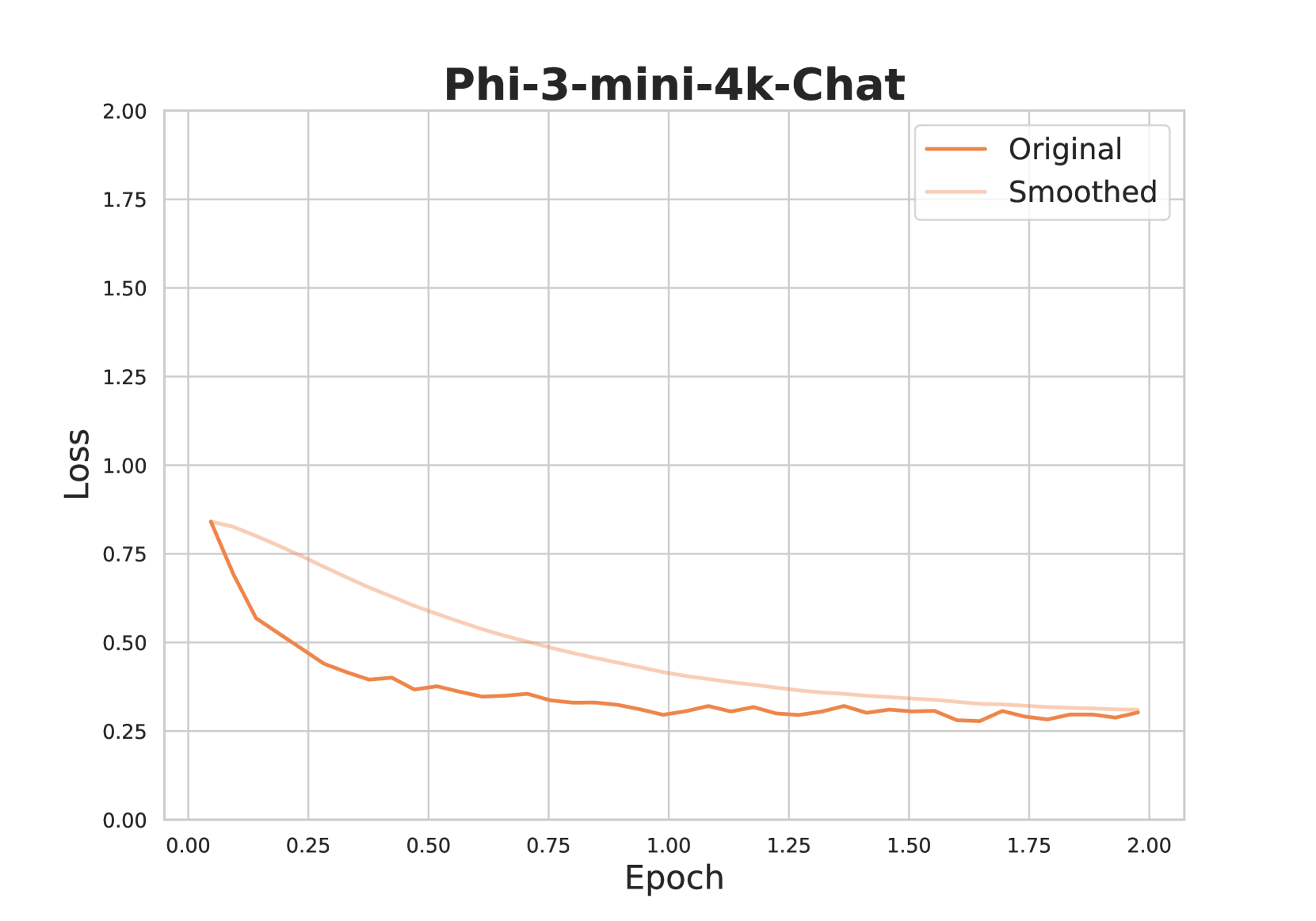

This image is a line chart visualizing the training loss of a machine learning model named "Phi-3-mini-4k-Chat" over a period of 2 epochs. It displays two data series: the raw, fluctuating loss values ("Original") and a smoothed version of the same data ("Smoothed").

### Components/Axes

* **Chart Title:** "Phi-3-mini-4k-Chat" (centered at the top).

* **Y-Axis (Vertical):** Labeled "Loss". The scale runs from 0.00 to 2.00, with major gridlines and numerical markers at intervals of 0.25 (0.00, 0.25, 0.50, 0.75, 1.00, 1.25, 1.50, 1.75, 2.00).

* **X-Axis (Horizontal):** Labeled "Epoch". The scale runs from 0.00 to 2.00, with major gridlines and numerical markers at intervals of 0.25 (0.00, 0.25, 0.50, 0.75, 1.00, 1.25, 1.50, 1.75, 2.00).

* **Legend:** Located in the top-right corner of the plot area. It contains two entries:

    * "Original" - represented by a solid, darker orange line.

    * "Smoothed" - represented by a solid, lighter peach-colored line.

* **Plot Area:** Contains a light gray grid for reference.

### Detailed Analysis

**Trend Verification:**

* **Original Line (Darker Orange):** This line exhibits a steep downward slope initially, followed by a gradual flattening with persistent small-scale fluctuations (noise). The overall trend is a clear decrease in loss.

* **Smoothed Line (Lighter Peach):** This line decreases smoothly and monotonically, curving gently from the top-left to the bottom-right. It traces the underlying trend of the "Original" data without the noise and sits at or above the "Original" line at every sampled epoch.

**Data Point Extraction (Approximate Values):**

* **Epoch 0.00:** Both lines originate at the same point. Loss ≈ 0.85.

* **Epoch 0.25:**

    * Original: Loss ≈ 0.55 (steep drop from start).

    * Smoothed: Loss ≈ 0.70.

* **Epoch 0.50:**

    * Original: Loss ≈ 0.40 (with a small local peak just before).

    * Smoothed: Loss ≈ 0.55.

* **Epoch 1.00:**

    * Original: Loss ≈ 0.30 (with minor oscillations).

    * Smoothed: Loss ≈ 0.40.

* **Epoch 1.50:**

    * Original: Loss ≈ 0.28 (oscillating between ~0.27 and 0.30).

    * Smoothed: Loss ≈ 0.32.

* **Epoch 2.00 (End):**

    * Original: Loss ≈ 0.29 (ending with a slight upward tick).

    * Smoothed: Loss ≈ 0.30. The two lines nearly converge at the end of the plotted period.

### Key Observations

1. **Rapid Initial Learning:** The largest reduction in loss occurs within the first 0.5 epoch, where the "Original" loss falls from ≈ 0.85 to ≈ 0.40, a drop of roughly 53% of its initial value.

2. **Noise vs. Trend:** The "Original" line contains high-frequency noise, which is effectively filtered out in the "Smoothed" line, making the long-term trend easier to interpret.

3. **Convergence of the Two Lines:** The gap between the "Smoothed" and "Original" lines narrows from ≈ 0.15 at epoch 0.50 to ≈ 0.10 at epoch 1.00, ≈ 0.04 at epoch 1.50, and ≈ 0.01 at epoch 2.00. This is consistent with a smoothed curve that lags the raw loss while the loss is falling quickly and catches up as the descent slows.

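4. **Plateauing:** The "Original" line is essentially flat after epoch 1.25, staying between roughly 0.27 and 0.30, and the "Smoothed" line flattens shortly afterward (≈ 0.32 at epoch 1.50, ≈ 0.30 at epoch 2.00). The model's rate of improvement (reduction in loss) has diminished significantly by this point.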
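The arithmetic behind observations 1 and 3 can be checked directly from the approximate readings listed under Data Point Extraction. The following is a minimal Python sketch: the `readings` dictionary and the helper names `relative_drop` and `gaps` are introduced here for illustration only, and the numbers are values eyeballed from the chart, not logged training data.

```python
# Approximate (original, smoothed) loss values read off the chart.
# These are visual estimates, not values from a training log.
readings = {
    0.00: (0.85, 0.85),
    0.25: (0.55, 0.70),
    0.50: (0.40, 0.55),
    1.00: (0.30, 0.40),
    1.50: (0.28, 0.32),
    2.00: (0.29, 0.30),
}


def relative_drop(start, end):
    """Fraction of the starting loss that has been removed."""
    return (start - end) / start


def gaps(points):
    """Smoothed minus original loss at each sampled epoch."""
    return {
        epoch: round(smoothed - original, 2)
        for epoch, (original, smoothed) in points.items()
    }


# Drop in the "Original" loss over the first half-epoch and over the second epoch.
early_drop = relative_drop(readings[0.00][0], readings[0.50][0])
late_drop = relative_drop(readings[1.00][0], readings[2.00][0])

print(f"Original drop, epoch 0.00 -> 0.50: {early_drop:.0%}")
print(f"Original drop, epoch 1.00 -> 2.00: {late_drop:.0%}")
print("Smoothed - Original gap by epoch:", gaps(readings))
```

Because the inputs are rounded visual estimates, the results are only indicative: they support "more than half" for the early drop and a steadily shrinking gap, but not precise percentages.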

### Interpretation

This chart shows a typical training-loss curve for a neural network. The "Loss" metric quantifies the model's error on the training data; a decreasing trend indicates the model is fitting that data.

* **What the data suggests:** The Phi-3-mini-4k-Chat model learns rapidly at the beginning of training (first half-epoch). As training progresses, learning continues but at a diminishing rate, eventually approaching a plateau by the end of the second epoch. The presence of noise in the "Original" loss is normal and can be caused by mini-batch sampling during stochastic gradient descent.

* **Relationship between elements:** The "Smoothed" line is derived from the "Original" line, likely using a moving average or similar technique. Its purpose is to reveal the central tendency of the loss, making it easier to assess whether the model is still fundamentally improving, despite step-to-step fluctuations.

* **Notable trends/anomalies:** There are no major anomalies, and the curve is well-behaved. The slight uptick in the "Original" loss at the very end (epoch 2.00) is minor and within the range of normal noise; it does not suggest overfitting, which would typically be indicated by a sustained increase in loss on a *validation* set (not shown here). The primary takeaway is that the training loss has largely converged within the observed 2-epoch window.
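The relationship between the two lines can be illustrated with an exponential moving average (EMA), one common way to smooth a loss curve. This is a sketch under stated assumptions: the chart does not say which smoothing method or weight was used, so the function `ema_smooth`, the weight of 0.9, and the synthetic decay curve below are all hypothetical choices made for illustration.

```python
import math
import random


def ema_smooth(values, weight=0.9):
    """Exponential moving average, seeded with the first value.

    `weight` is the share kept from the previous smoothed value;
    0.9 is an illustrative choice, not the setting used for the chart.
    """
    if not 0.0 <= weight < 1.0:
        raise ValueError("weight must be in [0, 1)")
    if not values:
        return []
    smoothed = [values[0]]
    for value in values[1:]:
        smoothed.append(weight * smoothed[-1] + (1.0 - weight) * value)
    return smoothed


# Synthetic stand-in for a loss curve: exponential decay towards a floor.
# The constants are illustrative and are not fitted to the chart.
trend = [0.28 + 0.57 * math.exp(-step / 40) for step in range(200)]

# Add small Gaussian noise to mimic step-to-step fluctuations.
rng = random.Random(0)
noisy = [value + rng.gauss(0.0, 0.01) for value in trend]

smoothed_trend = ema_smooth(trend)
smoothed_noisy = ema_smooth(noisy)

# The lag (smoothed minus raw) is large while the loss falls quickly
# and shrinks as the curve flattens.
for step in (0, 20, 100, 199):
    lag = smoothed_trend[step] - trend[step]
    print(f"step {step:3d}: raw={trend[step]:.3f} "
          f"smoothed={smoothed_trend[step]:.3f} lag={lag:.3f}")
```

Seeding the average with the first value makes both series start at the same point, and on a falling series an EMA stays above the raw values and closes the gap as the descent slows. Both behaviors match what the chart shows, though that alone does not confirm an EMA was the method used.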