## Diagram: Neural Network Attention Mechanism for Sequential Data Processing

### Overview

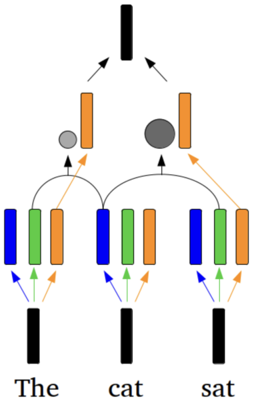

The image is a technical diagram illustrating a neural network architecture, specifically an attention-based model processing a three-word sequence ("The cat sat"). It visualizes how input tokens are transformed, how attention is applied between them, and how information is aggregated into a final output representation. The diagram uses color-coded elements and directional arrows to show data flow and relationships.

### Components

**Input Layer (Bottom):**

- Three distinct input tokens, each represented by a black vertical bar.

- Text labels below each bar: "The" (left), "cat" (center), "sat" (right).

**Feature/Embedding Layer (Middle-Lower):**

- Above each input token, three colored vertical bars appear in a fixed sequence:

  - **Blue bar** (leftmost for each token)

  - **Green bar** (center for each token)

  - **Orange bar** (rightmost for each token)

- These likely represent different feature dimensions or embedding channels for each token.

**Attention Mechanism (Middle-Upper):**

- Two **orange rectangular blocks** positioned above the feature bars. The diagram does not label them; they likely represent query/key/value components or attention heads.

- Two **gray circles** of different sizes, positioned between the feature bars and the orange blocks.

  - A **smaller gray circle** is located above and between the "The" and "cat" feature sets.

  - A **larger gray circle** is located above and between the "cat" and "sat" feature sets.

- **Curved black arrows** connect the feature bars to the gray circles, indicating information flow for attention calculation.

- **Straight orange arrows** connect the feature bars directly to the orange rectangular blocks.

**Output Layer (Top):**

- A single **black vertical bar** at the top center of the diagram.

- Two straight black arrows point from the two orange rectangular blocks to this final output bar, indicating aggregation.

**Flow & Connections:**

- The diagram shows a clear bottom-to-top data flow.

- Information from the three input tokens is processed in parallel through the colored feature bars.

- This information is then combined via an attention mechanism (represented by the gray circles and connecting arrows) and further processed by the orange blocks.

- The results from the orange blocks are finally combined to produce the single output representation.
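The flow described above can be sketched as a small pipeline. This is only an illustration of the topology: the diagram gives no numbers or operations, so the feature values and the use of an element-wise mean as the combining step are assumptions, and `mean_vectors` is a hypothetical helper.

```python
# Hypothetical values: the diagram shows no numbers, so these are placeholders.
# Each token carries three feature channels (blue, green, orange).
features = {
    "The": [0.1, 0.4, 0.2],
    "cat": [0.7, 0.3, 0.9],
    "sat": [0.5, 0.8, 0.6],
}


def mean_vectors(vectors):
    """Element-wise mean of equal-length vectors (stand-in for the real operation)."""
    count = len(vectors)
    return [sum(values) / count for values in zip(*vectors)]


# Middle region: each orange block combines one adjacent pair of tokens.
left_block = mean_vectors([features["The"], features["cat"]])
right_block = mean_vectors([features["cat"], features["sat"]])

# Top region: the two block outputs are aggregated into a single vector.
output = mean_vectors([left_block, right_block])
print(output)
```

In a trained model the combining steps would be learned, weighted operations rather than plain means; the sketch only mirrors the bottom-to-top, many-to-one structure.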

### Detailed Analysis

**Spatial Grounding & Component Isolation:**

1. **Bottom Region (Inputs):** Three isolated input units. Each unit has a black bar (token) and three associated colored feature bars (blue, green, orange).

2. **Middle Region (Processing):** This is the most complex region.

   - The **smaller gray circle** (position: center-left) receives curved arrow inputs primarily from the blue and green bars of "The" and the blue bar of "cat".

   - The **larger gray circle** (position: center-right) receives curved arrow inputs primarily from the green and orange bars of "cat" and the blue and green bars of "sat".

   - The **left orange block** receives straight orange arrow inputs from the orange bars of "The" and "cat".

   - The **right orange block** receives straight orange arrow inputs from the orange bars of "cat" and "sat".

3. **Top Region (Output):** A single aggregated output.

**Trend & Relationship Verification:**

- The diagram does not present numerical data or trends in a charting sense. Instead, it illustrates a **structural and relational flow**.

- The key relationship shown is the **many-to-one mapping** from multiple input tokens and their features to a single contextual output.

- The **varying size of the gray circles** is a critical visual cue. The circle between "cat" and "sat" is noticeably larger than the one between "The" and "cat". In attention mechanism diagrams, circle size often correlates with the **attention weight or strength** of the connection. This suggests the model is placing stronger attention between the words "cat" and "sat" than between "The" and "cat".
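If circle size does stand for attention weight, the usual way such weights arise is a softmax over raw compatibility scores. The sketch below shows that step for the token "cat" attending over the sentence; the scores are invented for illustration and are not read from the diagram.

```python
import math


def softmax(scores):
    """Convert raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(score - peak) for score in scores]
    total = sum(exps)
    return [value / total for value in exps]


# Hypothetical raw scores for the query "cat" against each token in the sentence.
scores_for_cat = {"The": 0.5, "cat": 1.0, "sat": 2.0}

weights = dict(zip(scores_for_cat, softmax(list(scores_for_cat.values()))))

for token, weight in weights.items():
    print(f"cat -> {token}: {weight:.3f}")
```

Under these assumed scores the weight toward "sat" exceeds the weight toward "The", which is the kind of difference a larger circle would plausibly depict.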

### Key Observations

1. **Asymmetric Attention:** The attention mechanism is not uniformly distributed. The connection between the second and third tokens ("cat" and "sat") is visually emphasized (larger circle), implying a stronger syntactic or semantic relationship is being captured.

2. **Dual Processing Pathways:** The diagram shows two parallel pathways for information: one through the attention circles (curved arrows) and one directly to the orange blocks (straight arrows). This could represent a residual connection or a multi-head attention setup where different heads capture different types of relationships.

3. **Hierarchical Aggregation:** The process is clearly hierarchical: Token -> Features -> Pairwise Attention/Processing -> Final Aggregation.

4. **Color Consistency:** The color coding (blue, green, orange) is consistent across all three input tokens, indicating the same feature types are extracted from each word.

### Interpretation

This diagram is a schematic representation of a **self-attention mechanism**, a core component of Transformer models used in natural language processing. It visually answers the question: "How does the model understand the word 'cat' in the context of the full sentence 'The cat sat'?"

- **What it demonstrates:** The model does not process words in isolation; it computes relationships (attention) between words in the sequence. If circle size encodes attention weight, the larger gray circle suggests that the relationship between "cat" (the subject) and "sat" (the verb) is weighted more heavily than the relationship between the determiner "The" and the subject "cat".

- **How elements relate:** The colored bars most plausibly represent the initial, context-independent representation of each word. The attention circles (gray) and processing blocks (orange) represent the context-dependent computations that transform those representations into contextual ones. The final black bar is the output: a new representation for the sequence (or for a specific token) that incorporates information from the surrounding context.

- **Notable Anomaly/Insight:** The diagram shows that not all connections are equal. The **size variation of the gray circles** is the only quantity-like information in the figure: it encodes a relative magnitude (plausibly attention strength) in an otherwise qualitative flowchart. If that reading is correct, the depicted model attends non-uniformly, and this kind of selective weighting is one reason Transformer architectures are effective at capturing linguistic dependencies such as subject-verb relationships.
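The interpretation above can be made concrete with a minimal sketch of single-head scaled dot-product self-attention over the three tokens. Several things here are assumptions rather than details from the diagram: the embedding values are invented, each token is given three dimensions to echo the three colored bars, and the query, key, and value projections are taken to be the identity so that the example stays short.

```python
import math


def softmax(scores):
    """Convert raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(score - peak) for score in scores]
    total = sum(exps)
    return [value / total for value in exps]


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def self_attention(embeddings):
    """Scaled dot-product self-attention with identity Q/K/V projections (assumed)."""
    dim = len(embeddings[0])
    outputs, all_weights = [], []
    for query in embeddings:
        # Score the query against every token, scaled by sqrt(dim).
        scores = [dot(query, key) / math.sqrt(dim) for key in embeddings]
        weights = softmax(scores)
        # Contextual vector: attention-weighted sum of the value vectors.
        context = [
            sum(w * value[i] for w, value in zip(weights, embeddings))
            for i in range(dim)
        ]
        outputs.append(context)
        all_weights.append(weights)
    return outputs, all_weights


tokens = ["The", "cat", "sat"]
# Hypothetical 3-dimensional embeddings, one per token.
embeddings = [[0.1, 0.4, 0.2], [0.7, 0.3, 0.9], [0.5, 0.8, 0.6]]

contextual, attention = self_attention(embeddings)
for token, row in zip(tokens, attention):
    print(token, [round(w, 3) for w in row])
```

Each row of `attention` holds one token's weights over the whole sentence, and each vector in `contextual` is a weighted blend of all three embeddings. A real Transformer layer would add learned projection matrices and typically several heads.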