## Line Chart: Model Accuracy Over Time (t)

### Overview

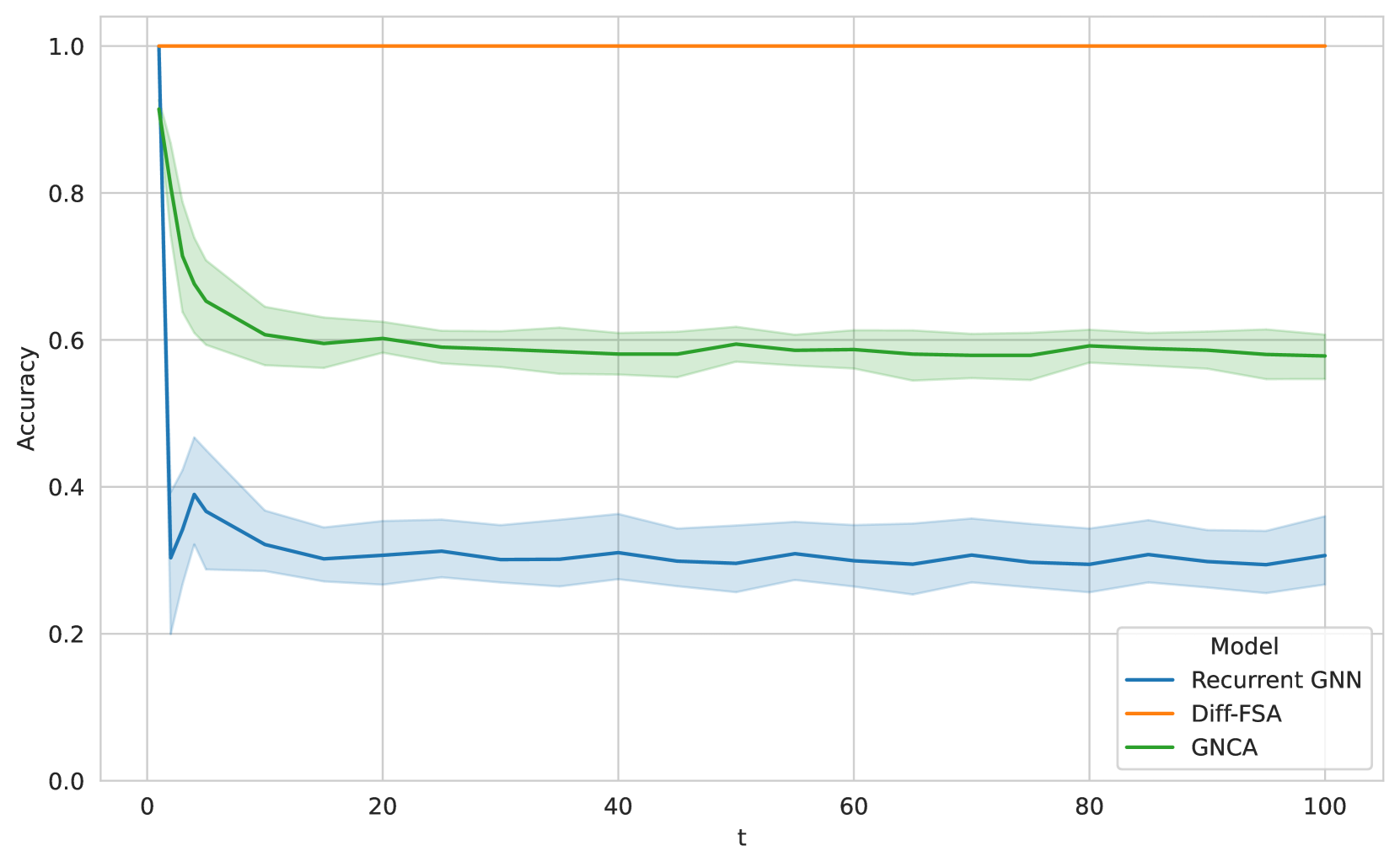

The image is a line chart comparing the accuracy of three models (Recurrent GNN, Diff-FSA, and GNCA) as a function of a variable labeled "t", which likely represents time steps, iterations, or sequence length. Shaded regions around two of the lines (GNCA and Recurrent GNN) indicate variability or confidence intervals.

### Components/Axes

* **X-Axis:** Labeled "t". The scale runs from 0 to 100 with major tick marks at intervals of 20 (0, 20, 40, 60, 80, 100).

* **Y-Axis:** Labeled "Accuracy". The scale runs from 0.0 to 1.0 with major tick marks at intervals of 0.2 (0.0, 0.2, 0.4, 0.6, 0.8, 1.0).

* **Legend:** Located in the bottom-right corner of the chart area. It is titled "Model" and lists three entries:

    * A blue line labeled "Recurrent GNN"

    * An orange line labeled "Diff-FSA"

    * A green line labeled "GNCA"

* **Data Series:** Three lines, two of which (GNCA and Recurrent GNN) have an associated shaded region:

    1. **Diff-FSA (Orange Line):** A perfectly horizontal line at the top of the chart.

    2. **GNCA (Green Line):** A line that starts high, drops early, and then levels off, accompanied by a light green shaded region.

    3. **Recurrent GNN (Blue Line):** A line that starts high, drops sharply, and then fluctuates at a lower level, accompanied by a light blue shaded region.

### Detailed Analysis

**Trend Verification & Data Points (Approximate):**

1. **Diff-FSA (Orange Line):**

    * **Trend:** Perfectly flat, horizontal line.

    * **Data Points:** Maintains an accuracy of **1.0** for all values of `t` from 0 to 100.

2. **GNCA (Green Line):**

    * **Trend:** Sharp initial decline followed by a stable plateau.

    * **Data Points:**

        * At `t=0`: Accuracy starts at approximately **0.9**.

        * By `t≈5`: Accuracy drops steeply to about **0.65**.

        * From `t≈10` to `t=100`: Accuracy stabilizes, fluctuating slightly around **0.6** (range ~0.58 to 0.62).

    * **Shaded Region (Green):** Represents variability. The band is widest during the initial drop (t=0 to t≈10) and narrows slightly as the line stabilizes, spanning approximately ±0.05 around the main line.

3. **Recurrent GNN (Blue Line):**

    * **Trend:** Very sharp initial decline, followed by low-level fluctuations.

    * **Data Points:**

        * At `t=0`: Accuracy starts near **1.0**.

        * By `t≈2`: Accuracy plummets to a low of approximately **0.3**.

        * From `t≈5` to `t=100`: Accuracy recovers slightly and fluctuates in a band between roughly **0.28 and 0.35**, with a central tendency around **0.3**.

    * **Shaded Region (Blue):** Represents variability. The band is very wide during the initial drop and subsequent recovery (t=0 to t≈10), indicating high variance. It remains moderately wide for the remainder of the chart, spanning approximately ±0.08 around the main line.
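
The approximate readings above can be collected into a small lookup for comparing the models at an arbitrary `t`. The Python sketch below is illustrative only: the breakpoints are the eyeballed values from this description (not the underlying experimental data), linear interpolation between them is an assumption, and the names `READINGS` and `accuracy_at` are hypothetical.

```python
from bisect import bisect_right

# Approximate (t, accuracy) breakpoints transcribed from the description.
# These are eyeballed chart readings, NOT the original experimental data.
READINGS = {
    "Diff-FSA": [(0, 1.0), (100, 1.0)],
    "GNCA": [(0, 0.9), (5, 0.65), (10, 0.6), (100, 0.6)],
    "Recurrent GNN": [(0, 1.0), (2, 0.3), (100, 0.3)],
}


def accuracy_at(model, t):
    """Linearly interpolate the approximate accuracy of `model` at `t`."""
    points = READINGS[model]
    ts = [p[0] for p in points]
    if not ts[0] <= t <= ts[-1]:
        raise ValueError(f"t={t} is outside the plotted range")
    # Index of the last breakpoint whose t-value is <= t.
    i = bisect_right(ts, t) - 1
    if i == len(points) - 1:
        return points[-1][1]
    (t0, a0), (t1, a1) = points[i], points[i + 1]
    return a0 + (a1 - a0) * (t - t0) / (t1 - t0)


if __name__ == "__main__":
    for t in (0, 5, 50, 100):
        row = {m: round(accuracy_at(m, t), 2) for m in READINGS}
        print(t, row)
```

The fluctuations on the plateaus and the shaded bands are deliberately left out; the sketch only captures the central trend of each line.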

### Key Observations

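1. **Performance Hierarchy:** After the initial drop (from roughly `t≈10` onward), there is a clear and consistent performance hierarchy: Diff-FSA (perfect) > GNCA (~0.6) > Recurrent GNN (~0.3). The ordering differs only at the very start, where Recurrent GNN begins near 1.0 and GNCA at about 0.9.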

2. **Initial Degradation:** Both GNCA and Recurrent GNN experience a significant drop in accuracy at very low values of `t` (within the first 10 units). Recurrent GNN's drop is more severe.

3. **Stability:** Over the remainder of the `t` range (t=20 to 100), all three models are stable. Diff-FSA is perfectly flat throughout, while GNCA and Recurrent GNN show only minor fluctuations within their respective bands after their initial drops.

4. **Variability:** The shaded regions indicate that GNCA and Recurrent GNN have measurable variance in their performance, with Recurrent GNN showing higher variance, especially at low `t`. Diff-FSA shows no visible variance (or it is too small to plot).
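
As a rough check on the first two observations, the size of each model's drop and the plateau ordering can be computed from the approximate start and plateau values. This is a minimal sketch: the numbers are the eyeballed readings from this description, and `START`, `PLATEAU`, `accuracy_drop`, and `plateau_ranking` are hypothetical names introduced for illustration.

```python
# Approximate accuracies at t=0 and on the plateau, read off the description.
# Eyeballed values, not measured data.
START = {"Diff-FSA": 1.0, "GNCA": 0.9, "Recurrent GNN": 1.0}
PLATEAU = {"Diff-FSA": 1.0, "GNCA": 0.6, "Recurrent GNN": 0.3}


def accuracy_drop(model):
    """Absolute accuracy lost between t=0 and the plateau."""
    return START[model] - PLATEAU[model]


def plateau_ranking():
    """Models ordered from highest to lowest plateau accuracy."""
    return sorted(PLATEAU, key=PLATEAU.get, reverse=True)


if __name__ == "__main__":
    for model in plateau_ranking():
        print(f"{model}: plateau {PLATEAU[model]:.2f}, "
              f"drop {accuracy_drop(model):.2f}")
```

With these readings, Recurrent GNN loses about 0.7 of accuracy against about 0.3 for GNCA, which matches the statement that its drop is the more severe of the two.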

### Interpretation

The chart compares how well each model's accuracy holds up as `t` increases, which can be read as a measure of robustness or generalization. The data suggests:

* **Diff-FSA is perfectly robust** to the factor represented by `t` over the plotted range (0 to 100), maintaining maximum accuracy regardless of its value. This suggests it is well suited to this specific task or metric.

* **GNCA degrades but stabilizes** at a moderate accuracy level. It is not immune to the effect of `t` but reaches a consistent, sub-optimal performance plateau.

* **Recurrent GNN is highly sensitive** to `t`, suffering a catastrophic drop in accuracy almost immediately. Its subsequent low-level performance suggests it fails to generalize or maintain state effectively as `t` increases.

A plausible explanation is a fundamental difference in model architecture. The perfect performance of Diff-FSA might indicate it uses a different, more suitable inductive bias (perhaps related to differential equations or finite-state automata, given the name) for the underlying problem. The sharp initial drops for the other models suggest a "breaking point" where their internal mechanisms (recurrence in GNNs, or the specific graph cellular automata approach of GNCA) can no longer cope with the complexity or length introduced by `t`. Within the plotted range, the chart supports the superiority of the Diff-FSA approach in this context.