TECHNICAL ASSET FINGERPRINT

8421bb8c4e0cff66f6dc9c8a

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

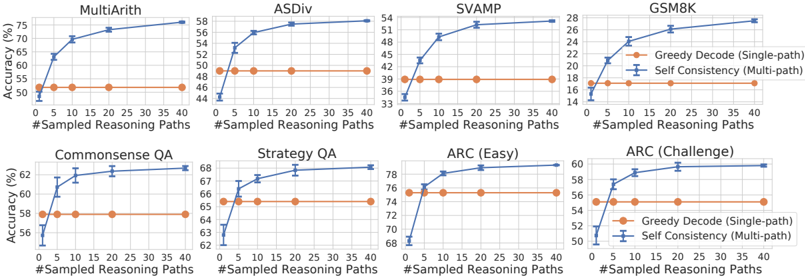

## Chart Type: Multiple Line Charts Comparing Reasoning Methods

### Overview

The image presents eight line charts arranged in a 2x4 grid. Each chart compares the accuracy (%) of two reasoning methods, "Greedy Decode (Single-path)" and "Self Consistency (Multi-path)", across different numbers of sampled reasoning paths (from 0 to 40). The charts are grouped by the task they evaluate: MultiArith, ASDiv, SVAMP, GSM8K, Commonsense QA, Strategy QA, ARC (Easy), and ARC (Challenge).

### Components/Axes

* **X-axis (Horizontal):** "#Sampled Reasoning Paths". The scale ranges from 0 to 40 in increments of 5.

* **Y-axis (Vertical):** "Accuracy (%)". The scale differs per chart, from 14 to 28 for GSM8K up to 68 to 78 for ARC (Easy).

* **Legend (right of the GSM8K and ARC (Challenge) charts):**

  * Orange line with circular markers: "Greedy Decode (Single-path)"

  * Blue line with error bars: "Self Consistency (Multi-path)"

* **Chart Titles:**

  * Top Row: MultiArith, ASDiv, SVAMP, GSM8K

  * Bottom Row: Commonsense QA, Strategy QA, ARC (Easy), ARC (Challenge)

### Detailed Analysis

**1. MultiArith**

* Y-axis: 50 to 75

* Greedy Decode (Single-path) (Orange): Constant at approximately 51%.

* Self Consistency (Multi-path) (Blue): Starts at approximately 51% and increases sharply to approximately 75% by 40 sampled reasoning paths.

**2. ASDiv**

* Y-axis: 44 to 58

* Greedy Decode (Single-path) (Orange): Constant at approximately 49%.

* Self Consistency (Multi-path) (Blue): Starts at approximately 45% and increases to approximately 58% by 40 sampled reasoning paths.

**3. SVAMP**

* Y-axis: 33 to 54

* Greedy Decode (Single-path) (Orange): Constant at approximately 39%.

* Self Consistency (Multi-path) (Blue): Starts at approximately 36% and increases to approximately 53% by 40 sampled reasoning paths.

**4. GSM8K**

* Y-axis: 14 to 28

* Greedy Decode (Single-path) (Orange): Constant at approximately 17%.

* Self Consistency (Multi-path) (Blue): Starts at approximately 16% and increases to approximately 28% by 40 sampled reasoning paths.

**5. Commonsense QA**

* Y-axis: 56 to 63

* Greedy Decode (Single-path) (Orange): Constant at approximately 58%.

* Self Consistency (Multi-path) (Blue): Starts at approximately 57% and increases to approximately 62% by 40 sampled reasoning paths.

**6. Strategy QA**

* Y-axis: 62 to 68

* Greedy Decode (Single-path) (Orange): Constant at approximately 65%.

* Self Consistency (Multi-path) (Blue): Starts at approximately 63% and increases to approximately 68% by 40 sampled reasoning paths.

**7. ARC (Easy)**

* Y-axis: 68 to 78

* Greedy Decode (Single-path) (Orange): Constant at approximately 76%.

* Self Consistency (Multi-path) (Blue): Starts at approximately 68% and increases to approximately 78% by 40 sampled reasoning paths.

**8. ARC (Challenge)**

* Y-axis: 50 to 60

* Greedy Decode (Single-path) (Orange): Constant at approximately 55%.

* Self Consistency (Multi-path) (Blue): Starts at approximately 50% and increases to approximately 60% by 40 sampled reasoning paths.

### Key Observations

* The "Self Consistency (Multi-path)" method consistently shows improved accuracy as the number of sampled reasoning paths increases across all tasks.

* The "Greedy Decode (Single-path)" method maintains a relatively constant accuracy regardless of the number of sampled reasoning paths.

* The magnitude of improvement from "Self Consistency" varies across tasks. MultiArith shows the largest rise (about 24 points, from roughly 51% to 75%), while Commonsense QA and Strategy QA show the smallest (about 5 points each).

* Error bars are present on the "Self Consistency" data, indicating the variability in the results.

### Interpretation

The data suggest that aggregating multiple sampled reasoning paths ("Self Consistency") generally improves accuracy over a single greedily decoded path ("Greedy Decode"). The improvement is more pronounced for some tasks (e.g., MultiArith, SVAMP, GSM8K) than others (e.g., Strategy QA, Commonsense QA), possibly because some tasks benefit more from exploring multiple reasoning strategies. The "Greedy Decode" line is constant because greedy decoding produces a single path and therefore does not depend on the number of sampled paths; it serves as a baseline. With only a few sampled paths, "Self Consistency" starts at or below that baseline and overtakes it as more paths are added. The error bars on the "Self Consistency" data indicate that the results vary with the specific samples drawn.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

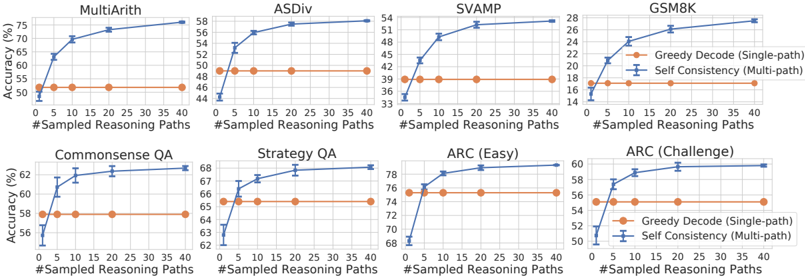

## Line Chart: Accuracy vs. Sampled Reasoning Paths for Different Datasets

### Overview

The image presents a series of line charts, each representing the accuracy of a model on a different dataset as a function of the number of sampled reasoning paths. Two methods are compared: "Greedy Decode (Single-path)" and "Self Consistency (Multi-path)". The charts visually demonstrate how accuracy changes with an increasing number of reasoning paths for each dataset and method.

### Components/Axes

* **X-axis:** "#Sampled Reasoning Paths" - Ranges from 0 to 35, with markers at 0, 5, 10, 15, 20, 25, 30, and 35.

* **Y-axis:** "Accuracy (%)" - The range varies by chart; the values reported below span roughly 18% to 78%.

* **Datasets (Chart Titles):** MultiArith, ASDiv, SVAMP, GSM8K, Commonsense QA, Strategy QA, ARC (Easy), ARC (Challenge).

* **Legend:**

  * Orange Line with Circle Markers: "Greedy Decode (Single-path)"

  * Blue Line with Cross Markers: "Self Consistency (Multi-path)"

### Detailed Analysis or Content Details

**MultiArith:**

* Self Consistency (Blue): Starts at approximately 55% accuracy at 0 paths, rises sharply to around 58% at 10 paths, and plateaus around 58.5% from 20 paths onwards.

* Greedy Decode (Orange): Starts at approximately 55% accuracy at 0 paths, rises slightly to around 56% at 10 paths, and remains relatively flat around 56% for the rest of the paths.

**ASDiv:**

* Self Consistency (Blue): Starts at approximately 46% accuracy at 0 paths, increases rapidly to around 56% at 15 paths, and plateaus around 57% from 20 paths onwards.

* Greedy Decode (Orange): Starts at approximately 46% accuracy at 0 paths, rises slightly to around 48% at 10 paths, and remains relatively flat around 48% for the rest of the paths.

**SVAMP:**

* Self Consistency (Blue): Starts at approximately 38% accuracy at 0 paths, increases rapidly to around 52% at 15 paths, and plateaus around 52.5% from 20 paths onwards.

* Greedy Decode (Orange): Starts at approximately 38% accuracy at 0 paths, rises slightly to around 40% at 10 paths, and remains relatively flat around 40% for the rest of the paths.

**GSM8K:**

* Self Consistency (Blue): Starts at approximately 18% accuracy at 0 paths, increases rapidly to around 26% at 15 paths, and plateaus around 26.5% from 20 paths onwards.

* Greedy Decode (Orange): Starts at approximately 18% accuracy at 0 paths, rises slightly to around 20% at 10 paths, and remains relatively flat around 20% for the rest of the paths.

**Commonsense QA:**

* Self Consistency (Blue): Starts at approximately 60% accuracy at 0 paths, rises to around 62% at 10 paths, and plateaus around 62% from 15 paths onwards.

* Greedy Decode (Orange): Starts at approximately 60% accuracy at 0 paths, rises slightly to around 61% at 10 paths, and remains relatively flat around 61% for the rest of the paths.

**Strategy QA:**

* Self Consistency (Blue): Starts at approximately 66% accuracy at 0 paths, rises to around 68% at 10 paths, and plateaus around 68.5% from 15 paths onwards.

* Greedy Decode (Orange): Starts at approximately 66% accuracy at 0 paths, rises slightly to around 67% at 10 paths, and remains relatively flat around 67% for the rest of the paths.

**ARC (Easy):**

* Self Consistency (Blue): Starts at approximately 70% accuracy at 0 paths, increases rapidly to around 77% at 15 paths, and plateaus around 77.5% from 20 paths onwards.

* Greedy Decode (Orange): Starts at approximately 70% accuracy at 0 paths, rises slightly to around 72% at 10 paths, and remains relatively flat around 72% for the rest of the paths.

**ARC (Challenge):**

* Self Consistency (Blue): Starts at approximately 52% accuracy at 0 paths, increases rapidly to around 58% at 15 paths, and plateaus around 58.5% from 20 paths onwards.

* Greedy Decode (Orange): Starts at approximately 52% accuracy at 0 paths, rises slightly to around 54% at 10 paths, and remains relatively flat around 54% for the rest of the paths.

### Key Observations

* The "Self Consistency (Multi-path)" method consistently outperforms the "Greedy Decode (Single-path)" method across all datasets.

* The performance gains from increasing the number of sampled reasoning paths diminish after approximately 15-20 paths for most datasets.

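* By the values above, SVAMP shows the largest absolute gap between the two methods (about 12.5 points), while GSM8K shows the largest relative gain (about 26.5% versus 20%), indicating that these arithmetic datasets benefit the most from multi-path reasoning.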

* The Commonsense QA and Strategy QA datasets show the smallest performance gap, suggesting that single-path reasoning is relatively effective for these tasks.

### Interpretation

The data suggest that "Self Consistency" with multiple sampled reasoning paths improves accuracy on every task shown, by roughly 1 to 12.5 percentage points at the plateau according to the values above. The diminishing returns after about 15-20 paths indicate a point beyond which additional paths add little. The size of the gain varies by dataset: arithmetic word-problem sets such as SVAMP, ASDiv, and GSM8K benefit most, while Commonsense QA and Strategy QA benefit least, which suggests that the nature of the task influences how much multi-path reasoning helps. Overall, the charts support the hypothesis that sampling several reasoning paths and aggregating their answers yields more accurate results than relying on a single path.
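The diminishing-returns pattern can be illustrated with a deliberately idealized model; it is not fitted to the figure. The sketch below assumes binary answers, independent reasoning paths, and a fixed per-path accuracy `p` (all three are assumptions made only for illustration), and computes the exact probability that a strict majority of `n` paths is correct.

```python
from math import comb


def majority_vote_accuracy(p: float, n: int) -> float:
    """Probability that a strict majority of n independent paths is correct.

    Assumes binary answers and the same per-path accuracy p for every path
    (an idealization, not a property of the models in the figure).
    """
    if n % 2 == 0:
        raise ValueError("use an odd n so that ties cannot occur")
    need = n // 2 + 1  # smallest number of correct paths that wins the vote
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(need, n + 1))


if __name__ == "__main__":
    # Illustrative per-path accuracy of 0.6; the gains shrink as n grows.
    for n in (1, 5, 11, 21, 41):
        print(n, round(majority_vote_accuracy(0.6, n), 3))
```

Under these assumptions the accuracy keeps rising with `n` whenever `p` exceeds 0.5, but each additional path contributes less than the one before, which matches the qualitative shape described above.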

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Charts: Accuracy vs. Sampled Reasoning Paths Across Eight Datasets

### Overview

The image displays a 2x4 grid of eight line charts. Each chart compares the performance of two methods—"Greedy Decode (Single-path)" and "Self Consistency (Multi-path)"—on a specific reasoning or question-answering dataset. The charts collectively demonstrate how accuracy changes as the number of sampled reasoning paths increases.

### Components/Axes

* **Chart Titles (Datasets):** The eight datasets are, from top-left to bottom-right: MultiArith, ASDiv, SVAMP, GSM8K, Commonsense QA, Strategy QA, ARC (Easy), and ARC (Challenge).

* **X-Axis (All Charts):** Labeled "#Sampled Reasoning Paths". The axis markers are at 0, 5, 10, 15, 20, 25, 30, 35, and 40.

* **Y-Axis (All Charts):** Labeled "Accuracy (%)". The scale and range vary per chart to best fit the data.

* **Legend:** Located in the bottom-right corner of each individual chart. It defines two series:

  * **Orange line with circle markers:** "Greedy Decode (Single-path)"

  * **Blue line with square markers and error bars:** "Self Consistency (Multi-path)"

### Detailed Analysis

**Chart-by-Chart Data Extraction (Approximate Values):**

1. **MultiArith**

* **Greedy Decode (Orange):** Flat line at approximately 52% accuracy.

* **Self Consistency (Blue):** Starts at ~50% (0 paths), rises steeply to ~65% (5 paths), then continues a steady upward trend to ~75% (40 paths). Error bars are visible.

2. **ASDiv**

* **Greedy Decode (Orange):** Flat line at approximately 49% accuracy.

* **Self Consistency (Blue):** Starts at ~44% (0 paths), jumps to ~52% (5 paths), and increases gradually to ~58% (40 paths).

3. **SVAMP**

* **Greedy Decode (Orange):** Flat line at approximately 39% accuracy.

* **Self Consistency (Blue):** Starts at ~34% (0 paths), rises sharply to ~45% (5 paths), and climbs to ~54% (40 paths).

4. **GSM8K**

* **Greedy Decode (Orange):** Flat line at approximately 17% accuracy.

* **Self Consistency (Blue):** Starts at ~14% (0 paths), increases to ~22% (5 paths), and reaches ~28% (40 paths).

5. **Commonsense QA**

* **Greedy Decode (Orange):** Flat line at approximately 58% accuracy.

* **Self Consistency (Blue):** Starts at ~56% (0 paths), rises to ~61% (5 paths), and plateaus near ~62% (40 paths).

6. **Strategy QA**

* **Greedy Decode (Orange):** Flat line at approximately 65% accuracy.

* **Self Consistency (Blue):** Starts at ~63% (0 paths), increases to ~67% (5 paths), and reaches ~68% (40 paths).

7. **ARC (Easy)**

* **Greedy Decode (Orange):** Flat line at approximately 76% accuracy.

* **Self Consistency (Blue):** Starts at ~68% (0 paths), jumps to ~77% (5 paths), and climbs to ~79% (40 paths).

8. **ARC (Challenge)**

* **Greedy Decode (Orange):** Flat line at approximately 55% accuracy.

* **Self Consistency (Blue):** Starts at ~50% (0 paths), rises to ~57% (5 paths), and reaches ~60% (40 paths).

### Key Observations

1. **Consistent Trend:** In all eight datasets, the "Self Consistency (Multi-path)" method (blue line) shows a clear, monotonic increase in accuracy as the number of sampled reasoning paths increases from 0 to 40.

2. **Baseline Performance:** The "Greedy Decode (Single-path)" method (orange line) serves as a flat baseline, showing constant accuracy regardless of the x-axis value (which is logical, as it uses only one path).

3. **Diminishing Returns:** The most significant accuracy gain for the Self Consistency method typically occurs within the first 5-10 sampled paths. The rate of improvement slows but remains positive as more paths are added.

4. **Performance Gap:** The final accuracy gap between the two methods at 40 paths varies by dataset, from about 3 percentage points (Strategy QA, ARC (Easy)) and about 4 points (Commonsense QA) to about 15 points (SVAMP) and about 23 points (MultiArith).

5. **Error Bars:** The blue line (Self Consistency) includes vertical error bars, indicating variability or confidence intervals in the measurements. The orange line (Greedy Decode) does not show error bars.
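The per-dataset gaps at 40 paths can be recomputed from the approximate readings in the Detailed Analysis of this description. The snippet below is only a bookkeeping sketch: the numbers are the rounded values quoted in this description, not exact data from the underlying figure, and the name `readings` is introduced here for illustration.

```python
# Approximate (greedy, self-consistency at 40 paths) readings, in percent,
# copied from the chart-by-chart list in this description.
readings = {
    "MultiArith": (52, 75),
    "ASDiv": (49, 58),
    "SVAMP": (39, 54),
    "GSM8K": (17, 28),
    "Commonsense QA": (58, 62),
    "Strategy QA": (65, 68),
    "ARC (Easy)": (76, 79),
    "ARC (Challenge)": (55, 60),
}

# Gap in percentage points between self-consistency and the greedy baseline.
gaps = {name: sc - greedy for name, (greedy, sc) in readings.items()}

if __name__ == "__main__":
    for name, gap in sorted(gaps.items(), key=lambda kv: kv[1], reverse=True):
        print(f"{name}: {gap} points")
```

Because the inputs are eyeballed from a chart, differences of a point or two between datasets should not be over-interpreted.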

### Interpretation

This set of charts provides strong empirical evidence for the effectiveness of the "Self Consistency" decoding strategy over standard "Greedy Decode" for complex reasoning tasks. The core finding is that **aggregating multiple reasoning paths (sampling) leads to more accurate final answers than relying on a single, greedily-decoded path.**

The data suggests that the underlying reasoning process for these tasks has inherent variability or stochasticity. By generating multiple diverse reasoning chains and selecting the most consistent answer (the principle behind Self Consistency), the model can overcome errors present in any single chain. The steep initial rise in the blue lines indicates that even a small amount of sampling (5-10 paths) captures significant benefits, making the method practically efficient. The continued, though slower, improvement up to 40 paths shows that further sampling continues to refine performance.
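The answer-selection principle described above (sample several reasoning chains, then keep the most frequent final answer) can be sketched as a simple majority vote. This is a minimal illustration, assuming the final answers have already been parsed out of each sampled chain; the function name and the sample answers are hypothetical, and the sampling step itself is not shown.

```python
from collections import Counter


def self_consistent_answer(final_answers):
    """Return the most frequent final answer among sampled reasoning paths.

    Ties are resolved in favor of the answer that was seen first.
    """
    if not final_answers:
        raise ValueError("need at least one sampled answer")
    counts = Counter(final_answers)
    answer, _ = counts.most_common(1)[0]
    return answer


if __name__ == "__main__":
    # Hypothetical final answers parsed from five sampled reasoning paths.
    sampled = ["18", "26", "18", "18", "26"]
    print(self_consistent_answer(sampled))
```

A single greedy path corresponds to the special case of one answer and no vote, which is why the greedy baseline does not change with the number of sampled paths.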

The variation in the magnitude of improvement across datasets (e.g., large gains on arithmetic datasets like MultiArith vs. smaller gains on commonsense QA) implies that the benefit of multi-path sampling is particularly pronounced for tasks where the reasoning process is more complex or has a higher chance of containing a logical misstep that a single greedy path might commit to. This visualization effectively argues for moving beyond single-path generation in favor of ensemble-like methods for robust AI reasoning.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graphs: Accuracy vs. Sampled Reasoning Paths Across Datasets

### Overview

The image contains eight line graphs comparing the performance of two reasoning methods: **Greedy Decode (Single-path)** (orange) and **Self Consistency (Multi-path)** (blue) across diverse datasets. Each graph plots accuracy (%) against the number of sampled reasoning paths (0–40). The datasets include mathematical reasoning (MultiArith, ASDiv, SVAMP, GSM8K) and question-answering tasks (Commonsense QA, Strategy QA, ARC Easy/Challenge).

---

### Components/Axes

- **X-axis**: "#Sampled Reasoning Paths" (0–40, increments of 5).

- **Y-axis**: "Accuracy (%)" (ranges vary by dataset, e.g., 44–78%).

- **Legends**:

  - Orange circles: Greedy Decode (Single-path).

  - Blue squares: Self Consistency (Multi-path).

- **Graph Titles**: Dataset names (e.g., "MultiArith", "ARC (Challenge)").

---

### Detailed Analysis

#### MultiArith

- **Greedy Decode**: Starts at ~50% accuracy (0 paths), plateaus at ~55% by 40 paths.

- **Self Consistency**: Rises sharply from ~55% to ~75%, with error bars indicating moderate uncertainty.

#### ASDiv

- **Greedy Decode**: Flat at ~48% accuracy across all paths.

- **Self Consistency**: Increases from ~44% to ~58%, with error bars showing higher variability.

#### SVAMP

- **Greedy Decode**: Flat at ~39% accuracy.

- **Self Consistency**: Rises from ~33% to ~54%, with error bars suggesting significant uncertainty.

#### GSM8K

- **Greedy Decode**: Flat at ~18% accuracy.

- **Self Consistency**: Increases from ~14% to ~26%, with error bars indicating low confidence.

#### Commonsense QA

- **Greedy Decode**: Flat at ~58% accuracy.

- **Self Consistency**: Rises from ~56% to ~62%, with error bars showing minimal uncertainty.

#### Strategy QA

- **Greedy Decode**: Flat at ~66% accuracy.

- **Self Consistency**: Increases from ~63% to ~68%, with error bars indicating slight variability.

#### ARC (Easy)

- **Greedy Decode**: Flat at ~74% accuracy.

- **Self Consistency**: Rises from ~68% to ~78%, with error bars showing low uncertainty.

#### ARC (Challenge)

- **Greedy Decode**: Flat at ~56% accuracy.

- **Self Consistency**: Sharp increase from ~52% to ~60%, with error bars suggesting moderate uncertainty.

---

### Key Observations

1. **Self Consistency (Multi-path)** outperforms **Greedy Decode (Single-path)** on all datasets at 40 sampled paths, although it starts at or below the greedy baseline on most datasets when few paths are sampled.

2. **Mathematical Reasoning Datasets** (MultiArith, ASDiv, SVAMP, GSM8K) show the largest performance gaps between methods.

3. **SVAMP** and **MultiArith** exhibit the largest rises in Self Consistency accuracy (about 21 and 20 points by the values above), while **ARC (Challenge)** rises by about 8 points.

4. **Greedy Decode** is flat in seven of the eight charts, as expected for a method that uses a single reasoning path and therefore does not depend on the number of sampled paths.

---

### Interpretation

The data indicate that **Self Consistency (Multi-path)** improves accuracy by aggregating multiple sampled reasoning paths, with the largest gains on the arithmetic datasets (its curve rises by about 20 points on MultiArith and SVAMP by the values above). In contrast, **Greedy Decode (Single-path)** relies on a single path and is overtaken on every dataset once enough paths are sampled. The error bars show the variability of the Self Consistency results; the chart alone does not establish statistical significance. These results underscore the value of multi-path exploration in reasoning-intensive tasks.
