## Stacked Bar Chart: GPT-2 xl Attention Head Distribution by Layer

### Overview

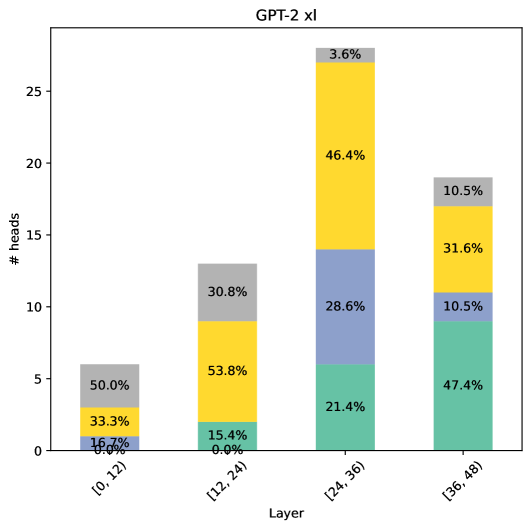

This image displays a stacked bar chart titled "GPT-2 xl". It visualizes the distribution of different categories of attention heads across four consecutive layer ranges (blocks) of the GPT-2 xl model. The chart quantifies the number and proportional composition of heads within each layer block.

### Components/Axes

* **Chart Title:** "GPT-2 xl" (centered at the top).

* **X-Axis:** Labeled "Layer". It represents four discrete, contiguous ranges of model layers:

  * `[0, 12)`

  * `[12, 24)`

  * `[24, 36)`

  * `[36, 48)`

* **Y-Axis:** Labeled "# heads". It represents the count of attention heads, with a linear scale marked at intervals of 5, from 0 to 25.

* **Data Series (Inferred from consistent color coding across bars):** The chart uses four distinct colors to represent different categories of attention heads. No legend is present, so the categories are unnamed and are referred to here by color only; the colors and their percentage labels are used consistently across bars. The segments within each bar are stacked in the following order from bottom to top: Green, Blue, Yellow, Gray.

### Detailed Analysis

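The chart contains four stacked bars, one for each layer range. Each bar's total height is the number of attention heads counted in that block of layers; since GPT-2 XL has 25 heads per layer (300 per 12-layer block), the bars evidently cover a subset of heads rather than every head. The segments within each bar are labeled with each category's percentage share of that bar.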
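To put the bar heights in proportion, here is a minimal sketch of the arithmetic. It assumes the standard GPT-2 XL configuration (48 layers, 25 attention heads per layer) and uses the approximate bar heights read off the chart in this section; the variable names are illustrative only.

```python
# Assumed GPT-2 XL configuration: 48 layers, 25 attention heads per layer.
N_LAYERS = 48
HEADS_PER_LAYER = 25
BLOCK_SIZE = 12  # each bar covers 12 consecutive layers

# Approximate bar heights as read from the chart (not exact source data).
heads_shown = {"[0, 12)": 6, "[12, 24)": 13, "[24, 36)": 28, "[36, 48)": 19}

heads_per_block = BLOCK_SIZE * HEADS_PER_LAYER  # heads available in one block
total_heads = N_LAYERS * HEADS_PER_LAYER        # heads in the whole model

for block, shown in heads_shown.items():
    share = shown / heads_per_block
    print(f"{block}: {shown}/{heads_per_block} heads ({share:.1%})")

total_shown = sum(heads_shown.values())
print(f"all layers: {total_shown}/{total_heads} heads ({total_shown / total_heads:.1%})")
```

Under these assumptions the chart accounts for only a small fraction of the model's heads, so the bars are best read as counts of some selected or classified subset.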

**1. Layer Range [0, 12)**

* **Total Height (Approximate):** 6 heads.

* **Segment Composition (from bottom to top):**

  * **Green:** 0.0% (0 heads)

  * **Blue:** 16.7% (~1 head)

  * **Yellow:** 33.3% (~2 heads)

  * **Gray:** 50.0% (~3 heads)

**2. Layer Range [12, 24)**

* **Total Height (Approximate):** 13 heads.

* **Segment Composition (from bottom to top):**

  * **Green:** 15.4% (~2 heads)

  * **Blue:** 0.0% (0 heads)

  * **Yellow:** 53.8% (~7 heads)

  * **Gray:** 30.8% (~4 heads)

**3. Layer Range [24, 36)**

* **Total Height (Approximate):** 28 heads.

* **Segment Composition (from bottom to top):**

  * **Green:** 21.4% (~6 heads)

  * **Blue:** 28.6% (~8 heads)

  * **Yellow:** 46.4% (~13 heads)

  * **Gray:** 3.6% (~1 head)

**4. Layer Range [36, 48)**

* **Total Height (Approximate):** 19 heads.

* **Segment Composition (from bottom to top):**

  * **Green:** 47.4% (~9 heads)

  * **Blue:** 10.5% (~2 heads)

  * **Yellow:** 31.6% (~6 heads)

  * **Gray:** 10.5% (~2 heads)
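The head counts in parentheses above follow from the percentage labels and the approximate bar totals. The sketch below reproduces that arithmetic and checks that each block's counts add back up to its total. The values are the approximate chart readings from this section, and the helper name `counts_from_percentages` is illustrative.

```python
# Approximate bar totals and percentage labels as read from the chart.
blocks = {
    "[0, 12)":  (6,  {"Green": 0.0,  "Blue": 16.7, "Yellow": 33.3, "Gray": 50.0}),
    "[12, 24)": (13, {"Green": 15.4, "Blue": 0.0,  "Yellow": 53.8, "Gray": 30.8}),
    "[24, 36)": (28, {"Green": 21.4, "Blue": 28.6, "Yellow": 46.4, "Gray": 3.6}),
    "[36, 48)": (19, {"Green": 47.4, "Blue": 10.5, "Yellow": 31.6, "Gray": 10.5}),
}


def counts_from_percentages(total, percentages):
    """Convert percentage labels into whole-head counts for a bar of `total` heads."""
    return {name: round(total * pct / 100) for name, pct in percentages.items()}


for block, (total, pcts) in blocks.items():
    counts = counts_from_percentages(total, pcts)
    # The reading is self-consistent if the segment counts sum to the bar total.
    assert sum(counts.values()) == total, block
    print(block, counts)
```

Because every block passes the consistency check, the percentage labels and the estimated bar heights agree with each other, which supports the totals of 6, 13, 28, and 19.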

### Key Observations

* **Total Head Count Trend:** The number of heads shown per layer block is not constant. It rises from about 6 in the first block to a peak of about 28 in the third, then falls to about 19 in the final block.

* **Category Trends:**

  * **Green Segment:** Rises steadily across layers, from 0.0% in the first block to 15.4%, 21.4%, and finally 47.4% in the last block, where it is the dominant category.

  * **Yellow Segment:** Is the most prevalent category in the middle two blocks (53.8% and 46.4%) and is lower in the first and last blocks (33.3% and 31.6%).

  * **Blue Segment:** Exhibits a volatile pattern. It is present in the first block (16.7%), absent in the second, peaks in the third (28.6%), and drops to 10.5% in the fourth.

  * **Gray Segment:** Shows a general downward trend, falling from 50.0% in the first block to 3.6% in the third, with a partial rebound to 10.5% in the fourth.

* **Notable Anomaly:** The second layer block ([12, 24)) is the only one where the Blue category is completely absent (0.0%).
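The observations above can be checked mechanically. The following sketch, using the percentage labels as read from the chart (the helper names are illustrative), finds the largest category in each block and tests whether the Green and Gray shares change monotonically with depth.

```python
# Percentage labels per layer block, as read from the chart.
shares = {
    "[0, 12)":  {"Green": 0.0,  "Blue": 16.7, "Yellow": 33.3, "Gray": 50.0},
    "[12, 24)": {"Green": 15.4, "Blue": 0.0,  "Yellow": 53.8, "Gray": 30.8},
    "[24, 36)": {"Green": 21.4, "Blue": 28.6, "Yellow": 46.4, "Gray": 3.6},
    "[36, 48)": {"Green": 47.4, "Blue": 10.5, "Yellow": 31.6, "Gray": 10.5},
}


def series(category):
    """Return one category's share in each block, in layer order."""
    return [pcts[category] for pcts in shares.values()]


def is_increasing(values):
    return all(a <= b for a, b in zip(values, values[1:]))


def is_decreasing(values):
    return all(a >= b for a, b in zip(values, values[1:]))


# Largest category in each block.
dominant = {block: max(pcts, key=pcts.get) for block, pcts in shares.items()}
for block, category in dominant.items():
    print(f"{block}: {category} ({shares[block][category]}%)")

print("Green rises monotonically:", is_increasing(series("Green")))
print("Gray falls monotonically:", is_decreasing(series("Gray")))

# Blocks in which some category is entirely absent.
absent = [(block, name) for block, pcts in shares.items()
          for name, pct in pcts.items() if pct == 0.0]
print("Absent categories:", absent)
```

On these readings, Green rises monotonically while Gray does not fall monotonically, since it rebounds in the last block. The absence check also shows that Green is missing from the first block, alongside the Blue gap in the second.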

### Interpretation

This chart provides a structural analysis of the GPT-2 xl transformer model, specifically examining the functional specialization of its multi-head attention layers. The data suggests a **progression of role specialization from early to late layers**:

1. **Early Layers ([0, 12)):** Dominated by the "Gray" category (50%), with a significant "Yellow" component. This suggests these layers may handle more fundamental or general syntactic processing.

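2. **Middle Layers ([12, 24) & [24, 36)):** Both blocks are dominated by the "Yellow" category (53.8% and 46.4%). The third block has the highest head count of any block (about 28), whereas the second (about 13) is smaller than the final block (about 19). The third block also sees a major rise in the "Blue" category, from 0.0% to 28.6%. This suggests that the middle layers, particularly [24, 36), may do much of the model's core computation, likely handling complex, integrated features of the input.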

3. **Late Layers ([36, 48)):** The "Green" category becomes dominant (47.4%), while "Yellow" and "Blue" recede (to 31.6% and 10.5%) and "Gray" rises slightly (from 3.6% to 10.5%). This points to a shift in function in the final layers, possibly towards task-specific output formatting, final prediction, or a distinct type of contextual integration.

The absence of the "Blue" category in the second block is notable but should be read cautiously. GPT-2's layers all share the same architecture, so the gap cannot be an architectural design choice; it may reflect a phase in the model's processing where that type of attention is not required, or simply the small number of heads counted in that block (about 13). Overall, the chart illustrates that attention heads in a large language model are not uniform; they are heterogeneous, and their functional composition changes systematically through the network's depth.