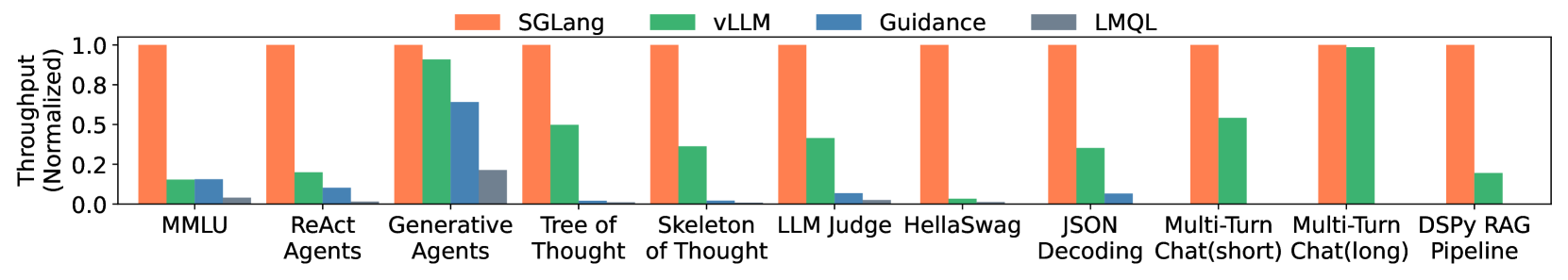

# Technical Analysis of Throughput Chart

## Axis Labels

- **X-Axis**: Workloads (tasks)
  - MMLU
  - ReAct Agents
  - Generative Agents
  - Tree of Thought
  - Skeleton of Thought
  - LLM Judge
  - HellaSwag
  - JSON
  - Multi-Turn Chat (short)
  - Multi-Turn Chat (long)
  - DSPy RAG Pipeline
- **Y-Axis**: Throughput (normalized)
  - Scale: 0.0 to 1.0, in increments of 0.2
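The chart does not say how throughput was normalized. One common convention is to divide each system's raw throughput by the highest raw throughput recorded for that workload, so the fastest system lands at 1.0. That is consistent with SGLang sitting at 1.0 in most categories. The sketch below assumes this per-workload max normalization. The function name `normalize` and the raw numbers in the example are hypothetical. They were chosen only so the output mirrors the approximate MMLU readings, and they are not data from the chart.

```python
def normalize(raw: dict[str, float]) -> dict[str, float]:
    """Scale raw throughputs so the fastest system in one workload maps to 1.0.

    Assumption: per-workload max normalization. The chart itself does not
    document its normalization scheme.
    """
    best = max(raw.values())
    if best <= 0:
        # No system produced any throughput; avoid dividing by zero.
        return {system: 0.0 for system in raw}
    return {system: value / best for system, value in raw.items()}


# Hypothetical raw throughputs for a single workload (not chart data).
raw_example = {"SGLang": 40.0, "vLLM": 6.0, "Guidance": 6.0, "LMQL": 2.0}
print(normalize(raw_example))
```

Under this assumption, a bar at 0.15 means "15% of the fastest system's throughput on that workload." Bars in different workloads are therefore not directly comparable in absolute terms.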

## Legend

- **Colors and Labels**:
  - Orange: SGLang
  - Green: vLLM
  - Blue: Guidance
  - Gray: LMQL

## Key Trends and Data Points

1. **SGLang (Orange)**:
   - Highest throughput in nearly all categories.
   - Reaches 1.0 in most tasks (e.g., MMLU, Generative Agents, Multi-Turn Chat (long)).
2. **vLLM (Green)**:
   - Second-highest throughput in most categories, though on MMLU it only ties Guidance (~0.15 each).
   - Notable exceptions:
     - HellaSwag: near 0.0.
     - JSON: moderate (~0.3).
3. **Guidance (Blue)**:
   - Low throughput in most tasks, e.g., MMLU (~0.15) and Tree of Thought.
   - Exception: Generative Agents (~0.7).
4. **LMQL (Gray)**:
   - Lowest throughput across all categories. It ties Guidance where both read 0.0 (Multi-Turn Chat (long), DSPy RAG Pipeline).
   - Rarely exceeds 0.2. Its highest reading among the categories detailed below is ~0.2 in Generative Agents.

## Category-Specific Observations

- **MMLU**:
  - SGLang: ~1.0
  - vLLM: ~0.15
  - Guidance: ~0.15
  - LMQL: ~0.05
- **Generative Agents**:
  - SGLang: ~1.0
  - vLLM: ~0.9
  - Guidance: ~0.7
  - LMQL: ~0.2
- **Multi-Turn Chat (long)**:
  - SGLang: ~1.0
  - vLLM: ~0.6
  - Guidance: 0.0
  - LMQL: 0.0
- **DSPy RAG Pipeline**:
  - SGLang: ~1.0
  - vLLM: ~0.15
  - Guidance: 0.0
  - LMQL: 0.0
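If every bar in a category shares one normalization constant, such as that category's fastest raw throughput, then the ratio of two normalized readings equals the ratio of the underlying raw throughputs. The sketch below encodes the approximate readings above in a hypothetical `READINGS` table and computes SGLang's speedup over each baseline. A reading of 0.0 leaves the ratio undefined, so it is reported as `None`. Because values like ~0.15 are eyeballed from the chart, the resulting ratios carry large uncertainty.

```python
from typing import Optional

# Approximate normalized throughputs read off the chart (eyeballed, not measured).
READINGS: dict[str, dict[str, float]] = {
    "MMLU": {"SGLang": 1.0, "vLLM": 0.15, "Guidance": 0.15, "LMQL": 0.05},
    "Generative Agents": {"SGLang": 1.0, "vLLM": 0.9, "Guidance": 0.7, "LMQL": 0.2},
    "Multi-Turn Chat (long)": {"SGLang": 1.0, "vLLM": 0.6, "Guidance": 0.0, "LMQL": 0.0},
    "DSPy RAG Pipeline": {"SGLang": 1.0, "vLLM": 0.15, "Guidance": 0.0, "LMQL": 0.0},
}


def speedup(category: str, system: str, baseline: str) -> Optional[float]:
    """Ratio of two normalized readings within one category.

    Assuming a shared per-category normalization constant, this equals the
    ratio of raw throughputs. Returns None when the baseline reads 0.0.
    """
    base = READINGS[category][baseline]
    if base == 0.0:
        return None
    return READINGS[category][system] / base


for category in READINGS:
    ratios = {b: speedup(category, "SGLang", b) for b in ("vLLM", "Guidance", "LMQL")}
    # Render undefined ratios as "n/a" and the rest with one decimal place.
    formatted = {b: ("n/a" if r is None else f"{r:.1f}x") for b, r in ratios.items()}
    print(category, formatted)
```

For MMLU, these readings imply roughly 6.7x over vLLM and 20x over LMQL. A small misreading of a short bar (e.g., 0.10 instead of 0.15) moves such ratios a lot, so treat them as orders of magnitude.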

## General Observations

- **SGLang Dominance**: Highest throughput of the four systems in nearly all tasks.
- **vLLM Variability**: Usually second, but its throughput varies widely by task, from ~0.9 in Generative Agents to near 0.0 in HellaSwag.
- **Guidance and LMQL**: Low throughput in most tasks. Guidance outperforms LMQL in some tasks, most clearly Generative Agents (~0.7 vs. ~0.2).
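These observations can be checked mechanically, but only against the four categories with explicit readings. The sketch below ranks systems per category and groups exact ties into one tier. It also lists the categories where one system reads strictly higher than another. The helpers `ranking` and `wins` are hypothetical names for illustration. Readings that look equal on the chart, such as vLLM and Guidance at ~0.15 on MMLU, are treated as ties.

```python
# Approximate normalized throughputs read off the chart (eyeballed, not measured).
READINGS: dict[str, dict[str, float]] = {
    "MMLU": {"SGLang": 1.0, "vLLM": 0.15, "Guidance": 0.15, "LMQL": 0.05},
    "Generative Agents": {"SGLang": 1.0, "vLLM": 0.9, "Guidance": 0.7, "LMQL": 0.2},
    "Multi-Turn Chat (long)": {"SGLang": 1.0, "vLLM": 0.6, "Guidance": 0.0, "LMQL": 0.0},
    "DSPy RAG Pipeline": {"SGLang": 1.0, "vLLM": 0.15, "Guidance": 0.0, "LMQL": 0.0},
}


def ranking(category: str) -> list[list[str]]:
    """Group systems into tiers from fastest to slowest; ties share a tier."""
    by_value: dict[float, list[str]] = {}
    for system, value in READINGS[category].items():
        by_value.setdefault(value, []).append(system)
    return [by_value[v] for v in sorted(by_value, reverse=True)]


def wins(a: str, b: str) -> list[str]:
    """Categories where system `a` reads strictly higher than system `b`."""
    return [c for c, vals in READINGS.items() if vals[a] > vals[b]]


for category in READINGS:
    print(category, ranking(category))
print("Guidance > LMQL in:", wins("Guidance", "LMQL"))
```

On these readings, Guidance beats LMQL only in MMLU and Generative Agents, and the two tie at 0.0 elsewhere. vLLM shares its second-place tier with Guidance on MMLU.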