## Diagram: Inference-Scaling and Learning-to-Reason Process Flow

### Overview

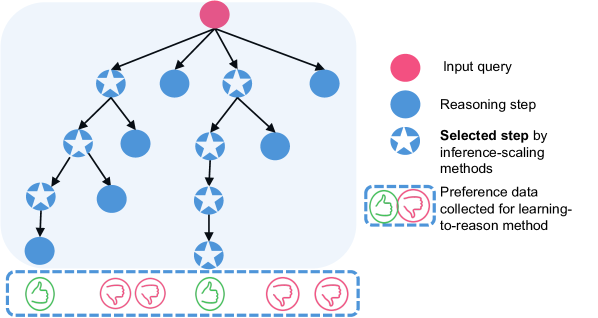

The image is a technical diagram illustrating a two-phase process for improving AI reasoning. It depicts a tree-structured reasoning process initiated by an input query, where certain steps are selected by "inference-scaling methods." The outcomes of these selected steps are then used to collect preference data, which feeds into a "learning-to-reason method." The diagram uses a legend to define its symbolic elements.

### Components

The diagram is composed of two main sections:

1. **Main Process Diagram (Left/Center):** A tree-like flowchart.

2. **Legend (Right):** A key explaining the symbols used in the diagram.

**Legend Content (Right Side, Top to Bottom):**

* **Pink Circle:** Labeled "Input query".

* **Blue Circle:** Labeled "Reasoning step".

* **Blue Circle with a White Star:** Labeled "**Selected step** by inference-scaling methods". The text "Selected step" is in bold.

* **Dashed Box containing a Green Thumbs-Up and a Red Thumbs-Down icon:** Labeled "Preference data collected for learning-to-reason method".

### Detailed Analysis

**Spatial Layout & Flow:**

* The process begins at the **top-center** with a single pink circle (Input query).

* From this input, arrows point downward to four initial blue circles (Reasoning steps), forming the first level of the tree.

* The tree expands downward with further branching. Some blue circles have a white star inside, indicating they are "Selected steps."

* The flow is hierarchical and branching, moving from the single input at the top to multiple potential reasoning paths below.

* At the **bottom** of the diagram, aligned horizontally, is a dashed box containing a sequence of preference data icons (thumbs-up/down). This box is positioned directly beneath the terminal nodes of the reasoning tree.

**Component Isolation & Symbol Mapping:**

* **Header/Top:** The single pink "Input query" node.

* **Main Chart/Center:** The reasoning tree. It contains:

    * **Unselected Reasoning Steps:** Plain blue circles.

    * **Selected Reasoning Steps:** Blue circles with a white star. These are scattered at various depths within the tree, not just at the leaves.

* **Footer/Bottom:** The "Preference data" collection box. It contains a specific sequence of icons: Green Thumbs-Up, Red Thumbs-Down, Red Thumbs-Down, Green Thumbs-Up, Red Thumbs-Down, Red Thumbs-Down. No arrows connect these icons to specific nodes above them, which suggests (but does not confirm) that the box represents aggregated or sampled feedback from the process rather than a one-to-one labeling of nodes.
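
The symbol mapping above can be captured in a small data structure. The following is a minimal sketch, not a reconstruction of the figure: the `Node` class, its field names, and the example tree are all hypothetical. The description only fixes that there is one input query, four first-level reasoning steps, and starred nodes at several depths; the rest of the topology is assumed for illustration.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class Node:
    """One circle in the diagram (hypothetical representation)."""
    label: str
    kind: str = "reasoning_step"  # "input_query" (pink) or "reasoning_step" (blue)
    selected: bool = False        # True for a blue circle with a white star
    children: List["Node"] = field(default_factory=list)


def selected_with_depth(root: Node) -> List[Tuple[str, int]]:
    """Return (label, depth) for every starred node, in pre-order."""
    found: List[Tuple[str, int]] = []
    stack = [(root, 0)]
    while stack:
        node, depth = stack.pop()
        if node.selected:
            found.append((node.label, depth))
        # Push children in reverse so they are visited left to right.
        for child in reversed(node.children):
            stack.append((child, depth + 1))
    return found


def build_example_tree() -> Node:
    """Assumed topology: four first-level steps, one starred path going deeper."""
    leaf = Node("s1.1.1", selected=True)
    mid = Node("s1.1", selected=True, children=[leaf, Node("s1.1.2")])
    first = Node("s1", selected=True, children=[mid, Node("s1.2")])
    return Node(
        "q",
        kind="input_query",
        children=[first, Node("s2"), Node("s3"), Node("s4")],
    )
```

Calling `selected_with_depth(build_example_tree())` lists the starred nodes together with their depths, which makes the point that selection is not confined to leaf nodes easy to check programmatically.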

### Key Observations

1. **Non-Linear Selection:** The "Selected steps" (starred nodes) are not exclusively at the end of a path. They appear at intermediate branching points, suggesting the inference-scaling method evaluates and selects promising reasoning steps *during* the process, not just final answers.

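2. **Preference Data Structure:** The preference data is presented as a discrete sequence of binary outcomes (thumbs-up/down), not as a continuous score. This is consistent with a binary or pairwise preference signal, in which preferred steps are contrasted with rejected ones, although the figure does not show how individual labels are paired.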

3. **Process Segmentation:** The diagram clearly separates the *exploration/execution* phase (the reasoning tree) from the *evaluation/learning* phase (the preference data collection). The dashed box around the preference data visually isolates it as a distinct output or dataset.
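
To make the second observation concrete, here is a minimal sketch of how binary thumbs-up/down labels could be turned into pairwise preference data. The pairing rule (every preferred item contrasted with every rejected item) is an assumption; the figure does not specify one. The function name `make_preference_pairs` and the step labels `"a"` to `"f"` are hypothetical.

```python
from itertools import product
from typing import List, Tuple


def make_preference_pairs(labeled: List[Tuple[str, bool]]) -> List[Tuple[str, str]]:
    """Turn (step, thumbs_up) labels into (chosen, rejected) pairs.

    Assumption: every thumbs-up step is paired with every thumbs-down step.
    """
    preferred = [step for step, thumbs_up in labeled if thumbs_up]
    rejected = [step for step, thumbs_up in labeled if not thumbs_up]
    return list(product(preferred, rejected))


# Labels in the same order as the icons in the dashed box:
# up, down, down, up, down, down. The step names are placeholders.
icon_sequence = [
    ("a", True), ("b", False), ("c", False),
    ("d", True), ("e", False), ("f", False),
]
pairs = make_preference_pairs(icon_sequence)
```

With two thumbs-up and four thumbs-down labels, this pairing rule yields eight (chosen, rejected) pairs. A different rule, such as pairing only adjacent icons, would produce a different dataset from the same labels.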

### Interpretation

This diagram models a **two-stage framework for enhancing AI reasoning capabilities**:

1. **Inference-Scaling Phase:** Given an input query, the system generates a diverse tree of potential reasoning steps. "Inference-scaling methods" (which could involve techniques like search algorithms, sampling, or heuristic evaluation) actively select the most promising steps at various points in this tree. This is akin to exploring a problem space and identifying the most fruitful paths.

2. **Learning-to-Reason Phase:** The outcomes or paths from the selected steps are used to generate "preference data" (e.g., determining which reasoning path led to a better answer). This data, represented by the thumbs-up/down icons, serves as a training signal. A "learning-to-reason method" (likely a training procedure applied to the model) would then use this preference data to improve the model's ability to produce or select good reasoning steps in the future, creating a feedback loop.
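
The two stages can be sketched as a single loop. This is an illustrative sketch under stated assumptions, not the method depicted in the figure: the level-by-level, keep-the-top-`keep` selection is a simple beam-search-like stand-in for "inference-scaling methods", and labeling kept steps as thumbs-up and dropped steps as thumbs-down is an assumed way of collecting preference data. The names `expand_and_select`, `toy_propose`, and `toy_score` are hypothetical; in practice a model would propose steps and a verifier or reward model would score them.

```python
from typing import Callable, List, Tuple


def expand_and_select(
    query: str,
    propose: Callable[[str], List[str]],
    score: Callable[[str], float],
    depth: int = 2,
    keep: int = 1,
) -> Tuple[List[str], List[Tuple[str, bool]]]:
    """Grow a reasoning tree level by level and label each candidate step.

    Returns (selected steps, labeled steps), where the label is True for a
    step that was kept (thumbs-up) and False for one that was dropped.
    """
    frontier = [query]
    selected: List[str] = []
    labeled: List[Tuple[str, bool]] = []
    for _ in range(depth):
        # Stage 1a: propose child steps for every node on the frontier.
        candidates = [step for parent in frontier for step in propose(parent)]
        # Stage 1b: select the highest-scoring steps (the "starred" nodes).
        ranked = sorted(candidates, key=score, reverse=True)
        kept, dropped = ranked[:keep], ranked[keep:]
        selected.extend(kept)
        # Stage 2 input: record binary preference labels for later training.
        labeled.extend((step, True) for step in kept)
        labeled.extend((step, False) for step in dropped)
        frontier = kept
    return selected, labeled


def toy_propose(parent: str) -> List[str]:
    """Placeholder generator: two children per step."""
    return [f"{parent}/a", f"{parent}/b"]


def toy_score(step: str) -> float:
    """Placeholder scorer: prefers steps containing more 'a' segments."""
    return float(step.count("a"))


steps, labels = expand_and_select("q", toy_propose, toy_score, depth=2, keep=1)
```

The returned `labels` list plays the role of the dashed preference-data box: it is a dataset separate from the tree itself, which a learning-to-reason method could then consume.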

**The core insight** is that the system doesn't just generate one answer; it generates a structured exploration of possibilities, uses a selection mechanism to focus on the best parts of that exploration, and then uses the results of that focused exploration to train itself to be better at the selection process next time. This represents a move from static reasoning to an iterative, self-improving reasoning cycle.