## Dual-Axis Line Chart: Comparison of Accuracy Metrics Across Methods

### Overview

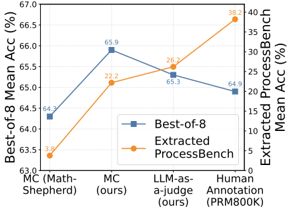

The image displays a dual-axis line chart comparing two accuracy metrics—"Best-of-8 Mean Acc (%)" and "Extracted ProcessBench Mean Acc (%)"—across three different methods or evaluation approaches. The chart illustrates how performance changes between a baseline method and two proposed methods ("ours").

### Components/Axes

* **Chart Type:** Dual-axis line chart with markers.
* **X-Axis (Categorical):** Lists three methods.
    * Label 1 (Left): `MC (Math-Shepherd)`
    * Label 2 (Center): `MC (ours)`
    * Label 3 (Right): `LLM-as-Judge (ours)`
* **Primary Y-Axis (Left):**
    * Title: `Best-of-8 Mean Acc (%)`
    * Scale: Linear, ranging from 63.0 to 67.0, with major ticks at 0.5% intervals.
* **Secondary Y-Axis (Right):**
    * Title: `Extracted ProcessBench Mean Acc (%)`
    * Scale: Linear, ranging from 0 to 40, with major ticks at 5% intervals.
* **Legend:** Positioned at the bottom center of the chart area.
    * Entry 1: `Best-of-8` - Represented by a blue line with square markers.
    * Entry 2: `Extracted ProcessBench` - Represented by an orange line with circular markers.
* **Data Points (Approximate Values):**
    * **Best-of-8 (Blue Line, Left Axis):**
        * At `MC (Math-Shepherd)`: ~64.3%
        * At `MC (ours)`: ~65.9%
        * At `LLM-as-Judge (ours)`: ~64.9%
    * **Extracted ProcessBench (Orange Line, Right Axis):**
        * At `MC (Math-Shepherd)`: ~63.3%
        * At `MC (ours)`: ~65.2%
        * At `LLM-as-Judge (ours)`: ~66.7%

### Detailed Analysis

The chart plots two distinct performance trends across the three evaluated methods.

1. **Best-of-8 Accuracy (Blue Line, Left Axis):** This series shows an initial increase followed by a smaller decrease.
    * The line slopes upward from `MC (Math-Shepherd)` (~64.3%) to `MC (ours)` (~65.9%), a gain of approximately 1.6 percentage points.
    * It then slopes downward from `MC (ours)` to `LLM-as-Judge (ours)` (~64.9%), a decrease of about 1.0 percentage point.
    * **Trend:** Inverted-V shape, peaking at the central method.

2. **Extracted ProcessBench Accuracy (Orange Line, Right Axis):** This series shows a consistent upward trend.
    * The line slopes upward from `MC (Math-Shepherd)` (~63.3%) to `MC (ours)` (~65.2%), a gain of approximately 1.9 percentage points.
    * It continues upward, slightly less steeply, from `MC (ours)` to `LLM-as-Judge (ours)` (~66.7%), a further gain of about 1.5 percentage points.
    * **Trend:** Consistently ascending line.
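The percentage-point changes quoted above can be checked with a few lines of Python. This is only a sketch: the values are the approximate readings listed in this description, not exact figures from the underlying data, and the names `SERIES`, `step_deltas`, and `trend_shape` are hypothetical helpers introduced for illustration.

```python
# Approximate readings taken from this description (not exact source data).
METHODS = ["MC (Math-Shepherd)", "MC (ours)", "LLM-as-Judge (ours)"]
SERIES = {
    "Best-of-8": [64.3, 65.9, 64.9],
    "Extracted ProcessBench": [63.3, 65.2, 66.7],
}


def step_deltas(values):
    """Return the change between consecutive points, in percentage points."""
    return [round(b - a, 1) for a, b in zip(values, values[1:])]


def trend_shape(deltas):
    """Label a three-point series by the signs of its two step changes."""
    if all(d > 0 for d in deltas):
        return "ascending"
    if all(d < 0 for d in deltas):
        return "descending"
    if deltas[0] > 0 and deltas[-1] < 0:
        return "inverted-V"
    return "mixed"


for name, values in SERIES.items():
    deltas = step_deltas(values)
    print(f"{name}: deltas={deltas}, trend={trend_shape(deltas)}")
```

Under these assumed readings, the Best-of-8 series yields step changes of +1.6 and -1.0, and the Extracted ProcessBench series yields +1.9 and +1.5, matching the figures in the list above.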

### Key Observations

* **Diverging Final Performance:** While both metrics improve from the baseline (`MC (Math-Shepherd)`) to the first proposed method (`MC (ours)`), their paths diverge at the final method (`LLM-as-Judge (ours)`). Best-of-8 accuracy dips slightly, whereas Extracted ProcessBench accuracy continues to rise.

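* **Scale Disparity:** The two axes cover very different ranges (0-40% on the right vs. 63-67% on the left), which is why the chart uses a dual-axis format. If the right-axis range is read correctly, Extracted ProcessBench values are far lower in absolute terms than Best-of-8 values; the approximate ProcessBench readings listed in this description (63.3-66.7%) fall outside that 0-40% range, so they should be treated with caution.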

* **Peak Performance Points:** The highest value for Best-of-8 is achieved by `MC (ours)`. The highest value for Extracted ProcessBench is achieved by `LLM-as-Judge (ours)`.
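As a small illustration of the peak-performance observation, the best method per metric can be picked out programmatically. This sketch reuses the approximate readings from this description; `peak_method` is a hypothetical helper, not part of any library.

```python
# Approximate readings taken from this description (not exact source data).
methods = ["MC (Math-Shepherd)", "MC (ours)", "LLM-as-Judge (ours)"]
best_of_8 = [64.3, 65.9, 64.9]
process_bench = [63.3, 65.2, 66.7]


def peak_method(method_names, values):
    """Return the method name whose value is the largest in the series."""
    best_index = max(range(len(values)), key=values.__getitem__)
    return method_names[best_index]


print("Best-of-8 peak:", peak_method(methods, best_of_8))
print("Extracted ProcessBench peak:", peak_method(methods, process_bench))
```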

### Interpretation

This chart likely compares different methods for producing step-level supervision in mathematical reasoning. "MC" most likely stands for Monte Carlo estimation, the automatic step-labeling approach used by Math-Shepherd, rather than multiple choice. The "ours" labels indicate methods proposed by the authors.

The data suggests that the authors' methods (`MC (ours)` and `LLM-as-Judge (ours)`) generally improve performance over the baseline (`MC (Math-Shepherd)`) on both metrics. However, the nature of the improvement differs:

* The `MC (ours)` method provides a balanced boost to both metrics: roughly +1.6 points on "Best-of-8" (which presumably measures final-answer accuracy when the best of eight sampled responses is selected) and roughly +1.9 points on "Extracted ProcessBench" (which likely evaluates how well errors in the reasoning steps are identified).

* The `LLM-as-Judge (ours)` method shows a trade-off: it yields the best performance on the process-oriented metric (Extracted ProcessBench) but results in a slight regression on the final answer accuracy metric (Best-of-8) compared to `MC (ours)`.

This could imply that using an LLM as a judge is particularly effective for evaluating or improving the reasoning process itself, but this focus might come at a minor cost to the optimization of the final answer selection in a "best-of-N" setting. The chart effectively argues that the authors' contributions improve upon the baseline, with different methods excelling on different evaluation criteria.