# Technical Document: LLM Function Calling and Conversation Architecture

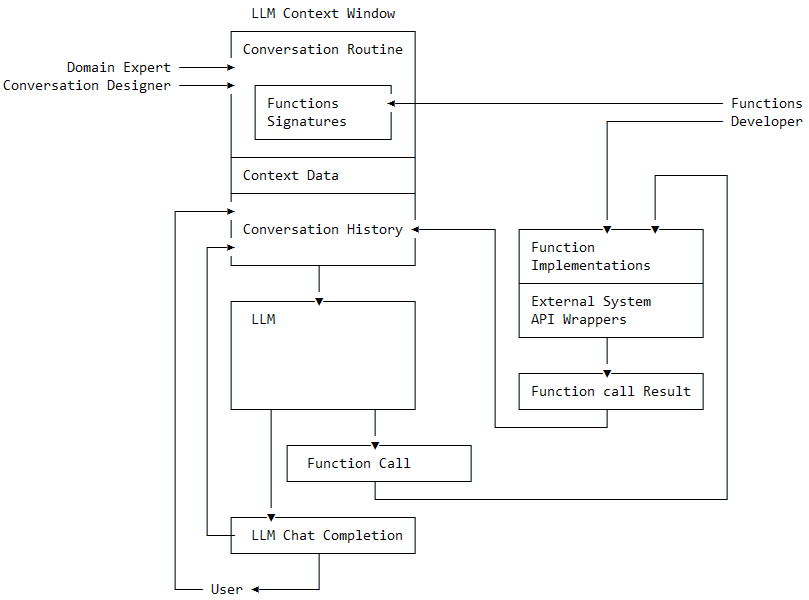

This document transcribes and analyzes the architectural diagram above, which outlines how information flows between a Large Language Model (LLM), domain experts, conversation designers, developers, and end users in a function-calling framework.

## 1. Component Isolation

The diagram is divided into three primary functional regions, plus a set of external actors who supply or consume information:

* **Region A: LLM Context Window (Top Left):** Defines the static and dynamic data provided to the model.

* **Region B: Execution & Processing (Center/Bottom):** The core LLM logic and output generation.

* **Region C: Backend Implementation (Right):** The technical infrastructure supporting function execution.

---

## 2. Detailed Component Transcription

### Region A: LLM Context Window

This is the primary container for the model's immediate memory and instructions.

* **Header Label:** `LLM Context Window`

* **Sub-components:**

  * **Conversation Routine:** The top-level instructional framework.

    * **Nested Box:** `Functions Signatures` (The definitions of available tools).

  * **Context Data:** Static or retrieved information relevant to the session.

  * **Conversation History:** The log of previous turns in the dialogue.
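To make the contents of the context window concrete, here is a minimal Python sketch that assembles them into a chat-style message list. The class name `ContextWindow`, the `get_order_status` signature, and the role/content message format are illustrative assumptions loosely modeled on common chat APIs, not taken from the diagram. Many real providers accept function signatures as a separate request parameter rather than inside the instruction text.

```python
import json
from dataclasses import dataclass, field


@dataclass
class ContextWindow:
    """Hypothetical container mirroring Region A of the diagram."""

    conversation_routine: str  # instructions from domain experts and designers
    function_signatures: list[dict]  # JSON-Schema-style tool definitions
    context_data: str = ""  # static or retrieved session information
    history: list[dict] = field(default_factory=list)  # prior turns

    def to_messages(self) -> list[dict]:
        """Flatten the window into a chat-style message list (assumed format).

        The signatures are embedded in the routine text, echoing the diagram,
        which draws Functions Signatures as a box nested in the routine.
        """
        system_text = self.conversation_routine
        system_text += "\n\nAvailable functions:\n" + json.dumps(
            self.function_signatures, indent=2
        )
        if self.context_data:
            system_text += "\n\nContext data:\n" + self.context_data
        return [{"role": "system", "content": system_text}, *self.history]


# Example: one hypothetical signature and a single user turn in the history.
window = ContextWindow(
    conversation_routine="You are an order-support assistant. Confirm the order ID before answering.",
    function_signatures=[{
        "name": "get_order_status",
        "description": "Look up the shipping status of an order.",
        "parameters": {
            "type": "object",
            "properties": {"order_id": {"type": "string"}},
            "required": ["order_id"],
        },
    }],
    context_data="Support hours: 09:00-17:00.",
    history=[{"role": "user", "content": "Where is order 42?"}],
)
messages = window.to_messages()
```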

### Region B: Execution & Processing

* **LLM:** The model at the center of the diagram. It reads the context window and decides which output to produce.

* **Function Call:** A structured request (a function name plus arguments) that the LLM emits when it determines a tool is needed.

* **LLM Chat Completion:** The final natural language response generated for the user.
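The two output types in Region B can be sketched as a small tagged union plus a parser. The reply shape assumed by the hypothetical `parse_llm_output` (a `content` field and an optional `function_call` whose `arguments` is a JSON-encoded string) is an assumption for illustration; a given model provider's actual format may differ.

```python
import json
from dataclasses import dataclass
from typing import Union


@dataclass
class FunctionCall:
    """Action path: the model asks for a tool to be run."""
    name: str
    arguments: dict


@dataclass
class ChatCompletion:
    """Direct path: natural-language text for the user."""
    text: str


LLMOutput = Union[FunctionCall, ChatCompletion]


def parse_llm_output(raw: dict) -> LLMOutput:
    """Classify a raw model reply into one of the two output types.

    Assumed reply shape: {"content": str or None,
    "function_call": {"name": str, "arguments": str}, optional}.
    """
    call = raw.get("function_call")
    if call is not None:
        args = call.get("arguments", "{}")
        # Arguments often arrive as a JSON-encoded string; decode if needed.
        if isinstance(args, str):
            args = json.loads(args)
        return FunctionCall(name=call["name"], arguments=args)
    return ChatCompletion(text=raw.get("content") or "")


action = parse_llm_output(
    {"content": None,
     "function_call": {"name": "get_order_status", "arguments": '{"order_id": "42"}'}}
)
direct = parse_llm_output({"content": "Happy to help!"})
```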

### Region C: Backend Implementation

* **Function Implementations:** The actual code (e.g., Python/JavaScript) that executes the logic.

* **External System API Wrappers:** The interface used to communicate with outside services.

* **Function call Result:** The data returned after the function has been executed.
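A minimal sketch of how Region C's three pieces might relate, assuming a hypothetical order-tracking service: `OrderSystemClient` plays the External System API Wrapper, `get_order_status` the Function Implementation, and `to_result_message` turns the returned dict into a Function call Result entry for the history. All names and the `function` message role are assumptions for illustration.

```python
import json
from typing import Optional


class OrderSystemClient:
    """Hypothetical External System API Wrapper.

    A real wrapper would handle HTTP calls, authentication, and retries; this
    stand-in reads from an in-memory dict so the example runs offline.
    """

    def __init__(self, orders: dict[str, str]) -> None:
        self._orders = orders

    def fetch_status(self, order_id: str) -> Optional[str]:
        return self._orders.get(order_id)


def get_order_status(client: OrderSystemClient, order_id: str) -> dict:
    """Function Implementation: wraps the API call with business logic."""
    status = client.fetch_status(order_id)
    if status is None:
        # Report failures as data so the LLM can explain them to the user.
        return {"ok": False, "error": f"order {order_id} not found"}
    return {"ok": True, "order_id": order_id, "status": status}


def to_result_message(name: str, result: dict) -> dict:
    """Package a Function call Result as a history entry (assumed format)."""
    return {"role": "function", "name": name, "content": json.dumps(result)}


client = OrderSystemClient({"42": "shipped"})
found = get_order_status(client, "42")
missing = get_order_status(client, "7")
message = to_result_message("get_order_status", found)
```

Returning errors as ordinary result data, rather than raising, is one design choice: it lets the model see the failure in the history and phrase it for the user.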

### External Actors (Input/Output)

* **Domain Expert:** Provides input to the Conversation Routine.

* **Conversation Designer:** Provides input to the Conversation Routine.

* **Functions Developer:** Provides input to both the `Functions Signatures` and the `Function Implementations`.

* **User:** The end user, who receives the `LLM Chat Completion` and whose replies are added back into the `Conversation History`.

---

## 3. Flow and Logic Analysis

The diagram describes a design and development phase that sets up the system, followed by an execution phase that runs as a loop:

### Design & Development Phase

1. **Domain Experts** and **Conversation Designers** define the **Conversation Routine**.

2. The **Functions Developer** defines the **Functions Signatures** (what the LLM sees) and writes the **Function Implementations** (what the server executes).
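One way a Functions Developer might keep the two artifacts in step is a registry that stores each signature next to its implementation, so the list sent to the model and the code the server runs come from one source. The sketch below is hypothetical (`FunctionRegistry` and its methods are not from the diagram) and omits validating arguments against the schema.

```python
from typing import Any, Callable


class FunctionRegistry:
    """Hypothetical registry pairing what the LLM sees with what the server runs."""

    def __init__(self) -> None:
        self._signatures: dict[str, dict] = {}
        self._impls: dict[str, Callable[..., Any]] = {}

    def register(self, signature: dict, impl: Callable[..., Any]) -> None:
        """Store a signature and its implementation under the same name."""
        name = signature["name"]
        if name in self._impls:
            raise ValueError(f"duplicate function name: {name}")
        self._signatures[name] = signature
        self._impls[name] = impl

    def signatures(self) -> list[dict]:
        """Definitions to place in the context window."""
        return list(self._signatures.values())

    def call(self, name: str, arguments: dict) -> Any:
        """Look up and run the implementation named in a function call."""
        if name not in self._impls:
            raise KeyError(f"unknown function: {name}")
        return self._impls[name](**arguments)


def add(a: int, b: int) -> int:
    return a + b


registry = FunctionRegistry()
registry.register(
    {
        "name": "add",
        "description": "Add two integers.",
        "parameters": {
            "type": "object",
            "properties": {"a": {"type": "integer"}, "b": {"type": "integer"}},
            "required": ["a", "b"],
        },
    },
    add,
)
```

Keying both maps by the signature's `name` means any function the LLM can request has exactly one implementation; a production version would also check `arguments` against the `parameters` schema before calling.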

### Execution Phase (The Loop)

1. **Input:** The **LLM** receives the full **LLM Context Window** (Routine, Signatures, Context Data, and History).

2. **Decision:** The **LLM** produces one of two types of output:

   * **Direct Path:** It generates an **LLM Chat Completion** directly.

   * **Action Path:** It generates a **Function Call**.

3. **Function Execution:**

   * The **Function Call** triggers the matching code in **Function Implementations**.

   * The implementation reaches outside services through the **External System API Wrappers**.

   * The data it returns is captured as the **Function call Result**.

4. **Feedback Loop:**

   * The **Function call Result** is fed back into the **Conversation History**, so the **LLM** can use it on its next pass.

   * The **LLM Chat Completion** is sent to the **User**.

   * The **User's** subsequent response is fed back into the **Conversation History**, restarting the cycle.
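The execution loop can be sketched end to end with a scripted stand-in for the model. `fake_llm`, `IMPLEMENTATIONS`, and `run_turn` are hypothetical names, the message format is assumed, and the context window is reduced to a system message plus history for brevity. The `max_steps` cap is a design choice that guards against the model requesting function calls indefinitely.

```python
import json
from typing import Callable


def fake_llm(messages: list[dict]) -> dict:
    """Hypothetical stand-in for the model.

    Requests a function call until a function result is the latest message,
    then answers in natural language using that result.
    """
    last = messages[-1]
    if last["role"] == "function":
        status = json.loads(last["content"])["status"]
        return {"role": "assistant", "content": f"Your order is {status}."}
    return {
        "role": "assistant",
        "content": None,
        "function_call": {
            "name": "get_order_status",
            "arguments": json.dumps({"order_id": "42"}),
        },
    }


# Function Implementations, keyed by the names used in the signatures.
IMPLEMENTATIONS: dict[str, Callable[..., dict]] = {
    "get_order_status": lambda order_id: {"order_id": order_id, "status": "shipped"},
}


def run_turn(history: list[dict], user_text: str, llm=fake_llm, max_steps: int = 5) -> str:
    """Run one user turn of the execution loop; mutates history in place."""
    history.append({"role": "user", "content": user_text})
    for _ in range(max_steps):
        reply = llm(history)
        history.append(reply)
        call = reply.get("function_call")
        if call is None:
            return reply["content"]  # Direct path: chat completion for the user
        # Action path: run the implementation, feed the result back into history.
        result = IMPLEMENTATIONS[call["name"]](**json.loads(call["arguments"]))
        history.append(
            {"role": "function", "name": call["name"], "content": json.dumps(result)}
        )
    raise RuntimeError("too many consecutive function calls")


history: list[dict] = [{"role": "system", "content": "You are an order-support assistant."}]
answer = run_turn(history, "Where is order 42?")
```

Calling `run_turn` again with the user's next message appends it to the same `history`, which restarts the cycle as step 4 describes.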

---

## 4. Summary of Textual Elements

| Category | Extracted Text |
| :--- | :--- |
| **Main Containers** | LLM Context Window, LLM |
| **Context Elements** | Conversation Routine, Functions Signatures, Context Data, Conversation History |
| **Output Elements** | Function Call, LLM Chat Completion, Function call Result |
| **Backend Elements** | Function Implementations, External System API Wrappers |
| **Actors** | Domain Expert, Conversation Designer, Functions Developer, User |