## Scatter Plot Series: Model Accuracy vs. Thinking Length

### Overview

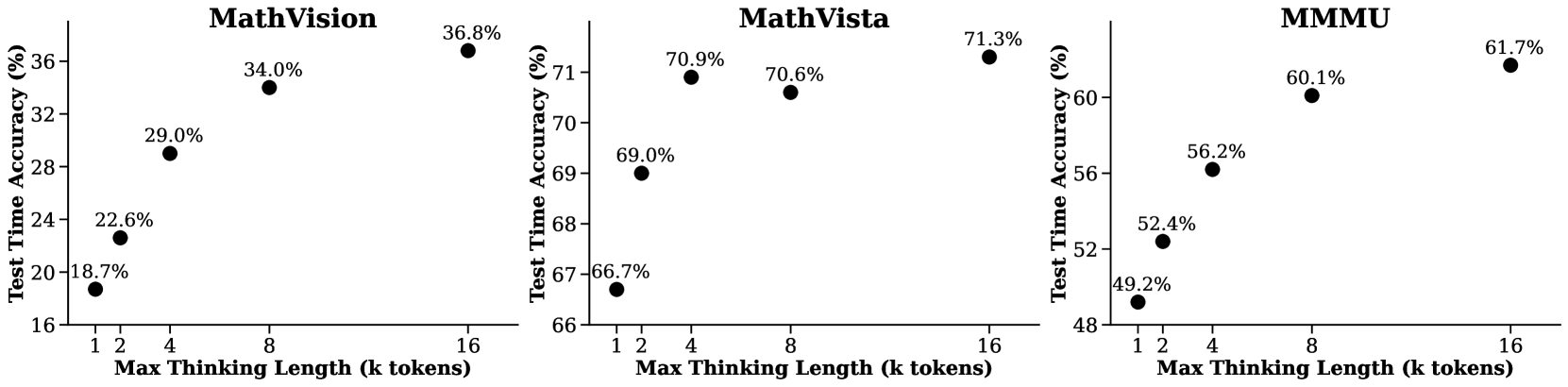

The image displays three separate scatter plots arranged horizontally. Each plot illustrates the relationship between a model's "Max Thinking Length" (in thousands of tokens) and its "Test Time Accuracy" (as a percentage) on a specific benchmark. The benchmarks are, from left to right: **MathVision**, **MathVista**, and **MMMU**. All plots share the same x-axis label and scale but have different y-axis scales and data ranges.

### Components/Axes

* **Titles:** Each plot has a bold, centered title at the top: "MathVision", "MathVista", "MMMU".

* **X-Axis (Common):** Labeled "Max Thinking Length (k tokens)". The axis has discrete tick marks at the values: 1, 2, 4, 8, and 16.

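* **Y-Axis (Per Plot):** Labeled "Test Time Accuracy (%)". The scale and range differ for each plot:

    * **MathVision:** Major ticks run from 16% to 36% (16, 20, 24, 28, 32, 36); the highest annotated value (36.8%) lies slightly above the top tick.

    * **MathVista:** Major ticks run from 66% to 71% (66, 67, 68, 69, 70, 71); the highest annotated value (71.3%) lies slightly above the top tick.

    * **MMMU:** Major ticks run from 48% to 60% (48, 52, 56, 60); the highest annotated value (61.7%) lies slightly above the top tick.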

* **Data Series:** Each plot contains a single data series represented by black circular markers. Each marker is annotated with its precise percentage value.

### Detailed Analysis

**1. MathVision Plot (Left)**

* **Trend:** Shows a strong, positive, and roughly logarithmic trend. Each doubling of thinking length adds several points (+3.9, +6.4, +5.0, +2.8), with the largest gain between 2k and 4k tokens and the smallest at the final doubling. Measured per additional token, the rate of improvement slows steadily.

* **Data Points:**

    * At 1k tokens: 18.7%

    * At 2k tokens: 22.6%

    * At 4k tokens: 29.0%

    * At 8k tokens: 34.0%

    * At 16k tokens: 36.8%
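As a rough check on the "roughly logarithmic" description, the MathVision values can be fitted against the base-2 logarithm of the thinking length. This is a minimal sketch, assuming the annotated values above are transcribed correctly; `fit_log2` is an illustrative helper and not part of the figure's source.

```python
import math

# Values transcribed from the MathVision panel (k tokens -> accuracy in %).
tokens_k = [1, 2, 4, 8, 16]
accuracy = [18.7, 22.6, 29.0, 34.0, 36.8]


def fit_log2(xs, ys):
    """Ordinary least-squares fit of y = intercept + slope * log2(x).

    Returns (slope, intercept, r_squared). Illustrative helper only.
    """
    logs = [math.log2(x) for x in xs]
    n = len(logs)
    mean_x = sum(logs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in logs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(logs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(logs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return slope, intercept, 1 - ss_res / ss_tot


slope, intercept, r_squared = fit_log2(tokens_k, accuracy)
print(f"gain per doubling: {slope:.2f} points, R^2: {r_squared:.3f}")
```

With these five points the fitted slope is about 4.8 points per doubling and R² is about 0.99, which is consistent with a log-like trend. Five points are far too few to distinguish it from other saturating curves.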

**2. MathVista Plot (Center)**

* **Trend:** Shows a positive trend that plateaus after 4k tokens. Accuracy rises 4.2 points from 1k to 4k tokens but only 0.4 points from 4k to 16k.

* **Data Points:**

    * At 1k tokens: 66.7%

    * At 2k tokens: 69.0%

    * At 4k tokens: 70.9%

    * At 8k tokens: 70.6% (0.3 points below the 4k value)

    * At 16k tokens: 71.3%

**3. MMMU Plot (Right)**

* **Trend:** Shows a consistent positive trend that is nearly linear in the number of doublings up to 8k tokens (+3.2, +3.8, +3.9 points per doubling). The final doubling to 16k adds only 1.6 points, so the steady rate does not hold across the full range.

* **Data Points:**

    * At 1k tokens: 49.2%

    * At 2k tokens: 52.4%

    * At 4k tokens: 56.2%

    * At 8k tokens: 60.1%

    * At 16k tokens: 61.7%
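The trend descriptions above can be checked by computing the accuracy change at each doubling of thinking length. The following is a small sketch using the annotated values from the three panels; the names `RESULTS` and `gains_per_doubling` are illustrative and not taken from the figure.

```python
# Annotated values transcribed from the three panels
# (max thinking length in k tokens -> test time accuracy in %).
RESULTS = {
    "MathVision": {1: 18.7, 2: 22.6, 4: 29.0, 8: 34.0, 16: 36.8},
    "MathVista": {1: 66.7, 2: 69.0, 4: 70.9, 8: 70.6, 16: 71.3},
    "MMMU": {1: 49.2, 2: 52.4, 4: 56.2, 8: 60.1, 16: 61.7},
}


def gains_per_doubling(points):
    """Return the accuracy change (in points) between consecutive budgets."""
    budgets = sorted(points)
    # Round to one decimal to match the precision of the annotations.
    return [round(points[b] - points[a], 1) for a, b in zip(budgets, budgets[1:])]


for name, points in RESULTS.items():
    print(name, gains_per_doubling(points))
```

Comparing the lists side by side shows how each benchmark's gains change across doublings, including the single negative step in MathVista.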

### Key Observations

1. **Universal Positive Correlation:** On all three benchmarks, accuracy at 16k tokens is higher than at 1k tokens, so a larger maximum thinking length (computational budget) is associated with higher test accuracy overall. The only step that moves downward is MathVista at 8k tokens.

2. **Diminishing Returns:** The benefit of additional thinking length is not uniform. From 1k to 16k tokens, MathVision gains the most (18.1 points), MMMU gains steadily but less (12.5 points), and MathVista gains the least (4.6 points), plateauing early.

3. **Performance Ceiling:** MathVista appears to approach a performance ceiling near 71% accuracy with thinking lengths beyond 4k tokens.

4. **Anomaly:** The MathVista data point at 8k tokens (70.6%) is marginally lower than the point at 4k tokens (70.9%). This could be statistical noise or indicate a minor instability in the scaling trend for that specific benchmark.
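These observations can be reproduced from the annotated values. The sketch below computes the total gain from the smallest to the largest budget and lists any step where accuracy drops; `total_gain` and `find_dips` are illustrative helper names, not part of the source figure.

```python
# Annotated values transcribed from the three panels
# (max thinking length in k tokens -> test time accuracy in %).
RESULTS = {
    "MathVision": {1: 18.7, 2: 22.6, 4: 29.0, 8: 34.0, 16: 36.8},
    "MathVista": {1: 66.7, 2: 69.0, 4: 70.9, 8: 70.6, 16: 71.3},
    "MMMU": {1: 49.2, 2: 52.4, 4: 56.2, 8: 60.1, 16: 61.7},
}


def total_gain(points):
    """Accuracy difference between the largest and smallest budget."""
    return round(points[max(points)] - points[min(points)], 1)


def find_dips(points):
    """Return (from_budget, to_budget, change) for every step that decreases."""
    budgets = sorted(points)
    dips = []
    for a, b in zip(budgets, budgets[1:]):
        change = round(points[b] - points[a], 1)
        if change < 0:
            dips.append((a, b, change))
    return dips


for name, points in RESULTS.items():
    print(f"{name}: total gain {total_gain(points)} points, dips {find_dips(points)}")
```

A single 0.3-point dip on one benchmark is small enough that it may be noise. The figure gives no error bars, so this check only flags the step and says nothing about its significance.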

### Interpretation

This data suggests a fundamental trade-off in AI reasoning models between computational cost (thinking length) and performance (accuracy). The relationship is not linear and is highly dependent on the nature of the task (benchmark).

* **MathVision** tasks likely involve complex, multi-step reasoning where additional "thinking" directly translates to solving more problems, hence the strong, sustained improvement.

* **MathVista** tasks may have an inherent complexity ceiling; after a certain point, throwing more tokens at the problem yields negligible benefit, suggesting the model's reasoning capability or the problem's solvability saturates.

* **MMMU** (Massive Multi-discipline Multimodal Understanding) tasks show a reliable, scalable benefit, indicating that broader knowledge integration and reasoning continue to improve with more processing.

The key takeaway is that "thinking longer" is a powerful lever for improving AI performance, but its effectiveness is task-dependent. Optimizing for efficiency would require understanding where diminishing returns set in for a given class of problems, as seen starkly in the MathVista plot. The slight dip at 8k tokens in MathVista also hints that scaling behavior can have non-monotonic quirks worth investigating.
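One way to make "where diminishing returns set in" concrete is to pick, for each benchmark, the smallest thinking budget whose accuracy is within some tolerance of the best observed value. This is a sketch under stated assumptions: the 0.5-point tolerance is an arbitrary illustrative choice, and `smallest_sufficient_budget` is a hypothetical helper, not a method described by the figure.

```python
# Annotated values transcribed from the three panels
# (max thinking length in k tokens -> test time accuracy in %).
RESULTS = {
    "MathVision": {1: 18.7, 2: 22.6, 4: 29.0, 8: 34.0, 16: 36.8},
    "MathVista": {1: 66.7, 2: 69.0, 4: 70.9, 8: 70.6, 16: 71.3},
    "MMMU": {1: 49.2, 2: 52.4, 4: 56.2, 8: 60.1, 16: 61.7},
}


def smallest_sufficient_budget(points, tolerance=0.5):
    """Smallest budget whose accuracy is within `tolerance` points of the best.

    `tolerance` is an assumed, illustrative threshold (in accuracy points).
    """
    best = max(points.values())
    for budget in sorted(points):
        if best - points[budget] <= tolerance:
            return budget
    return max(points)  # Unreachable in practice: the best budget always qualifies.


for name, points in RESULTS.items():
    budget = smallest_sufficient_budget(points)
    print(f"{name}: {budget}k tokens is within 0.5 points of the best accuracy")
```

With this tolerance, MathVista is already served by 4k tokens, while MathVision and MMMU still need the full 16k. A looser tolerance shifts the chosen budgets downward, so the threshold should reflect how much accuracy a given application can trade for lower cost.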