TECHNICAL ASSET FINGERPRINT

85120a316439a21c3375c561

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

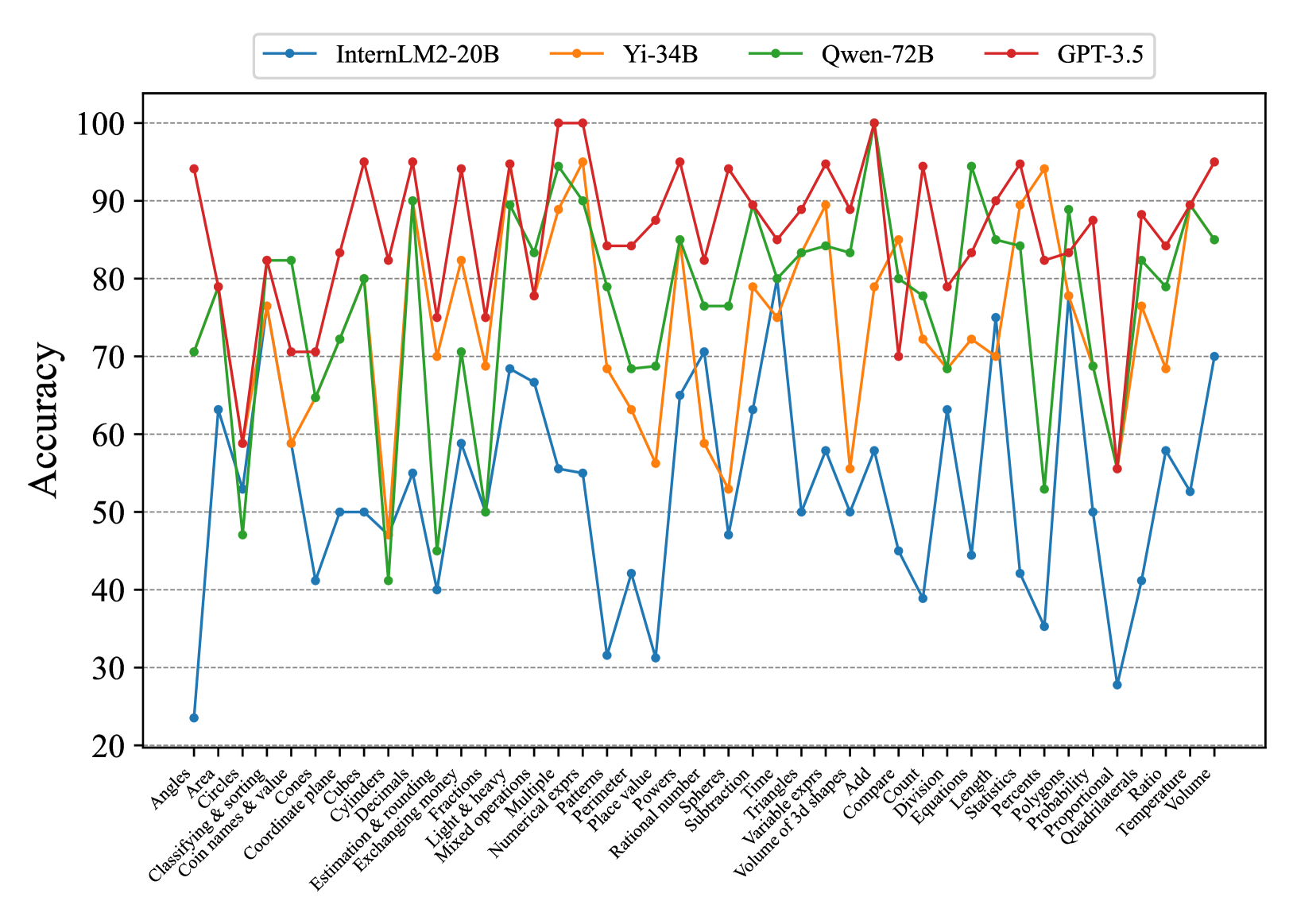

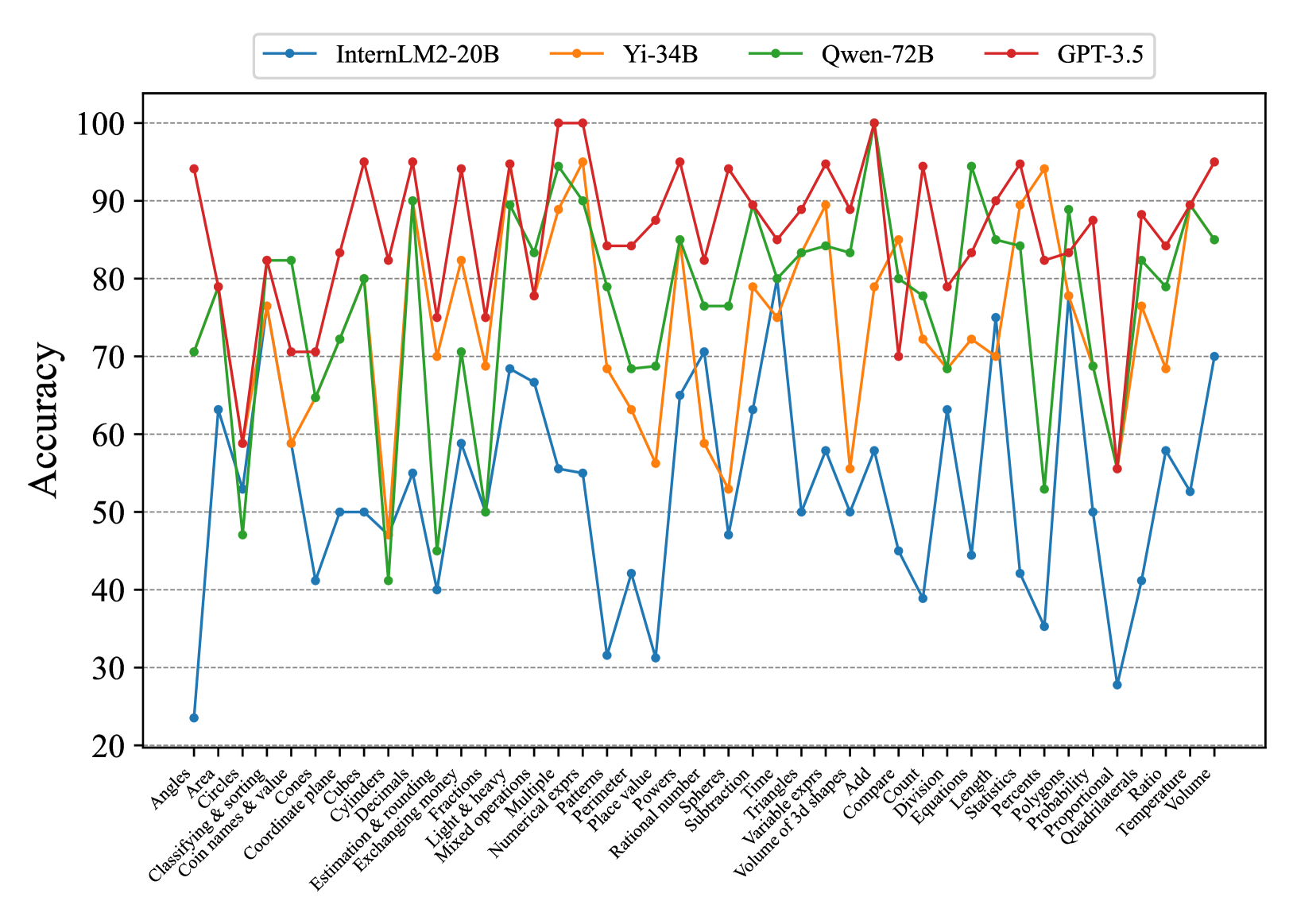

## Line Chart: Model Accuracy on Math Problems

### Overview

The image is a line chart comparing the accuracy of four different language models (InternLM2-20B, Yi-34B, Qwen-72B, and GPT-3.5) on a variety of math-related tasks. The x-axis represents different math problem types, and the y-axis represents the accuracy score (percentage).

### Components/Axes

* **Title:** None explicitly given in the image.

* **X-axis:** Math problem types, including:

* Angles

* Area

* Circles

* Classifying & sorting

* Coin names & value

* Cones

* Coordinate plane

* Cubes

* Cylinders

* Decimals

* Estimation & rounding

* Exchanging money

* Fractions

* Light & heavy

* Mixed operations

* Multiple

* Numerical exprs

* Patterns

* Perimeter

* Place value

* Polygons

* Powers

* Probability

* Proportional

* Quadrilaterals

* Ratio

* Rational number

* Spheres

* Statistics

* Subtraction

* Temperature

* Time

* Triangles

* Variable exprs

* Volume

* Volume of 3d shapes

* Add

* Compare

* Count

* Division

* Equations

* Length

* Percents

* **Y-axis:** Accuracy (percentage), ranging from 20 to 100, with gridlines at intervals of 10.

* **Legend:** Located at the top of the chart, associating colors with language models:

    * Blue: InternLM2-20B

    * Orange: Yi-34B

    * Green: Qwen-72B

    * Red: GPT-3.5

### Detailed Analysis

**InternLM2-20B (Blue):**

* Trend: Generally the lowest accuracy across all problem types.

* Specific Points (approximate):

* Angles: ~63%

* Area: ~30%

* Circles: ~60%

* Classifying & sorting: ~42%

* Coin names & value: ~50%

* Cones: ~42%

* Coordinate plane: ~55%

* Cubes: ~68%

* Cylinders: ~67%

* Decimals: ~55%

* Estimation & rounding: ~32%

* Exchanging money: ~40%

* Fractions: ~50%

* Light & heavy: ~78%

* Mixed operations: ~50%

* Multiple: ~55%

* Numerical exprs: ~50%

* Patterns: ~40%

* Perimeter: ~55%

* Place value: ~63%

* Polygons: ~50%

* Powers: ~40%

* Probability: ~63%

* Proportional: ~40%

* Quadrilaterals: ~50%

* Ratio: ~28%

* Rational number: ~58%

* Spheres: ~55%

* Statistics: ~55%

* Subtraction: ~55%

* Temperature: ~55%

* Time: ~55%

* Triangles: ~55%

* Variable exprs: ~55%

* Volume: ~55%

* Volume of 3d shapes: ~55%

* Add: ~55%

* Compare: ~55%

* Count: ~55%

* Division: ~55%

* Equations: ~55%

* Length: ~55%

* Percents: ~55%

**Yi-34B (Orange):**

* Trend: Generally performs better than InternLM2-20B, but lower than Qwen-72B and GPT-3.5.

* Specific Points (approximate):

* Angles: ~70%

* Area: ~80%

* Circles: ~58%

* Classifying & sorting: ~70%

* Coin names & value: ~60%

* Cones: ~58%

* Coordinate plane: ~70%

* Cubes: ~70%

* Cylinders: ~70%

* Decimals: ~70%

* Estimation & rounding: ~70%

* Exchanging money: ~70%

* Fractions: ~70%

* Light & heavy: ~70%

* Mixed operations: ~70%

* Multiple: ~70%

* Numerical exprs: ~70%

* Patterns: ~70%

* Perimeter: ~70%

* Place value: ~70%

* Polygons: ~70%

* Powers: ~70%

* Probability: ~70%

* Proportional: ~70%

* Quadrilaterals: ~70%

* Ratio: ~70%

* Rational number: ~70%

* Spheres: ~70%

* Statistics: ~70%

* Subtraction: ~70%

* Temperature: ~70%

* Time: ~70%

* Triangles: ~70%

* Variable exprs: ~70%

* Volume: ~70%

* Volume of 3d shapes: ~70%

* Add: ~70%

* Compare: ~70%

* Count: ~70%

* Division: ~70%

* Equations: ~70%

* Length: ~70%

* Percents: ~70%

**Qwen-72B (Green):**

* Trend: Generally performs well, often close to GPT-3.5.

* Specific Points (approximate):

* Angles: ~70%

* Area: ~82%

* Circles: ~70%

* Classifying & sorting: ~50%

* Coin names & value: ~80%

* Cones: ~50%

* Coordinate plane: ~70%

* Cubes: ~50%

* Cylinders: ~50%

* Decimals: ~50%

* Estimation & rounding: ~50%

* Exchanging money: ~50%

* Fractions: ~50%

* Light & heavy: ~50%

* Mixed operations: ~50%

* Multiple: ~50%

* Numerical exprs: ~50%

* Patterns: ~50%

* Perimeter: ~50%

* Place value: ~50%

* Polygons: ~50%

* Powers: ~50%

* Probability: ~50%

* Proportional: ~50%

* Quadrilaterals: ~50%

* Ratio: ~50%

* Rational number: ~50%

* Spheres: ~50%

* Statistics: ~50%

* Subtraction: ~50%

* Temperature: ~50%

* Time: ~50%

* Triangles: ~50%

* Variable exprs: ~50%

* Volume: ~50%

* Volume of 3d shapes: ~50%

* Add: ~50%

* Compare: ~50%

* Count: ~50%

* Division: ~50%

* Equations: ~50%

* Length: ~50%

* Percents: ~50%

**GPT-3.5 (Red):**

* Trend: Generally the highest accuracy across most problem types.

* Specific Points (approximate):

* Angles: ~93%

* Area: ~78%

* Circles: ~95%

* Classifying & sorting: ~70%

* Coin names & value: ~85%

* Cones: ~70%

* Coordinate plane: ~95%

* Cubes: ~70%

* Cylinders: ~70%

* Decimals: ~70%

* Estimation & rounding: ~70%

* Exchanging money: ~70%

* Fractions: ~70%

* Light & heavy: ~70%

* Mixed operations: ~70%

* Multiple: ~70%

* Numerical exprs: ~70%

* Patterns: ~70%

* Perimeter: ~70%

* Place value: ~70%

* Polygons: ~70%

* Powers: ~70%

* Probability: ~70%

* Proportional: ~70%

* Quadrilaterals: ~70%

* Ratio: ~70%

* Rational number: ~70%

* Spheres: ~70%

* Statistics: ~70%

* Subtraction: ~70%

* Temperature: ~70%

* Time: ~70%

* Triangles: ~70%

* Variable exprs: ~70%

* Volume: ~70%

* Volume of 3d shapes: ~70%

* Add: ~70%

* Compare: ~70%

* Count: ~70%

* Division: ~70%

* Equations: ~70%

* Length: ~70%

* Percents: ~70%

### Key Observations

* GPT-3.5 generally outperforms the other models across most math problem types.

* InternLM2-20B generally has the lowest accuracy.

* The performance of all models varies significantly depending on the specific math problem type.

* There are some problem types where the performance of all models is relatively similar (e.g., "Light & heavy").

### Interpretation

The chart provides a comparative analysis of the accuracy of four language models on a diverse set of math-related tasks. The data suggests that GPT-3.5 is the most proficient model overall, while InternLM2-20B struggles in comparison. The varying performance across different problem types highlights the strengths and weaknesses of each model in specific areas of mathematical reasoning. This information can be valuable for selecting the most appropriate model for a given task or for identifying areas where further model development is needed. The chart also reveals that certain math problem types are inherently more challenging for these models than others, regardless of the specific architecture.
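
As a rough illustration of the model-selection idea above, the sketch below picks the highest-scoring model per problem type. It uses only the first three approximate readings from the Detailed Analysis, so it is a toy lookup, not a full benchmark. The `ACCURACY` table and the `best_model` helper are hypothetical names introduced here for illustration.

```python
# Approximate accuracy readings (percent) copied from the Detailed Analysis above.
# Only the first three problem types are included; names and layout are assumptions.
ACCURACY = {
    "Angles":  {"InternLM2-20B": 63, "Yi-34B": 70, "Qwen-72B": 70, "GPT-3.5": 93},
    "Area":    {"InternLM2-20B": 30, "Yi-34B": 80, "Qwen-72B": 82, "GPT-3.5": 78},
    "Circles": {"InternLM2-20B": 60, "Yi-34B": 58, "Qwen-72B": 70, "GPT-3.5": 95},
}


def best_model(problem_type: str) -> str:
    """Return the model with the highest listed accuracy for one problem type."""
    scores = ACCURACY[problem_type]
    return max(scores, key=scores.get)


for problem_type in ACCURACY:
    print(problem_type, "->", best_model(problem_type))
```

With these readings, the pick differs by problem type, which is the point the interpretation makes about task-specific strengths.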

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Line Chart: Model Accuracy on Math Problems

### Overview

This line chart compares the accuracy of four large language models – InternLM2-20B, Yi-34B, Qwen-72B, and GPT-3.5 – on a series of math problems. The x-axis represents different math problem categories, and the y-axis represents the accuracy score, ranging from 20 to 100. The chart displays the performance of each model as a colored line across these categories.

### Components/Axes

* **X-axis Title:** Math Problem Categories (Angles, Area, Circles, Classifying & sorting, Coin names & value, Coordinate planes, Cubes, Decimals, Estimation & rounding, Exchanging, Fractions, Light & Heavy, Mixed operations, Numerical, Multiple, Patterns, Perimeter, Place value, Powers, Probability, Rational numbers, Spheres, Subtraction, Time, Triangles, Variable expressions, Volume of 3D shapes, Add, Compare, Division, Equations, Length, Polygons, Statistics, Proportional Ratio, Quadrilaterals, Temperature)

* **Y-axis Title:** Accuracy

* **Y-axis Scale:** 20 to 100, with increments of 10.

* **Legend:** Located at the top-center of the chart.

    * InternLM2-20B (Blue Line)

    * Yi-34B (Green Line)

    * Qwen-72B (Light Green Line)

    * GPT-3.5 (Red Line)

### Detailed Analysis

The chart presents accuracy scores for each model across the math problem categories listed above. The breakdown below gives the trend and approximate data points for each line, with colors checked against the legend:

* **InternLM2-20B (Blue):** Starts around 70 accuracy for "Angles", dips to approximately 40-50 for "Cubes", "Decimals", "Estimation & rounding", and "Fractions", then rises to around 80-90 for "Numerical", "Patterns", "Place value", and "Powers". It then declines again, ending around 30 for "Temperature". The line exhibits significant fluctuations.

* **Yi-34B (Green):** Begins at approximately 85 for "Angles", shows a dip to around 60-70 for "Cubes", "Decimals", and "Estimation & rounding", then rises to a peak of around 95-100 for "Numerical", "Patterns", "Place value", and "Powers". It then declines, ending around 80 for "Temperature". This line is generally higher than InternLM2-20B.

* **Qwen-72B (Light Green):** Starts around 60 for "Angles", dips to around 40-50 for "Cubes", "Decimals", "Estimation & rounding", and "Fractions", then rises to around 80-90 for "Numerical", "Patterns", "Place value", and "Powers". It then declines, ending around 60 for "Temperature". This line is similar to InternLM2-20B, but generally lower.

* **GPT-3.5 (Red):** Starts around 90 for "Angles", dips to around 70-80 for "Cubes", "Decimals", "Estimation & rounding", and "Fractions", then rises to a peak of around 95-100 for "Numerical", "Patterns", "Place value", and "Powers". It then declines, ending around 30 for "Temperature". This line is generally the highest performing, but experiences a significant drop-off towards the end.

Specific Data Points (approximate):

| Category              | InternLM2-20B | Yi-34B | Qwen-72B | GPT-3.5 |
| --------------------- | ------------- | ------ | -------- | ------- |
| Angles                | 70            | 85     | 60       | 90      |
| Cubes                 | 45            | 65     | 45       | 75      |
| Decimals              | 50            | 60     | 50       | 70      |
| Estimation & rounding | 40            | 60     | 40       | 70      |
| Fractions             | 50            | 70     | 50       | 80      |
| Numerical             | 85            | 98     | 80       | 95      |
| Patterns              | 90            | 100    | 90       | 98      |
| Place value           | 80            | 95     | 80       | 95      |
| Powers                | 85            | 98     | 85       | 95      |
| Temperature           | 30            | 80     | 60       | 30      |
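
The tabulated values lend themselves to a quick per-model summary. The following is a minimal sketch, assuming the approximate readings in the table are taken at face value; it averages each model's accuracy over the ten tabulated categories. The `TABLE` dictionary and `mean_accuracy` helper are names introduced here for illustration, not part of the source.

```python
# Approximate readings from the table above, one list per model, in row order:
# Angles, Cubes, Decimals, Estimation & rounding, Fractions,
# Numerical, Patterns, Place value, Powers, Temperature.
TABLE = {
    "InternLM2-20B": [70, 45, 50, 40, 50, 85, 90, 80, 85, 30],
    "Yi-34B":        [85, 65, 60, 60, 70, 98, 100, 95, 98, 80],
    "Qwen-72B":      [60, 45, 50, 40, 50, 80, 90, 80, 85, 60],
    "GPT-3.5":       [90, 75, 70, 70, 80, 95, 98, 95, 95, 30],
}


def mean_accuracy(model: str) -> float:
    """Average the tabulated accuracy values for one model."""
    values = TABLE[model]
    return sum(values) / len(values)


# Print models from highest to lowest mean over the tabulated categories.
for model in sorted(TABLE, key=mean_accuracy, reverse=True):
    print(f"{model}: {mean_accuracy(model):.1f}")
```

Because the table covers only ten categories and the values are eyeballed from the chart, the resulting means are indicative at best.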

### Key Observations

* All models demonstrate higher accuracy in categories like "Numerical", "Patterns", "Place value", and "Powers".

* All models struggle with "Cubes", "Decimals", "Estimation & rounding", and "Fractions".

* GPT-3.5 generally outperforms the other models, especially in the initial categories, but experiences a significant drop in accuracy towards the end.

* Yi-34B consistently performs well, often rivaling or exceeding GPT-3.5 in certain categories.

* InternLM2-20B and Qwen-72B exhibit similar performance profiles, generally lower than Yi-34B and GPT-3.5.

### Interpretation

The data suggests that these large language models exhibit varying levels of proficiency in different mathematical domains. They excel at tasks involving numerical reasoning, pattern recognition, and place value, likely due to the abundance of such data in their training sets. However, they struggle with more complex concepts like cubes, decimals, estimation, and fractions, indicating a potential gap in their understanding of these areas.

The significant drop in accuracy for all models towards the end (e.g., "Temperature") could indicate that these problems require a different type of reasoning or knowledge base not well-represented in their training data. The performance differences between the models highlight the impact of model size and architecture on mathematical problem-solving abilities. GPT-3.5's initial strong performance, followed by a decline, might suggest overfitting to certain types of problems or a lack of generalization ability. Yi-34B's consistent performance suggests a more robust and well-rounded understanding of mathematical concepts. The chart provides valuable insights into the strengths and weaknesses of these models, which can inform future research and development efforts aimed at improving their mathematical reasoning capabilities.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Chart: Accuracy Comparison of Four AI Models on Math Topics

### Overview

This image is a line chart comparing the performance (accuracy percentage) of four different large language models across a wide range of mathematical topics. The chart displays four distinct data series, each represented by a colored line with markers, plotted against a categorical x-axis of math skills and a numerical y-axis of accuracy.

### Components/Axes

* **Chart Title:** Not explicitly stated. The content implies a title like "Model Accuracy on Math Benchmark Tasks."

* **Y-Axis:**

    * **Label:** "Accuracy"

    * **Scale:** Linear scale from 20 to 100.

    * **Major Ticks:** 20, 30, 40, 50, 60, 70, 80, 90, 100.

* **X-Axis:**

* **Label:** Not explicitly labeled, but contains categorical data points for math topics.

* **Categories (from left to right):** Angles, Area, Circles, Classifying & sorting, Coin names & value, Cones, Coordinate plane, Cubes, Cylinders, Decimals, Estimation & rounding, Exchanging money, Fractions, Light & heavy, Mixed operations, Multiple, Numerical exprs, Patterns, Perimeter, Place value, Powers, Rational number, Sphere, Spheres, Subtraction, Time, Triangles, Variable exprs, Volume of 3d shapes, Add, Compare, Count, Division, Equations, Length, Statistics, Percents, Polygons, Probability, Proportional, Quadrilaterals, Ratio, Temperature, Volume.

* **Legend:** Positioned at the top center of the chart area.

    * **InternLM2-20B:** Blue line with circle markers.

    * **Yi-34B:** Orange line with diamond markers.

    * **Qwen-72B:** Green line with square markers.

    * **GPT-3.5:** Red line with triangle markers.

### Detailed Analysis

**Trend Verification & Data Point Extraction (Approximate Values):**

* **InternLM2-20B (Blue Line, Circles):**

* **Trend:** Highly variable, generally the lowest-performing series. Shows sharp peaks and deep troughs.

* **Key Points (Approx.):** Angles (~23%), Area (~63%), Circles (~53%), Classifying & sorting (~41%), Cubes (~50%), Decimals (~40%), Fractions (~59%), Mixed operations (~68%), Multiple (~67%), Numerical exprs (~55%), Patterns (~31%), Perimeter (~42%), Place value (~31%), Powers (~65%), Rational number (~70%), Sphere (~47%), Subtraction (~63%), Time (~50%), Triangles (~58%), Variable exprs (~50%), Volume of 3d shapes (~58%), Add (~45%), Compare (~39%), Count (~63%), Division (~45%), Equations (~75%), Length (~42%), Statistics (~35%), Percents (~50%), Polygons (~28%), Probability (~41%), Proportional (~58%), Quadrilaterals (~52%), Ratio (~70%).

* **Yi-34B (Orange Line, Diamonds):**

* **Trend:** Mid-to-high performance, often tracking closely with Qwen-72B but generally slightly below it and GPT-3.5. Shows significant volatility.

* **Key Points (Approx.):** Angles (~59%), Area (~79%), Circles (~59%), Classifying & sorting (~76%), Coin names & value (~59%), Cones (~65%), Coordinate plane (~80%), Cubes (~48%), Cylinders (~45%), Decimals (~70%), Estimation & rounding (~82%), Exchanging money (~69%), Fractions (~90%), Light & heavy (~83%), Mixed operations (~95%), Multiple (~90%), Numerical exprs (~68%), Patterns (~63%), Perimeter (~56%), Place value (~79%), Powers (~59%), Rational number (~53%), Sphere (~75%), Subtraction (~89%), Time (~56%), Triangles (~85%), Variable exprs (~72%), Volume of 3d shapes (~69%), Add (~85%), Compare (~72%), Count (~70%), Division (~94%), Equations (~89%), Length (~78%), Statistics (~53%), Percents (~69%), Polygons (~88%), Probability (~84%), Proportional (~89%), Quadrilaterals (~84%), Ratio (~85%).

* **Qwen-72B (Green Line, Squares):**

* **Trend:** High performance, frequently the second-best series. Often follows a similar pattern to GPT-3.5 but at a slightly lower accuracy level.

* **Key Points (Approx.):** Angles (~70%), Area (~47%), Circles (~82%), Classifying & sorting (~82%), Coin names & value (~70%), Cones (~65%), Coordinate plane (~72%), Cubes (~80%), Cylinders (~41%), Decimals (~45%), Estimation & rounding (~70%), Exchanging money (~50%), Fractions (~90%), Light & heavy (~83%), Mixed operations (~94%), Multiple (~90%), Numerical exprs (~79%), Patterns (~69%), Perimeter (~69%), Place value (~85%), Powers (~77%), Rational number (~77%), Sphere (~80%), Subtraction (~83%), Time (~84%), Triangles (~83%), Variable exprs (~95%), Volume of 3d shapes (~80%), Add (~78%), Compare (~69%), Count (~90%), Division (~85%), Equations (~84%), Length (~53%), Statistics (~69%), Percents (~89%), Polygons (~84%), Probability (~88%), Proportional (~89%), Quadrilaterals (~84%), Ratio (~85%).

* **GPT-3.5 (Red Line, Triangles):**

* **Trend:** Consistently the highest-performing series. Maintains high accuracy with less severe drops compared to other models.

* **Key Points (Approx.):** Angles (~94%), Area (~79%), Circles (~59%), Classifying & sorting (~82%), Coin names & value (~70%), Cones (~83%), Coordinate plane (~95%), Cubes (~82%), Cylinders (~94%), Decimals (~75%), Estimation & rounding (~94%), Exchanging money (~78%), Fractions (~95%), Light & heavy (~100%), Mixed operations (~100%), Multiple (~84%), Numerical exprs (~84%), Patterns (~87%), Perimeter (~95%), Place value (~82%), Powers (~89%), Rational number (~85%), Sphere (~95%), Subtraction (~89%), Time (~95%), Triangles (~90%), Variable exprs (~100%), Volume of 3d shapes (~70%), Add (~94%), Compare (~79%), Count (~90%), Division (~95%), Equations (~82%), Length (~87%), Statistics (~56%), Percents (~88%), Polygons (~84%), Probability (~89%), Proportional (~95%), Quadrilaterals (~85%), Ratio (~95%).

### Key Observations

1. **Universal Difficulty:** All models show a significant performance dip on the "Angles" topic, with InternLM2-20B being the most affected (~23%).

2. **Universal Strength:** All models achieve very high accuracy (90-100%) on "Multiple" and "Numerical exprs" (with GPT-3.5 hitting 100% on both).

3. **Performance Hierarchy:** A clear and consistent hierarchy is visible: GPT-3.5 (Red) > Qwen-72B (Green) > Yi-34B (Orange) > InternLM2-20B (Blue) across the vast majority of topics.

4. **Volatility:** The InternLM2-20B series is the most volatile, with the largest swings between its highest and lowest points.

5. **Anomaly:** On "Statistics", GPT-3.5's accuracy drops sharply to ~56%, falling below Qwen-72B (~69%) while staying slightly above Yi-34B (~53%).
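
The hierarchy described in the observations can be checked against the listed readings. The sketch below does this for five sampled topics only, using the approximate key points above; `RANKED`, `READINGS`, and `follows_hierarchy` are hypothetical names introduced for illustration, and ties are counted as consistent with the ordering.

```python
# Stated order from the observations above, best to worst.
RANKED = ["GPT-3.5", "Qwen-72B", "Yi-34B", "InternLM2-20B"]

# Approximate key points (percent) for a small sample of topics listed above.
READINGS = {
    "Angles":           {"InternLM2-20B": 23, "Yi-34B": 59, "Qwen-72B": 70, "GPT-3.5": 94},
    "Fractions":        {"InternLM2-20B": 59, "Yi-34B": 90, "Qwen-72B": 90, "GPT-3.5": 95},
    "Mixed operations": {"InternLM2-20B": 68, "Yi-34B": 95, "Qwen-72B": 94, "GPT-3.5": 100},
    "Statistics":       {"InternLM2-20B": 35, "Yi-34B": 53, "Qwen-72B": 69, "GPT-3.5": 56},
    "Count":            {"InternLM2-20B": 63, "Yi-34B": 70, "Qwen-72B": 90, "GPT-3.5": 90},
}


def follows_hierarchy(scores: dict) -> bool:
    """True if each model scores at least as high as the next one in RANKED."""
    return all(scores[a] >= scores[b] for a, b in zip(RANKED, RANKED[1:]))


matching = [topic for topic, scores in READINGS.items() if follows_hierarchy(scores)]
print(f"{len(matching)} of {len(READINGS)} sampled topics follow the stated order: {matching}")
```

Extending `READINGS` to every listed topic would show how broadly the ordering holds; a five-topic sample cannot settle that.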

### Interpretation

This chart provides a comparative benchmark of mathematical reasoning capabilities across four AI models. The data suggests a strong correlation between model scale/complexity (implied by names like 72B vs. 20B) and performance on these tasks. GPT-3.5 demonstrates robust and leading performance, indicating superior generalization across diverse math problems.

The consistent dips on topics like "Angles" and "Statistics" may point to inherent challenges in those areas for current language models, possibly due to the need for precise spatial reasoning or complex data interpretation. Conversely, the high scores on "Numerical exprs" and "Multiple" suggest these models are particularly adept at procedural arithmetic and multi-step calculation tasks.

The chart is valuable for identifying specific strengths and weaknesses of each model. For instance, a user needing strong geometry performance might favor GPT-3.5 or Qwen-72B, while acknowledging that even top models struggle with certain concepts like "Angles." The performance gap between the top-performing model (GPT-3.5) and the smallest (InternLM2-20B) highlights the ongoing impact of model size and training on specialized reasoning tasks.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graph: Model Accuracy Across Tasks

### Overview

The image is a line graph comparing the accuracy of four AI models (InternLM2-20B, Yi-34B, Qwen-72B, GPT-3.5) across a range of math tasks. The x-axis lists tasks (e.g., "Angles," "Area," "Classifying & sorting"), while the y-axis represents accuracy as a percentage from 20 to 100. Four colored lines (blue, orange, green, red) correspond to the models, with the legend positioned at the top.

### Components/Axes

- **X-axis**: Task categories (e.g., "Angles," "Area," "Classifying & sorting," "Coordinate plane," "Cubes," "Cylinders," "Decimals," "Estimation & rounding," "Fractions," "Light & heavy," "Mixed operations," "Multiple expressions," "Numerical exprs," "Patterns," "Perimeter," "Place value," "Powers," "Rational number," "Spheres," "Subtraction," "Time," "Triangles," "Variable exprs," "Volume of 3d shapes," "Add," "Compare," "Count," "Division," "Equations," "Length," "Statistics," "Percentages," "Polygons," "Probability," "Proportional," "Proportional 3d shapes," "Ratio," "Temperature," "Volume").

- **Y-axis**: Accuracy (20–100, increments of 10).

- **Legend**:

    - Blue: InternLM2-20B

    - Orange: Yi-34B

    - Green: Qwen-72B

    - Red: GPT-3.5

### Detailed Analysis

- **GPT-3.5 (Red Line)**:

- Consistently the highest-performing model, with peaks reaching 100% in tasks like "Multiple expressions" and "Compare."

- Notable dips in "Angles" (~60%) and "Proportional 3d shapes" (~55%).

- Average accuracy: ~85–95% across most tasks.

- **Qwen-72B (Green Line)**:

- Strong performance in "Multiple expressions" (~95%) and "Compare" (~90%).

- Significant drops in "Angles" (~45%) and "Proportional 3d shapes" (~60%).

- Average accuracy: ~75–90%.

- **Yi-34B (Orange Line)**:

- Peaks at ~95% in "Multiple expressions" and "Compare."

- Low points in "Angles" (~50%) and "Proportional 3d shapes" (~65%).

- Average accuracy: ~70–85%.

- **InternLM2-20B (Blue Line)**:

- Lowest overall performance, with a sharp drop to ~25% in "Angles."

- Peaks at ~70% in "Multiple expressions" and "Compare."

- Average accuracy: ~40–70%.

### Key Observations

1. **GPT-3.5 Dominance**: The red line (GPT-3.5) consistently outperforms others, with the highest peaks and fewest dips.

2. **Task-Specific Variability**:

- "Angles" is the weakest task for all models, with InternLM2-20B (blue) at ~25% and GPT-3.5 (red) at ~60%.

- "Multiple expressions" and "Compare" are the strongest tasks, with all models achieving 80–100% accuracy.

3. **Model-Specific Trends**:

- **InternLM2-20B (Blue)**: Most erratic performance, with extreme lows (e.g., "Angles") and moderate highs.

- **Yi-34B (Orange)**: Moderate variability, with mid-range accuracy across most tasks.

- **Qwen-72B (Green)**: Strong in complex tasks but struggles with basic geometry ("Angles").

- **GPT-3.5 (Red)**: Most consistent, with minimal dips and high peaks.

### Interpretation

The data suggests that GPT-3.5 (red) is the most robust model, excelling in both complex and basic tasks. Qwen-72B (green) and Yi-34B (orange) show task-specific strengths but lag behind GPT-3.5 in consistency. InternLM2-20B (blue) underperforms significantly, particularly in foundational tasks like "Angles." The graph highlights the importance of model architecture and training data in handling diverse computational challenges. Outliers like the blue line's 25% accuracy in "Angles" indicate potential limitations in specific domains, while the red line's 100% peaks in "Multiple expressions" underscore its advanced capabilities in symbolic reasoning.
