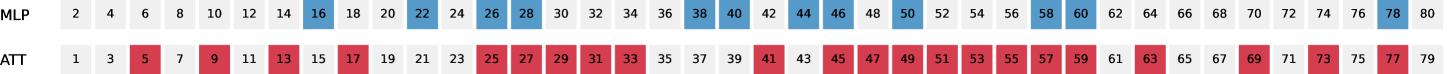

## Sequence Activation Chart: MLP vs. ATT Layer/Neuron Indices

### Overview

The image displays a horizontal sequence chart comparing the activation patterns of two models or components, labeled "MLP" and "ATT". The chart visualizes specific numerical indices (from 1 to 80) that are "active" or selected for each component, represented by colored cells against a light gray background grid of all possible indices.

### Components/Axes

* **Row Labels (Left Side):**

    * Top Row: `MLP`

    * Bottom Row: `ATT`

* **Data Axis (Horizontal):** A linear sequence of integers from 1 to 80, representing indices (e.g., layer numbers, neuron IDs, or token positions). Each integer occupies a discrete cell.

* **Legend/Color Coding:**

    * **Blue Cells:** Correspond to the `MLP` row.

    * **Red Cells:** Correspond to the `ATT` row.

    * **Light Gray Cells:** Represent inactive or non-selected indices for both components.

### Detailed Analysis

The chart is a direct mapping of active indices for two separate series.

**1. MLP (Top Row - Blue Cells):**

* **Trend/Pattern:** The blue cells are sparse and irregularly spaced across the 80 positions. Every active index is even, but the gaps between them follow no fixed step; the cells appear as adjacent even pairs and isolated points.

* **Active Indices (Blue):** 16, 22, 26, 28, 38, 44, 46, 50, 58, 60, 78.

* **Spatial Distribution:** The first activation appears at index 16. A loose group follows at 22-28 (22, 26, 28) and another at 38-50 (38, 44, 46, 50). A pair sits at 58 and 60, and the last activation is an isolated point at 78.

**2. ATT (Bottom Row - Red Cells):**

* **Trend/Pattern:** The red cells are roughly twice as numerous as the blue cells. Every active index is odd, so no two red cells are directly adjacent; instead they form runs of consecutive odd indices, with isolated points before, between, and after the runs.

* **Active Indices (Red):** 5, 9, 13, 17, 25, 27, 29, 31, 33, 41, 45, 47, 49, 51, 53, 55, 57, 63, 69, 73, 77.

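* **Spatial Distribution:** Activations begin early at index 5 and recur every four indices through 17 (5, 9, 13, 17). A run of five consecutive odd indices spans 25 to 33. A single activation at 41 is followed by the longest run, which covers every odd index from 45 to 57 (seven cells); index 43 is not in the list. Further activations are spaced at 63, 69, 73, and 77.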
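The run structure described in this section can be checked mechanically. The sketch below groups each row's indices into maximal runs of values spaced two apart. The two lists are transcribed from the text; the `runs` helper and the step of 2 are illustrative assumptions, not something shown in the chart.

```python
# Active indices transcribed from the lists in this section.
MLP = [16, 22, 26, 28, 38, 44, 46, 50, 58, 60, 78]
ATT = [5, 9, 13, 17, 25, 27, 29, 31, 33, 41,
       45, 47, 49, 51, 53, 55, 57, 63, 69, 73, 77]


def runs(indices, step=2):
    """Group indices into maximal runs whose neighbours differ by `step`."""
    groups = []
    for i in sorted(indices):
        if groups and i - groups[-1][-1] == step:
            groups[-1].append(i)   # extends the current run
        else:
            groups.append([i])     # starts a new run
    return groups


mlp_runs = runs(MLP)
att_runs = runs(ATT)
longest_att = max(att_runs, key=len)

print("MLP runs:", mlp_runs)
print("ATT runs:", att_runs)
print("Longest ATT run:", longest_att)
```

With the lists as transcribed, the longest `ATT` run is 45 through 57, and 41 stands alone because 43 is absent.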

### Key Observations

1. **Density Disparity:** The `ATT` component has significantly more active indices (21 red cells) compared to the `MLP` component (11 blue cells).

2. **Pattern Contrast:** `ATT` activations all fall on odd indices and include two runs of consecutive odd indices (25-33 and 45-57), suggesting structured or periodic engagement. `MLP` activations all fall on even indices and are more sporadic, with at most two neighboring even indices active at a time, indicating targeted or conditional engagement.

3. **Overlap:** There is **no direct overlap** in the active indices between the two rows. At no point do a blue and a red cell occupy the same column (index). This follows from parity: every listed `MLP` index is even and every listed `ATT` index is odd.

4. **Spatial Grounding:** The row labels are positioned to the immediate left of their respective data sequences. The entire chart is laid out on a uniform grid, with each number centered in its cell.
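The counts, the absence of overlap, and the parity of each row can be verified from the listed indices. This is a minimal sketch that takes the transcribed lists at face value; the variable names are illustrative.

```python
# Active indices transcribed from the Detailed Analysis section.
MLP = {16, 22, 26, 28, 38, 44, 46, 50, 58, 60, 78}
ATT = {5, 9, 13, 17, 25, 27, 29, 31, 33, 41,
       45, 47, 49, 51, 53, 55, 57, 63, 69, 73, 77}

counts = {"MLP": len(MLP), "ATT": len(ATT)}
overlap = MLP & ATT                            # indices active in both rows
mlp_all_even = all(i % 2 == 0 for i in MLP)
att_all_odd = all(i % 2 == 1 for i in ATT)

print(counts)
print("overlap:", sorted(overlap))
print("MLP all even:", mlp_all_even, "| ATT all odd:", att_all_odd)
```

Because one row is entirely even and the other entirely odd, the empty intersection is guaranteed rather than a separate finding.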

### Interpretation

This chart likely visualizes the internal activation patterns of a neural network architecture that has both Multi-Layer Perceptron (MLP) and Attention (ATT) components, possibly across 80 layers or processing steps.

* **What the data suggests:** The `ATT` mechanism appears to be engaged across most of the range (indices 5 to 77), with its highest concentration of activity in the middle (indices 41-57). This is consistent with the role of attention in capturing relationships across a sequence, though the chart alone does not establish it. The `MLP` component shows more selective activity, with no activations before index 16, which may correspond to specific feature transformations or computations triggered at particular points.

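* **Relationship between elements:** No index is shared between the two rows, but this is a consequence of parity (`MLP` indices are all even, `ATT` indices all odd) and should be read with that in mind. One possible explanation is that the 80 indices enumerate interleaved sublayers, with attention at odd positions and MLP at even positions, in which case overlap is impossible by construction. If the indices instead refer to a shared axis, the pattern could suggest a **division of labor** or **specialization** within the model, where attention and MLP functions are activated at distinct stages of processing and are complementary rather than redundant.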

* **Anomalies/Notable Points:** The run of consecutive odd indices in the `ATT` row from 45 to 57, preceded by a single activation at 41, is the most prominent feature. This could indicate a core processing phase where the attention mechanism is applied in a sliding-window or iterative fashion. The isolated `MLP` activation near the very end (index 78) might represent a final, high-level feature integration step.