## Grouped Bar Chart: Brain Alignment vs. Number of Units by Model Size

### Overview

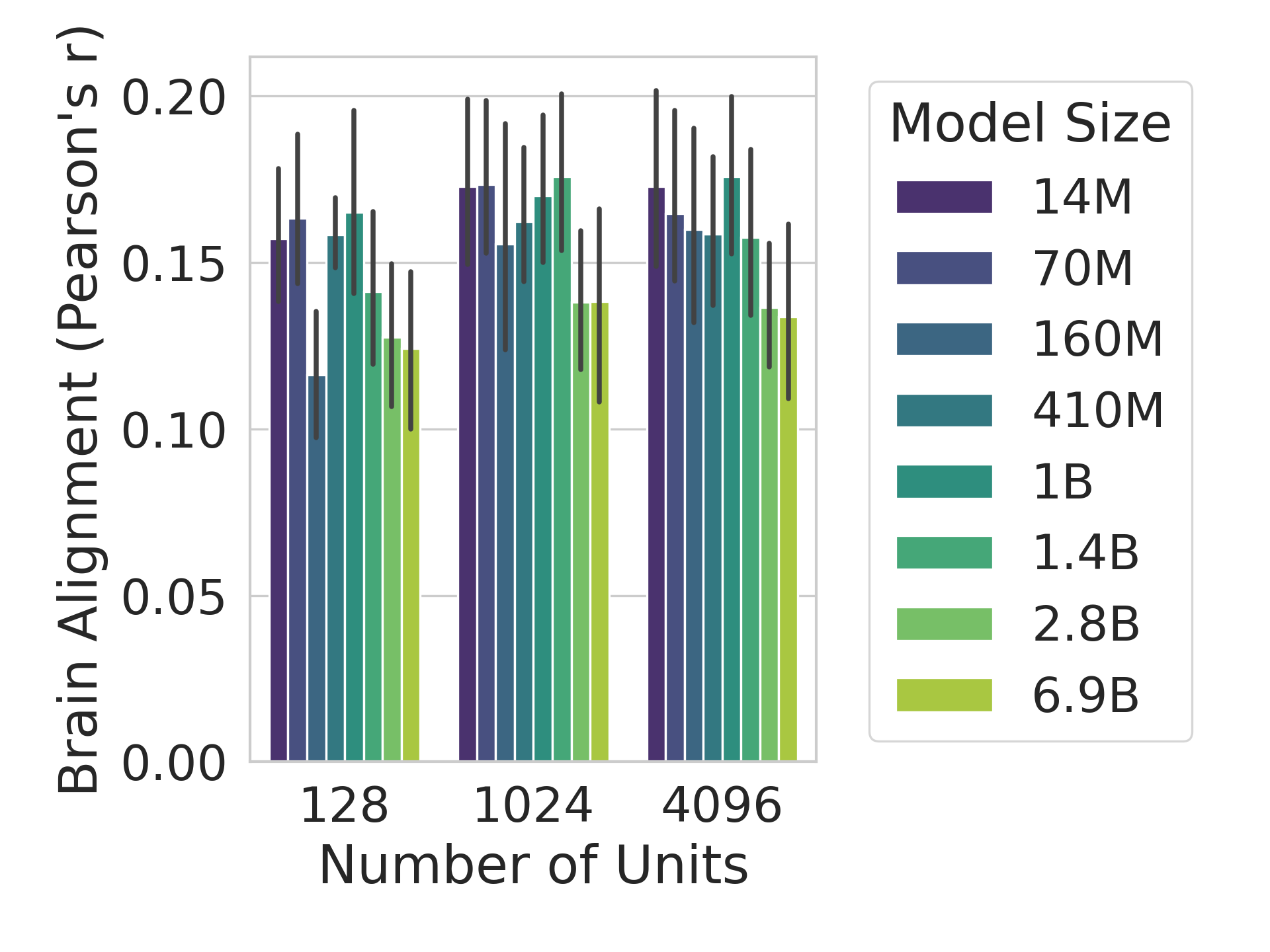

This grouped bar chart with error bars shows how "Brain Alignment (Pearson's r)" varies with the "Number of Units" across neural network model sizes. It compares three unit counts (128, 1024, 4096) for eight model sizes, ranging from 14 million (14M) to 6.9 billion (6.9B) parameters.
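The y-axis metric, Pearson's r, measures the strength of the linear relationship between two sequences of values. The chart does not state which two quantities are correlated to produce a brain-alignment score, so the sketch below only illustrates the statistic itself. The vectors `predicted` and `measured` are hypothetical and are not data from the figure.

```python
import math


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    # Sum of co-deviations and sums of squared deviations from the means.
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / math.sqrt(var_x * var_y)


# Hypothetical values, for illustration only (not taken from the chart).
predicted = [0.2, 0.5, 0.1, 0.9, 0.4]
measured = [0.3, 0.4, 0.2, 0.7, 0.6]
r = pearson_r(predicted, measured)
print(f"r = {r:.3f}")
```

A value of 1 means a perfect positive linear relationship and 0 means none, so the bars in this chart (roughly 0.11 to 0.18) correspond to weak positive correlations.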

### Components/Axes

* **Y-Axis (Vertical):**

    * **Label:** "Brain Alignment (Pearson's r)"

    * **Scale:** Linear, ranging from 0.00 to 0.20.

    * **Major Tick Marks:** 0.00, 0.05, 0.10, 0.15, 0.20.

* **X-Axis (Horizontal):**

    * **Label:** "Number of Units"

    * **Categories:** Three discrete groups labeled "128", "1024", and "4096".

* **Legend (Positioned to the right of the chart):**

    * **Title:** "Model Size"

    * **Entries (from top to bottom, with associated color):**

        1. 14M (Dark Purple)

        2. 70M (Dark Blue)

        3. 160M (Medium Blue)

        4. 410M (Teal)

        5. 1B (Green-Teal)

        6. 1.4B (Medium Green)

        7. 2.8B (Light Green)

        8. 6.9B (Yellow-Green)

* **Data Representation:** Each of the three x-axis categories contains a cluster of eight vertical bars, one for each model size in the legend order. Each bar has a thin black vertical line extending from its top, representing an error bar (likely standard deviation or confidence interval).

### Detailed Analysis

**Data Point Extraction (Approximate Values):**

Values are estimated based on bar height relative to the y-axis grid lines. Error bar lengths are noted qualitatively.

**Group 1: Number of Units = 128**

* **Trend:** Within this group, alignment does not rise steadily with model size. It is similar for the 14M, 70M, and 410M models (~0.155 to ~0.160), dips sharply at 160M, peaks at the 1B model, and then declines through the 1.4B, 2.8B, and 6.9B models.

* **Values (Model Size: Approx. Pearson's r, Error Bar Note):**

    * 14M: ~0.155, medium error bar.

    * 70M: ~0.160, medium error bar.

    * 160M: ~0.115, **notably lower** than adjacent bars, medium error bar.

    * 410M: ~0.155, medium error bar.

    * 1B: ~0.165, **highest in this group**, medium error bar.

    * 1.4B: ~0.140, medium error bar.

    * 2.8B: ~0.125, medium error bar.

    * 6.9B: ~0.120, medium error bar.

**Group 2: Number of Units = 1024**

* **Trend:** Alignment values are generally higher and more consistent across model sizes than in the 128-unit group. The smallest models (14M, 70M) show high alignment, followed by a slight dip for mid-sized models (160M, 410M), a peak at the 1.4B model, and a sharp drop for the two largest models (2.8B, 6.9B).

* **Values (Model Size: Approx. Pearson's r, Error Bar Note):**

    * 14M: ~0.170, medium error bar.

    * 70M: ~0.170, medium error bar.

    * 160M: ~0.155, medium error bar.

    * 410M: ~0.160, medium error bar.

    * 1B: ~0.170, medium error bar.

    * 1.4B: ~0.175, **highest in this group**, medium error bar.

    * 2.8B: ~0.135, medium error bar.

    * 6.9B: ~0.135, medium error bar.

**Group 3: Number of Units = 4096**

* **Trend:** Similar to the 1024-unit group, alignment is relatively high. The smallest model (14M) is high, there's a peak at the 1B model, and a general decline for the largest models (2.8B, 6.9B).

* **Values (Model Size: Approx. Pearson's r, Error Bar Note):**

    * 14M: ~0.170, medium error bar.

    * 70M: ~0.160, medium error bar.

    * 160M: ~0.155, medium error bar.

    * 410M: ~0.155, medium error bar.

    * 1B: ~0.175, **highest in this group**, medium error bar.

    * 1.4B: ~0.155, medium error bar.

    * 2.8B: ~0.135, medium error bar.

    * 6.9B: ~0.130, medium error bar.
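The three groups are easier to compare side by side. The sketch below records the approximate values listed above in a small lookup structure, prints them as one table, and finds the tallest bar in each group. The names `MODEL_SIZES`, `ALIGNMENT`, `peak_per_group`, and `as_markdown_table` are illustrative, and the numbers are visual estimates rather than the underlying data.

```python
MODEL_SIZES = ["14M", "70M", "160M", "410M", "1B", "1.4B", "2.8B", "6.9B"]

# Approximate Pearson's r per model size (same order as MODEL_SIZES),
# keyed by number of units. Estimated from bar heights, not exact data.
ALIGNMENT = {
    128:  [0.155, 0.160, 0.115, 0.155, 0.165, 0.140, 0.125, 0.120],
    1024: [0.170, 0.170, 0.155, 0.160, 0.170, 0.175, 0.135, 0.135],
    4096: [0.170, 0.160, 0.155, 0.155, 0.175, 0.155, 0.135, 0.130],
}


def peak_per_group(alignment, sizes):
    """Return {units: (model_size, value)} for the tallest bar in each group."""
    peaks = {}
    for units, values in alignment.items():
        best_index = max(range(len(values)), key=lambda i: values[i])
        peaks[units] = (sizes[best_index], values[best_index])
    return peaks


def as_markdown_table(alignment, sizes):
    """Render the estimates as a model-size by unit-count markdown table."""
    unit_counts = list(alignment)
    lines = ["| Model Size | " + " | ".join(str(u) for u in unit_counts) + " |"]
    lines.append("|" + " --- |" * (len(unit_counts) + 1))
    for i, size in enumerate(sizes):
        cells = " | ".join(f"{alignment[u][i]:.3f}" for u in unit_counts)
        lines.append(f"| {size} | {cells} |")
    return "\n".join(lines)


peaks = peak_per_group(ALIGNMENT, MODEL_SIZES)
table = as_markdown_table(ALIGNMENT, MODEL_SIZES)
print(table)
print(peaks)
```

With these estimates the peaks fall at 1B (128 units), 1.4B (1024 units), and 1B (4096 units), matching the bars marked as highest in each group.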

### Key Observations

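1. **Unit Count Impact:** Moving from 128 to 1024 units increases the estimated Brain Alignment score for all eight model sizes, with the largest gains for the 160M (~+0.040) and 1.4B (~+0.035) models. Performance at 4096 units is similar to performance at 1024 units: slightly lower for four model sizes (70M, 410M, 1.4B, 6.9B), equal for three (14M, 160M, 2.8B), and higher only for 1B.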

2. **Model Size Impact:** There is no simple linear relationship between model size and alignment. Within every unit group, performance peaks at an intermediate model size (1B or 1.4B) rather than at the largest (6.9B) or smallest (14M) model.

3. **Notable Outlier:** The 160M model at 128 units shows a distinct dip in alignment (~0.115) compared to its neighbors (~0.160 and ~0.155). The dip largely disappears at higher unit counts, where the 160M model sits within ~0.015 of its neighbors.

4. **Consistency:** The error bars are of similar magnitude across all data points, suggesting consistent variability in the measurements. No single measurement has an exceptionally large or small error bar.
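The first and third observations can be checked mechanically against the approximate values from the Detailed Analysis. The sketch below counts how many model sizes go up, stay equal, or go down between two unit counts, and measures how far the 160M bar sits below the average of its two neighbors. The helper names are illustrative, and because the inputs are visual estimates the results are indicative only.

```python
MODEL_SIZES = ["14M", "70M", "160M", "410M", "1B", "1.4B", "2.8B", "6.9B"]

# Approximate values read off the chart (same order as MODEL_SIZES).
ALIGNMENT = {
    128:  [0.155, 0.160, 0.115, 0.155, 0.165, 0.140, 0.125, 0.120],
    1024: [0.170, 0.170, 0.155, 0.160, 0.170, 0.175, 0.135, 0.135],
    4096: [0.170, 0.160, 0.155, 0.155, 0.175, 0.155, 0.135, 0.130],
}


def count_changes(alignment, from_units, to_units):
    """Count model sizes whose score goes (up, same, down) between unit counts."""
    up = same = down = 0
    for low, high in zip(alignment[from_units], alignment[to_units]):
        diff = round(high - low, 3)  # rounding removes floating-point noise
        if diff > 0:
            up += 1
        elif diff < 0:
            down += 1
        else:
            same += 1
    return up, same, down


def dip_below_neighbors(alignment, sizes, units, size):
    """How far a bar sits below the mean of its two adjacent bars.

    Only valid for model sizes that have a neighbor on both sides.
    """
    i = sizes.index(size)
    values = alignment[units]
    neighbor_mean = (values[i - 1] + values[i + 1]) / 2
    return round(neighbor_mean - values[i], 4)


step_128_to_1024 = count_changes(ALIGNMENT, 128, 1024)
step_1024_to_4096 = count_changes(ALIGNMENT, 1024, 4096)
dips_160m = {
    units: dip_below_neighbors(ALIGNMENT, MODEL_SIZES, units, "160M")
    for units in ALIGNMENT
}
print(step_128_to_1024, step_1024_to_4096, dips_160m)
```

On these estimates the 160M dip is about 0.04 at 128 units and 0.01 or less at 1024 and 4096 units.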

### Interpretation

The data suggests that "Brain Alignment," as measured by Pearson's correlation coefficient, is influenced by an interaction between model size (parameter count) and the number of units (likely hidden layer width or a similar architectural dimension).

* **Optimal Configuration:** The highest alignment scores (~0.175) are achieved with intermediate model sizes (1B, 1.4B) paired with a higher number of units (1024 or 4096). This indicates a "sweet spot" where model capacity and architectural scale are balanced for this specific metric.

* **Diminishing Returns:** Simply increasing model size to the largest tested (6.9B) does not yield better alignment and often results in lower scores than smaller models. Similarly, increasing units from 1024 to 4096 provides no clear benefit and may slightly reduce alignment.

* **Architectural Sensitivity:** The poor performance of the 160M model at 128 units, which disappears at higher unit counts, hints at a potential architectural instability or suboptimal configuration for that specific combination. This anomaly underscores that scaling rules may not be uniform across all model sizes.

* **Practical Implication:** For tasks where maximizing "Brain Alignment" is the goal, this chart argues against blindly scaling up both model size and unit count. Instead, it supports a more nuanced approach of tuning these two hyperparameters together, with intermediate values often proving most effective. The metric appears to saturate, and performance can degrade with over-scaling.
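The "Optimal Configuration" and "Diminishing Returns" points can be made concrete with the same approximate values. The sketch below finds the configurations that reach the highest estimated score and, for each unit count, how far the 6.9B model falls short of the best model in its group. The function names are illustrative. Since the gaps come from visual estimates and the chart's error bars are not quantified here, they should not be read as statistically established differences.

```python
MODEL_SIZES = ["14M", "70M", "160M", "410M", "1B", "1.4B", "2.8B", "6.9B"]

# Approximate values read off the chart (same order as MODEL_SIZES).
ALIGNMENT = {
    128:  [0.155, 0.160, 0.115, 0.155, 0.165, 0.140, 0.125, 0.120],
    1024: [0.170, 0.170, 0.155, 0.160, 0.170, 0.175, 0.135, 0.135],
    4096: [0.170, 0.160, 0.155, 0.155, 0.175, 0.155, 0.135, 0.130],
}


def best_configurations(alignment, sizes):
    """Return the top score and every (units, model_size) pair that reaches it."""
    top = max(max(values) for values in alignment.values())
    configs = [
        (units, sizes[i])
        for units, values in alignment.items()
        for i, value in enumerate(values)
        if value == top
    ]
    return top, configs


def shortfall_of_largest(alignment):
    """Per unit count: best score in the group minus the largest model's score."""
    return {
        units: round(max(values) - values[-1], 3)  # values[-1] is the 6.9B bar
        for units, values in alignment.items()
    }


top_score, top_configs = best_configurations(ALIGNMENT, MODEL_SIZES)
shortfall = shortfall_of_largest(ALIGNMENT)
print(top_score, top_configs)
print(shortfall)
```

On these estimates the top score is shared by 1.4B at 1024 units and 1B at 4096 units, and the 6.9B model trails the best model in its group by roughly 0.04 at every unit count.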