
## Diagram: State Transition Flowchart with Decision Paths

### Overview

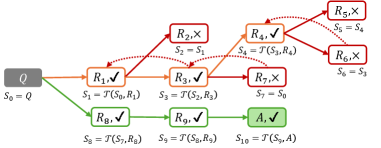

The image displays a directed graph, or flowchart, illustrating a state transition process, likely representing a search algorithm, decision tree, or reinforcement learning trajectory. It maps the progression from an initial state `Q` through intermediate nodes (`R₁` to `R₁₀`) to a final node `A`. The diagram uses color coding and ✓/✗ marks to denote success or failure, and mathematical notation to describe state transformations.

### Components/Axes

* **Node Types & Labels:** Each node is a rounded rectangle containing a label (e.g., `R₁`, `A`, `Q`) and a symbol (✓ or ✗). Below each node is a state transition equation.

* **Color Coding:**

  * **Orange:** Nodes `R₁`, `R₃`, `R₄`, `R₁₀`. These are the ✓ nodes of the upper branch, which appears to be the primary or explored branch.

  * **Green:** Nodes `Q`, `R₈`, `R₉`, `A`. This appears to be a successful or alternative branch.

  * **Red:** Nodes `R₂`, `R₅`, `R₆`, `R₇`. These nodes are marked with ✗, indicating failure or termination.

* **Connections:** Solid arrows indicate primary flow. Dotted red arrows indicate secondary or alternative transitions (e.g., from `R₄` to `R₆` and `R₅`).

* **Spatial Layout:** The flowchart originates from a single node `Q` on the far left. It branches into two main paths: an upper/orange path and a lower/green path. The final node `A` is positioned at the bottom right.

### Detailed Analysis

**Path 1 (Upper/Orange Branch):**

1. **Start:** `Q` (Green) - `S₀ = Q`

2. **Step 1:** `R₁, ✓` (Orange) - `S₁ = T(S₀, R₁)`

3. **Step 2 (from R₁):**

   * `R₂, ✗` (Red) - `S₂ = S₁` (no state change)

   * `R₃, ✓` (Orange) - `S₃ = T(S₂, R₃)`

4. **Step 3 (from R₂):**

   * `R₄, ✓` (Orange) - `S₄ = T(S₃, R₄)`

   * `R₅, ✗` (Red) - `S₅ = S₄` (no state change)

5. **Step 4 (from R₃):**

   * `R₇, ✗` (Red) - `S₇ = S₆` (note: `S₆` is only defined under Step 5, so this refers to a state listed later rather than introducing a new transition)

6. **Step 5 (from R₄):**

   * `R₆, ✗` (Red) - `S₆ = S₄` (no state change)

   * `R₁₀, ✓` (Orange) - `S₁₀ = T(S₇, R₁₀)` (references `S₇` from the `R₃` branch)
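Because `S₇ = S₆` refers to a state whose equation appears later in the list, the upper-branch equations are easiest to read as a set of definitions that can be resolved in dependency order rather than listing order. The sketch below assumes a hypothetical `T` that simply appends the applied rule to a tuple recording the history, and takes `S₀ = ("Q",)`; neither choice comes from the diagram itself.

```python
from functools import lru_cache
from typing import Dict, Optional, Tuple

State = Tuple[str, ...]

# Upper-branch equations from the walkthrough above.
# ("T", m, rule) encodes S_n = T(S_m, rule); ("same", m, None) encodes S_n = S_m.
EQUATIONS: Dict[int, Tuple[str, int, Optional[str]]] = {
    1: ("T", 0, "R1"),
    2: ("same", 1, None),
    3: ("T", 2, "R3"),
    4: ("T", 3, "R4"),
    5: ("same", 4, None),
    6: ("same", 4, None),
    7: ("same", 6, None),  # listed before S_6 in the text, but S_6 has a lower index
    10: ("T", 7, "R10"),
}


def transition(state: State, rule: str) -> State:
    """Hypothetical T(S, R): append the applied rule to the state's history."""
    return state + (rule,)


@lru_cache(maxsize=None)
def resolve(n: int) -> State:
    """Evaluate S_n by first resolving the state its equation depends on."""
    if n == 0:
        return ("Q",)  # S_0 = Q
    kind, m, rule = EQUATIONS[n]
    if kind == "T":
        assert rule is not None
        return transition(resolve(m), rule)
    return resolve(m)  # a ✗ step: S_n = S_m


print(resolve(7))   # ('Q', 'R1', 'R3', 'R4')
print(resolve(10))  # ('Q', 'R1', 'R3', 'R4', 'R10')
```

The recursion terminates because every equation here depends on a lower-indexed state, so there is no cycle. Under this hypothetical `T`, `S₁₀` accumulates exactly the ✓ steps `R₁`, `R₃`, `R₄`, `R₁₀`.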

**Path 2 (Lower/Green Branch):**

1. **Start:** `Q` (Green) - `S₀ = Q`

2. **Step 1:** `R₈, ✓` (Green) - `S₈ = T(S₀, R₈)`

3. **Step 2:** `R₉, ✓` (Green) - `S₉ = T(S₈, R₉)`

4. **Step 3 (Final):** `A, ✓` (Green) - `S₁₀ = T(S₉, A)`

**State Transition Notation:** The notation `Sₙ = T(Sₘ, Rₖ)` indicates that state `Sₙ` is the result of applying the transformation `T` to an earlier state `Sₘ` using the action or rule `Rₖ`. An identity such as `S₂ = S₁` marks a step that leaves the state unchanged; in this diagram every such identity belongs to a ✗ node.
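The two equation forms can be folded into a single step rule: a ✓ step applies `T`, and a ✗ step carries the previous state forward. In this minimal sketch, `transition` is a hypothetical stand-in for `T` that appends the rule to a history tuple, and the `(rule, succeeded)` pairs are read off the ✓/✗ marks.

```python
from functools import reduce
from typing import Iterable, Tuple

State = Tuple[str, ...]


def transition(state: State, rule: str) -> State:
    """Hypothetical T(S, R): append the applied rule to the state's history."""
    return state + (rule,)


def step(state: State, rule: str, succeeded: bool) -> State:
    """A ✓ step yields T(state, rule); a ✗ step leaves the state unchanged."""
    return transition(state, rule) if succeeded else state


def run(start: State, steps: Iterable[Tuple[str, bool]]) -> State:
    """Fold a sequence of (rule, succeeded) pairs over the start state."""
    return reduce(lambda s, rs: step(s, rs[0], rs[1]), steps, start)


# Green path: S_0 = Q, then R_8, R_9 and A all succeed.
print(run(("Q",), [("R8", True), ("R9", True), ("A", True)]))
# ('Q', 'R8', 'R9', 'A')

# Start of the upper branch: R_2 fails, so S_3 = T(S_2, R_3) = T(S_1, R_3).
print(run(("Q",), [("R1", True), ("R2", False), ("R3", True)]))
# ('Q', 'R1', 'R3')
```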

### Key Observations

1. **Two Distinct Outcomes:** The green path (`Q -> R₈ -> R₉ -> A`) leads directly to a successful terminal state `A`. The orange/red path is more complex, involving multiple branches, failures (✗), and out-of-order references (e.g., `R₇` referencing `S₆`).

2. **Failure States:** Nodes marked with ✗ (`R₂`, `R₅`, `R₇`, `R₆`) are all colored red and all leave the state unchanged (`Sₙ = Sₘ`), indicating dead ends.

3. **Complex Interconnection:** The orange path is not linear. It features branching (`R₁` to `R₂`/`R₃`), further branching along the dotted lines from `R₄` to `R₅` and `R₆`, and cross-branch references (`R₁₀` using `S₇` from the `R₃` sub-branch).

4. **Final State Convergence:** Both main branches ultimately reference a state `S₁₀`. The green path defines it as `T(S₉, A)`, while the orange path defines it via `R₁₀` as `T(S₇, R₁₀)`. This suggests `S₁₀` is a goal state achievable via different sequences.

### Interpretation

This diagram models a **search or decision-making process** in which an agent explores possible actions from an initial state `Q`. The green path represents an **optimal or successful policy**: a direct, three-step sequence (`R₈, R₉, A`) that achieves the goal. The orange/red path represents a **more exploratory, trial-and-error process**. It includes successful steps (`R₁, R₃, R₄, R₁₀`) but also encounters failures (`R₂, R₅, R₇, R₆`) and complex state dependencies.

The notation `T(S, R)` strongly suggests a **formal transition function**, common in fields like:

* **Reinforcement Learning:** Where an agent takes actions (`R`) in states (`S`) to receive rewards and new states.

* **Automated Theorem Proving or Planning:** Where `R` represents rule applications to transform a logical state `S`.

* **Algorithmic Search:** Where nodes represent states and edges represent operations.
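For the algorithmic-search reading, one way to compare the branches is a breadth-first search for the shortest route from `Q` to a node whose state is `S₁₀`. The adjacency below is an assumption: it follows the state equations rather than the step headings, taking each node's parent to be the node whose state appears on the right-hand side of its equation (for example, `S₇ = S₆` makes `R₆` the parent of `R₇`). `A` and `R₁₀` are both treated as goals because both define `S₁₀`.

```python
from collections import deque
from typing import Dict, List, Optional, Set

# Hypothetical adjacency (parent -> children), read from the state equations.
GRAPH: Dict[str, List[str]] = {
    "Q": ["R1", "R8"],
    "R1": ["R2"],
    "R2": ["R3"],
    "R3": ["R4"],
    "R4": ["R5", "R6"],  # the dotted red arrows from R_4
    "R6": ["R7"],
    "R7": ["R10"],
    "R8": ["R9"],
    "R9": ["A"],
}


def shortest_path(start: str, goals: Set[str]) -> Optional[List[str]]:
    """Breadth-first search; returns the first (shortest) path that reaches a goal."""
    parents: Dict[str, Optional[str]] = {start: None}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if node in goals:
            # Walk the parent links back to the start to rebuild the path.
            path: List[str] = []
            cur: Optional[str] = node
            while cur is not None:
                path.append(cur)
                cur = parents[cur]
            return path[::-1]
        for child in GRAPH.get(node, []):
            if child not in parents:
                parents[child] = node
                queue.append(child)
    return None


print(shortest_path("Q", {"A", "R10"}))  # ['Q', 'R8', 'R9', 'A']
```

Under this reading, the green route needs 3 transitions while the route ending at `R₁₀` needs 7, which is consistent with the green path being the more direct one.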

The key insight is that **multiple pathways can lead to the same goal state (`S₁₀`)**, but they differ dramatically in efficiency and risk of failure. The diagram visually argues for the superiority of the direct green policy over the convoluted orange exploration. The presence of failures (✗) and non-transformative steps highlights the cost of suboptimal decision-making.
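The efficiency gap can be made concrete by tallying the ✓/✗ marks on each branch as listed under Detailed Analysis. This sketch counts nodes only and assumes no costs or rewards beyond the marks themselves; `branch_stats` is a hypothetical helper, not something defined by the diagram.

```python
from typing import Dict, List, Tuple

# (node, succeeded) pairs taken from the ✓/✗ marks in the walkthrough.
BRANCHES: Dict[str, List[Tuple[str, bool]]] = {
    "green": [("R8", True), ("R9", True), ("A", True)],
    "orange": [
        ("R1", True), ("R2", False), ("R3", True), ("R4", True),
        ("R5", False), ("R6", False), ("R7", False), ("R10", True),
    ],
}


def branch_stats(steps: List[Tuple[str, bool]]) -> Dict[str, float]:
    """Count steps and failures, plus the fraction of steps that failed."""
    failures = sum(1 for _, ok in steps if not ok)
    return {
        "steps": len(steps),
        "failures": failures,
        "failure_rate": failures / len(steps),
    }


for name, steps in BRANCHES.items():
    print(name, branch_stats(steps))
# green {'steps': 3, 'failures': 0, 'failure_rate': 0.0}
# orange {'steps': 8, 'failures': 4, 'failure_rate': 0.5}
```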