## Neural Network Diagram: Simple Feedforward Network

### Overview

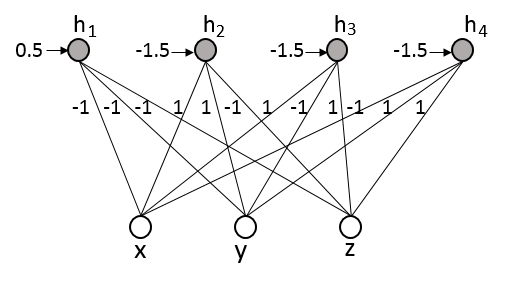

The image depicts a simple feedforward neural network with one hidden layer. It shows the connections between input nodes (x, y, z) and hidden nodes (h1, h2, h3, h4), along with the associated weights.

### Components

* **Nodes:**

  * Input Nodes: x, y, z (represented as white circles)

  * Hidden Nodes: h1, h2, h3, h4 (represented as gray circles)

* **Connections:** Lines connecting input nodes to hidden nodes, each labeled with a weight.

* **Weights:** Numerical values labeled near each connection line. The magnitude gives the strength of the connection, and the sign indicates whether the input raises (positive) or lowers (negative) the hidden node's weighted sum.

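* **Input Values to Hidden Nodes:** A single numerical value labeled at each hidden node. The diagram does not name this quantity; because it is a fixed number rather than something that depends on x, y, and z, it most plausibly acts as a bias (offset) added to that node's weighted sum.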

### Detailed Analysis

* **Input Nodes:** x, y, and z are located at the bottom of the diagram.

* **Hidden Nodes:** h1, h2, h3, and h4 are located at the top of the diagram.

* **Connections and Weights:**

  * h1:

    * x: -1

    * y: -1

    * z: -1

    * Input Value: 0.5

  * h2:

    * x: 1

    * y: 1

    * z: -1

    * Input Value: -1.5

  * h3:

    * x: 1

    * y: -1

    * z: 1

    * Input Value: -1.5

  * h4:

    * x: -1

    * y: 1

    * z: 1

    * Input Value: -1.5
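
The weights listed above can be collected into a small lookup structure and combined with an input, as in the following minimal Python sketch. It assumes, which the diagram does not state, that the value labeled at each hidden node is added to the weighted sum like a bias. The names `WEIGHTS`, `NODE_VALUES`, and `pre_activations`, as well as the sample input, are hypothetical and chosen only for illustration.

```python
# Weights read from the diagram: one entry per hidden node,
# with the tuple ordered as (x, y, z).
WEIGHTS = {
    "h1": (-1, -1, -1),
    "h2": (1, 1, -1),
    "h3": (1, -1, 1),
    "h4": (-1, 1, 1),
}

# Value labeled at each hidden node ("Input Value" in the list above).
# ASSUMPTION: it is added to the weighted sum like a bias term.
NODE_VALUES = {"h1": 0.5, "h2": -1.5, "h3": -1.5, "h4": -1.5}


def pre_activations(x, y, z):
    """Return the weighted sum plus the node value for each hidden node."""
    inputs = (x, y, z)
    return {
        name: sum(w * v for w, v in zip(weights, inputs)) + NODE_VALUES[name]
        for name, weights in WEIGHTS.items()
    }


if __name__ == "__main__":
    # Hypothetical input, not taken from the diagram.
    print(pre_activations(1, 1, 0))
```

For the sample input (1, 1, 0), only h2 ends up with a positive result (0.5) under this assumption; the other three nodes evaluate to -1.5.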

### Key Observations

* The network has a fully connected architecture between the input and hidden layers.

* The weights are either 1 or -1, indicating positive or negative influence.

* The input values to the hidden nodes differ: h1 has 0.5, while h2, h3, and h4 each have -1.5.

### Interpretation

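The diagram illustrates the input-to-hidden stage of a basic feedforward network. The weights on the connections determine how the input signals are combined: each hidden node forms a weighted sum of x, y, and z. The input value labeled at each hidden node is a fixed number, so it cannot itself be the result of that summation, which changes with the inputs; it most plausibly acts as a bias or threshold offset added to the sum. A network like this could be used for simple classification or regression tasks, where the input nodes represent features and the hidden nodes represent intermediate representations of those features. In a trained network these parameters would come from the training process, although the uniform weights of 1 and -1 and the values 0.5 and -1.5 suggest that this example may have been constructed by hand for illustration.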
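
One way to probe this interpretation is to enumerate inputs and check which hidden nodes respond. The sketch below rests on three assumptions that the diagram does not state: the inputs are binary (0 or 1), the value labeled at each hidden node is added to the weighted sum like a bias, and a node "fires" when the resulting sum is positive (a step activation). The names `active_nodes` and `firing_table` are illustrative.

```python
from itertools import product

# Weights read from the diagram, ordered as (x, y, z) per hidden node.
WEIGHTS = {
    "h1": (-1, -1, -1),
    "h2": (1, 1, -1),
    "h3": (1, -1, 1),
    "h4": (-1, 1, 1),
}

# ASSUMPTION: the value labeled at each hidden node acts as a bias.
NODE_VALUES = {"h1": 0.5, "h2": -1.5, "h3": -1.5, "h4": -1.5}


def active_nodes(x, y, z):
    """Names of hidden nodes whose weighted sum plus node value is positive."""
    inputs = (x, y, z)
    return [
        name
        for name, weights in WEIGHTS.items()
        # ASSUMPTION: step activation with a threshold at zero.
        if sum(w * v for w, v in zip(weights, inputs)) + NODE_VALUES[name] > 0
    ]


def firing_table():
    """Map every binary input triple (x, y, z) to the nodes it activates."""
    return {bits: active_nodes(*bits) for bits in product((0, 1), repeat=3)}


if __name__ == "__main__":
    for bits, names in firing_table().items():
        print(bits, names)
```

Under these assumptions each hidden node responds to exactly one input pattern (h1 to 000, h2 to 110, h3 to 101, h4 to 011), and no node responds to the remaining four patterns.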