
## Diagram: Prompt-Response Token Annotation

### Overview

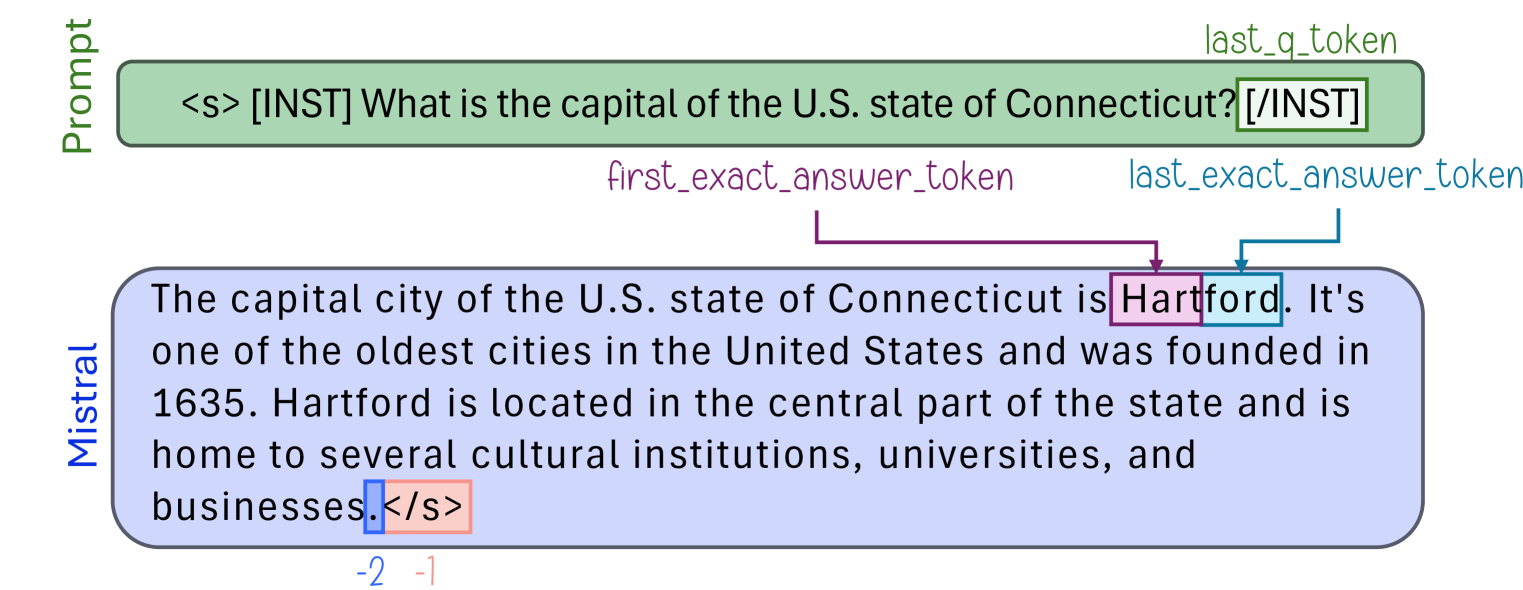

The image is a technical diagram illustrating the tokenization and annotation process for a prompt-response pair in a language model interaction. It visually maps specific tokens within a user's prompt and the model's generated response, highlighting key segments like the question, the exact answer, and end-of-sequence markers.

### Components

The diagram consists of two primary rounded rectangular boxes connected by annotated arrows, set against a plain white background.

1. **Prompt Box (Top, Green Fill):**

    * **Label:** "Prompt" (written vertically on the left side).

    * **Content:** `<s> [INST] What is the capital of the U.S. state of Connecticut? [/INST]`

    * **Annotations:**

        * A green arrow labeled `last_q_token` points to the closing `[/INST]` tag.

        * The entire prompt text is enclosed in a green box.

2. **Response Box (Bottom, Blue Fill):**

    * **Label:** "Mistral" (written vertically on the left side).

    * **Content:** `The capital city of the U.S. state of Connecticut is Hartford. It's one of the oldest cities in the United States and was founded in 1635. Hartford is located in the central part of the state and is home to several cultural institutions, universities, and businesses.</s>`

    * **Annotations:**

        * A purple arrow labeled `first_exact_answer_token` points to the start of the word "Hartford".

        * A blue arrow labeled `last_exact_answer_token` points to the end of the word "Hartford".

        * The word "Hartford" is enclosed in a purple box.

        * The final token `</s>` is enclosed in an orange box.

        * Below the orange box, the numbers `-2` (in blue) and `-1` (in orange) are written, likely indicating token indices relative to the end of the sequence.

### Detailed Analysis

The diagram explicitly maps the structure of a single instruction-following interaction:

* **Prompt Structure:** The user prompt is formatted with special tokens: `<s>` (start of sequence), `[INST]` (instruction start), the question text, and `[/INST]` (instruction end). The annotation `last_q_token` marks the final token of the prompt, which in the diagram is the closing `[/INST]` tag.

* **Response Structure:** The model's response is a continuous block of text ending with the `</s>` (end of sequence) token.

* **Answer Extraction:** The diagram isolates the core factual answer ("Hartford") within the longer response. It defines `first_exact_answer_token` and `last_exact_answer_token` to bracket this answer span precisely.

* **Token Indexing:** The numbers `-2` and `-1` beneath the `</s>` token suggest a reverse indexing scheme for the final tokens in the sequence, where `-1` is the last token (`</s>`) and `-2` is the token immediately preceding it (the period after "businesses").
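The mapping described above can be sketched in Python. This is a minimal illustration, not the tooling behind the diagram: `toy_tokenize` is a hypothetical regex-based stand-in for the model's real subword tokenizer, so the absolute indices it produces will not match real token positions. `find_span`, `annotate`, and the dictionary layout are likewise names chosen here, and `last_q_token` is assumed to be the final prompt token (`[/INST]`), as the arrow in the diagram suggests.

```python
import re

# Hypothetical stand-in for a real subword tokenizer: keeps the special
# tokens whole and splits everything else into words and punctuation marks.
TOKEN_RE = re.compile(r"</?s>|\[/?INST\]|\w+(?:'\w+)?|[^\w\s]")


def toy_tokenize(text):
    return TOKEN_RE.findall(text)


def find_span(haystack, needle):
    """Return the start index of the first occurrence of needle, or None."""
    n = len(needle)
    if n == 0:
        return None
    for i in range(len(haystack) - n + 1):
        if haystack[i:i + n] == needle:
            return i
    return None


def annotate(prompt, response, exact_answer):
    """Locate the positions named in the diagram within prompt + response."""
    p, r, a = (toy_tokenize(t) for t in (prompt, response, exact_answer))
    start = find_span(r, a)
    if start is None:
        raise ValueError("exact answer not found in response")
    tokens = p + r
    spans = {
        "last_q_token": len(p) - 1,  # assumption: final prompt token
        "first_exact_answer_token": len(p) + start,
        "last_exact_answer_token": len(p) + start + len(a) - 1,
    }
    return tokens, spans


PROMPT = "<s> [INST] What is the capital of the U.S. state of Connecticut? [/INST]"
RESPONSE = (
    "The capital city of the U.S. state of Connecticut is Hartford. "
    "It's one of the oldest cities in the United States and was founded in 1635. "
    "Hartford is located in the central part of the state and is home to several "
    "cultural institutions, universities, and businesses.</s>"
)

tokens, spans = annotate(PROMPT, RESPONSE, "Hartford")
for name, idx in spans.items():
    print(name, idx, repr(tokens[idx]))

# Negative indices address the end of the sequence regardless of its length.
print(repr(tokens[-2]), repr(tokens[-1]))
```

Because "Hartford" is a single token under this toy tokenizer, the first and last answer indices coincide; with a subword tokenizer the answer may span several tokens, which is presumably why the diagram labels both ends.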

### Key Observations

1. **Instruction Format:** The prompt uses a clear `[INST]...[/INST]` delimiter format, common in models fine-tuned for instruction following.

2. **Answer Localization:** The model's response contains the exact answer ("Hartford") embedded within a longer, informative sentence. The annotations mark where this answer span begins and ends.

3. **End-of-Sequence Handling:** The diagram explicitly marks the model's stop token (`</s>`) and provides a mechanism (negative indexing) to reference tokens at the very end of the generated sequence.

4. **Visual Coding:** Color is used systematically: green for prompt elements, blue for response elements, purple for the answer span, and orange for the end token.
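The instruction format noted in the first observation can be illustrated with a small round-trip sketch. The spacing follows the prompt string as rendered in the diagram; real chat templates may differ in whitespace, and the `<s>` token is often added by the tokenizer rather than written into the string. `build_prompt` and `extract_question` are hypothetical helper names introduced for this example.

```python
import re


def build_prompt(question):
    """Wrap a question in the delimiters shown in the diagram."""
    return f"<s> [INST] {question} [/INST]"


# Captures the text between [INST] and [/INST], ignoring surrounding spaces.
PROMPT_RE = re.compile(r"^<s>\s*\[INST\]\s*(.*?)\s*\[/INST\]$", re.DOTALL)


def extract_question(prompt):
    """Return the question inside the delimiters, or None if the format differs."""
    match = PROMPT_RE.match(prompt)
    return match.group(1) if match else None


question = "What is the capital of the U.S. state of Connecticut?"
prompt = build_prompt(question)
print(prompt)
print(extract_question(prompt))
```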

### Interpretation

This diagram appears to be a schematic for a **token-level evaluation or analysis pipeline**. It illustrates a method for:

* **Parsing Structured Prompts:** Identifying the boundaries of the user's instruction within a formatted input string.

* **Extracting Model Answers:** Precisely locating the core answer within a verbose model generation, which is crucial for automated scoring (e.g., exact match, F1 score) against a ground truth.

* **Understanding Model Output Structure:** Analyzing how a model like "Mistral" structures its responses, including its use of end-of-sequence tokens.

The relationship shown is a **mapping from raw text to annotated token spans**. The "Prompt" box is the input, the "Mistral" box is the output, and the arrows and boxes represent the metadata extracted by an analysis tool. Span annotations of this kind are useful for benchmarking model performance and debugging generation issues, and could also support tasks such as training reward models for reinforcement learning from human feedback (RLHF). The negative indices (`-2`, `-1`) follow a common programming convention for addressing the final elements of a variable-length sequence by counting from its end.
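The automated scoring mentioned above (exact match, F1) can be sketched as follows. This is a generic token-overlap formulation in the style commonly used for question-answering benchmarks, not a method taken from the diagram; `normalize`, `exact_match`, and `token_f1` are illustrative names, and the normalization rules (lowercasing, dropping punctuation) are assumptions.

```python
import re
from collections import Counter


def normalize(text):
    """Lowercase, replace punctuation with spaces, and split on whitespace."""
    return re.sub(r"[^\w\s]", " ", text.lower()).split()


def exact_match(prediction, gold):
    """True when both strings normalize to the same token sequence."""
    return normalize(prediction) == normalize(gold)


def token_f1(prediction, gold):
    """Harmonic mean of token-level precision and recall."""
    pred, ref = normalize(prediction), normalize(gold)
    if not pred or not ref:
        return float(pred == ref)
    overlap = sum((Counter(pred) & Counter(ref)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred)
    recall = overlap / len(ref)
    return 2 * precision * recall / (precision + recall)


# Scoring the extracted span rather than the full verbose response:
print(exact_match("Hartford.", "Hartford"))
print(token_f1("Hartford Connecticut", "Hartford"))
```

Scoring only the extracted answer span keeps the surrounding explanatory text from lowering precision, which is one reason locating the span matters before comparing against a ground truth.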