## Diagram: Question Answering Example

### Overview

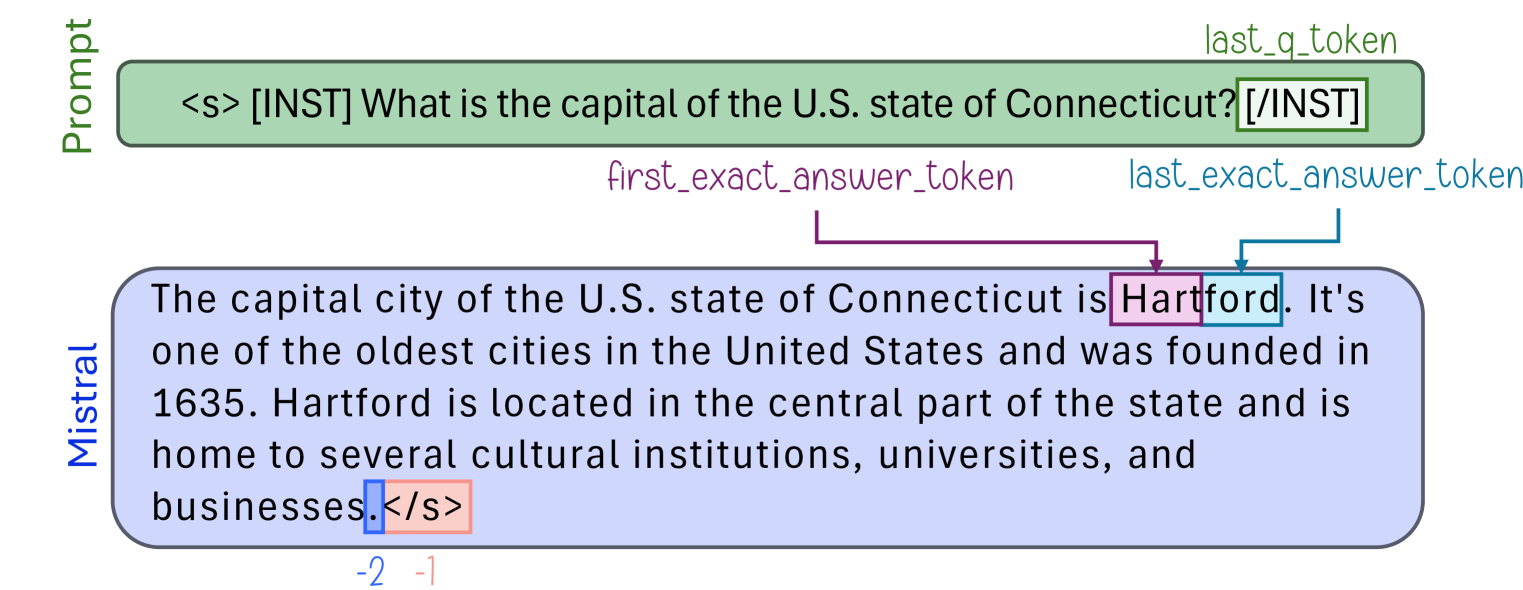

The image illustrates an example of a question-answering interaction, likely demonstrating how a model like Mistral processes a prompt and generates a response. It highlights the prompt, the model's answer, and the tokenization of the answer.

### Components/Axes

* **Prompt:** The input question provided to the model.

* **Mistral:** The model generating the answer.

* **first_exact_answer_token:** Indicates the start of the correct answer within the model's response.

* **last_exact_answer_token:** Indicates the end of the correct answer within the model's response.

* **last_q_token:** Indicates the last token of the question.

* **Tags:** "\<s>", "[INST]", "[/INST]", "\</s>" are special tokens used for formatting and instruction.

* **Numerical Indices:** "-2", "-1" are numerical indices, likely representing token positions relative to the end of the sequence.

### Detailed Analysis or ### Content Details

* **Prompt (Top, Green Box):**

* Text: "\<s> [INST] What is the capital of the U.S. state of Connecticut? [/INST]"

* **Mistral Response (Bottom, Blue Box):**

* Text: "The capital city of the U.S. state of Connecticut is Hartford. It's one of the oldest cities in the United States and was founded in 1635. Hartford is located in the central part of the state and is home to several cultural institutions, universities, and businesses.\</s>"

* **Token Annotations:**

* "first_exact_answer_token" (Purple Line): Points to the word "Hartford".

* "last_exact_answer_token" (Teal Line): Points to the word "Hartford".

* "last_q_token" (Green Line): Points to the end of the prompt.

* **Numerical Indices:**

* "-2" (Orange Box): Located near the end of the Mistral response.

* "-1" (Orange Box): Located near the end of the Mistral response.

### Key Observations

* The model correctly identifies "Hartford" as the capital of Connecticut.

* The "first_exact_answer_token" and "last_exact_answer_token" both point to "Hartford", indicating that the model's answer is precise.

* The model provides additional context about Hartford beyond just stating its name.

* The tags \<s>, [INST], [/INST], and \</s> are used to structure the prompt and response.

### Interpretation

The diagram demonstrates a successful question-answering interaction. The model (Mistral) receives a prompt, processes it, and generates a relevant and accurate response. The annotations highlight the specific tokens that constitute the correct answer, suggesting a mechanism for evaluating the model's performance. The additional context provided in the response indicates a degree of understanding beyond simple keyword matching. The numerical indices at the end of the response likely represent token positions, potentially used for further analysis or processing of the output.