## Diagram: Prompt-Response Interaction with Token Highlighting

### Overview

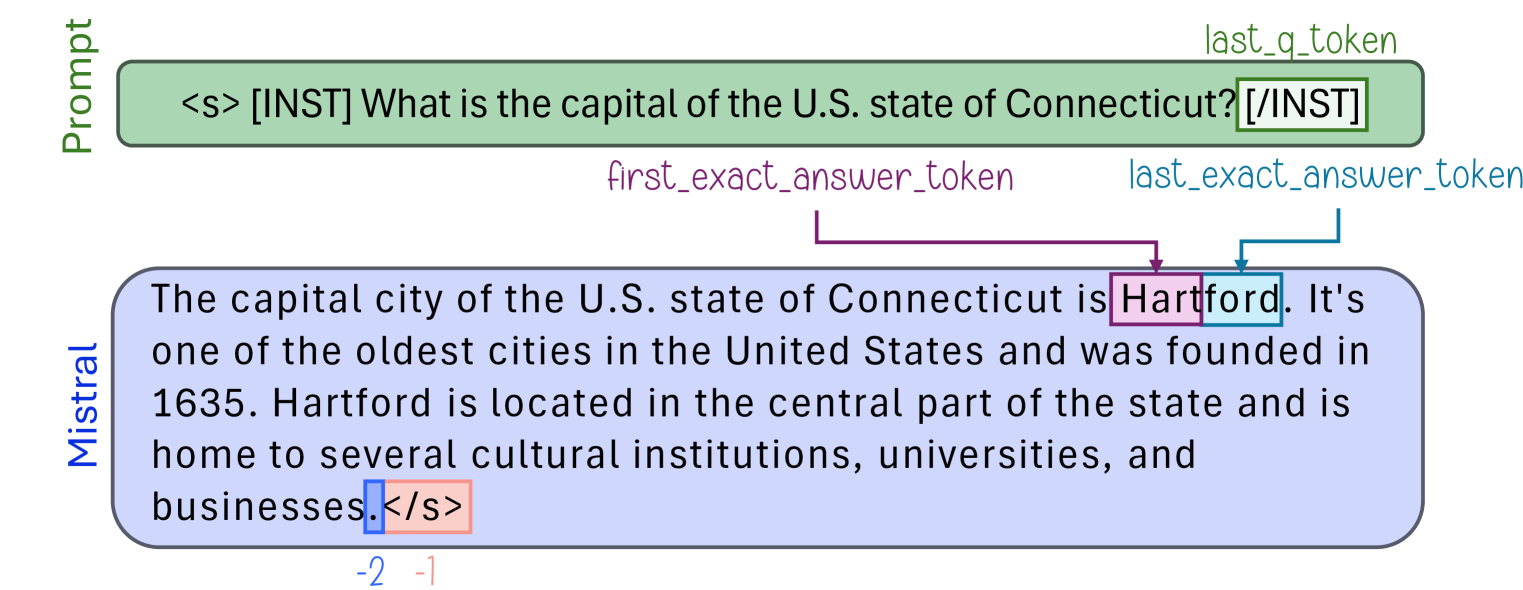

The diagram illustrates a question-answering exchange: the prompt asks for the capital of Connecticut, and the response answers "Hartford". Color-coded boxes and arrows label specific token positions in the prompt and the response, and the values `-2 -1` appear near the bottom as additional markers whose meaning is not stated.

### Components

1. **Prompt Section**:
   - Green box containing `<s> [INST] What is the capital of the U.S. state of Connecticut? [/INST]`.
   - The question is wrapped in the instruction tokens `[INST]` and `[/INST]` and preceded by the beginning-of-sequence token `<s>`.

2. **Response Section**:
   - Blue box containing the answer: "The capital city of the U.S. state of Connecticut is Hartford. It's one of the oldest cities...".
   - Highlighted tokens:
     - **Purple box**: "Hartford", labeled `first_exact_answer_token`.
     - **Blue box**: "Hartford", labeled `last_exact_answer_token`.
     - **Red box**: `</s>`, the end-of-sequence token.

3. **Arrows and Labels**:
   - A purple arrow connects the `first_exact_answer_token` label to "Hartford".
   - A blue arrow connects the `last_exact_answer_token` label to "Hartford".
   - The label `last_q_token` appears at the top-right of the prompt, apparently marking the final token of the question.

4. **Additional Markers**:
   - The values `-2 -1` appear at the bottom-left without a label. Negative values are consistent with positional offsets counted back from the end of the sequence, but the diagram does not say so.
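A minimal sketch of how the prompt in the green box could be assembled, assuming a single-turn exchange with no system prompt and the beginning-of-sequence token written literally as `<s>`. The helper name `build_inst_prompt` is hypothetical, and a real tokenizer may handle `<s>` and the surrounding spaces differently.

```python
def build_inst_prompt(question: str) -> str:
    """Wrap a question in the [INST] ... [/INST] format shown in the diagram (hypothetical helper)."""
    # Assumption: single turn, no system prompt, BOS written literally as "<s>".
    return f"<s> [INST] {question} [/INST]"


prompt = build_inst_prompt("What is the capital of the U.S. state of Connecticut?")
print(prompt)  # <s> [INST] What is the capital of the U.S. state of Connecticut? [/INST]
```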

### Detailed Analysis

- **Prompt**: The question is wrapped in the instruction tokens `[INST]` and `[/INST]`, the prompt format used by instruction-tuned models such as Llama 2 Chat and Mistral Instruct.

- **Response**: "Hartford" is highlighted twice, marking it as the answer span targeted for extraction or evaluation.

- **Token Highlighting**:
  - The purple (`first_exact_answer_token`) and blue (`last_exact_answer_token`) boxes both point to "Hartford", delimiting the start and end of the exact answer within the response.
  - The red box (`</s>`) marks the end of the response sequence.

- **Spatial Grounding**:
  - The prompt is positioned at the top and the response below it.
  - Arrows connect each label to the token it names.
  - `-2 -1` sits at the bottom-left, separate from the main content.
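The first and last exact-answer tokens can be located by matching the answer string against the character spans covered by the response tokens. This is a sketch under stated assumptions: the token split below is hypothetical (a real tokenizer may segment "Hartford" differently or keep it whole), the response is truncated after its first sentence, the match is case-sensitive on the first occurrence, and `exact_answer_span` is an illustrative name.

```python
from typing import List, Tuple


def exact_answer_span(tokens: List[str], answer: str) -> Tuple[int, int]:
    """Return (first, last) indices of the tokens overlapping the first occurrence of `answer`."""
    # Record the [start, end) character span each token covers in the joined text.
    spans = []
    pos = 0
    for tok in tokens:
        spans.append((pos, pos + len(tok)))
        pos += len(tok)

    text = "".join(tokens)
    start = text.find(answer)
    if start == -1:
        raise ValueError(f"answer {answer!r} not found in response")
    end = start + len(answer)  # exclusive

    # A token belongs to the answer if its character span overlaps [start, end).
    overlapping = [i for i, (s, e) in enumerate(spans) if s < end and e > start]
    return overlapping[0], overlapping[-1]


# Hypothetical subword split of the response, truncated after the first sentence.
response = [" The", " capital", " city", " of", " the", " U", ".S.", " state",
            " of", " Connecticut", " is", " Hart", "ford", ".", "</s>"]

first, last = exact_answer_span(response, "Hartford")
print(first, last, repr(response[first]), repr(response[last]))  # 11 12 ' Hart' 'ford'
```

When the answer is a single token, `first` and `last` coincide, which matches the diagram's two labels pointing at the same word.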

### Key Observations

- "Hartford" is the only text marked by both `first_exact_answer_token` and `last_exact_answer_token`. If "Hartford" is a single token, the two labels point to the same position; if the tokenizer splits it into subwords, they mark its first and last pieces.

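- The meaning of `-2 -1` is not stated. Positional offsets from the end of the sequence are the more plausible reading: overlap metrics such as BLEU and ROUGE are never negative, so the values are unlikely to be scores.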

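- Apart from the `</s>` token, no other response tokens are highlighted, so the annotations cover only the answer span and the end of the sequence. The highlighting marks positions; on its own it says nothing about where the model's attention is focused.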
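If `-2 -1` are offsets counted back from the end of the sequence (an assumption; the diagram does not say), they resolve to absolute indices as sketched below. With a hypothetical token split truncated after the first sentence, -1 lands on `</s>` and -2 on the token just before it. Python's native negative indexing (`response[-1]`) gives the same token; the illustrative helper `resolve_offset` only makes the conversion explicit.

```python
def resolve_offset(seq_len: int, offset: int) -> int:
    """Convert a negative offset (-1 = last token) into an absolute index."""
    if not -seq_len <= offset < 0:
        raise ValueError(f"offset {offset} out of range for length {seq_len}")
    return seq_len + offset


# Hypothetical token split of the response, ending with the end-of-sequence token.
response = [" The", " capital", " city", " of", " the", " U", ".S.", " state",
            " of", " Connecticut", " is", " Hart", "ford", ".", "</s>"]

for offset in (-2, -1):
    idx = resolve_offset(len(response), offset)
    print(offset, idx, repr(response[idx]))
# -2 13 '.'
# -1 14 '</s>'
```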

### Interpretation

This diagram labels specific token positions in a question-answering exchange: the end of the question (`last_q_token`), the first and last tokens of the exact answer "Hartford" (`first_exact_answer_token`, `last_exact_answer_token`), and the end-of-sequence token `</s>`. The `-2 -1` markers may denote the last two positions of the sequence, but the diagram does not confirm this. Taken together, the labels suggest the figure defines the token positions at which the question and the model's answer are inspected; it does not show how those positions are used downstream.
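Collecting every labeled position into one index map over the concatenated prompt and response gives a compact summary of the figure. This is a sketch under assumptions: `last_q_token` is taken to be the final prompt token (`[/INST]` here), `-2` and `-1` are treated as offsets from the end of the full sequence, and the token split (with the response truncated after its first sentence) is hypothetical.

```python
# Hypothetical token split of the prompt and response; a real tokenizer will differ.
prompt_tokens = ["<s>", " [INST]", " What", " is", " the", " capital", " of", " the",
                 " U", ".S.", " state", " of", " Connecticut", "?", " [/INST]"]
response_tokens = [" The", " capital", " city", " of", " the", " U", ".S.", " state",
                   " of", " Connecticut", " is", " Hart", "ford", ".", "</s>"]
tokens = prompt_tokens + response_tokens
n_prompt = len(prompt_tokens)

# Assumption: last_q_token is the final prompt token; "Hartford" spans " Hart" + "ford".
positions = {
    "last_q_token": n_prompt - 1,
    "first_exact_answer_token": n_prompt + response_tokens.index(" Hart"),
    "last_exact_answer_token": n_prompt + response_tokens.index("ford"),
    "-2": len(tokens) - 2,  # second-to-last token of the full sequence
    "-1": len(tokens) - 1,  # last token of the full sequence (</s>)
}

for name, idx in positions.items():
    print(f"{name:>24}: index {idx:2d} -> {tokens[idx]!r}")
```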