## Diagram: Language Model Reasoning Process Examples

### Overview

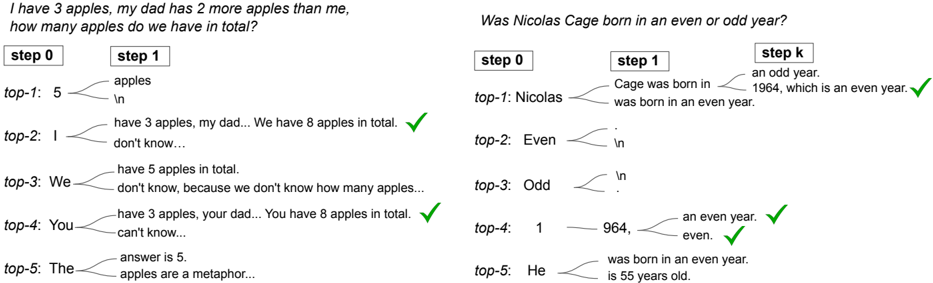

The image shows two side-by-side examples of a language model's step-by-step reasoning on different types of questions. Each panel places the question at the top, followed by a sequence of labeled steps that break down the model's generation: "step 0" and "step 1" in the left panel, and "step 0", "step 1", and "step k" in the right panel. Below each step, candidate reasoning paths (labeled "top-1" through "top-5") appear as branching text, and green checkmarks (✓) mark the paths that lead to a correct final answer.

### Components/Axes

* **Layout:** Two distinct panels, left and right.

* **Left Panel:**

    * **Question (Top):** "I have 3 apples, my dad has 2 more apples than me, how many apples do we have in total?"

    * **Steps:** "step 0" and "step 1".

    * **Candidate Paths:** "top-1" through "top-5", each with branching text and a final outcome.

    * **Checkmarks (✓):** Placed next to the final text of "top-2" and "top-4".

* **Right Panel:**

    * **Question (Top):** "Was Nicolas Cage born in an even or odd year?"

    * **Steps:** "step 0", "step 1", and "step k".

    * **Candidate Paths:** "top-1" through "top-5", each with branching text and a final outcome.

    * **Checkmarks (✓):** Placed next to the final text of "top-1" and "top-4".

### Detailed Analysis

**Left Panel - Apple Problem:**

* **Step 0:** The initial prompt or question is processed.

* **Step 1:** The model generates multiple potential reasoning continuations.

    * **top-1:** Branches to "apples" and "\n". No final answer or checkmark.

    * **top-2:** Branches to "have 3 apples, my dad... We have 8 apples in total." **(✓)**. This is a correct, concise reasoning path.

    * **top-3:** Branches to "have 5 apples in total." and "don't know, because we don't know how many apples...". Incorrect reasoning (5 is the dad's count, not the total).

    * **top-4:** Branches to "have 3 apples, your dad... You have 8 apples in total." **(✓)**. Another correct path, phrased differently.

    * **top-5:** Branches to "answer is 5." and "apples are a metaphor...". Incorrect and divergent reasoning.
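
The arithmetic behind the checkmarked paths can be verified directly. The following is a minimal sketch; the variable names (`my_apples`, `dad_apples`, `total_apples`) are illustrative and do not appear in the diagram. It shows why 8 is the total and why the 5 given by top-3 and top-5 is only the dad's count.

```python
# Quantities stated in the question.
my_apples = 3
dad_apples = my_apples + 2            # "2 more apples than me"
total_apples = my_apples + dad_apples  # both people's apples together

print(dad_apples)    # 5
print(total_apples)  # 8
```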

**Right Panel - Nicolas Cage Problem:**

* **Step 0:** The initial prompt is processed.

* **Step 1:** The model generates initial continuations.

    * **top-1:** Branches to "Cage was born in" and "was born in an even year."

    * **top-2:** Branches to "Even" and "\n".

    * **top-3:** Branches to "Odd" and "\n".

    * **top-4:** Branches to "1" and "964,".

    * **top-5:** Branches to "He" and "is 55 years old."

* **Step k (Final Step):** Shows the conclusion of the reasoning chains.

    * **top-1 (continued):** From "Cage was born in", it branches to "an odd year." and "1964, which is an even year." **(✓)**. This shows self-correction: it initially states "odd year" but then correctly identifies 1964 as even.

    * **top-4 (continued):** From "964,", it branches to "an even year." **(✓)** and "even." **(✓)**. This path correctly identifies the year and its parity.
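
The parity check that the checkmarked paths perform is a one-line computation. A minimal sketch follows; the helper name `parity` is illustrative, and `birth_year` is simply the year quoted in the continuations above.

```python
def parity(year: int) -> str:
    """Return "even" or "odd" for an integer year."""
    return "even" if year % 2 == 0 else "odd"


birth_year = 1964  # year named in the top-1 and top-4 continuations
print(parity(birth_year))  # even
```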

### Key Observations

1. **Multiple Reasoning Paths:** For each question, the model generates several distinct potential continuations ("top-N"), exploring different linguistic and logical pathways.

2. **Self-Correction Demonstrated:** In the right panel (top-1), the model's reasoning chain includes an initial incorrect statement ("an odd year.") which is later corrected within the same chain ("1964, which is an even year.").

3. **Correctness Verification:** Green checkmarks (✓) are used to annotate the final text segments that constitute a correct answer or a valid reasoning endpoint.

4. **Divergent Outputs:** Some paths (e.g., left panel top-5, right panel top-5) lead to irrelevant or incorrect conclusions, showing the model's exploration of less optimal continuations.

5. **Step-Based Structure:** The process is explicitly broken into discrete steps ("step 0", "step 1", "step k"), suggesting a structured, iterative reasoning or generation framework.

### Interpretation

This diagram appears to depict a "beam search"-like, multi-path generation process in a language model working through reasoning tasks. Rather than producing a single linear answer, the model is shown exploring several candidate lines of reasoning (the "top-N" candidates) from a given point.
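
The diagram does not state which decoding algorithm produces the "top-N" paths. One plausible reading, sketched below as an assumption, is that the model branches on the k most probable first tokens and then extends each branch greedily. `TOY_MODEL`, its tokens, and its probabilities are all invented for illustration, and the function names are hypothetical.

```python
from typing import Dict, List, Tuple

Prefix = Tuple[str, ...]

# Hypothetical next-token distributions, keyed by the tokens generated so far.
# Tokens and probabilities are made up; they are not read from the diagram.
TOY_MODEL: Dict[Prefix, Dict[str, float]] = {
    (): {"5": 0.5, "We": 0.3, "You": 0.2},
    ("5",): {"apples": 1.0},
    ("We",): {"have": 1.0},
    ("We", "have"): {"8": 0.6, "5": 0.4},
    ("You",): {"have": 1.0},
    ("You", "have"): {"8": 1.0},
}


def top_k_tokens(prefix: Prefix, k: int) -> List[str]:
    """Return up to k next tokens for the prefix, most probable first."""
    dist = TOY_MODEL.get(prefix, {})
    return sorted(dist, key=lambda tok: dist[tok], reverse=True)[:k]


def greedy_continue(prefix: Prefix) -> Prefix:
    """Append the single most probable token until the table has no entry."""
    while True:
        nxt = top_k_tokens(prefix, 1)
        if not nxt:
            return prefix
        prefix = prefix + (nxt[0],)


def branch_then_greedy(k: int) -> List[Prefix]:
    """Branch on the top-k first tokens, then decode each branch greedily."""
    return [greedy_continue((tok,)) for tok in top_k_tokens((), k)]


paths = branch_then_greedy(3)
for rank, path in enumerate(paths, start=1):
    print(f"top-{rank}:", " ".join(path))
```

In this toy table the most probable first token leads to a path without the correct total, while the lower-ranked branches reach "8", which mirrors the pattern of checkmarks in the left panel.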

The presence of both correct and incorrect paths, along with self-correction (as seen in the Nicolas Cage example), highlights the model's ability to generate, evaluate, and refine its own outputs. The checkmarks likely represent an external or internal evaluation step that identifies the valid solution paths among the candidates.

The key takeaway is the **exploratory, multi-path nature** of this kind of language model inference. The final, user-visible answer is likely selected from the candidates by a scoring mechanism (such as sequence probability or a reward model). This matters for problems that require multi-step deduction, factual recall, or arithmetic, because it lets the model "consider" different approaches before committing to a final response. The diagram serves as a technical illustration of the model's reasoning diversity and its capacity for self-verification.
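
The selection step suggested in the preceding paragraph could look like the following. This is a sketch under assumptions: the candidate texts are quoted from the left panel, but the scores are invented placeholders, `select_best` is a hypothetical helper, and the diagram does not say which scoring mechanism is actually used.

```python
from typing import List, NamedTuple


class Candidate(NamedTuple):
    text: str
    score: float  # placeholder confidence value; higher is better


def select_best(candidates: List[Candidate]) -> Candidate:
    """Return the candidate with the highest score."""
    if not candidates:
        raise ValueError("no candidates to select from")
    return max(candidates, key=lambda c: c.score)


# Scores are invented for illustration only.
candidates = [
    Candidate("We have 8 apples in total.", 0.9),
    Candidate("have 5 apples in total.", 0.4),
    Candidate("answer is 5.", 0.2),
]
best = select_best(candidates)
print(best.text)  # We have 8 apples in total.
```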