
## Diagram: Iterative Paper Citation Retrieval Process

### Overview

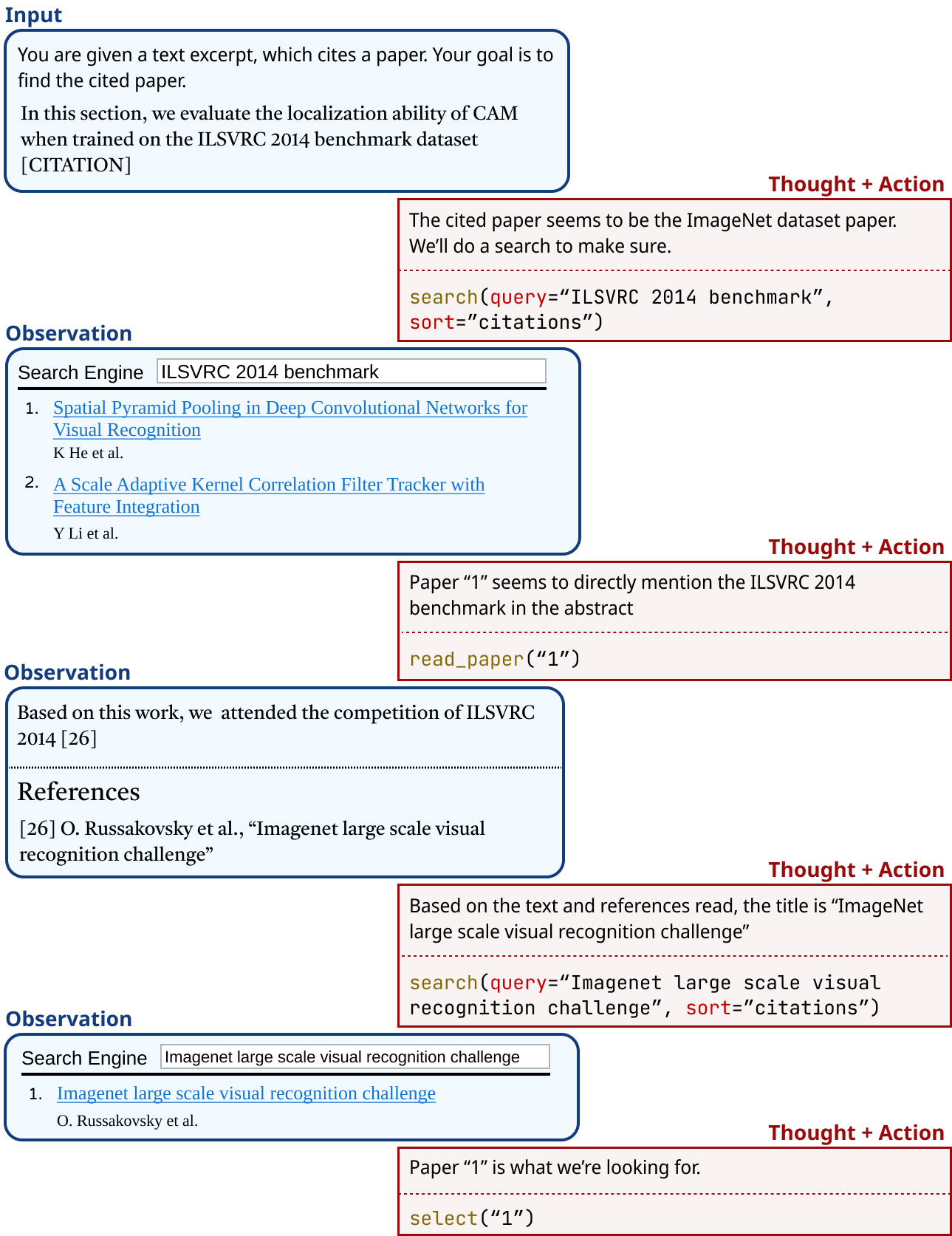

The image is a vertical flowchart illustrating a step-by-step process for identifying and retrieving a specific academic paper cited within a text excerpt. The process involves iterative cycles of reasoning ("Thought + Action") and information retrieval ("Observation"), ultimately leading to the selection of the target paper. The diagram uses a consistent visual language with color-coded boxes and directional arrows to denote the flow of operations.

### Components/Axes

The diagram is structured as a top-to-bottom sequence of interconnected blocks. There are three primary component types:

1. **Input Box (Blue Border):** Contains the initial problem statement.

2. **Thought + Action Box (Red Border):** Contains the system's reasoning and the specific action command it executes.

3. **Observation Box (Blue Border):** Displays the output or result returned from the executed action.

Arrows connect these boxes to show the sequential flow of the process.
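One way to make the three box types concrete is to model them as small record types. The sketch below is illustrative only: the class names (`Input`, `ThoughtAction`, `Observation`, `Trace`) are hypothetical and do not come from the diagram. It assumes, as the flowchart suggests, that "Thought + Action" and "Observation" boxes strictly alternate after the input.

```python
from dataclasses import dataclass, field
from typing import List, Union


@dataclass
class Input:
    """Blue-bordered box: the initial problem statement."""
    text: str


@dataclass
class ThoughtAction:
    """Red-bordered box: free-text reasoning plus one action command."""
    thought: str
    action: str


@dataclass
class Observation:
    """Blue-bordered box: whatever the executed action returned."""
    content: str


Step = Union[ThoughtAction, Observation]


@dataclass
class Trace:
    """An input followed by alternating ThoughtAction / Observation steps."""
    task: Input
    steps: List[Step] = field(default_factory=list)

    def add(self, step: Step) -> None:
        # Even positions hold a ThoughtAction, odd positions an Observation.
        expected = ThoughtAction if len(self.steps) % 2 == 0 else Observation
        if not isinstance(step, expected):
            raise TypeError(
                f"expected {expected.__name__}, got {type(step).__name__}"
            )
        self.steps.append(step)
```

Enforcing the alternation in `add` is a design choice for the sketch; a trace that ends on a `ThoughtAction` (as the diagram's final `select` step does) is still valid.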

### Detailed Analysis

The process unfolds as follows, with all text transcribed verbatim:

**1. Input Stage (Top-Left)**

* **Text:** "You are given a text excerpt, which cites a paper. Your goal is to find the cited paper. In this section, we evaluate the localization ability of CAM when trained on the ILSVRC 2014 benchmark dataset [CITATION]"

**2. First Thought + Action Cycle**

* **Thought:** "The cited paper seems to be the ImageNet dataset paper. We'll do a search to make sure."

* **Action Command:** `search(query="ILSVRC 2014 benchmark", sort="citations")`

**3. First Observation**

* **Header:** "Search Engine | ILSVRC 2014 benchmark"

* **Result 1:** "1. Spatial Pyramid Pooling in Deep Convolutional Networks for Visual Recognition" (Hyperlinked in blue)

    * Author: "K He et al."

* **Result 2:** "2. A Scale Adaptive Kernel Correlation Filter Tracker with Feature Integration" (Hyperlinked in blue)

    * Author: "Y Li et al."

**4. Second Thought + Action Cycle**

* **Thought:** "Paper "1" seems to directly mention the ILSVRC 2014 benchmark in the abstract"

* **Action Command:** `read_paper("1")`

**5. Second Observation**

* **Content Snippet:** "Based on this work, we attended the competition of ILSVRC 2014 [26]"

* **References Section:**

    * "[26] O. Russakovsky et al., “Imagenet large scale visual recognition challenge”"

**6. Third Thought + Action Cycle**

* **Thought:** "Based on the text and references read, the title is "ImageNet large scale visual recognition challenge""

* **Action Command:** `search(query="Imagenet large scale visual recognition challenge", sort="citations")`

**7. Third Observation**

* **Header:** "Search Engine | Imagenet large scale visual recognition challenge"

* **Result 1:** "1. Imagenet large scale visual recognition challenge" (Hyperlinked in blue)

    * Author: "O. Russakovsky et al."

**8. Final Thought + Action (Bottom-Right)**

* **Thought:** "Paper "1" is what we're looking for."

* **Action Command:** `select("1")`
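The eight steps above can be replayed as a small scripted session. This is a minimal sketch, not the system behind the diagram: only the command names and arguments (`search`, `read_paper`, `select`) come from the figure, while the `Session` class, the `PAPERS` list, and the placeholder abstracts are assumptions made so the example runs on its own. The toy index accepts `sort` but ignores it and keeps list order.

```python
import re
from typing import Dict, List

# Toy stand-in for the search backend. Titles, authors, the body snippet and
# reference [26] are taken from the diagram; the abstracts are placeholders.
PAPERS: List[Dict] = [
    {
        "title": "Spatial Pyramid Pooling in Deep Convolutional Networks "
                 "for Visual Recognition",
        "authors": "K He et al.",
        "abstract": "(placeholder) mentions the ILSVRC 2014 benchmark",
        "body": "Based on this work, we attended the competition of "
                "ILSVRC 2014 [26]",
        "references": {"26": "Imagenet large scale visual recognition challenge"},
    },
    {
        "title": "A Scale Adaptive Kernel Correlation Filter Tracker "
                 "with Feature Integration",
        "authors": "Y Li et al.",
        "abstract": "(placeholder) mentions the ILSVRC 2014 benchmark",
        "body": "",
        "references": {},
    },
    {
        "title": "Imagenet large scale visual recognition challenge",
        "authors": "O. Russakovsky et al.",
        "abstract": "(placeholder)",
        "body": "",
        "references": {},
    },
]


class Session:
    """Hypothetical tool interface; ranks refer to the latest search."""

    def __init__(self, papers: List[Dict]) -> None:
        self.papers = papers
        self.results: List[Dict] = []

    def search(self, query: str, sort: str = "citations") -> List[str]:
        # `sort` mirrors the diagram's command but is ignored by this toy index.
        q = query.lower()
        self.results = [
            p for p in self.papers
            if q in p["title"].lower() or q in p["abstract"].lower()
        ]
        return [f"{i}. {p['title']}" for i, p in enumerate(self.results, 1)]

    def read_paper(self, rank: str) -> Dict:
        return self.results[int(rank) - 1]

    def select(self, rank: str) -> str:
        return self.results[int(rank) - 1]["title"]


def run() -> str:
    s = Session(PAPERS)
    s.search(query="ILSVRC 2014 benchmark", sort="citations")  # steps 2-3
    paper = s.read_paper("1")                                  # steps 4-5
    # Follow the bracketed reference number next to the benchmark mention.
    ref_id = re.search(r"ILSVRC 2014 \[(\d+)\]", paper["body"]).group(1)
    title = paper["references"][ref_id]
    s.search(query=title, sort="citations")                    # steps 6-7
    return s.select("1")                                       # step 8
```

In the diagram the choice of each command is made by the system's reasoning; here the sequence is hard-coded purely to show how the three commands chain together.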

### Key Observations

* **Process Logic:** The workflow demonstrates a refinement strategy. An initial broad search ("ILSVRC 2014 benchmark") yields multiple results. The system then reads a promising paper, extracts a precise reference title from it, and performs a new, more targeted search to find the exact cited work.

* **Visual Coding:** The diagram uses color consistently: blue for data/input-output states and red for active reasoning/processing steps.

* **Spatial Flow:** The process flows from top to bottom. The "Thought + Action" boxes sit to the right of the main vertical flow, which suggests that they drive each step.

* **Data Extraction:** The system extracts two pieces of metadata from the reference list of the paper it reads: the title ("Imagenet large scale visual recognition challenge") and the first author ("O. Russakovsky et al.").
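The data extraction step can be illustrated with a small parser for a reference entry in the format shown in the second observation. This is a sketch under assumptions: the diagram does not say how the system reads the reference list, and the `Reference` type, the `parse_reference` function, and the regular expression are hypothetical. The pattern only handles entries shaped like `[N] authors, “title”` with straight or curly double quotes.

```python
import re
from typing import NamedTuple, Optional


class Reference(NamedTuple):
    number: int
    authors: str
    title: str


# [number] authors, "title" (accepts straight or curly double quotes).
_REF_PATTERN = re.compile(r'^\[(\d+)\]\s*(.+?),\s*[“"](.+?)[”"]')


def parse_reference(entry: str) -> Optional[Reference]:
    """Return the parsed entry, or None if it does not fit the assumed format."""
    match = _REF_PATTERN.match(entry.strip())
    if match is None:
        return None
    return Reference(int(match.group(1)), match.group(2), match.group(3))


ref = parse_reference(
    "[26] O. Russakovsky et al., "
    "“Imagenet large scale visual recognition challenge”"
)
```

For the entry transcribed from the diagram, `ref.title` is the string that the third action passes to `search`.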

### Interpretation

This diagram models an **investigative agent's workflow** for resolving academic citations. It demonstrates a Peircean abductive reasoning pattern: starting with an incomplete clue (a citation placeholder), forming a hypothesis (it's the ImageNet paper), testing it (searching and reading), gathering new evidence (finding a specific reference), and finally converging on the most plausible conclusion (selecting the exact paper).

The process highlights the importance of **contextual cross-referencing**. The initial search term was a benchmark name, but the definitive answer was found by reading a related paper and extracting its reference. This shows that citation retrieval often requires navigating a network of related documents rather than a single direct lookup. The final `select("1")` action completes the information-seeking task: the agent has moved from a vague citation marker to a specific, verifiable bibliographic entry.