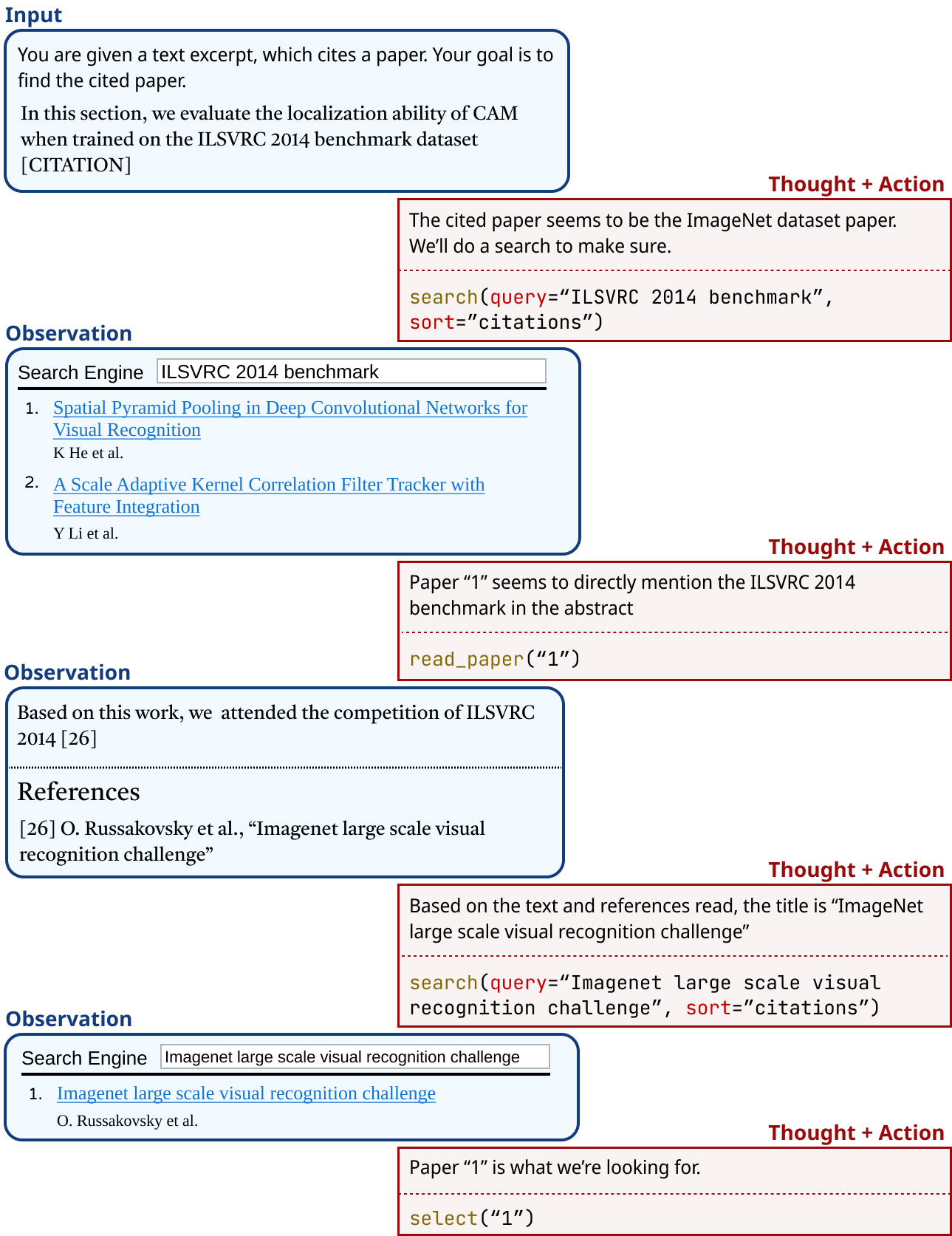

## Flowchart: Process for Locating Cited Papers in Technical Documents

### Overview

The image depicts a flowchart of an agent locating the paper cited in a text excerpt where the citation has been replaced by a `[CITATION]` placeholder. The agent alternates between "Thought + Action" steps (searching, reading a paper, selecting a result) and the observations those actions return, following a reference from one paper to the cited work.

### Components/Axes

1. **Input Section** (Blue Box):

   - Text: "You are given a text excerpt, which cites a paper. Your goal is to find the cited paper."

   - Subtext: "In this section, we evaluate the localization ability of CAM when trained on the ILSVRC 2014 benchmark dataset [CITATION]."

2. **Thought + Action Boxes** (Red Background):

   - First Box:

     - Text: "The cited paper seems to be the ImageNet dataset paper. We'll do a search to make sure."

     - Code: `search(query="ILSVRC 2014 benchmark", sort="citations")`

   - Second Box:

     - Text: "Paper '1' seems to directly mention the ILSVRC 2014 benchmark in the abstract."

     - Code: `read_paper("1")`

   - Third Box:

     - Text: "Based on the text and references read, the title is 'Imagenet large scale visual recognition challenge'."

     - Code: `search(query="Imagenet large scale visual recognition challenge", sort="citations")`

   - Fourth Box:

     - Text: "Paper '1' is what we're looking for."

     - Code: `select("1")`

3. **Observation Sections** (Blue Boxes):

   - First Observation:

     - Search Engine Query: "ILSVRC 2014 benchmark"

     - Results:

       1. "Spatial Pyramid Pooling in Deep Convolutional Networks for Visual Recognition" (K. He et al.)

       2. "A Scale Adaptive Kernel Correlation Filter Tracker with Feature Integration" (Y. Li et al.)

   - Second Observation:

     - Text: "Based on this work, we attended the competition of ILSVRC 2014 [26]."

     - References:

       - "[26] O. Russakovsky et al., 'Imagenet large scale visual recognition challenge'"

4. **Final Observation** (Blue Box):

   - Search Engine Query: "Imagenet large scale visual recognition challenge"

   - Result:

     - "Imagenet large scale visual recognition challenge" (O. Russakovsky et al.)
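The four actions listed above can be replayed against a toy corpus. This is a minimal sketch, not the system shown in the figure: the `Paper` and `Session` classes, the substring matching, and the `citations` values (placeholders chosen only to fix the ordering, not real counts) are assumptions. Only the tool names, the queries, and the quoted strings come from the figure.

```python
from dataclasses import dataclass, field


@dataclass
class Paper:
    title: str
    citations: int          # placeholder ordering value, NOT a real citation count
    text: str = ""          # snippet returned when the paper is read
    references: dict = field(default_factory=dict)


# Toy corpus built only from strings that appear in the figure.
CORPUS = [
    Paper(
        title="Spatial Pyramid Pooling in Deep Convolutional Networks for Visual Recognition",
        citations=1,
        text="Based on this work, we attended the competition of ILSVRC 2014 [26].",
        references={"26": "O. Russakovsky et al., 'Imagenet large scale visual recognition challenge'"},
    ),
    Paper(title="Imagenet large scale visual recognition challenge", citations=2),
]


class Session:
    """Hypothetical tool wrapper; remembers the last result list so ids like "1" resolve."""

    def __init__(self, corpus):
        self.corpus = corpus
        self.results = {}

    def search(self, query, sort="citations"):
        # A paper matches if any query token occurs in its title or snippet.
        tokens = query.lower().split()
        hits = [p for p in self.corpus
                if any(t in (p.title + " " + p.text).lower() for t in tokens)]
        if sort == "citations":
            hits.sort(key=lambda p: p.citations, reverse=True)
        # Result ids are 1-based and only valid until the next search.
        self.results = {str(i): p for i, p in enumerate(hits, start=1)}
        return {i: p.title for i, p in self.results.items()}

    def read_paper(self, paper_id):
        paper = self.results[paper_id]
        return paper.text, paper.references

    def select(self, paper_id):
        return self.results[paper_id].title


session = Session(CORPUS)
first = session.search(query="ILSVRC 2014 benchmark", sort="citations")
text, refs = session.read_paper("1")
second = session.search(query="Imagenet large scale visual recognition challenge", sort="citations")
answer = session.select("1")
print(answer)  # Imagenet large scale visual recognition challenge
```

Note that the id `"1"` means different papers in the two steps: result ids are scoped to the most recent search, so `read_paper("1")` reads the first hit of the first query, while `select("1")` picks the first hit of the second.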

### Detailed Analysis

- **Search Logic**: Both searches use citation-based sorting (`sort="citations"`), which lists highly cited papers first. This favors well-known works but does not by itself guarantee that the top result is the cited paper.

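- **Reference Validation**: After the first search, the agent reads the top result (`read_paper("1")`), finds the sentence that mentions ILSVRC 2014 with citation marker [26], and looks up entry [26] in that paper's reference list to obtain the exact title.

- **Paper Selection**: The final selection (`select("1")`) picks the top result of the second search, whose title matches the title recovered from reference [26]. The "direct mention in the abstract" reasoning belongs to the earlier `read_paper("1")` step, not to the selection.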
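To make the effect of `sort="citations"` concrete, the sketch below orders the same two hits by two different keys. It is an illustration under assumptions: the `rank` helper, the `match_score` field, the `"relevance"` option, and all numbers are hypothetical, and the figure itself shows only `sort="citations"`.

```python
def rank(hits, sort="relevance"):
    """Return hit titles ordered by the chosen key, highest first.

    `hits` is a list of (title, match_score, citations) tuples; both
    numeric fields are made-up values for illustration.
    """
    if sort == "citations":
        key = lambda hit: hit[2]   # order by citation count
    else:
        key = lambda hit: hit[1]   # order by how well the text matched
    return [hit[0] for hit in sorted(hits, key=key, reverse=True)]


hits = [
    ("paper A", 0.9, 10),    # strong textual match, few citations
    ("paper B", 0.4, 500),   # weak textual match, many citations
]

print(rank(hits, sort="relevance"))  # ['paper A', 'paper B']
print(rank(hits, sort="citations"))  # ['paper B', 'paper A']
```

Sorting by citations only reorders papers that already matched the query, which is why the trace still reads a result before trusting it.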

### Key Observations

1. **Citation-Driven Search**: Papers are prioritized by citation count to identify authoritative sources.

2. **Benchmark Alignment**: "ILSVRC 2014" is the key term throughout: it appears in the input excerpt, seeds the first query, and recurs in the sentence of the first result that carries the citation marker [26].

3. **Reference Chaining**: The reference list of the first paper read supplies the exact title of the cited work, which becomes the query for the second search.
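The reference-chaining step can be sketched as resolving a bracketed marker to its reference-list entry and pulling out the quoted title for the next query. The helper names `resolve_citations` and `extract_title` and the regular expressions are assumptions for illustration; the input strings are the ones quoted in the figure.

```python
import re


def resolve_citations(sentence, reference_list):
    """Map each numeric marker like [26] in `sentence` to its reference entry."""
    entries = {}
    for line in reference_list:
        match = re.match(r"\[(\d+)\]\s*(.+)", line)
        if match:
            entries[match.group(1)] = match.group(2)
    markers = re.findall(r"\[(\d+)\]", sentence)
    # Markers without a matching entry are skipped rather than guessed.
    return {m: entries[m] for m in markers if m in entries}


def extract_title(entry):
    """Return the single-quoted title inside a reference entry, or None."""
    match = re.search(r"'([^']+)'", entry)
    return match.group(1) if match else None


sentence = "Based on this work, we attended the competition of ILSVRC 2014 [26]."
references = ["[26] O. Russakovsky et al., 'Imagenet large scale visual recognition challenge'"]

resolved = resolve_citations(sentence, references)
next_query = extract_title(resolved["26"])
print(next_query)  # Imagenet large scale visual recognition challenge
```

Real reference lists vary in format (titles are not always quoted), so a production parser would need more than these two patterns.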

### Interpretation

This flowchart demonstrates a data-driven methodology for locating cited papers by combining:

- **Textual Analysis**: Identifying keywords such as "ILSVRC 2014" in the excerpt and forming an initial guess about the cited work (an ImageNet paper).

- **Search Engine Automation**: Using structured queries to retrieve and rank relevant papers.

- **Citation Metrics**: Sorting results by citation count so that widely cited papers appear first.

- **Reference Validation**: Confirming the guess by reading a retrieved paper and following its reference [26] to the cited title.

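The workflow reduces manual effort by chaining automated search with reference lookup. The final `select("1")` refers to the top result of the second search, "Imagenet large scale visual recognition challenge" by O. Russakovsky et al., not to the paper labeled "1" in the first search. This refines the initial guess of "the ImageNet dataset paper" into a specific title that is consistent with both the text excerpt and reference [26].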