
## Diagram: System for Enhancing LLM Reasoning with Chain-of-Thought Prompts and Extension Strategies

### Overview

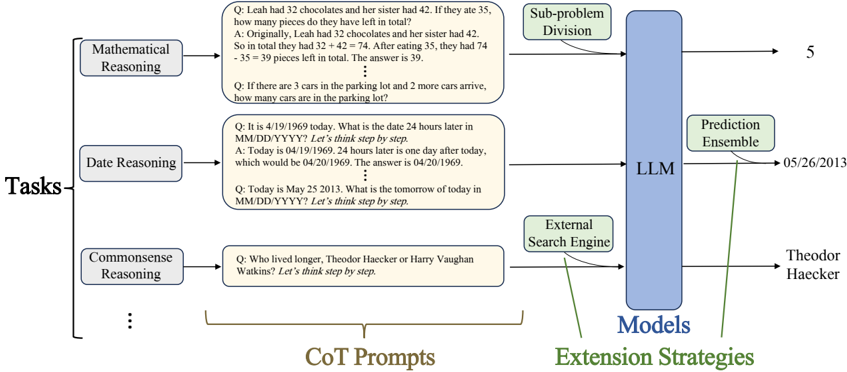

The image is a technical flowchart illustrating a system architecture designed to enhance the reasoning capabilities of a Large Language Model (LLM). It shows how different types of reasoning tasks are processed using Chain-of-Thought (CoT) prompts and then handled by an LLM augmented with specific "Extension Strategies." The diagram reads from left to right: task categories, then example prompts, then the model and its extension strategies.

### Components

The diagram is organized into three primary vertical sections, connected by arrows indicating data flow.

1. **Left Section: "Tasks"**

   * A main label "Tasks" is positioned on the far left.
   * Three task categories branch from this label:
     * **Mathematical Reasoning** (top box)
     * **Date Reasoning** (middle box)
     * **Commonsense Reasoning** (bottom box)
   * An ellipsis ("...") below the last box indicates additional, unspecified task types.

2. **Center Section: "CoT Prompts"**

   * A large bracket labeled "CoT Prompts" encompasses this section.
   * Each task category from the left points to a corresponding rounded rectangle containing an example question (Q) and a step-by-step answer (A) demonstrating Chain-of-Thought reasoning.
   * **Mathematical Reasoning Example:**
     * Q: "Leah had 32 chocolates and her sister had 42. If they ate 35, how many pieces do they have left in total?"
     * A: "Originally, Leah had 32 chocolates and her sister had 42. So in total they had 32 + 42 = 74. After eating 35, they had 74 - 35 = 39 pieces left in total. The answer is 39."
     * A second, unanswered question follows: "Q: If there are 3 cars in the parking lot and 2 more cars arrive, how many cars are in the parking lot?" Its answer (3 + 2 = 5) appears as the model output on the right.
   * **Date Reasoning Example:**
     * Q: "It is 4/19/1969 today. What is the date 24 hours later in MM/DD/YYYY? Let's think step by step."
     * A: "Today is 04/19/1969. 24 hours later is one day after today, which would be 04/20/1969. The answer is 04/20/1969."
     * A second, unanswered question follows: "Q: Today is May 25 2013. What is the tomorrow of today in MM/DD/YYYY? Let's think step by step." Its answer, 05/26/2013, appears as the model output on the right.
   * **Commonsense Reasoning Example:**
     * Q: "Who lived longer, Theodor Haecker or Harry Vaughan Watkins? Let's think step by step." The answer is not fully shown in the prompt box.

3. **Right Section: "Models" and "Extension Strategies"**

   * A large blue vertical rectangle is labeled **"LLM"** at its center and **"Models"** at its bottom.
   * Arrows from the CoT prompt boxes point into the LLM block.
   * Three **"Extension Strategies"** (labeled in green text at the bottom) are shown as green boxes with arrows feeding into the LLM:
     * **Sub-problem Division** (top): An arrow from this box points to the Mathematical Reasoning flow. The output shown with it is the number "5".
     * **Prediction Ensemble** (middle): An arrow from this box points to the Date Reasoning flow. The output shown with it is the date "05/26/2013".
     * **External Search Engine** (bottom): An arrow from this box points to the Commonsense Reasoning flow. The output shown with it is the name "Theodor Haecker".
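The two ways the center column elicits reasoning (a worked exemplar, optionally combined with the "Let's think step by step" trigger) can be sketched as plain string assembly. This is a minimal illustration, not the authors' code: the helper name `build_cot_prompt` and the `Q:`/`A:` layout with a blank line between blocks are assumptions; the question and answer strings are copied from the diagram.

```python
# Hypothetical helper; the Q/A strings are copied from the diagram's prompt boxes.
MATH_EXEMPLAR = (
    "Leah had 32 chocolates and her sister had 42. If they ate 35, "
    "how many pieces do they have left in total?",
    "Originally, Leah had 32 chocolates and her sister had 42. So in total "
    "they had 32 + 42 = 74. After eating 35, they had 74 - 35 = 39 pieces "
    "left in total. The answer is 39.",
)
DATE_EXEMPLAR = (
    "It is 4/19/1969 today. What is the date 24 hours later in MM/DD/YYYY?",
    "Today is 04/19/1969. 24 hours later is one day after today, "
    "which would be 04/20/1969. The answer is 04/20/1969.",
)
TRIGGER = "Let's think step by step."


def build_cot_prompt(exemplars, question, trigger=None):
    """Worked Q/A exemplars first, then the new question with an open 'A:'."""
    suffix = f" {trigger}" if trigger else ""
    blocks = [f"Q: {q}{suffix}\nA: {a}" for q, a in exemplars]
    blocks.append(f"Q: {question}{suffix}\nA:")
    return "\n\n".join(blocks)


# Mathematical prompt: exemplar only, no trigger phrase.
math_prompt = build_cot_prompt(
    [MATH_EXEMPLAR],
    "If there are 3 cars in the parking lot and 2 more cars arrive, "
    "how many cars are in the parking lot?",
)
# Date prompt: exemplar plus the trigger phrase on every question.
date_prompt = build_cot_prompt(
    [DATE_EXEMPLAR],
    "Today is May 25 2013. What is the tomorrow of today in MM/DD/YYYY?",
    trigger=TRIGGER,
)
print(math_prompt, date_prompt, sep="\n\n---\n\n")
```

The open `A:` at the end leaves the LLM to continue with its own step-by-step answer in the format the exemplar demonstrates.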

### Detailed Analysis

The diagram explicitly maps task types to specific reasoning strategies and outputs.

* **Mathematical Reasoning Flow:**
  * **Input:** A worked multi-step arithmetic exemplar (the chocolate problem) followed by a new word problem (the parking-lot question).
  * **Process:** The problem is processed via a CoT prompt. The "Sub-problem Division" extension strategy is applied.
  * **Output:** "5", the answer to the parking-lot question (3 + 2 = 5). The exemplar's own answer, 39, is part of the prompt rather than the output.
* **Date Reasoning Flow:**
  * **Input:** A worked date exemplar followed by a new date question (May 25, 2013).
  * **Process:** The problem is processed via a CoT prompt. The "Prediction Ensemble" extension strategy is applied.
  * **Output:** "05/26/2013", the day after May 25, 2013.
* **Commonsense Reasoning Flow:**
  * **Input:** A factual comparison question ("Who lived longer...").
  * **Process:** The problem is processed via a CoT prompt. The "External Search Engine" extension strategy is applied.
  * **Output:** The answer shown is "Theodor Haecker".
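The arithmetic and date steps in the exemplars, and the two outputs that answer the test questions, can be checked directly. This snippet is only a sanity check on the diagram's numbers using Python's standard `datetime` module; it is not part of the depicted system.

```python
from datetime import date, datetime, timedelta

# Mathematical exemplar: the two steps in the worked answer.
total = 32 + 42
left = total - 35

# Date exemplar: "24 hours later" counted from midnight on 4/19/1969.
start = datetime.strptime("4/19/1969", "%m/%d/%Y")
later = (start + timedelta(hours=24)).strftime("%m/%d/%Y")

# Test questions whose answers appear as model outputs.
cars = 3 + 2
tomorrow = (date(2013, 5, 25) + timedelta(days=1)).strftime("%m/%d/%Y")

print(total, left, later, cars, tomorrow)  # 74 39 04/20/1969 5 05/26/2013
```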

### Key Observations

1. **Modular Design:** The system treats different reasoning domains (math, date, commonsense) as separate modules with tailored prompts and, crucially, different extension strategies.

2. **Strategy-Task Alignment:** Each extension strategy is visually linked to a specific task type, suggesting a designed correspondence:

   * Sub-problem Division → Mathematical Reasoning
   * Prediction Ensemble → Date Reasoning
   * External Search Engine → Commonsense Reasoning

3. **Prompt Engineering:** Every prompt elicits intermediate reasoning steps, but not in the same way. The date and commonsense prompts include the phrase "Let's think step by step," while the mathematical prompt relies on a worked exemplar alone.

4. **Output Specificity:** The final outputs are precise and formatted appropriately for the task (a number, a date in MM/DD/YYYY format, a proper name).
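Producing task-appropriate outputs from free-form reasoning text requires pulling out the final answer and checking its shape. A minimal sketch, assuming the model ends its chain with "The answer is X." as the exemplars do; the regex, `extract_answer`, and the `VALIDATORS` table are hypothetical and do not come from the diagram.

```python
import re
from datetime import datetime

# Assumed convention, taken from the exemplars: the chain ends with "The answer is X."
ANSWER_RE = re.compile(r"The answer is\s+(.+?)\.?\s*$")


def extract_answer(chain):
    """Return the text after 'The answer is' on the chain's last line, or None."""
    match = ANSWER_RE.search(chain.strip())
    return match.group(1) if match else None


def is_number(text):
    try:
        float(text)
        return True
    except ValueError:
        return False


def is_mmddyyyy(text):
    """Require zero-padded MM/DD/YYYY that is also a real calendar date."""
    if not re.fullmatch(r"\d{2}/\d{2}/\d{4}", text):
        return False
    try:
        datetime.strptime(text, "%m/%d/%Y")
        return True
    except ValueError:
        return False


# Hypothetical per-task format checks mirroring the three output types.
VALIDATORS = {
    "mathematical": is_number,
    "date": is_mmddyyyy,
    "commonsense": lambda text: bool(text.strip()) and not is_number(text),
}

answer = extract_answer(
    "Today is 04/19/1969. 24 hours later is one day after today, "
    "which would be 04/20/1969. The answer is 04/20/1969."
)
print(answer, VALIDATORS["date"](answer))  # 04/20/1969 True
```

A failed check could trigger a retry or fall back to another strategy; that policy is a design choice the diagram does not specify.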

### Interpretation

This diagram presents an approach to compensating for the limitations of a base LLM on multi-step reasoning tasks. Rather than relying on the LLM alone, it proposes a hybrid system in which:

1. **Structured Prompts (CoT)** guide the LLM to break down problems logically, making its reasoning process more transparent and accurate.

2. **Specialized Extension Strategies** supply capabilities or procedures that the LLM lacks on its own:

   * **Sub-problem Division** formalizes the decomposition of complex math problems into smaller steps.
   * **Prediction Ensemble** likely involves generating multiple date predictions and selecting the most consistent one, reducing errors in temporal reasoning.
   * **External Search Engine** addresses the LLM's static knowledge cutoff by letting it retrieve current or precise factual information, such as the lifespans needed to answer the "Who lived longer" question.
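If Prediction Ensemble works as guessed above (several sampled reasoning chains, then the most common final answer), its core is a majority vote, in the style of self-consistency decoding. The following sketches that guess only: the sampled chains are hard-coded stand-ins for real LLM samples, and `final_answer` and `ensemble_vote` are hypothetical names.

```python
import re
from collections import Counter

ANSWER_RE = re.compile(r"The answer is\s+(.+?)\.?\s*$")


def final_answer(chain):
    """Text after 'The answer is' on the chain's last line, or None."""
    match = ANSWER_RE.search(chain.strip())
    return match.group(1) if match else None


def ensemble_vote(chains):
    """Majority vote over the final answers of several reasoning chains."""
    answers = [a for a in map(final_answer, chains) if a is not None]
    if not answers:
        return None
    # most_common orders equal counts by first occurrence.
    return Counter(answers).most_common(1)[0][0]


# Stand-ins for chains sampled from the LLM at a nonzero temperature.
samples = [
    "Today is 05/25/2013. Tomorrow is one day later. The answer is 05/26/2013.",
    "May has 31 days, so the next day is 05/26/2013. The answer is 05/26/2013.",
    "Tomorrow would be 05/24/2013. The answer is 05/24/2013.",
    "I am not sure what day it is.",
]
print(ensemble_vote(samples))  # 05/26/2013
```

Chains that never reach a final answer are dropped before voting, so one malformed sample cannot win.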

The core insight is that **different types of reasoning require different kinds of support.** The architecture is not one-size-fits-all; it's a toolkit where the prompt and the extension strategy are selected based on the nature of the task. This moves beyond simple "prompt engineering" towards a more robust "reasoning system engineering" paradigm, where the LLM serves as a central processor augmented by specialized tools. The ellipsis under "Tasks" implies this framework is extensible to other reasoning domains (e.g., spatial, causal, analogical) by pairing them with appropriate new extension strategies.
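The "toolkit" reading can be made concrete as a routing table from task type to prompt style and extension strategy. This is a hypothetical sketch: the strategy pairings are the ones drawn in the diagram, the prompt-style labels follow its example prompts, and `Route`, `ROUTES`, `register`, `route_for`, and the `causal` entry are illustrative assumptions, not part of the source.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    prompt_style: str  # how the CoT prompt elicits reasoning
    strategy: str      # extension strategy paired with the task


# Pairings as drawn in the diagram; prompt-style labels follow its example prompts.
ROUTES = {
    "mathematical": Route("worked exemplar", "Sub-problem Division"),
    "date": Route("worked exemplar + step-by-step trigger", "Prediction Ensemble"),
    "commonsense": Route("step-by-step trigger", "External Search Engine"),
}


def register(task, route):
    """Add a new task type (the diagram's '...') without touching existing ones."""
    if task in ROUTES:
        raise ValueError(f"task {task!r} is already registered")
    ROUTES[task] = route


def route_for(task):
    try:
        return ROUTES[task]
    except KeyError:
        raise ValueError(f"no route registered for task {task!r}") from None


# Hypothetical extension: the diagram names no strategy for causal reasoning.
register("causal", Route("step-by-step trigger", "External Search Engine"))
print(route_for("date").strategy)  # Prediction Ensemble
```

Keeping the table as data means a new reasoning domain is one `register` call rather than a change to the dispatch logic.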