## Diagram: LLM Extension Strategies with CoT Prompts

### Overview

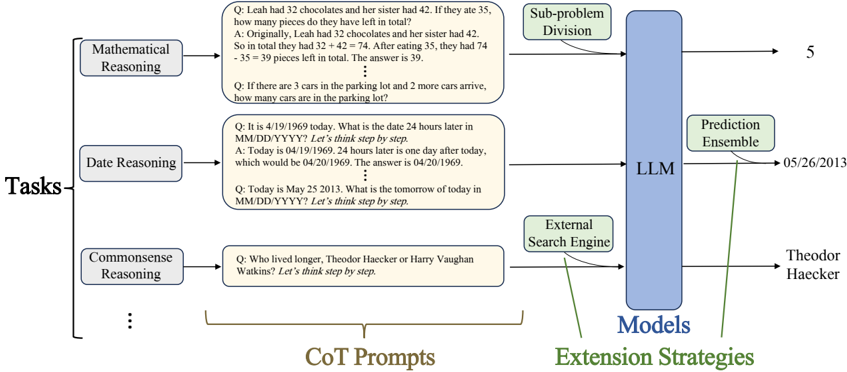

This diagram shows how a Large Language Model (LLM) driven by Chain-of-Thought (CoT) prompts handles three kinds of reasoning tasks, and how it can be augmented with sub-problem division, an external search engine, and a prediction ensemble. Tasks enter on the left, pass through the LLM and its extensions, and example outputs appear alongside each component.

### Components

The diagram is structured into three main sections: "Tasks", "Models", and "Extension Strategies".

* **Tasks:** This section lists three types of reasoning tasks: Mathematical Reasoning, Date Reasoning, and Commonsense Reasoning.

* **Models:** This section depicts the LLM as a central blue rectangle, with extensions for Sub-problem Division and an External Search Engine.

* **Extension Strategies:** This section contains the "Prediction Ensemble" block together with an example output.

A "CoT Prompts" label sits at the bottom of the diagram, and arrows indicate the flow of information between the sections.
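The "CoT Prompts" input can be pictured as a few worked exemplars followed by a new question. The following is a minimal sketch, assuming a plain `Q:`/`A:` text layout; the `Exemplar` class and the `build_cot_prompt` function are hypothetical names used for illustration, and the diagram does not specify any particular prompt format.

```python
from dataclasses import dataclass

# Trigger phrase that appears in two of the diagram's example questions.
COT_TRIGGER = "Let's think step by step."


@dataclass
class Exemplar:
    """One worked example: a question plus its step-by-step answer."""
    question: str
    rationale: str


def build_cot_prompt(exemplars, question, add_trigger=True):
    """Join worked exemplars, then append the new question with an open 'A:'."""
    parts = [f"Q: {ex.question}\nA: {ex.rationale}" for ex in exemplars]
    target = f"{question} {COT_TRIGGER}" if add_trigger else question
    parts.append(f"Q: {target}\nA:")
    return "\n\n".join(parts)


# Exemplar text taken from the diagram's Mathematical Reasoning example.
math_exemplar = Exemplar(
    question=(
        "Leah had 32 chocolates and her sister had 42. "
        "If they ate 35, how many pieces do they have left in total?"
    ),
    rationale=(
        "Originally, Leah had 32 chocolates and her sister had 42. "
        "So in total they had 32 + 42 = 74. After eating 35, they had "
        "74 - 35 = 39 pieces left in total. The answer is 39."
    ),
)

prompt = build_cot_prompt(
    [math_exemplar],
    "It is 4/19/1969 today. What is the date 24 hours later in MM/DD/YYYY?",
)
print(prompt)
```

The prompt ends with an open `A:` so that the model continues with its own reasoning steps; sending the prompt to a model is left out because the diagram names no specific model or API.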

### Detailed Analysis

**Tasks Section (Left Side):**

* **Mathematical Reasoning:**

    * Q: "Leah had 32 chocolates and her sister had 42. If they ate 35, how many pieces do they have left in total?"

    * A: "Originally, Leah had 32 chocolates and her sister had 42. So in total they had 32 + 42 = 74. After eating 35, they had 74 - 35 = 39 pieces left in total. The answer is 39."

* **Date Reasoning:**

    * Q: "It is 4/19/1969 today. What is the date 24 hours later in MM/DD/YYYY? Let's think step by step."

    * A: "Today is 04/19/1969. 24 hours later is one day after today, which would be 04/20/1969. The answer is 04/20/1969."

* **Commonsense Reasoning:**

    * Q: "Who lived longer, Theodor Haecker or Harry Vaughan Watkins? Let's think step by step."
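The two worked answers above can be checked mechanically. The sketch below recomputes the arithmetic and the date, and compares them with the final answers pulled out of the rationales. It assumes that a rationale ends with the phrase "The answer is ...", as both examples do; `extract_answer` is a hypothetical helper, not part of the diagram.

```python
import re
from datetime import datetime, timedelta

# Assumption: the final answer follows the phrase "The answer is".
ANSWER_RE = re.compile(r"The answer is\s+(.+?)\.?\s*$")


def extract_answer(rationale):
    """Return the text after 'The answer is', or None if the phrase is absent."""
    match = ANSWER_RE.search(rationale.strip())
    return match.group(1) if match else None


math_rationale = (
    "Originally, Leah had 32 chocolates and her sister had 42. "
    "So in total they had 32 + 42 = 74. After eating 35, they had "
    "74 - 35 = 39 pieces left in total. The answer is 39."
)
date_rationale = (
    "Today is 04/19/1969. 24 hours later is one day after today, "
    "which would be 04/20/1969. The answer is 04/20/1969."
)

# Mathematical Reasoning: recompute both steps of the rationale.
total = 32 + 42
left = total - 35

# Date Reasoning: add 24 hours and format the result as MM/DD/YYYY.
today = datetime.strptime("4/19/1969", "%m/%d/%Y")
tomorrow = (today + timedelta(hours=24)).strftime("%m/%d/%Y")

print(extract_answer(math_rationale) == str(left))   # True
print(extract_answer(date_rationale) == tomorrow)    # True
```

Both comparisons print `True`, which confirms that the example rationales are internally consistent.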

**Models Section (Center):**

* **LLM:** A large blue rectangle labeled "LLM".

* **Sub-problem Division:** A smaller rectangle connected to the LLM, labeled "Sub-problem Division". An arrow points from this to a value of "5".

* **External Search Engine:** A smaller rectangle connected to the LLM, labeled "External Search Engine". An arrow points from this to "Theodor Haecker".
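One way to read the two extension blocks together is that a comparison question is split into one sub-question per person, and each sub-question is answered by a lookup. The sketch below is only an illustration of that idea under strong assumptions: a regular expression stands in for the LLM's decomposition step, `lookup_age` stands in for the external search engine, and the names and ages in the usage example are placeholders, not real data.

```python
import re

# Assumption: only questions of the form "Who lived longer, A or B?" are handled.
PATTERN = re.compile(r"Who lived longer, (.+?) or (.+?)\?")


def divide(question):
    """Split a 'who lived longer' question into one sub-question per person."""
    match = PATTERN.search(question)
    if match is None:
        raise ValueError("unsupported question shape")
    return [(name, f"How old was {name} when they died?") for name in match.groups()]


def who_lived_longer(question, lookup_age):
    """Answer each sub-question via lookup_age, then compare the ages.

    lookup_age is a stand-in for an external search engine: it takes a
    sub-question string and returns an age in years.
    """
    ages = {name: lookup_age(sub_q) for name, sub_q in divide(question)}
    # On a tie, max() returns the first name mentioned in the question.
    return max(ages, key=ages.get), ages


# Placeholder knowledge base; these people and ages are made up.
fake_index = {
    "How old was Person A when they died?": 70,
    "How old was Person B when they died?": 65,
}

winner, ages = who_lived_longer(
    "Who lived longer, Person A or Person B?", fake_index.__getitem__
)
print(winner, ages)
```

Passing the lookup as a function keeps the comparison logic independent of where the facts come from, which mirrors the diagram's separation between the LLM and the search engine.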

**Extension Strategies Section (Right Side):**

* **Prediction Ensemble:** A rectangle labeled "Prediction Ensemble" with a date "05/26/2013".
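The diagram does not say how the ensemble combines predictions. A common choice is a majority vote over several independently sampled answers, and the sketch below assumes that approach. `ensemble_vote` is a hypothetical function, and the list of sampled answers is placeholder data built around the date shown in the diagram.

```python
from collections import Counter


def ensemble_vote(predictions):
    """Return the most frequent prediction and its share of the votes."""
    if not predictions:
        raise ValueError("need at least one prediction")
    counts = Counter(predictions)
    answer, votes = counts.most_common(1)[0]
    return answer, votes / len(predictions)


# Placeholder: final answers from three hypothetical reasoning paths.
sampled_answers = ["05/26/2013", "05/26/2013", "05/27/2013"]

answer, share = ensemble_vote(sampled_answers)
print(answer, round(share, 2))
```

The vote share gives a rough agreement signal: a low share means the sampled reasoning paths disagree, which may be a reason to treat the answer with caution.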

**Labels:**

* "Tasks" (left side)

* "Models" (center)

* "Extension Strategies" (right side)

* "CoT Prompts" (bottom)

### Key Observations

* The diagram demonstrates a workflow for enhancing LLM capabilities through CoT prompting and external tools.

* The LLM is positioned as the core component, with sub-problem division and external search engines acting as extensions.

* The examples include worked CoT answers for the mathematical and date questions; the commonsense question is shown without an answer.

* The values attached to the extensions ("5" and "Theodor Haecker") do not match the final answers of the worked examples (39 and 04/20/1969), so they appear to be illustrative intermediate outputs rather than final predictions.

### Interpretation

The diagram presents a modular view of LLM reasoning: a core LLM, driven by CoT prompts, is augmented with auxiliary strategies for specific kinds of tasks. CoT prompts encourage the model to write out intermediate steps before the final answer, as in the mathematical example (32 + 42 = 74, then 74 - 35 = 39). Sub-problem division splits a question into smaller questions that are answered in turn, and an external search engine lets the model retrieve facts it may not hold reliably, such as the lifespans needed for the commonsense question. "Prediction Ensemble" suggests combining several predictions, for example from multiple reasoning paths, into one final answer.

The values "5" and "Theodor Haecker" are not the final answers to the questions shown: the mathematical answer is 39, and the commonsense question has no answer in the diagram. They are more plausibly intermediate or illustrative outputs, for instance a sub-problem result and a search query or result. Likewise, "05/26/2013" does not match the date example (04/20/1969); given its MM/DD/YYYY format, it is most likely an illustrative date-reasoning output rather than metadata about an experiment or dataset.