## Diagram: LLM Task Processing Architecture

### Overview

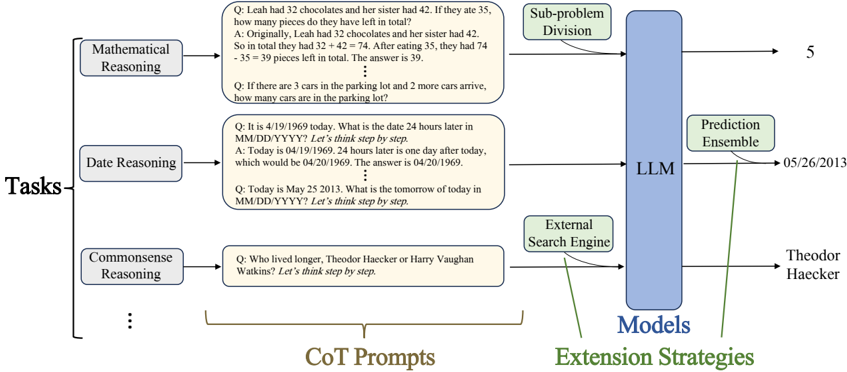

The diagram illustrates a technical architecture for processing reasoning tasks using a Large Language Model (LLM) with extension strategies. It shows a flow from task categorization through model processing to prediction enhancement.

### Components/Axes

1. **Left Panel (Tasks)**:

- **Mathematical Reasoning**: Example question about chocolate quantities (32 + 42 - 35 = 39)

- **Date Reasoning**: Example question about date calculation (1969-04-19 + 24h = 1969-04-20)

- **Commonsense Reasoning**: Example question comparing lifespans of Theodor Haecker and Harry Vaughan Watkins

- Visual structure: Three labeled boxes with example questions in beige text boxes

2. **Center Panel (CoT Prompts)**:

- Contains example prompts with "Let's think step by step" instructions

- Connects Tasks to LLM via arrows

3. **Right Panel (Models)**:

- Central blue rectangle labeled "LLM"

- Arrows from CoT Prompts and Tasks pointing to LLM

- Arrows from LLM to Extension Strategies

4. **Bottom Panel (Extension Strategies)**:

- **Prediction Ensemble**: Connected to LLM with arrow

- **External Search Engine**: Connected to LLM with arrow

- Example answer: "Theodor Haecker" (from Commonsense Reasoning task)
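
The two numeric examples in the left panel can be checked directly. The snippet below is a minimal sketch that recomputes them with the Python standard library; the variable names are illustrative and do not appear in the diagram.

```python
from datetime import date, timedelta

# Mathematical reasoning example from the diagram: 32 + 42 - 35
chocolates_left = 32 + 42 - 35

# Date reasoning example from the diagram: 1969-04-19 plus 24 hours
# (timedelta(hours=24) normalizes to exactly one day)
next_day = date(1969, 4, 19) + timedelta(hours=24)

print(chocolates_left)       # 39
print(next_day.isoformat())  # 1969-04-20
```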

### Detailed Analysis

- **Flow Direction**:

Tasks → CoT Prompts → LLM → Extension Strategies

- **Key Connections**:

- Mathematical/Date/Commonsense tasks feed into CoT prompts

- CoT prompts and raw tasks both connect to LLM

- LLM outputs connect to both Prediction Ensemble and External Search Engine

- **Textual Elements**:

- Each example prompt includes the trigger phrase "Let's think step by step"

- Final answer format: "The answer is [value]"

- Final answer example: "Theodor Haecker" (from Commonsense task)
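
The prompt and answer conventions described above can be sketched as two small helpers. This is an illustration only: the `Q:`/`A:` layout, the function names, and the regular expression are assumptions, since the diagram shows only the trigger phrase and the "The answer is [value]" format.

```python
import re
from typing import Optional

# Trigger phrase shown in the CoT Prompts panel.
COT_TRIGGER = "Let's think step by step."

# Assumed pattern for the "The answer is [value]" format; an optional
# trailing period is dropped from the captured value.
ANSWER_PATTERN = re.compile(
    r"The answer is\s+(.+?)\.?\s*$", re.IGNORECASE | re.MULTILINE
)


def build_cot_prompt(question: str) -> str:
    """Wrap a task question in a (hypothetical) Q/A layout with the CoT trigger."""
    return f"Q: {question}\nA: {COT_TRIGGER}"


def extract_answer(completion: str) -> Optional[str]:
    """Return the last 'The answer is ...' value in a completion, if any."""
    matches = ANSWER_PATTERN.findall(completion)
    return matches[-1].strip() if matches else None


completion = "32 + 42 = 74. 74 - 35 = 39. The answer is 39."
print(extract_answer(completion))
```

Taking the last match rather than the first is a design choice: a step-by-step completion may restate intermediate results before committing to its final answer.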

### Key Observations

1. The architecture emphasizes multi-step reasoning through Chain-of-Thought (CoT) prompting

2. External search capabilities are integrated at the final prediction stage

3. Mathematical and date reasoning tasks show explicit numerical examples

4. Commonsense reasoning uses a comparison of two historical figures as its test case

### Interpretation

This architecture demonstrates a hybrid approach to LLM reasoning:

1. **Task Categorization**: Problems are first classified into mathematical, temporal, or commonsense domains

2. **Structured Reasoning**: CoT prompts enforce step-by-step logical decomposition

3. **Model Processing**: The LLM acts as the core reasoning engine

4. **Prediction Enhancement**: Final answers are refined through ensemble methods and external verification

Combining CoT prompting with the two extension strategies (prediction ensemble and external search engine) suggests an emphasis on verifiable reasoning across multiple task domains. The specific example answers (39 chocolates, the date 1969-04-20, Theodor Haecker) show the system handling different reasoning domains while keeping a consistent output format.
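
The four stages listed in this section can be sketched end to end. The diagram does not say how the Prediction Ensemble combines outputs, so majority voting over several sampled completions is an assumption here. `run_pipeline`, `majority_vote`, and `fake_llm` are hypothetical names, and `fake_llm` is a canned stand-in rather than a real model call.

```python
from collections import Counter
from typing import Callable, List


def majority_vote(answers: List[str]) -> str:
    """Return the most frequent answer (assumed ensemble rule, not from the diagram)."""
    if not answers:
        raise ValueError("no answers to vote on")
    return Counter(answers).most_common(1)[0][0]


def run_pipeline(question: str,
                 sample_llm: Callable[[str, int], str],
                 n_samples: int = 5) -> str:
    """Task -> CoT prompt -> LLM samples -> ensembled prediction."""
    prompt = f"Q: {question}\nA: Let's think step by step."
    marker = "The answer is"
    answers = []
    for i in range(n_samples):
        completion = sample_llm(prompt, i)
        if marker in completion:
            # Keep the text after the last marker, without the trailing period.
            value = completion.rsplit(marker, 1)[1].strip().rstrip(".")
            answers.append(value)
    return majority_vote(answers)


def fake_llm(prompt: str, sample_index: int) -> str:
    """Canned completions standing in for a real LLM; one sample is wrong."""
    if sample_index == 2:
        return "32 + 42 = 74. 74 - 33 = 41. The answer is 41."
    return "32 + 42 = 74. 74 - 35 = 39. The answer is 39."


print(run_pipeline("How many chocolates are left?", fake_llm))
```

With four of the five canned samples agreeing, the vote returns `39` and the one incorrect sample is discarded. An external search engine step would slot in before or after the vote, but the diagram gives too little detail to sketch it.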