## Diagram: BAGEL Model Architecture with MLP Head

### Overview

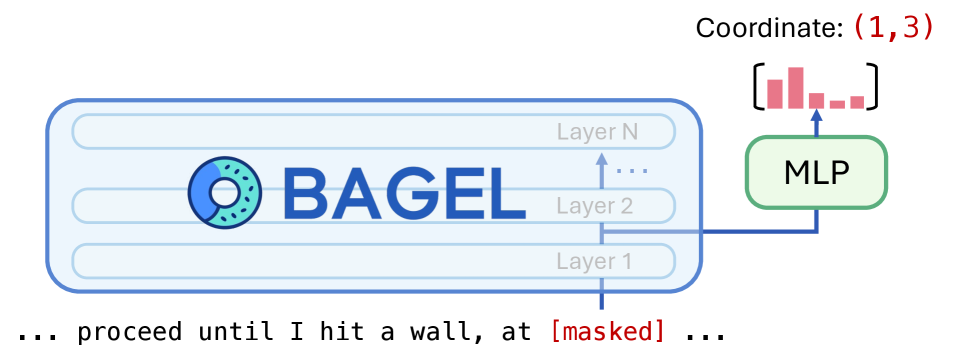

The image is a technical diagram of a neural network architecture named "BAGEL." It shows a multi-layer core model connected to a Multi-Layer Perceptron (MLP) head, which outputs a coordinate and a small bar chart. A text sequence with a masked token appears below, suggesting a language or sequence modeling task.

### Components/Axes

The diagram consists of three primary visual components: the core model on the left, the MLP head on the right, and a line of text along the bottom.

1. **BAGEL Core Model (Left/Center):**
   * A large, light-blue rounded rectangle containing the model name and its internal structure.
   * **Logo/Text:** A blue circular logo with a pattern of dots, followed by the text "**BAGEL**" in large, bold, blue sans-serif font.
   * **Layer Stack:** Inside the rectangle, three horizontal bars represent model layers, labeled from bottom to top:
     * `Layer 1`
     * `Layer 2`
     * `Layer N` (with an ellipsis `...` between Layer 2 and Layer N, indicating a variable number of intermediate layers).
   * A vertical arrow points upward from `Layer 1` through `Layer 2` to `Layer N`, indicating the flow of information through the stack.

2. **MLP Head & Output (Right):**
   * A green rounded rectangle labeled "**MLP**" in black text.
   * A blue arrow originates from the `Layer 2` bar within the BAGEL block and points to the MLP block, indicating data flow from that specific layer.
   * **Output Visualization:** Above the MLP block, a small bar chart is depicted inside square brackets `[ ]`. It contains five vertical red bars of varying heights. The first two bars are the tallest, followed by three shorter bars of roughly equal height.
   * **Coordinate Label:** Above the bar chart, the text "**Coordinate: (1,3)**" is displayed. The coordinate `(1,3)` is in red font.

3. **Contextual Text (Bottom):**
   * A line of black monospaced text is positioned below the main diagram: `... proceed until I hit a wall, at [masked] ...`
   * The token `[masked]` is highlighted in red font, matching the color of the coordinate output.
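The layer stack and the tap from `Layer 2` can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not BAGEL's implementation: the toy residual `tanh` layers, `make_layer`, `run_stack`, the stack depth of six, and the input vector are all hypothetical. The sketch collects every hidden state so that an external head can read the `Layer 2` output, and it checks that stopping the forward pass at the tapped layer yields the same representation.

```python
import math
import random
from typing import Callable, List, Optional

Vector = List[float]
Layer = Callable[[Vector], Vector]


def make_layer(dim: int, seed: int) -> Layer:
    """Hypothetical toy layer: residual connection around tanh(W x) with a fixed random W."""
    rng = random.Random(seed)
    scale = 1.0 / math.sqrt(dim)
    weights = [[rng.gauss(0.0, scale) for _ in range(dim)] for _ in range(dim)]

    def layer(x: Vector) -> Vector:
        # Output unit i is x[i] + tanh(sum_j W[i][j] * x[j]).
        return [xi + math.tanh(sum(w * xj for w, xj in zip(row, x)))
                for xi, row in zip(x, weights)]

    return layer


def run_stack(x: Vector, layers: List[Layer], stop_after: Optional[int] = None) -> List[Vector]:
    """Run the layers bottom to top and collect every hidden state.

    hidden[0] is the input and hidden[k] is the output of Layer k (1-indexed,
    matching the diagram's labels). If stop_after is set, layers above it are
    never computed.
    """
    hidden = [x]
    for k, layer in enumerate(layers, start=1):
        hidden.append(layer(hidden[-1]))
        if stop_after is not None and k == stop_after:
            break
    return hidden


TAP_LAYER = 2  # the diagram draws the MLP input from Layer 2

layers = [make_layer(dim=4, seed=s) for s in range(6)]  # N = 6 is an arbitrary choice
x = [0.5, -0.2, 0.1, 0.3]

full = run_stack(x, layers)
early = run_stack(x, layers, stop_after=TAP_LAYER)
print(len(full), len(early))                 # 7 3
print(early[TAP_LAYER] == full[TAP_LAYER])   # True
```

Because the `Layer 2` output depends only on the layers beneath it, a head that reads it never needs the upper layers to run.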

### Detailed Analysis

* **Spatial Grounding:** The BAGEL model block occupies the left and central portion of the image. The MLP head and its outputs are positioned to the right of the BAGEL block. The contextual text runs along the bottom edge.

* **Data Flow:** Information from `Layer 2` of the BAGEL model is fed into the MLP head. The MLP appears to produce two outputs: the coordinate `(1,3)` and a vector visualized as a bar chart.

* **Text Transcription:**
  * `BAGEL`
  * `Layer 1`
  * `Layer 2`
  * `Layer N`
  * `MLP`
  * `Coordinate: (1,3)`
  * `... proceed until I hit a wall, at [masked] ...`

* **Color Coding:**
  * **Blue:** Used for the BAGEL model name, logo, and the connecting arrow, associating these elements with the core model.
  * **Green:** Used for the MLP block, distinguishing it as a separate component.
  * **Red:** Used for the output coordinate `(1,3)`, the bars in the output visualization, and the `[masked]` token. This color highlights the model's specific outputs and the target of the prediction task.

### Key Observations

1. **Layer-Specific Extraction:** The connection is explicitly drawn from `Layer 2`, not the final `Layer N`. This suggests the architecture may use intermediate layer representations for specific tasks, rather than only the final output.

2. **Output Duality:** The MLP appears to produce both a discrete coordinate and a continuous vector (the bar chart). This could indicate a multi-task prediction head.

3. **Masked Token Context:** The text snippet with a `[masked]` token suggests a masked language modeling (MLM) or similar sequence-infilling task. Because the masked token follows "at", it most likely names a location, so the coordinate `(1,3)` plausibly fills that slot; alternatively, it may index the masked token's position within a sequence or feature map.
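If the MLP does emit two outputs, one common way to build such a head is a shared hidden layer with a separate output branch per task. The sketch below assumes exactly that; the class `TwoOutputMLPHead`, the layer sizes, the ReLU activation, and regressing the coordinate before rounding it to a grid cell are hypothetical choices that the diagram does not show. The vector branch has five outputs only to mirror the five bars in the chart. The weights are random and untrained, so the resulting coordinate carries no meaning.

```python
import random
from typing import List, Tuple

Vector = List[float]
Matrix = List[Vector]


def init_linear(n_out: int, n_in: int, rng: random.Random) -> Tuple[Matrix, Vector]:
    """Small random weights and zero biases for a dense layer."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


def linear(x: Vector, weights: Matrix, bias: Vector) -> Vector:
    """Dense layer: weights @ x + bias."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


class TwoOutputMLPHead:
    """Hypothetical multi-task head: one shared hidden layer feeding two output branches."""

    def __init__(self, d_in: int, d_hidden: int, d_vec: int = 5, seed: int = 0) -> None:
        rng = random.Random(seed)
        self.shared = init_linear(d_hidden, d_in, rng)
        self.coord_out = init_linear(2, d_hidden, rng)    # (row, col) regression branch
        self.vec_out = init_linear(d_vec, d_hidden, rng)  # vector branch, like the bar chart

    def __call__(self, h: Vector) -> Tuple[Tuple[float, float], Vector]:
        z = [max(0.0, v) for v in linear(h, *self.shared)]  # ReLU on the shared layer
        row, col = linear(z, *self.coord_out)
        return (row, col), linear(z, *self.vec_out)


head = TwoOutputMLPHead(d_in=4, d_hidden=8)
coord, vec = head([0.5, -0.2, 0.1, 0.3])            # h stands in for a Layer 2 hidden state
grid_cell = (round(coord[0]), round(coord[1]))      # snap the regression to a discrete cell
print(len(vec))  # 5
```

Sharing the hidden layer lets both outputs draw on the same features; the alternative of two independent MLPs would also fit the diagram.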

### Interpretation

This diagram illustrates a **BAGEL model** applied to a **sequence prediction task**, likely involving recovery of a masked token. Its most notable feature is the **intermediate layer connection**.

* **What it suggests:** The architecture does not simply use the final output of the BAGEL stack. Instead, it taps the representation at `Layer 2` and feeds it to a specialized MLP head. This could be for efficiency (the layers above `Layer 2` need not be computed to obtain this representation) or because `Layer 2` encodes features particularly suited to predicting the coordinate or the masked token.

* **Relationship of Elements:** The BAGEL core acts as a general-purpose feature extractor. The MLP is a task-specific "head" that interprets those features to produce a concrete output—the coordinate `(1,3)` and the associated vector. The red color linking the output coordinate and the `[masked]` token creates a direct visual and logical link: the model's output is the solution to the masked position in the input text.

* **Anomaly/Notable Pattern:** The output is a **coordinate `(1,3)`**, not a word. This is atypical for a standard language model. It suggests the task might be more complex than simple word prediction. Possibilities include:
  * The model is predicting the **position** (e.g., row 1, column 3) of the masked token in a structured input (like a table or image patch sequence).
  * The coordinate is a **latent variable** or **address** that points to the correct token in an external memory or embedding space.
  * The bar chart represents a probability distribution over possible tokens, and `(1,3)` is a key or index derived from that distribution.
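The first and third possibilities above can be combined in a short sketch: treat the head's output as a probability distribution over the cells of a grid, pick the most likely cell, and convert its flat index into a (row, column) pair. The grid size, the 1-indexed convention, the function `decode_cell`, and the example logits are assumptions chosen for illustration; the diagram specifies none of them.

```python
import math
from typing import List, Tuple


def softmax(logits: List[float]) -> List[float]:
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def decode_cell(logits: List[float], n_rows: int, n_cols: int) -> Tuple[Tuple[int, int], float]:
    """Map one logit per grid cell (row-major order) to a 1-indexed (row, col) and its probability."""
    if len(logits) != n_rows * n_cols:
        raise ValueError("expected one logit per grid cell")
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)  # argmax over flat cell indices
    row, col = divmod(best, n_cols)                       # flat index -> 0-indexed (row, col)
    return (row + 1, col + 1), probs[best]


# Illustrative logits for a 3-by-4 grid, peaked at flat index 2.
logits = [0.1] * 12
logits[2] = 3.0
cell, p = decode_cell(logits, n_rows=3, n_cols=4)
print(cell)  # (1, 3)
```

Flat index 2 in a 4-column grid is row 0, column 2 when counting from zero, which becomes `(1, 3)` under the 1-indexed convention assumed here.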

In essence, the diagram depicts a **hybrid system** in which a large foundation model (BAGEL) provides rich representations that a lightweight MLP then decodes for a precise, localized prediction task involving both a coordinate and a feature vector.