## Diagram: Neural Network Processing Text with Masked Token

### Overview

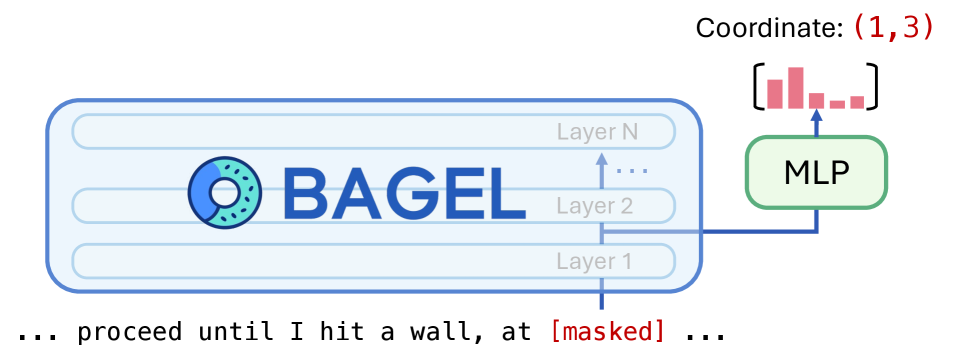

The image is a simplified diagram of a neural network processing text. The text "BAGEL" appears with a donut icon above a vertical stack of layers (Layer 1 to Layer N). A multi-layer perceptron (MLP) branches off Layer 2 and is linked to a bar chart, and a coordinate label, "(1,3)," sits in the top-right corner. A phrase at the bottom of the image contains a masked token highlighted in red.

### Components/Axes

- **Neural Network Layers**:

  - Labeled "Layer 1," "Layer 2," ..., "Layer N" in a vertical stack.

  - The input text "BAGEL" is positioned above the layers, with a blue and teal donut icon adjacent to it.

- **MLP**:

  - A green box labeled "MLP," connected to "Layer 2" by a blue arrow.

- **Coordinate and Bar Chart**:

  - "Coordinate: (1,3)" is written in red at the top-right corner.

  - A bar chart with pink bars is linked to the MLP, and an arrow points from the coordinate to the chart.

- **Masked Token**:

  - The phrase "proceed until I hit a wall, at [masked] ..." is written at the bottom, with "[masked]" in red.

### Detailed Analysis

- **Neural Network Structure**:

  - The input "BAGEL" is processed through a sequence of layers (Layer 1 to Layer N).

  - The MLP is connected to "Layer 2" rather than to the end of the stack, suggesting it reads that layer's output and applies its own transformation.

- **Coordinate (1,3)**:

  - The coordinate (1,3) sits in the top-right corner, with an arrow pointing to the bar chart. It could represent a specific position in the input or output sequence, or a reference to attention weights; the diagram does not say which.

- **Bar Chart**:

  - The pink bar chart is connected to the MLP, possibly visualizing attention scores, activation distributions, or other model outputs.

- **Masked Token**:

  - The phrase "proceed until I hit a wall, at [masked] ..." marks a position in a text sequence where a token is hidden (e.g., for training purposes or to simulate uncertainty).
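To make the idea of a masked token concrete, the following is a minimal sketch of hiding one token in a sequence. The function name `mask_token` and the example sentence are hypothetical and chosen only for illustration; the diagram does not show how masking is actually implemented, and the sketch does not attempt to guess the hidden token in the diagram's phrase.

```python
def mask_token(tokens, index, mask="[masked]"):
    """Return a copy of `tokens` with the token at `index` hidden,
    together with the original token that was hidden."""
    if not 0 <= index < len(tokens):
        raise IndexError("mask index out of range")
    masked = list(tokens)      # copy so the caller's list is untouched
    hidden = masked[index]     # keep the original token as the target
    masked[index] = mask
    return masked, hidden


# Hypothetical example sentence (not taken from the diagram).
example_tokens = ["the", "network", "reads", "text"]
masked_tokens, hidden_token = mask_token(example_tokens, 2)
print(" ".join(masked_tokens))  # the network [masked] text
```

In a training setup, the hidden token would typically serve as the target the model is asked to recover from the surrounding context.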

### Key Observations

- The MLP is connected to "Layer 2," not to the input or the final layer, implying that it processes intermediate representations.

- The coordinate (1,3) and the bar chart are linked by an arrow, which suggests the chart relates to that specific position or to a distribution associated with it.

- The masked token marks a position whose content is hidden from the model, as is done in masked-token training or evaluation.
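One possible reading of the observations above is that the MLP takes the hidden state of Layer 2 and maps it to a probability distribution, which the bar chart then displays. The sketch below illustrates only that reading. Everything in it is an assumption: the dimensions, the random weights, the ReLU and softmax choices, and names such as `mlp_probe` and `layer2_state` are hypothetical and are not confirmed by the diagram.

```python
import math
import random


def linear(x, weights, bias):
    """Dense layer: one output per weight row."""
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, bias)]


def relu(x):
    return [max(0.0, v) for v in x]


def softmax(x):
    """Numerically stable softmax; outputs sum to 1."""
    peak = max(x)
    exps = [math.exp(v - peak) for v in x]
    total = sum(exps)
    return [e / total for e in exps]


def mlp_probe(hidden, w1, b1, w2, b2):
    """Two-layer MLP mapping a hidden state to a distribution."""
    return softmax(linear(relu(linear(hidden, w1, b1)), w2, b2))


rng = random.Random(0)  # fixed seed so the sketch is repeatable


def rand_matrix(rows, cols):
    return [[rng.uniform(-1.0, 1.0) for _ in range(cols)] for _ in range(rows)]


# Assumed sizes, chosen only for illustration.
HIDDEN_DIM, PROBE_DIM, NUM_BARS = 8, 16, 5

# Stand-in for the intermediate representation produced by "Layer 2".
layer2_state = [rng.uniform(-1.0, 1.0) for _ in range(HIDDEN_DIM)]

w1, b1 = rand_matrix(PROBE_DIM, HIDDEN_DIM), [0.0] * PROBE_DIM
w2, b2 = rand_matrix(NUM_BARS, PROBE_DIM), [0.0] * NUM_BARS

probs = mlp_probe(layer2_state, w1, b1, w2, b2)

# Each value corresponds to one bar of a chart like the one in the diagram.
for i, p in enumerate(probs):
    print(f"bar {i}: {'#' * round(p * 40)} {p:.3f}")
```

With untrained random weights the distribution is meaningless; the point is only the data flow from an intermediate layer through an MLP to a set of bar heights.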

### Interpretation

This diagram illustrates how a neural network processes text through a stack of layers, with the MLP attached to Layer 2 as a side branch rather than as part of the main stack. The coordinate (1,3) and the bar chart may indicate attention mechanisms or positional analysis, though the diagram does not label either explicitly. The masked token suggests the model is expected to handle inputs with hidden tokens, a common technique in natural language processing. The donut icon next to "BAGEL" most plausibly just illustrates the word; nothing in the diagram indicates that it carries technical meaning, so any further reading is speculative without additional context.