EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

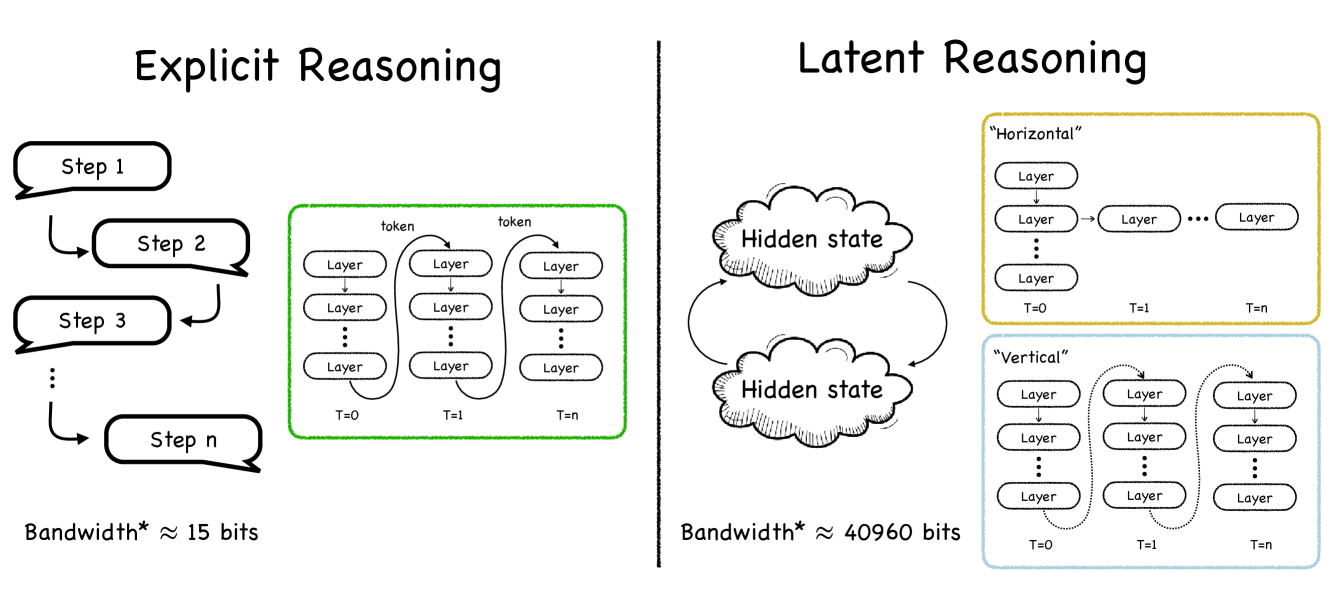

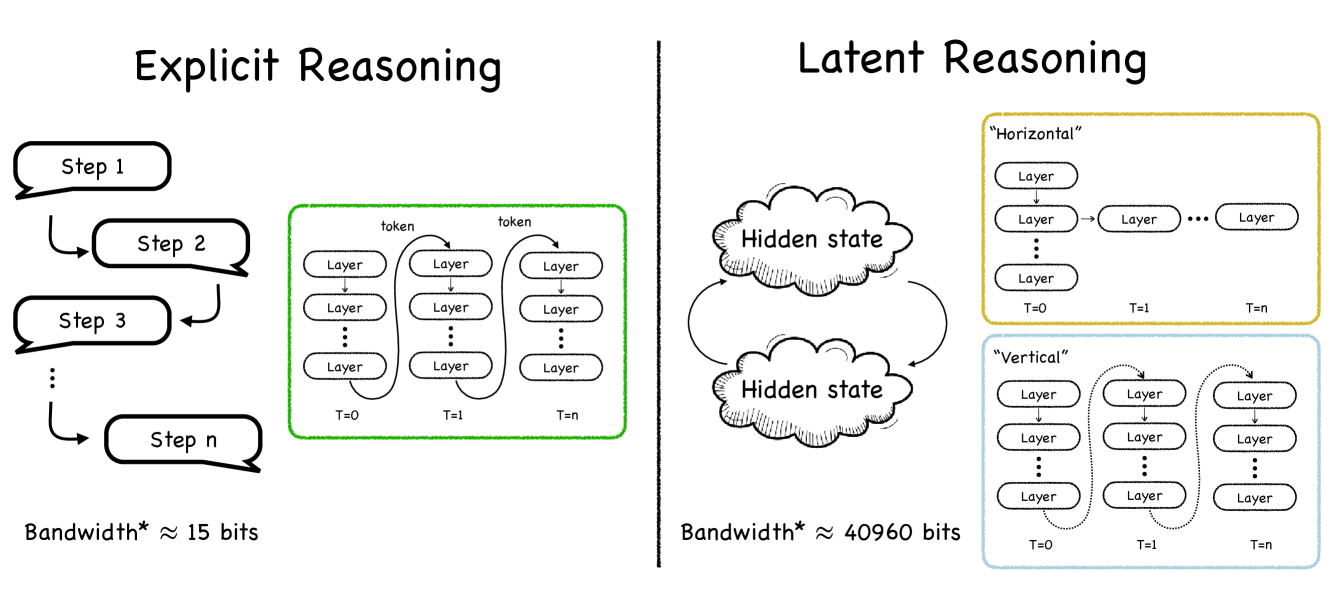

## Diagram: Comparison of Explicit vs. Latent Reasoning Architectures

### Overview

The image is a conceptual diagram comparing two paradigms of reasoning in artificial intelligence or computational models: **Explicit Reasoning** (left panel) and **Latent Reasoning** (right panel). The diagram uses flowcharts, architectural schematics, and quantitative annotations to illustrate the fundamental differences in process, structure, and information bandwidth between the two approaches.

### Components/Axes

The image is divided into two primary sections by a central vertical black line.

**Left Panel: Explicit Reasoning**

* **Title:** "Explicit Reasoning" (top center of left panel).

* **Flowchart:** A sequential process depicted by speech-bubble-like shapes labeled "Step 1", "Step 2", "Step 3", ..., "Step n". Arrows connect them in a linear, downward flow from Step 1 to Step n.

* **Architectural Schematic (Green Box):** A green-bordered rectangle containing a grid.

  * **Columns:** Three columns labeled "T=0", "T=1", and "T=n" at the bottom, representing discrete time steps.

  * **Rows:** Each column contains a vertical stack of rounded rectangles labeled "Layer". Within each stack, the layers are connected by vertical arrows pointing downward.

  * **Connections:** Horizontal arrows labeled "token" connect the top "Layer" of the T=0 column to the top "Layer" of the T=1 column, and likewise from T=1 to T=n. This indicates that information is passed sequentially across time steps in the form of tokens.

* **Quantitative Annotation:** Text at the bottom left reads: "Bandwidth* ≈ 15 bits".
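The token-passing pattern in the green box can be illustrated with a minimal toy sketch. Everything in it is an assumption made for illustration: the vocabulary size of 2^15 (chosen because it yields exactly 15 bits per token, which the diagram does not state as the source of its figure), the toy layer function, and the hypothetical `embed` and `to_token` helpers. It is not the model behind the diagram.

```python
import math
import random

VOCAB_SIZE = 32768   # assumed vocabulary size (2**15); not stated in the diagram
HIDDEN_DIM = 8       # toy width, chosen only to keep the example small
NUM_LAYERS = 3       # toy depth


def run_layers(hidden):
    """Stand-in for the vertical stack of "Layer" blocks at one time step."""
    for _ in range(NUM_LAYERS):
        hidden = [math.tanh(1.5 * h + 0.1) for h in hidden]
    return hidden


def embed(token_id):
    """Hypothetical embedding: a deterministic vector derived from a token id."""
    rng = random.Random(token_id)
    return [rng.uniform(-1.0, 1.0) for _ in range(HIDDEN_DIM)]


def to_token(hidden):
    """Collapse the hidden state to a single discrete token id (the bottleneck)."""
    score = sum((i + 1) * h for i, h in enumerate(hidden))
    return int(abs(score) * 1000) % VOCAB_SIZE


def explicit_reasoning(start_token, num_steps):
    """Run num_steps time steps; only a token id crosses from one step to the next."""
    tokens = [start_token]
    for _ in range(num_steps):
        hidden = run_layers(embed(tokens[-1]))
        tokens.append(to_token(hidden))
    return tokens


# Upper bound on information carried per step under the assumed vocabulary size.
bits_per_step = math.log2(VOCAB_SIZE)
print(bits_per_step)  # 15.0
print(explicit_reasoning(start_token=1, num_steps=4))
```

Whatever the hidden state contains at the end of a step, the next step sees only one integer below `VOCAB_SIZE`, which is the narrow channel the "Bandwidth*" annotation appears to refer to.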

**Right Panel: Latent Reasoning**

* **Title:** "Latent Reasoning" (top center of right panel).

* **Core Component:** Two cloud-shaped figures labeled "Hidden state", one above the other. Curved arrows form a cycle between them, indicating a recurrent or iterative process.

* **Architectural Schematics:** Two distinct boxes to the right of the hidden state clouds.

  * **"Horizontal" Box (Yellow Border):**

    * **Title:** "Horizontal" (top left inside box).

    * **Structure:** A horizontal chain of rounded rectangles labeled "Layer". The first "Layer" is at the top left, with a vertical arrow pointing down to a second "Layer". From this second layer, a horizontal arrow points right to another "Layer", followed by an ellipsis ("...") and a final "Layer".

    * **Time Labels:** "T=0" is below the first vertical stack, "T=1" below the second, and "T=n" below the final layer in the chain.

  * **"Vertical" Box (Blue Border):**

    * **Title:** "Vertical" (top left inside box).

    * **Structure:** Three columns, similar to the green box on the left, labeled "T=0", "T=1", "T=n" at the bottom.

    * **Internal Flow:** Each column is a vertical stack of "Layer" rectangles connected by downward arrows.

    * **Inter-Column Flow:** Dotted, curved arrows connect the bottom "Layer" of one column to the top "Layer" of the next column (e.g., from T=0 to T=1, and T=1 to T=n). This suggests a different, possibly recurrent, connection pattern compared to the explicit model.

* **Quantitative Annotation:** Text at the bottom right reads: "Bandwidth* ≈ 40960 bits".
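For contrast with token passing, the sketch below feeds the full hidden state back into the layer stack at every step, loosely following the inter-column flow described for the "Vertical" box. The toy layer function, the width, and the depth are all assumptions made for illustration; the diagram does not specify any of them.

```python
import math

HIDDEN_DIM = 8   # toy width; a real hidden state would be far wider
NUM_LAYERS = 3   # toy depth


def run_layers(hidden):
    """Stand-in for one column of stacked "Layer" blocks."""
    for depth in range(NUM_LAYERS):
        hidden = [math.tanh(h + 0.1 * (depth + 1)) for h in hidden]
    return hidden


def latent_reasoning(hidden, num_steps):
    """Pass the whole hidden vector from step to step, with no token in between."""
    trajectory = [hidden]
    for _ in range(num_steps):
        hidden = run_layers(hidden)   # output of one column feeds the next column
        trajectory.append(hidden)
    return trajectory


states = latent_reasoning([0.0] * HIDDEN_DIM, num_steps=4)
print(len(states), len(states[-1]))  # 5 8
```

Here every component of the vector survives the hop between steps, so the channel width scales with the hidden dimension and its numeric precision instead of with the vocabulary size.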

### Detailed Analysis

The diagram contrasts the two reasoning methods across several dimensions:

1. **Process Flow:**

   * **Explicit:** Linear and sequential. Reasoning is broken down into discrete, interpretable steps (Step 1 to Step n) that are executed in order.

   * **Latent:** Cyclic and state-based. Reasoning occurs within and between "Hidden state" representations, without an explicit, human-readable step sequence.

2. **Architectural Implementation:**

   * **Explicit (Green Box):** Uses a standard feed-forward or transformer-like block applied sequentially across time steps. Information is passed explicitly via "tokens" from one time step's processing to the next.

   * **Latent (Yellow & Blue Boxes):** Presents two potential implementations:

     * **Horizontal:** Processes information in a chain across time, where the output of one layer at time T becomes the input to a layer at time T+1.

     * **Vertical:** Processes information through deep stacks at each time step, with recurrent connections (dotted arrows) linking the deep states across time.

3. **Information Bandwidth:**

   * The most striking quantitative difference is the annotated bandwidth.

   * **Explicit Reasoning:** ≈ **15 bits**. This low value suggests the "channel" for explicit steps is narrow, possibly referring to the limited information content of a discrete, symbolic token or step.

   * **Latent Reasoning:** ≈ **40960 bits**. This is approximately 2,731 times the explicit bandwidth (40960 / 15 ≈ 2730.7). It implies that the hidden state vectors can encode a large amount of dense, continuous information, enabling more complex and nuanced reasoning computations.
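The two bandwidth figures can be checked with a few lines of arithmetic. The diagram gives only the totals, so the decomposition below is an assumption: 15 bits matches one token from a vocabulary of 2^15 entries, and 40960 bits matches a 2560-dimensional hidden state stored at 16 bits per value. Other combinations would produce the same numbers.

```python
import math

# Figures taken from the diagram's annotations.
EXPLICIT_BITS = 15
LATENT_BITS = 40960

# Assumed decomposition (not stated in the diagram).
vocab_size = 2 ** EXPLICIT_BITS   # 32768 possible tokens
hidden_dim = 2560                 # assumed hidden-state width
bits_per_value = 16               # assumed precision, e.g. 16-bit floats

# Check that the assumptions reproduce the annotated totals.
assert math.log2(vocab_size) == EXPLICIT_BITS
assert hidden_dim * bits_per_value == LATENT_BITS

ratio = LATENT_BITS / EXPLICIT_BITS
print(f"{ratio:.1f}")  # 2730.7
```

These are upper bounds on raw channel width, not measurements of how much usable information a model actually transmits per step.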

### Key Observations

* **Visual Metaphor:** Explicit reasoning is drawn with clear, connected shapes (steps, boxes), emphasizing transparency and structure. Latent reasoning uses clouds for hidden states, emphasizing opacity and distributed representation.

* **Color Coding:** Green is used for the explicit architecture, while yellow and blue differentiate the two latent architecture variants.

* **Temporal Representation:** Both models operate over time steps (T=0, T=1, T=n), but the mechanism of information flow across these steps differs fundamentally (token passing vs. state recurrence).

* **Bandwidth Disparity:** The 40960-bit vs. 15-bit comparison is the central quantitative claim, highlighting a massive difference in the potential information processing capacity of the two approaches.

### Interpretation

This diagram illustrates a core trade-off in AI system design between **interpretability** and **computational power/capacity**.

* **Explicit Reasoning** prioritizes transparency. Its step-by-step nature makes the reasoning process auditable and understandable to humans, but it is constrained by a low-bandwidth interface (like language), which may limit the complexity and efficiency of the reasoning it can perform.

* **Latent Reasoning** prioritizes capacity and efficiency. By performing computations in high-dimensional, continuous hidden states, it can carry far more information per step (as suggested by the 40960-bit bandwidth). This allows for potentially more powerful and nuanced reasoning. However, the process is opaque: the "reasoning" is latent within the network's activations and is not directly observable or easily explainable.

The two latent architecture sketches ("Horizontal" and "Vertical") suggest there are multiple ways to implement high-bandwidth latent reasoning, possibly corresponding to different neural network designs (e.g., recurrent networks vs. deep transformers with recurrence). The diagram posits that moving from explicit to latent reasoning is not just a change in process but a fundamental shift in the information channel's capacity, enabling qualitatively different kinds of computation.
