## Diagram: Explicit vs. Latent Reasoning Architectures

### Overview

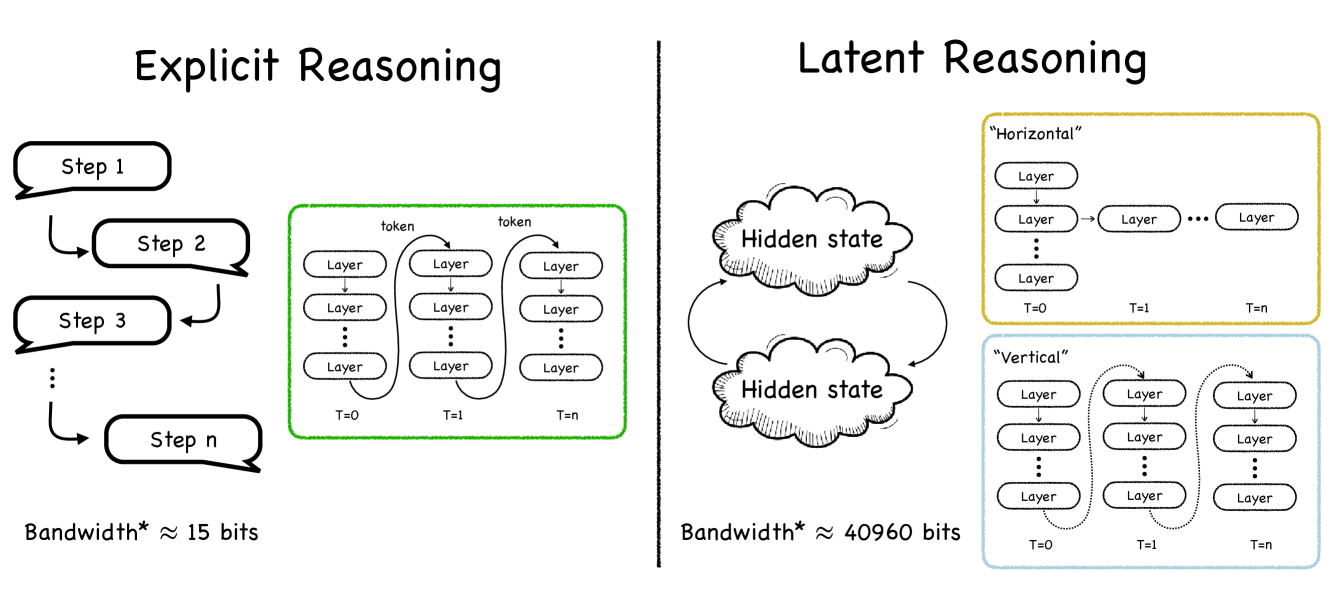

The diagram contrasts two reasoning architectures: **Explicit Reasoning** (left) and **Latent Reasoning** (right). Explicit Reasoning proceeds through sequential token-level steps with low bandwidth (≈15 bits), while Latent Reasoning carries a hidden state through horizontal and vertical processing with much higher bandwidth (≈40,960 bits).

### Components/Axes

#### Explicit Reasoning (Left)

- **Steps**: Sequential process labeled "Step 1" → "Step 2" → ... → "Step n" (arrows indicate flow).

- **Layers**: Green box containing stacked layers labeled "Layer" (repeated vertically).

- **Tokens**: Each layer processes "token" inputs (arrows connect tokens to layers).

- **Time Steps**: Labeled "T=0" (input) to "T=n" (output).

- **Bandwidth**: Annotated as ≈15 bits.

#### Latent Reasoning (Right)

- **Hidden State**: Central cloud labeled "Hidden state" with bidirectional arrows to/from itself.

- **Horizontal Processing**:

  - Yellow box labeled "Horizontal" with sequential layers (T=0 → T=1 → ... → T=n).

  - Layers connected via arrows, forming a linear chain.

- **Vertical Processing**:

  - Blue box labeled "Vertical" with stacked layers (T=0 at the bottom, T=n at the top).

  - Layers connected vertically via arrows.

- **Bandwidth**: Annotated as ≈40,960 bits.

### Detailed Analysis

- **Explicit Reasoning**:

  - Sequential steps feed into a layered system where tokens are processed across time steps (T=0 to T=n).

  - Bandwidth is annotated as ≈15 bits, suggesting that little information passes from one step to the next.

- **Latent Reasoning**:

  - **Hidden State**: Acts as a central node with self-feedback loops, enabling dynamic state updates.

  - **Horizontal Processing**: Linear progression of layers across time steps (T=0 to T=n), resembling a recurrent or sequential model.

  - **Vertical Processing**: Layers stacked from T=0 (bottom) to T=n (top), suggesting hierarchical processing through depth.

  - Bandwidth is ≈40,960 bits, roughly 2,730x the Explicit Reasoning figure (40,960 / 15 ≈ 2,731), indicating that far more information is carried per step.
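The ratio quoted above follows directly from the two annotated figures. The short Python check below reproduces it. The vocabulary size, hidden dimension, and 16-bit precision are assumptions, chosen only because they happen to reproduce the annotated numbers; the diagram itself does not state where 15 and 40,960 come from.

```python
import math

# Per-step bandwidth figures annotated in the diagram.
EXPLICIT_BITS = 15
LATENT_BITS = 40_960

ratio = LATENT_BITS / EXPLICIT_BITS
print(f"ratio ≈ {ratio:.1f}x")

# Assumption (not stated in the diagram): one token chosen from a
# vocabulary of 2**15 = 32,768 entries carries at most 15 bits.
assumed_vocab_size = 2 ** 15
token_bits = math.log2(assumed_vocab_size)

# Assumption (not stated in the diagram): a hidden state of 2,560 values
# stored at 16 bits each occupies 2,560 * 16 = 40,960 bits.
assumed_hidden_dim = 2560
assumed_bits_per_value = 16
hidden_bits = assumed_hidden_dim * assumed_bits_per_value
```

Under these assumptions the two annotations correspond to one discrete token versus one full hidden-state vector per step, which is one plausible reading of the gap rather than a confirmed one.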

### Key Observations

1. **Bandwidth Disparity**: Latent Reasoning’s bandwidth is ~2,730x greater than Explicit Reasoning’s (≈40,960 bits vs. ≈15 bits), implying that each latent step can carry far more information than an explicit step.

2. **Architectural Trade-offs**:

   - Explicit Reasoning prioritizes simplicity (sequential steps) but is limited by its low per-step bandwidth.

   - Latent Reasoning uses hidden states with horizontal and vertical processing to achieve high bandwidth but introduces complexity.

3. **Hidden State Role**: The bidirectional arrows in the hidden state suggest it retains and updates information across time steps, enabling context-aware processing.
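As a rough illustration of the contrast in these observations, the toy Python sketch below applies the same update in two ways: once collapsing the state to a single token after every step, and once carrying the full state forward. All function names and the update rule are hypothetical stand-ins and are not taken from the diagram.

```python
def layer_stack(state):
    """Toy stand-in for the stacked layers: a fixed deterministic update."""
    return [0.5 * x + 0.1 * i for i, x in enumerate(state)]


def to_token(state):
    """Collapse a state vector to one discrete symbol (index of the largest entry)."""
    return max(range(len(state)), key=lambda i: state[i])


def embed(token, dim):
    """One-hot embedding of a token id."""
    return [1.0 if i == token else 0.0 for i in range(dim)]


def explicit_reasoning(state, steps):
    """Each step emits a token; only that token reaches the next step."""
    trace = []
    for _ in range(steps):
        state = layer_stack(state)
        token = to_token(state)
        trace.append(token)
        # Everything in the state except the chosen token is discarded here.
        state = embed(token, len(state))
    return trace


def latent_reasoning(state, steps):
    """The full hidden state is carried to the next step; no tokens are emitted."""
    for _ in range(steps):
        state = layer_stack(state)
    return state


start = [0.2, 0.9, 0.1, 0.4]
tokens = explicit_reasoning(start, steps=3)       # readable trace of token ids
final_state = latent_reasoning(start, steps=3)    # opaque vector, no trace
```

The explicit loop yields an inspectable list of tokens but passes only one symbol between steps, whereas the latent loop keeps every value in the state and exposes nothing in between, which mirrors the bandwidth versus transparency contrast the diagram suggests.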

### Interpretation

- **Explicit Reasoning** mirrors traditional step-by-step algorithms (e.g., rule-based systems) with minimal state retention. Its low bandwidth limits scalability.

- **Latent Reasoning** resembles modern neural architectures (e.g., transformers or RNNs) where hidden states enable memory and parallelism. The horizontal/vertical split may represent:

  - **Horizontal**: Temporal sequence modeling (e.g., language processing).

  - **Vertical**: Feature hierarchy extraction (e.g., image layers).

- The hidden state’s self-feedback loops highlight its role in maintaining context, critical for tasks requiring long-term dependencies.

- The bandwidth difference underscores how much more information latent methods can pass between steps, though at the cost of interpretability (e.g., the "black box" nature of neural networks).

This diagram likely illustrates a trade-off between interpretability (Explicit) and efficiency/scalability (Latent), common in AI/ML system design.