## Scatter Plot: AI Model Accuracy Comparison

### Overview

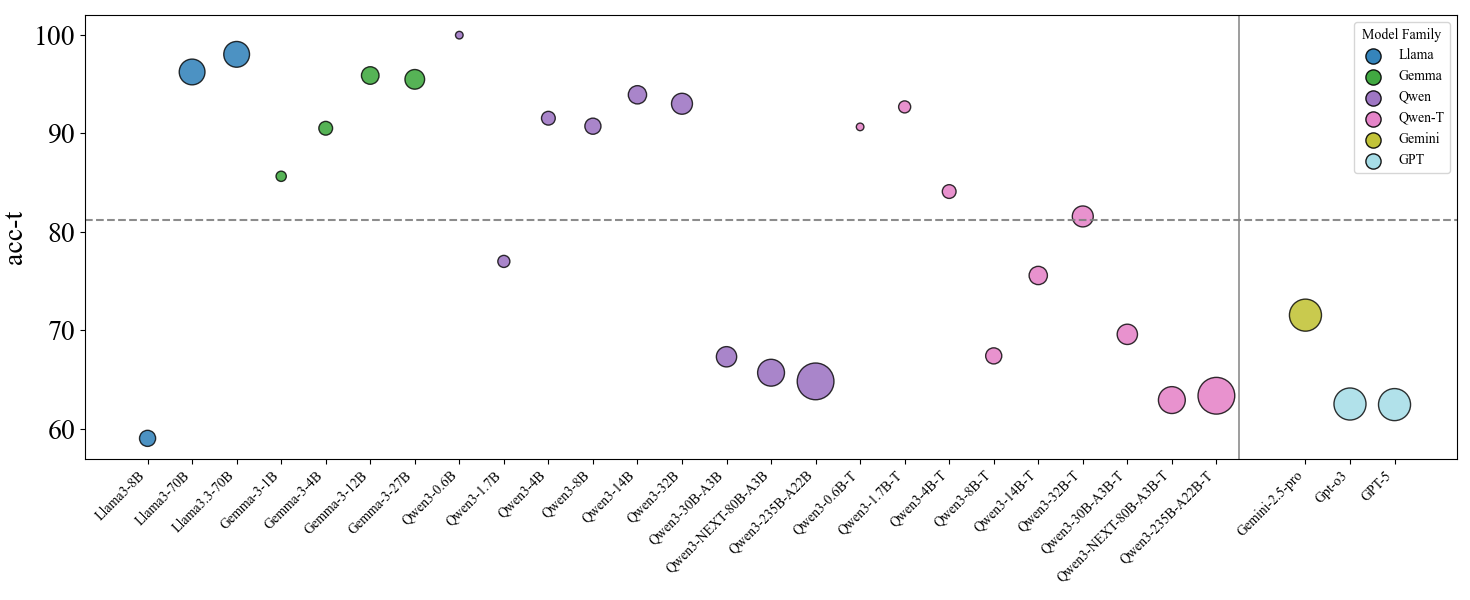

This image is a scatter plot comparing the accuracy (acc-t) of various large language models (LLMs) from different model families. The chart uses colored bubbles to represent individual models, with the bubble size likely corresponding to model size (parameter count) or another scaling metric. The plot is divided into two sections by a vertical gray line, separating the main comparison group from three additional models on the far right.

### Components/Axes

* **Y-Axis:** Labeled "acc-t" (likely accuracy on a specific task or benchmark). The scale runs from 60 to 100, with major tick marks at 60, 70, 80, 90, and 100. A horizontal dashed gray line is drawn at acc-t = 80.

* **X-Axis:** Lists the names of specific AI models. The labels are rotated for readability. From left to right, the models are:

    * Llama3-8B, Llama3-70B, Llama3.3-70B

    * Gemma-3-1B, Gemma-3-4B, Gemma-3-12B, Gemma-3-27B

    * Qwen3-0.6B, Qwen3-1.7B, Qwen3-4B, Qwen3-8B, Qwen3-14B, Qwen3-32B, Qwen3-30B-A3B, Qwen3-NEXT-80B-A3B, Qwen3-235B-A22B

    * Qwen3-0.6B-T, Qwen3-1.7B-T, Qwen3-4B-T, Qwen3-8B-T, Qwen3-14B-T, Qwen3-32B-T, Qwen3-30B-A3B-T, Qwen3-NEXT-80B-A3B-T, Qwen3-235B-A22B-T

    * (After vertical divider) Gemini-2.5-pro, Gpt-o3, GPT-5

* **Legend:** Located in the top-right corner, titled "Model Family". It maps colors to model families:

    * **Blue:** Llama

    * **Green:** Gemma

    * **Purple:** Qwen

    * **Pink:** Qwen-T (likely a variant, possibly "Turbo" or "Tuned")

    * **Yellow-Green:** Gemini

    * **Light Blue:** GPT

### Detailed Analysis

**Data Points (Approximate acc-t values, grouped by family):**

* **Llama Family (Blue):**

    * Llama3-8B: ~59

    * Llama3-70B: ~96

    * Llama3.3-70B: ~98

* **Gemma Family (Green):**

    * Gemma-3-1B: ~86

    * Gemma-3-4B: ~91

    * Gemma-3-12B: ~96

    * Gemma-3-27B: ~96

* **Qwen Family (Purple):**

    * Qwen3-0.6B: ~100 (appears as a small dot at the very top)

    * Qwen3-1.7B: ~77

    * Qwen3-4B: ~92

    * Qwen3-8B: ~91

    * Qwen3-14B: ~94

    * Qwen3-32B: ~93

    * Qwen3-30B-A3B: ~67

    * Qwen3-NEXT-80B-A3B: ~66

    * Qwen3-235B-A22B: ~65

* **Qwen-T Family (Pink):**

    * Qwen3-0.6B-T: ~91

    * Qwen3-1.7B-T: ~93

    * Qwen3-4B-T: ~84

    * Qwen3-8B-T: ~67

    * Qwen3-14B-T: ~76

    * Qwen3-32B-T: ~82

    * Qwen3-30B-A3B-T: ~70

    * Qwen3-NEXT-80B-A3B-T: ~63

    * Qwen3-235B-A22B-T: ~64

* **Gemini Family (Yellow-Green):**

    * Gemini-2.5-pro: ~72

* **GPT Family (Light Blue):**

    * Gpt-o3: ~63

    * GPT-5: ~63

**Bubble Size Observation:** Bubble size generally tracks the parameter count indicated in the model name (e.g., Llama3.3-70B, Gemma-3-27B, and Qwen3-235B-A22B have large bubbles). The mapping is not perfectly proportional, and bubble size does not predict accuracy: some high-accuracy models (like Qwen3-0.6B) have very small bubbles.
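
The approximate readings listed above can be collected into a small lookup table and summarized per family. The following is a minimal sketch, not part of the original figure: the names `ACC_T`, `THRESHOLD`, and `summarize` are hypothetical, and the numbers are the visual estimates from this section rather than exact benchmark results.

```python
from statistics import mean

# Approximate acc-t values read off the chart (visual estimates, not exact data).
ACC_T = {
    "Llama": {"Llama3-8B": 59, "Llama3-70B": 96, "Llama3.3-70B": 98},
    "Gemma": {"Gemma-3-1B": 86, "Gemma-3-4B": 91, "Gemma-3-12B": 96, "Gemma-3-27B": 96},
    "Qwen": {
        "Qwen3-0.6B": 100, "Qwen3-1.7B": 77, "Qwen3-4B": 92, "Qwen3-8B": 91,
        "Qwen3-14B": 94, "Qwen3-32B": 93, "Qwen3-30B-A3B": 67,
        "Qwen3-NEXT-80B-A3B": 66, "Qwen3-235B-A22B": 65,
    },
    "Qwen-T": {
        "Qwen3-0.6B-T": 91, "Qwen3-1.7B-T": 93, "Qwen3-4B-T": 84, "Qwen3-8B-T": 67,
        "Qwen3-14B-T": 76, "Qwen3-32B-T": 82, "Qwen3-30B-A3B-T": 70,
        "Qwen3-NEXT-80B-A3B-T": 63, "Qwen3-235B-A22B-T": 64,
    },
    "Gemini": {"Gemini-2.5-pro": 72},
    "GPT": {"Gpt-o3": 63, "GPT-5": 63},
}

THRESHOLD = 80  # position of the dashed horizontal line on the y-axis


def summarize(data, threshold=THRESHOLD):
    """Return {family: (min, max, mean, n_above_threshold, n_models)}."""
    summary = {}
    for family, scores in data.items():
        values = list(scores.values())
        above = sum(v > threshold for v in values)
        summary[family] = (min(values), max(values), mean(values), above, len(values))
    return summary


for family, (lo, hi, avg, above, n) in summarize(ACC_T).items():
    print(f"{family:8s} min={lo:3d} max={hi:3d} mean={avg:5.1f} above {THRESHOLD}: {above}/{n}")
```

Because every value is an eyeball estimate, differences of a point or two between models should not be treated as meaningful.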

### Key Observations

1. **Performance Spread:** Accuracy ranges widely, from ~59 (Llama3-8B) to ~100 (Qwen3-0.6B). A slight majority of the plotted models (15 of 28, by the approximate values above) sit above the dashed line at acc-t = 80.

2. **Family Trends:**

    * **Llama & Gemma:** Show a clear positive trend where larger models (70B, 27B) achieve very high accuracy (>95).

    * **Qwen (Purple):** Shows a bimodal distribution. The standard Qwen3 models (0.6B to 32B) generally perform well (>90), except for the 1.7B model. The larger "Mixture-of-Experts" style models (30B-A3B, NEXT-80B-A3B, 235B-A22B) show a significant drop in accuracy, clustering between 65-67.

    * **Qwen-T (Pink):** Exhibits high variance. The smaller T models (0.6B-T, 1.7B-T) perform very well (~91-93), but performance degrades erratically with size, with several models falling below the 80 line.

3. **Outliers:**

    * **Qwen3-0.6B (Purple):** Achieves the highest apparent accuracy (~100) despite being the smallest model in its family (tiny bubble). This is a significant outlier.

    * **Qwen3-30B-A3B and similar (Purple):** The large MoE models underperform dramatically compared to smaller dense models from the same family.

    * **Gemini-2.5-pro & GPT models:** Positioned to the right of the divider, these models show moderate to lower accuracy (~63-72) in this specific comparison.
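
The dense-versus-MoE split described for the base Qwen3 models can be checked with a few lines of arithmetic. This is a rough sketch under two assumptions: the values are the approximate chart readings, and a name ending in `-A<n>B` is taken to mark a Mixture-of-Experts model (the helper `is_moe` and this naming heuristic are illustrative, not something the chart itself states).

```python
import re
from statistics import mean

# Approximate acc-t values for the base Qwen3 models (visual estimates).
QWEN3 = {
    "Qwen3-0.6B": 100, "Qwen3-1.7B": 77, "Qwen3-4B": 92, "Qwen3-8B": 91,
    "Qwen3-14B": 94, "Qwen3-32B": 93, "Qwen3-30B-A3B": 67,
    "Qwen3-NEXT-80B-A3B": 66, "Qwen3-235B-A22B": 65,
}


def is_moe(name):
    """Assumed heuristic: a trailing '-A<n>B' (active parameters) marks an MoE model."""
    return re.search(r"-A\d+B$", name) is not None


dense = [acc for name, acc in QWEN3.items() if not is_moe(name)]
moe = [acc for name, acc in QWEN3.items() if is_moe(name)]

dense_mean = mean(dense)
moe_mean = mean(moe)

print(f"dense: n={len(dense)} mean={dense_mean:.1f}")
print(f"MoE:   n={len(moe)} mean={moe_mean:.1f}")
print(f"gap:   {dense_mean - moe_mean:.1f} points")
```

With only three MoE points and estimated values, the gap indicates a pattern in this chart, not a general property of MoE architectures.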

### Interpretation

This chart visualizes a benchmark comparison that challenges simple assumptions about model size and performance.

* **Size vs. Accuracy:** While there's a general trend for larger models within the Llama and Gemma families to perform better, this relationship breaks down completely for the Qwen families. The Qwen3-0.6B model's top performance suggests that for this specific "acc-t" task, architectural efficiency or training data quality can trump raw parameter count.

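* **The "T" Variant Impact:** The Qwen-T series shows that applying a specific modification (the "T" variant) makes performance unstable rather than uniformly better or worse. By the approximate values above, it raises accuracy for Qwen3-1.7B (~77 to ~93) and slightly for Qwen3-30B-A3B (~67 to ~70), but lowers it for the other seven sizes, including the smallest (0.6B: ~100 to ~91) and most sharply the 8B model (~91 to ~67). The modification's effect is therefore highly context-dependent.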

* **MoE Model Underperformance:** The poor showing of Qwen's large Mixture-of-Experts models (A3B, A22B) is striking. It implies that for this particular evaluation metric, the sparse activation of MoE models may be a disadvantage compared to dense models, or that these specific MoE architectures are not optimized for this task.

* **Benchmark Context:** The dashed line at 80 likely represents a human-performance baseline or a target threshold. Many models exceed it, but the top performers are not exclusively the largest ones. The placement of commercial models like Gemini and GPT on the lower end suggests this benchmark may measure a specific capability where open-weight models currently excel, or that the evaluation setup differs from standard commercial benchmarks.
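
The effect of the "T" variant can be made concrete by pairing each base Qwen3 model with its "-T" counterpart and taking the difference. The sketch below assumes the approximate chart readings and uses hypothetical names (`PAIRS`, `t_deltas`); it only illustrates the comparison and says nothing about what the "T" modification actually is.

```python
# Approximate acc-t values per model size: (base Qwen3, Qwen3 "-T" variant).
# These are visual estimates from the chart, not exact measurements.
PAIRS = {
    "0.6B": (100, 91),
    "1.7B": (77, 93),
    "4B": (92, 84),
    "8B": (91, 67),
    "14B": (94, 76),
    "32B": (93, 82),
    "30B-A3B": (67, 70),
    "NEXT-80B-A3B": (66, 63),
    "235B-A22B": (65, 64),
}


def t_deltas(pairs):
    """Return {size: T-variant accuracy minus base accuracy}."""
    return {size: t - base for size, (base, t) in pairs.items()}


deltas = t_deltas(PAIRS)
improved = [size for size, d in deltas.items() if d > 0]
worst = min(deltas, key=deltas.get)

for size, d in deltas.items():
    print(f"Qwen3-{size:13s} delta = {d:+d}")
print("sizes where T helps:", improved)
print("largest drop:", worst, deltas[worst])
```

A signed per-size difference is used instead of a family average because the averages hide the fact that the variant moves individual sizes in opposite directions.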

In summary, the data suggests that model family, architecture (dense vs. MoE), and specific variant ("T") are more critical predictors of performance on this "acc-t" task than model size alone. The outlier performance of the tiny Qwen3-0.6B model is the most notable finding, warranting investigation into its training methodology.