## Bar Chart: BLEU Score Comparison for Different Models

### Overview

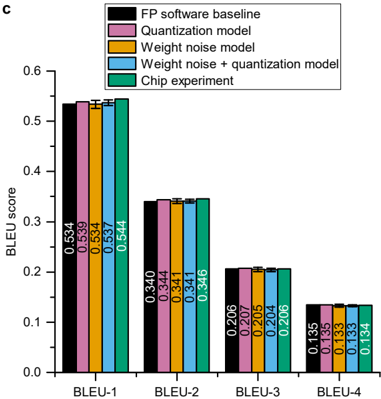

This image presents a grouped bar chart comparing BLEU scores on four metrics (BLEU-1, BLEU-2, BLEU-3, and BLEU-4) for five models: FP software baseline, Quantization model, Weight noise model, Weight noise + quantization model, and Chip experiment. The first four models are plotted for every metric, while the Chip experiment appears only for BLEU-1.

### Components/Axes

* **X-axis:** BLEU metric (BLEU-1, BLEU-2, BLEU-3, BLEU-4)

* **Y-axis:** BLEU score (ranging from 0.0 to 0.6)

* **Legend:** Located at the top-left corner, identifying each model with a corresponding color:

    * Black: FP software baseline

    * Pink: Quantization model

    * Light Blue: Weight noise model

    * Blue: Weight noise + quantization model

    * Green: Chip experiment

### Detailed Analysis

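The chart consists of four groups of bars, one for each BLEU metric. The BLEU-1 group contains five bars, one for each model; the other three groups contain four bars each, because the Chip experiment is plotted only for BLEU-1.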

**BLEU-1:**

* FP software baseline: Approximately 0.534

* Quantization model: Approximately 0.539

* Weight noise model: Approximately 0.534

* Weight noise + quantization model: Approximately 0.537

* Chip experiment: Approximately 0.544

*Trend:* All models score within about 0.01 of one another, with the Chip experiment slightly highest.

**BLEU-2:**

* FP software baseline: Approximately 0.340

* Quantization model: Approximately 0.341

* Weight noise model: Approximately 0.341

* Weight noise + quantization model: Approximately 0.346

* Chip experiment: Not present.

*Trend:* The models are closely clustered (approximately 0.340 to 0.346), with the Weight noise + quantization model slightly highest.

**BLEU-3:**

* FP software baseline: Approximately 0.206

* Quantization model: Approximately 0.207

* Weight noise model: Approximately 0.205

* Weight noise + quantization model: Approximately 0.204

* Chip experiment: Not present.

*Trend:* The models are very close in performance (approximately 0.204 to 0.207).

**BLEU-4:**

* FP software baseline: Approximately 0.135

* Quantization model: Approximately 0.133

* Weight noise model: Approximately 0.133

* Weight noise + quantization model: Approximately 0.134

* Chip experiment: Not present.

*Trend:* The models are very close in performance (approximately 0.133 to 0.135).

### Key Observations

* The FP software baseline stays within about 0.01 of the top score on every BLEU metric, but it is itself the top scorer only on BLEU-4.

* The Chip experiment shows the highest BLEU-1 score (approximately 0.544).

* The Weight noise + quantization model shows a slight improvement over the other models for BLEU-2.

* The absolute differences in BLEU scores between the models tend to become smaller from BLEU-1 to BLEU-4, as the scores themselves decrease.

* The Chip experiment is not present for BLEU-2, BLEU-3, and BLEU-4.
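The observations above can be checked with a few lines of arithmetic. The following is a minimal sketch, assuming the approximate values listed under Detailed Analysis; the names `scores`, `spread`, `spreads`, and `leaders` are illustrative and not part of the original figure. The Chip experiment is excluded from the spread so that the same four models are compared on every metric.

```python
# Approximate values read from the chart, copied from the Detailed Analysis lists.
# The Chip experiment appears only under BLEU-1 because it has no other bars.
scores = {
    "BLEU-1": {
        "FP software baseline": 0.534,
        "Quantization model": 0.539,
        "Weight noise model": 0.534,
        "Weight noise + quantization model": 0.537,
        "Chip experiment": 0.544,
    },
    "BLEU-2": {
        "FP software baseline": 0.340,
        "Quantization model": 0.341,
        "Weight noise model": 0.341,
        "Weight noise + quantization model": 0.346,
    },
    "BLEU-3": {
        "FP software baseline": 0.206,
        "Quantization model": 0.207,
        "Weight noise model": 0.205,
        "Weight noise + quantization model": 0.204,
    },
    "BLEU-4": {
        "FP software baseline": 0.135,
        "Quantization model": 0.133,
        "Weight noise model": 0.133,
        "Weight noise + quantization model": 0.134,
    },
}

CHIP = "Chip experiment"


def spread(metric_scores, exclude=()):
    """Return max minus min over the models that are not excluded."""
    values = [v for name, v in metric_scores.items() if name not in exclude]
    return round(max(values) - min(values), 3)


# Spread among the four models that are plotted for every metric.
spreads = {m: spread(s, exclude=(CHIP,)) for m, s in scores.items()}
# Highest-scoring model per metric, with the Chip experiment included where present.
leaders = {m: max(s, key=s.get) for m, s in scores.items()}

for metric in scores:
    print(f"{metric}: spread={spreads[metric]:.3f}, highest={leaders[metric]}")
```

With these readings, the spread among the four models present on every metric is about 0.005, 0.006, 0.003, and 0.002 for BLEU-1 through BLEU-4. Because the inputs are approximate readings from a chart, differences of this size are close to the reading precision and should be treated with caution.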

### Interpretation

The data suggests that the Chip experiment scores highest on BLEU-1, which measures unigram (single-word) precision, although its margin over the other models is only about 0.005 to 0.01. The Weight noise + quantization model shows a similarly slight advantage on BLEU-2, which adds bigram precision. From BLEU-1 to BLEU-4 the scores of all models fall, from roughly 0.54 to roughly 0.13, and the gaps between models narrow, indicating that matching longer n-grams becomes more challenging for all approaches.

The absence of Chip experiment data for BLEU-2, BLEU-3, and BLEU-4 could reflect the evaluation setup or simply that those values were not reported; the chart alone does not support a conclusion about the chip's performance on longer n-grams. Overall, while individual models have slight advantages on specific metrics, performance is very similar across all of them. This could indicate that the models are capturing similar aspects of language quality, or that the BLEU metric itself may not be sensitive enough to capture subtle differences in performance.
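To illustrate why scores typically fall as n grows, here is a minimal sketch of clipped n-gram precision, the quantity that BLEU builds on. It is not the evaluation used to produce the chart: the helper names `ngrams` and `clipped_precision` and the two example sentences are made up for illustration, and BLEU-n as commonly reported additionally combines the precisions for orders 1 through n and applies a brevity penalty.

```python
from collections import Counter


def ngrams(tokens, n):
    """Return all contiguous n-grams of a token list as tuples."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def clipped_precision(candidate, reference, n):
    """Fraction of candidate n-grams that also occur in the reference.

    Each candidate n-gram is counted at most as often as it appears
    in the reference (the "clipping" step).
    """
    cand_counts = Counter(ngrams(candidate, n))
    ref_counts = Counter(ngrams(reference, n))
    total = sum(cand_counts.values())
    if total == 0:
        return 0.0
    matched = sum(min(count, ref_counts[gram]) for gram, count in cand_counts.items())
    return matched / total


# Hypothetical sentences, chosen only to show the effect of n.
candidate = "a man rides a horse on the beach".split()
reference = "a man is riding a horse on the beach".split()

precisions = [clipped_precision(candidate, reference, n) for n in range(1, 5)]
for n, p in enumerate(precisions, start=1):
    print(f"{n}-gram precision: {p:.3f}")
```

In this toy example a single differing phrase ("rides" versus "is riding") removes one unigram match but breaks several longer n-grams, so the precision drops from 7/8 for unigrams to 2/5 for 4-grams.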