TECHNICAL ASSET FINGERPRINT

877dec7b071c206b33deeda1



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

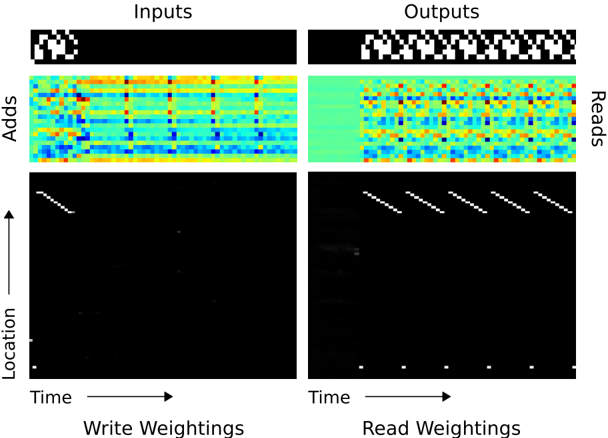

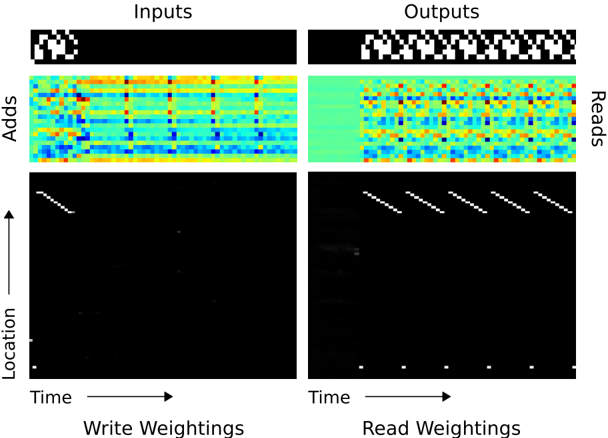

## Diagram: Neural Network Memory Analysis

### Overview

The image presents a visualization of the internal operations of a neural network, specifically focusing on memory access patterns during write and read operations. It displays input and output patterns, memory addition patterns, and write/read weighting distributions over time and location.

### Components/Axes

* **Titles:** "Inputs" (top-left), "Outputs" (top-right)

* **Y-axis (left side):** "Adds", "Location" (with an upward-pointing arrow indicating direction)

* **X-axis (bottom):** "Time" (with a rightward-pointing arrow indicating direction)

* **Bottom Labels:** "Write Weightings" (left), "Read Weightings" (right)

### Detailed Analysis

The image is divided into two main sections: "Inputs" (left) and "Outputs" (right). Each section contains three sub-sections:

1. **Input/Output Patterns (Top Row):**

   * **Inputs:** A small black square with a white pattern.

   * **Outputs:** A horizontal sequence of repeating black-and-white patterns.

2. **Add and Read Patterns (Middle Row):**

   * **Adds (Inputs):** A heatmap of the values added to memory over time. Colors range from blue to red, indicating the magnitude of each added value, and form vertical bands of varying intensity.

   * **Reads (Outputs):** A heatmap of the values read from memory over time. Colors range from blue to red, indicating the magnitude of each read value, and form vertical bands of varying intensity.

3. **Write/Read Weightings (Bottom Row):**

   * **Write Weightings:** A black background with sparse white pixels. Diagonal lines of white pixels run from top-left to bottom-right, alongside a few scattered white pixels.

   * **Read Weightings:** A black background with sparse white pixels. Diagonal lines of white pixels run from top-left to bottom-right, alongside a few scattered white pixels and a row of white dots at the bottom.

### Key Observations

* The input pattern is static, while the output pattern is dynamic and repetitive.

* The "Adds" and "Reads" heatmaps show distinct temporal structures, with "Reads" exhibiting more regular patterns than "Adds".

* The write and read weightings show diagonal patterns, suggesting sequential memory access. The read weightings show more activity than the write weightings.

### Interpretation

The diagram visualizes how a neural network interacts with its memory during processing. The "Inputs" and "Outputs" sections show the data being fed into and produced by the network. The "Adds" and "Reads" sections reveal how the network modifies and retrieves information from its memory. The "Write Weightings" and "Read Weightings" sections illustrate the specific memory locations being accessed during these operations.

The diagonal patterns in the weightings suggest that the network is sequentially accessing memory locations. The differences between the "Adds" and "Reads" sections indicate that the network's memory access patterns are different for writing and reading. The repetitive nature of the output pattern is reflected in the regular patterns observed in the "Reads" section.
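As a minimal sketch of why sequential access appears as a diagonal, the snippet below builds a Location x Time grid in which the focus advances by one location per time step. The function names, the one-hot weightings, and the wrap-around behavior are illustrative assumptions, not details taken from the figure.

```python
def sequential_weightings(num_locations, num_steps, start=0):
    """Return a Location x Time grid of one-hot weightings.

    Assumption: at every time step the focus moves to the next location
    (wrapping around), the simplest form of sequential access.
    """
    grid = [[0.0] * num_steps for _ in range(num_locations)]
    for t in range(num_steps):
        grid[(start + t) % num_locations][t] = 1.0
    return grid


def render(grid):
    """Draw the grid as text: one row per location, '#' where weight > 0.5."""
    return "\n".join(
        "".join("#" if w > 0.5 else "." for w in row) for row in grid
    )


weights = sequential_weightings(num_locations=6, num_steps=6)
print(render(weights))
```

With location 0 printed on the first row, the marked cells run from top-left to bottom-right, matching the diagonal lines described for the weightings.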


EXPERT: gemma-3-27b-it-free VERSION 2

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Heatmaps: Neural Network Attention Visualization

### Overview

The image presents four heatmaps visualizing attention weights within a neural network. Two heatmaps represent the "Inputs" side, and two represent the "Outputs" side. Each side has two rows: the top row displays the weights labeled "Adds" (inputs side) or "Reads" (outputs side), and the bottom row displays weights by "Location" over "Time". The color intensity represents the magnitude of the attention weight, with warmer colors (red/orange/yellow) indicating higher weights and cooler colors (blue/green) indicating lower weights.

### Components/Axes

* **Titles:** "Inputs" (top) and "Outputs" (right)

* **Row Labels:** "Adds" (top row) and "Location" (bottom row)

* **Column Label:** "Reads" (right side)

* **X-axis:** "Time" (horizontal axis for both sides)

* **Y-axis:** "Location" (vertical axis for the bottom row heatmaps)

* **Color Scale:** Ranges from dark blue (low weight) to red (high weight), passing through green, yellow, and orange.

### Detailed Analysis

**Inputs - Adds:**

The heatmap shows a complex pattern of attention weights. The horizontal axis represents time, and the vertical axis represents the location. The heatmap is approximately 20x20 units. The color intensity varies significantly across the heatmap. There are several areas of high attention (red/orange) scattered throughout, with a general trend of decreasing attention as time progresses. The pattern appears somewhat chaotic, with no clear dominant structure. Approximate values (based on color intensity):

* Maximum attention weight: ~90% (bright red)

* Minimum attention weight: ~10% (dark blue)

* Average attention weight: ~50% (green/yellow)

**Inputs - Location:**

This heatmap shows a strong diagonal pattern. The attention weights are highest along the diagonal, indicating that the network attends to locations that are close to each other in time. The diagonal fades as time progresses. The heatmap is approximately 20x20 units.

* Diagonal attention weight: ~80-90% (bright red)

* Off-diagonal attention weight: ~10-20% (dark blue)

**Outputs - Reads:**

Similar to the "Inputs - Adds" heatmap, this shows a complex pattern of attention weights. The heatmap is approximately 20x20 units. There are several areas of high attention (red/orange) scattered throughout, with a general trend of decreasing attention as time progresses. The pattern appears somewhat chaotic, with no clear dominant structure. Approximate values (based on color intensity):

* Maximum attention weight: ~90% (bright red)

* Minimum attention weight: ~10% (dark blue)

* Average attention weight: ~50% (green/yellow)

**Outputs - Read Weightings:**

This heatmap also exhibits a strong diagonal pattern, similar to "Inputs - Location". The attention weights are highest along the diagonal, indicating that the network attends to locations that are close to each other in time. The diagonal fades as time progresses. The heatmap is approximately 20x20 units.

* Diagonal attention weight: ~80-90% (bright red)

* Off-diagonal attention weight: ~10-20% (dark blue)

### Key Observations

* The "Adds" and "Reads" heatmaps show a more diffuse and complex attention pattern compared to the "Location" heatmaps.

* The "Location" heatmaps (both inputs and outputs) exhibit a clear diagonal pattern, suggesting a temporal locality of attention.

* The attention weights generally decrease over time in all heatmaps.

* The "Inputs" and "Outputs" heatmaps show similar patterns, suggesting that the network's attention mechanism is consistent across the input and output stages.
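One way to make the "clear diagonal pattern" observation quantitative is to measure how much of a grid's total weight lies on or near the diagonal. The sketch below is hypothetical: `diagonal_mass` and the toy 4x4 grid are assumptions for illustration, with values chosen only to echo the rough 80-90% versus 10-20% contrast described above, not measured from the image.

```python
def diagonal_mass(grid, offset=0, tolerance=0):
    """Fraction of total weight within `tolerance` rows of the line
    location = time + offset, for a Location x Time grid."""
    total = sum(sum(row) for row in grid)
    if total == 0:
        return 0.0
    near_diagonal = sum(
        w
        for loc, row in enumerate(grid)
        for t, w in enumerate(row)
        if abs(loc - (t + offset)) <= tolerance
    )
    return near_diagonal / total


# Toy grid (assumed values): strong diagonal, weak background.
size = 4
diagonal_grid = [
    [0.85 if loc == t else 0.05 for t in range(size)] for loc in range(size)
]
uniform_grid = [[0.25] * size for _ in range(size)]

print(diagonal_mass(diagonal_grid))  # most of the weight sits on the diagonal
print(diagonal_mass(uniform_grid))   # only the share expected by chance
```

A value close to 1 indicates a sharp diagonal, while a value near `1 / size` is what a structureless grid would give.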

### Interpretation

The image demonstrates how a neural network attends to different parts of the input sequence over time. The "Adds" heatmaps suggest that the network is attending to a wide range of features and locations, while the "Location" heatmaps suggest that the network is prioritizing temporal locality. The diagonal pattern in the "Location" heatmaps indicates that the network is more likely to attend to locations that are close to each other in time. The decreasing attention weights over time may indicate that the network is focusing on the most recent information.

The visualization provides insights into the network's internal workings and can be used to understand how it makes its predictions. The differences in attention patterns between "Adds" and "Location" suggest that these two features are processed differently by the network. The consistency between "Inputs" and "Outputs" suggests that the attention mechanism is a stable and reliable component of the network.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED


## Composite Diagram: Input/Output Transformation Process

### Overview

The image is a technical composite diagram divided into two primary columns labeled "Inputs" and "Outputs," each containing three vertically stacked visualizations. It appears to illustrate a data transformation or computational process, likely within a neural network or signal processing context, showing how an initial input pattern is expanded or processed into a more complex output pattern through intermediate stages represented by heatmaps and scatter plots.

### Components/Axes

**Global Structure:**

- Two main columns: Left column titled **"Inputs"**, Right column titled **"Outputs"**.

- Each column contains three rows of visualizations.

**Row 1 (Top): Binary Pattern Displays**

- **Inputs (Left):** A rectangular black field containing a small, localized cluster of white pixels in the upper-left quadrant. The pattern resembles a sparse, irregular shape.

- **Outputs (Right):** A rectangular black field containing a longer, horizontally extended pattern of white pixels. This pattern is more complex and distributed across the upper portion of the field.

**Row 2 (Middle): Heatmaps**

- **Left Heatmap (under Inputs):**

  - **Y-axis Label:** **"Adds"** (positioned vertically on the left side).

  - **X-axis:** Unlabeled, but implied to represent a spatial or feature dimension.

  - **Content:** A dense, colorful grid. Colors range from dark blue (low values) through cyan and green to yellow (high values). The pattern shows horizontal bands of higher intensity (yellow/green) interspersed with lower intensity (blue) regions. There are distinct vertical lines of higher intensity.

- **Right Heatmap (under Outputs):**

  - **Y-axis Label:** **"Reads"** (positioned vertically on the right side).

  - **X-axis:** Unlabeled, but implied to correspond to the same dimension as the left heatmap.

  - **Content:** A similar dense, colorful grid with the same blue-to-yellow color scale. The pattern is more uniform and "noisier" than the left heatmap, with a prominent solid green vertical band on the far left edge. The distribution of high-intensity (yellow) points appears more scattered.

**Row 3 (Bottom): Scatter Plots**

- **Left Scatter Plot (under Inputs):**

  - **Title:** **"Write Weightings"** (centered below the plot).

  - **Y-axis Label:** **"Location"** (positioned vertically on the left).

  - **X-axis Label:** **"Time"** (positioned horizontally below the axis).

  - **Content:** A black field with white dots. The dots form a clear, tight diagonal line sloping upward from left to right in the upper-left quadrant. A few isolated white dots are visible near the bottom-left corner.

- **Right Scatter Plot (under Outputs):**

  - **Title:** **"Read Weightings"** (centered below the plot).

  - **Y-axis Label:** **"Location"** (positioned vertically on the left, shared with the left plot).

  - **X-axis Label:** **"Time"** (positioned horizontally below the axis).

  - **Content:** A black field with white dots. The dots form multiple, parallel diagonal lines sloping upward from left to right, spanning a wider range of the plot. There are approximately 5-6 distinct diagonal lines. A few isolated dots are also present near the bottom axis.

### Detailed Analysis

**Binary Patterns (Row 1):**

- **Input Pattern:** A compact, localized activation. Approximate dimensions: ~15% of the width, ~30% of the height of its container, located in the top-left.

- **Output Pattern:** An expanded, sequential activation. It spans approximately 70% of the width of its container, suggesting the input has been replicated or convolved across a temporal or spatial dimension.

**Heatmaps (Row 2):**

- **"Adds" Heatmap (Input Side):** Shows structured, banded activity. The vertical lines of high intensity suggest specific features or channels are being activated repeatedly. The horizontal bands indicate consistent activity levels across certain rows.

- **"Reads" Heatmap (Output Side):** Shows more diffuse, granular activity. The solid green band on the left edge is a notable anomaly, indicating a region of constant, medium-level value. The scattered yellow points suggest a more distributed pattern of high-value activations compared to the input side.

**Scatter Plots (Row 3):**

- **"Write Weightings" (Input Side):** The single, sharp diagonal line indicates a strong, linear correlation between "Time" and "Location" for the write operation. This suggests a sequential, ordered writing process where each subsequent time step writes to the next location. The slope is approximately 1 (45 degrees).

- **"Read Weightings" (Output Side):** The multiple parallel diagonal lines indicate that the read operation accesses multiple locations in a similar sequential, time-ordered manner, but starting from different initial locations or for different parallel processes. The lines are evenly spaced, suggesting a regular, tiled access pattern.
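The slope and line-count readings above could be checked numerically if the weightings were available as a Location x Time grid. The sketch below is an assumption-laden illustration: `peak_locations`, `fit_slope`, and `count_runs` are hypothetical helpers, and the toy grids stand in for data that the image itself does not provide.

```python
def peak_locations(grid):
    """For each time step (column), the location (row) with the largest weight."""
    num_steps = len(grid[0])
    return [
        max(range(len(grid)), key=lambda loc: grid[loc][t])
        for t in range(num_steps)
    ]


def fit_slope(ys):
    """Ordinary least-squares slope of ys against time steps 0, 1, 2, ...

    Requires at least two points.
    """
    n = len(ys)
    mean_x = (n - 1) / 2
    mean_y = sum(ys) / n
    num = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(ys))
    den = sum((x - mean_x) ** 2 for x in range(n))
    return num / den


def count_runs(peaks):
    """Count maximal runs in which the peak location never decreases."""
    return 1 + sum(1 for a, b in zip(peaks, peaks[1:]) if b < a)


def one_hot_grid(peaks, num_locations):
    """Build a Location x Time grid with a single 1.0 per time step."""
    return [
        [1.0 if loc == p else 0.0 for p in peaks] for loc in range(num_locations)
    ]


# Toy data (assumed): one write sweep, then the same sweep read three times.
write_grid = one_hot_grid([0, 1, 2, 3, 4], num_locations=5)
read_grid = one_hot_grid([0, 1, 2, 0, 1, 2, 0, 1, 2], num_locations=5)

write_slope = fit_slope(peak_locations(write_grid))
read_runs = count_runs(peak_locations(read_grid))
print(write_slope, read_runs)
```

A slope near 1 corresponds to one new location per time step, and the run count corresponds to the number of parallel diagonals in the read plot.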

### Key Observations

1. **Transformation Complexity:** The system transforms a simple, localized input into a complex, distributed output. This is evident in the expansion from a single binary cluster to a long pattern, and from a single diagonal line to multiple parallel lines.

2. **Structured vs. Diffuse Activity:** The input-side processes ("Adds", "Write Weightings") show highly structured, clean patterns (bands, single line). The output-side processes ("Reads", "Read Weightings") show more complex, distributed, or noisy patterns (scattered points, multiple lines).

3. **Temporal-Spatial Mapping:** The scatter plots explicitly map "Time" to "Location," confirming the process involves a spatiotemporal transformation. The output's multiple read lines imply parallel or multi-channel processing.

4. **Anomaly:** The solid green vertical band on the far left of the "Reads" heatmap is a distinct feature not present in the "Adds" heatmap, possibly indicating a bias, initialization, or boundary condition in the read phase.

### Interpretation

This diagram likely visualizes the internal mechanics of a **memory-augmented neural network** or a **convolutional process with temporal unfolding**.

- **What it demonstrates:** It shows how a network writes a compressed input representation ("Write Weightings" to memory locations) and then reads from that memory in a more expansive, parallel fashion ("Read Weightings") to generate a complex output. The "Adds" and "Reads" heatmaps may represent the accumulation of values (e.g., in a memory matrix) and the subsequent retrieval patterns.

- **Relationship between elements:** The top row shows the *what* (data), the middle row shows the *how* (aggregated operations), and the bottom row shows the *when and where* (the precise spatiotemporal access patterns). The input's single write diagonal directly enables the output's multiple read diagonals, illustrating a one-to-many readout scheme.

- **Underlying principle:** The core concept is **structured sparsity and parallel access**. The system writes information in a sparse, ordered manner and reads it back in a parallel, tiled manner to construct a larger output. This is a common pattern in sequence modeling, attention mechanisms, or convolutional algorithms where a local kernel is applied across an entire input. The "noisier" output heatmap suggests the read process integrates or transforms the stored information, introducing complexity.
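The write-once, read-many behavior described in this interpretation can be sketched with a small memory matrix. This is a simplified illustration under stated assumptions: writes are purely additive (no erase step), weightings are one-hot, and the names `write`, `read`, and `one_hot` are hypothetical rather than taken from the figure or its source.

```python
def one_hot(index, size):
    """Weighting that focuses entirely on one memory location."""
    return [1.0 if i == index else 0.0 for i in range(size)]


def write(memory, weighting, add_vector):
    """Additive write: row i of memory gains weighting[i] * add_vector."""
    return [
        [m + w * a for m, a in zip(row, add_vector)]
        for row, w in zip(memory, weighting)
    ]


def read(memory, weighting):
    """Weighted read: the sum over locations of weighting[i] * memory[i]."""
    width = len(memory[0])
    return [
        sum(w * row[j] for row, w in zip(memory, weighting))
        for j in range(width)
    ]


# Assumed toy input: three 2-bit vectors written to consecutive locations.
inputs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
num_locations = 4
memory = [[0.0, 0.0] for _ in range(num_locations)]
for t, vec in enumerate(inputs):
    memory = write(memory, one_hot(t, num_locations), vec)

# Read the same locations in order, twice: one write sweep, two read sweeps.
repeats = 2
outputs = [
    read(memory, one_hot(t, num_locations))
    for _ in range(repeats)
    for t in range(len(inputs))
]
print(outputs)
```

Plotting the write weightings over time would give one diagonal, and plotting the read weightings would give `repeats` parallel diagonals, which is the one-to-many pattern the interpretation describes.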


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Heatmap Diagram: Input/Output Weighting Patterns

### Overview

The image presents a comparative analysis of input/output processing through four quadrants: Inputs, Outputs, Write Weightings, and Read Weightings. Each quadrant contains a heatmap (top) and a binary pattern visualization (bottom), with Time progressing horizontally and Location vertically.

### Components/Axes

- **Axes**:

  - X-axis: Time (left to right progression)

  - Y-axis: Location (bottom to top progression)

- **Quadrants**:

  1. **Inputs**: Leftmost column

  2. **Outputs**: Rightmost column

  3. **Write Weightings**: Bottom-left quadrant

  4. **Read Weightings**: Bottom-right quadrant

- **Color Scale**: Implied gradient from blue (low intensity) to red (high intensity) in heatmaps

- **Binary Patterns**: White lines on black background in lower sections

### Detailed Analysis

1. **Inputs Heatmap**:

   - Horizontal bands of activity with moderate intensity (yellow/orange)

   - Vertical blue streaks indicating sporadic low-intensity events

   - Binary pattern shows single diagonal line from bottom-left to top-right

2. **Outputs Heatmap**:

   - Scattered red spots indicating high-intensity events

   - Yellow/orange bands with less regularity than Inputs

   - Binary pattern shows multiple diagonal lines (4-5) with varying spacing

3. **Write Weightings Heatmap**:

   - Diagonal band of high-intensity activity (red) from bottom-left to top-right

   - Faint horizontal blue bands suggesting residual activity

   - Binary pattern matches heatmap with single diagonal line

4. **Read Weightings Heatmap**:

   - Vertical red lines indicating columnar activity

   - Yellow/orange horizontal bands with irregular spacing

   - Binary pattern shows multiple vertical lines (5-6) with consistent spacing

### Key Observations

- **Temporal Patterns**:

  - Inputs show regular horizontal activity with intermittent vertical events

  - Outputs exhibit sporadic high-intensity events with less temporal regularity

- **Spatial Patterns**:

  - Write Weightings demonstrate directional (diagonal) data flow

  - Read Weightings show columnar access patterns

- **Binary Pattern Correlation**:

  - Diagonal lines in Inputs/Write Weightings suggest sequential processing

  - Multiple vertical lines in Outputs/Read Weightings indicate parallel access
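The distinction drawn above between diagonal lines (sequential processing) and vertical lines (parallel access) can be illustrated by counting how many locations are active at each time step. The helper names, the 0.5 threshold, and the toy grids below are assumptions for illustration only.

```python
def active_per_step(grid, threshold=0.5):
    """Number of locations whose weight exceeds `threshold` at each time step."""
    num_steps = len(grid[0])
    return [
        sum(1 for row in grid if row[t] > threshold) for t in range(num_steps)
    ]


def describe(grid, threshold=0.5):
    """Label a Location x Time grid by its busiest time step."""
    counts = active_per_step(grid, threshold)
    if max(counts) <= 1:
        return "one location per step"
    return "several locations in a single step"


size = 4
# Diagonal line: location t is active at time t.
diagonal = [[1.0 if loc == t else 0.0 for t in range(size)] for loc in range(size)]
# Vertical line: every location is active at time step 2.
vertical = [[1.0 if t == 2 else 0.0 for t in range(size)] for _ in range(size)]

print(active_per_step(diagonal), describe(diagonal))
print(active_per_step(vertical), describe(vertical))
```

A diagonal touches one location per time step, whereas a vertical line means many locations are accessed in the same step.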

### Interpretation

The system appears to process inputs through a directional weighting mechanism (Write Weightings) that transforms regular input patterns into more complex output patterns. The Read Weightings' vertical lines suggest a retrieval mechanism accessing specific memory locations. The binary patterns likely represent activation sequences, with Outputs showing more complex activation than Inputs. The absence of explicit numerical values prevents quantitative analysis, but the visual patterns strongly suggest a neural network-like processing pipeline with distinct write/read pathways.
