## Bar Chart: Performance Comparison of Different Models

### Overview

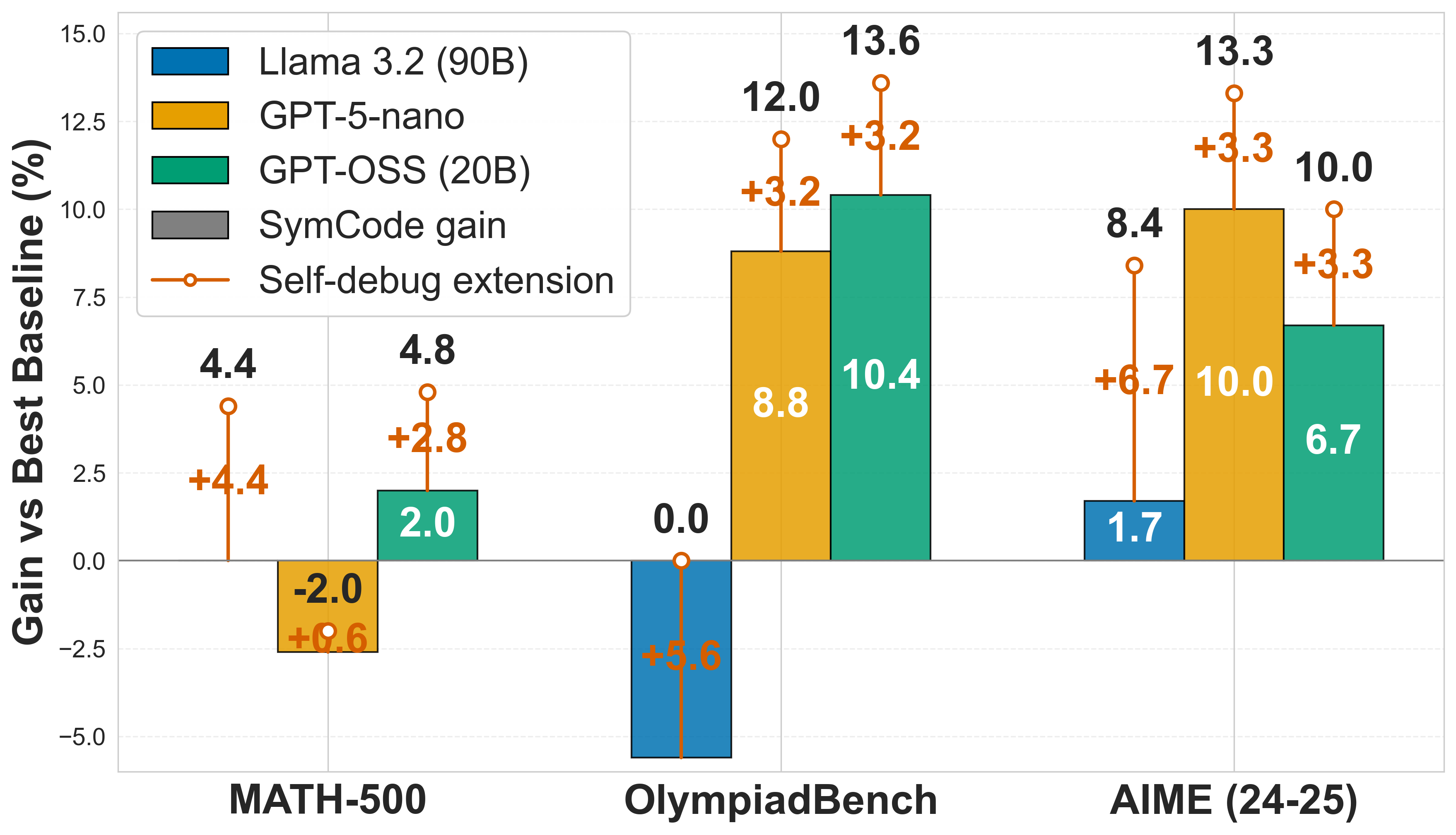

The bar chart reports performance gains for three models, Llama 3.2 (90B), GPT-5-nano, and GPT-OS (20B), on three benchmarks: MATH-500, OlympiadBench, and AIME (24-25). The legend has two further entries, SymCode gain and Self-debug extension. Every value is a gain in percent relative to the best baseline, not an absolute score.

### Components/Axes

- **X-axis**: Benchmarks (MATH-500, OlympiadBench, AIME (24-25))

- **Y-axis**: Gain vs Best Baseline (%)

- **Legend**:

  - Llama 3.2 (90B)

  - GPT-5-nano

  - GPT-OS (20B)

  - SymCode gain

  - Self-debug extension

### Detailed Analysis

- **MATH-500**:

  - Llama 3.2 (90B): +4.4

  - GPT-5-nano: +2.8

  - GPT-OS (20B): +2.0

  - SymCode gain: -2.0

  - Self-debug extension: +0.6

- **OlympiadBench**:

  - Llama 3.2 (90B): +5.6

  - GPT-5-nano: +3.2

  - GPT-OS (20B): +3.3

  - SymCode gain: 0.0

  - Self-debug extension: +6.7

- **AIME (24-25)**:

  - Llama 3.2 (90B): +1.7

  - GPT-5-nano: +10.0

  - GPT-OS (20B): +10.0

  - SymCode gain: +3.3

  - Self-debug extension: +3.3
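The values listed above can be cross-checked with a short script. This is a minimal sketch, not part of the original figure: the names `GAINS`, `MODELS`, `leaders`, and `mean_gain` are hypothetical, the numbers are transcribed from the list as written, and treating the three model entries as directly comparable series is an assumption.

```python
# Gains vs. best baseline (%), transcribed from the list above.
GAINS = {
    "MATH-500": {
        "Llama 3.2 (90B)": 4.4,
        "GPT-5-nano": 2.8,
        "GPT-OS (20B)": 2.0,
        "SymCode gain": -2.0,
        "Self-debug extension": 0.6,
    },
    "OlympiadBench": {
        "Llama 3.2 (90B)": 5.6,
        "GPT-5-nano": 3.2,
        "GPT-OS (20B)": 3.3,
        "SymCode gain": 0.0,
        "Self-debug extension": 6.7,
    },
    "AIME (24-25)": {
        "Llama 3.2 (90B)": 1.7,
        "GPT-5-nano": 10.0,
        "GPT-OS (20B)": 10.0,
        "SymCode gain": 3.3,
        "Self-debug extension": 3.3,
    },
}

# Assumption: only these three legend entries are models.
MODELS = ("Llama 3.2 (90B)", "GPT-5-nano", "GPT-OS (20B)")


def leaders(gains, names):
    """Per benchmark, return the largest gain among `names` and who reaches it."""
    result = {}
    for bench, series in gains.items():
        top = max(series[n] for n in names)
        result[bench] = (top, [n for n in names if series[n] == top])
    return result


def mean_gain(gains, names):
    """Average each named series over all benchmarks, rounded to 2 decimals."""
    return {
        n: round(sum(series[n] for series in gains.values()) / len(gains), 2)
        for n in names
    }


if __name__ == "__main__":
    for bench, (top, who) in leaders(GAINS, MODELS).items():
        print(f"{bench}: +{top} ({', '.join(who)})")
    print(mean_gain(GAINS, MODELS))
```

Restricting `leaders` to `MODELS` keeps the comparison among the three models; passing all five legend names instead would also rank the SymCode gain and Self-debug extension entries.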

### Key Observations

- Listed gains range from -2.0 (SymCode gain on MATH-500) to +10.0 (GPT-5-nano and GPT-OS (20B) on AIME (24-25)).

- Among the three models, Llama 3.2 (90B) has the largest gains on MATH-500 (+4.4) and OlympiadBench (+5.6) but the smallest on AIME (24-25) (+1.7).

- GPT-5-nano and GPT-OS (20B) track each other closely: GPT-5-nano is ahead on MATH-500 (+2.8 vs +2.0), GPT-OS (20B) is marginally ahead on OlympiadBench (+3.3 vs +3.2), and both reach +10.0 on AIME (24-25).

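- The SymCode gain and Self-debug extension entries are mixed: SymCode gain runs from -2.0 on MATH-500 through 0.0 on OlympiadBench to +3.3 on AIME (24-25), while Self-debug extension is always positive, from +0.6 on MATH-500 to +6.7 on OlympiadBench.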

- AIME (24-25) shows the largest gains for any model, with GPT-5-nano and GPT-OS (20B) both at +10.0. The largest model gain on OlympiadBench is +5.6 (Llama 3.2 (90B)).

### Interpretation

The listed values do not single out one model as the most effective across all benchmarks. Llama 3.2 (90B) has the largest gains among the three models on MATH-500 (+4.4) and OlympiadBench (+5.6), while GPT-5-nano and GPT-OS (20B) gain the most on AIME (24-25) (+10.0 each), where Llama 3.2 (90B) gains only +1.7. The SymCode gain and Self-debug extension entries are mixed: SymCode gain is negative on MATH-500 (-2.0) and zero on OlympiadBench, and Self-debug extension ranges from +0.6 to +6.7. Because every value is a gain relative to the best baseline, the chart shows how much each configuration improves, not which one scores highest in absolute terms. The size of the gain depends on both the benchmark and the model, and the chart alone does not explain why, so further analysis is needed to understand what drives these differences.