## Bar Chart & Line Graphs: Results on Math-500 & Average Attention on Head 14

### Overview

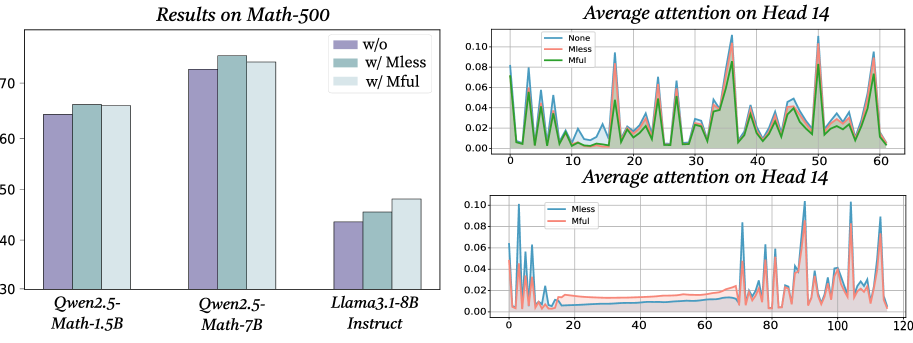

The image combines a bar chart comparing accuracy on the Math-500 dataset across three models and three configurations with two line graphs of average attention on Head 14. The bar chart shows accuracy scores, while the line graphs plot average attention weight against sequence position for the "None", "Mless", and "Mful" settings.

### Components/Axes

**Bar Chart:**

* **Title:** "Results on Math-500"

* **X-axis:** Model/Configuration: "Qwen2.5-Math-1.5B", "Qwen2.5-Math-7B", "Llama3-1-8B Instruct"

* **Y-axis:** Accuracy (approximately 30 to 75)

* **Legend:**

    * "w/o" (Light Blue) - Represents a configuration without a specific method.

    * "w/ Mless" (Medium Blue) - Represents a configuration with "Mless".

    * "w/ Mful" (Dark Blue) - Represents a configuration with "Mful".

**Line Graphs:**

* **Title:** "Average attention on Head 14" (appears twice)

* **X-axis:** Sequence Length (0 to 60 in the first graph, 0 to 120 in the second)

* **Y-axis:** Average Attention (approximately 0 to 0.10)

* **Legend (First Graph):**

    * "None" (Black)

    * "Mless" (Green)

    * "Mful" (Teal)

* **Legend (Second Graph):**

    * "Mless" (Pink)

    * "Mful" (Red)

### Detailed Analysis

**Bar Chart:**

* **Qwen2.5-Math-1.5B:**

    * w/o: Approximately 65

    * w/ Mless: Approximately 68

    * w/ Mful: Approximately 72

* **Qwen2.5-Math-7B:**

    * w/o: Approximately 70

    * w/ Mless: Approximately 73

    * w/ Mful: Approximately 75

* **Llama3-1-8B Instruct:**

    * w/o: Approximately 42

    * w/ Mless: Approximately 44

    * w/ Mful: Approximately 46
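The gain of each method over the "w/o" baseline can be worked out directly from these readings. The following is a minimal sketch: the numbers are the approximate bar heights listed above, not exact reported results, and the names `readings` and `gains_over_baseline` are hypothetical, introduced only for illustration.

```python
# Approximate accuracies read off the bar chart (visual estimates, not exact values).
readings = {
    "Qwen2.5-Math-1.5B": {"w/o": 65, "w/ Mless": 68, "w/ Mful": 72},
    "Qwen2.5-Math-7B": {"w/o": 70, "w/ Mless": 73, "w/ Mful": 75},
    "Llama3-1-8B Instruct": {"w/o": 42, "w/ Mless": 44, "w/ Mful": 46},
}


def gains_over_baseline(scores, baseline="w/o"):
    """Return each configuration's accuracy minus the baseline accuracy."""
    base = scores[baseline]
    return {
        name: value - base
        for name, value in scores.items()
        if name != baseline
    }


# Per-model gains in accuracy points relative to the "w/o" configuration.
gains = {model: gains_over_baseline(scores) for model, scores in readings.items()}

for model, delta in gains.items():
    summary = ", ".join(f"{name} {points:+d}" for name, points in delta.items())
    print(f"{model}: {summary}")
```

Because the inputs are estimates, the resulting differences should be treated as rough magnitudes rather than precise margins.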

**Line Graph 1 (Sequence Length 0-60):**

* **None (Black):** The line fluctuates significantly between approximately 0.02 and 0.08, with several peaks and valleys.

* **Mless (Green):** The line generally stays lower than "None", fluctuating between approximately 0.01 and 0.05.

* **Mful (Teal):** The line fluctuates between approximately 0.02 and 0.07, showing a similar pattern to "None" but with slightly lower overall values.

**Line Graph 2 (Sequence Length 0-120):**

* **Mless (Pink):** The line fluctuates between approximately 0.01 and 0.06, with a generally increasing trend towards the end of the sequence.

* **Mful (Red):** The line fluctuates between approximately 0.01 and 0.08, showing more pronounced peaks and valleys than "Mless".

### Key Observations

* In the bar chart, adding "Mless" or "Mful" improves accuracy for all three models: by roughly 2 to 3 points with "Mless" and roughly 4 to 7 points with "Mful". "Mful" yields the highest accuracy in every case.

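* In both line graphs, average attention varies considerably along the sequence, ranging from roughly 0.01 to 0.08 depending on the curve.

* In the first graph, the "None" line sits above both other curves on average: "Mless" is clearly lower, while "Mful" follows a similar pattern at slightly lower values.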

* The second line graph shows that "Mful" has higher attention weights than "Mless" for most of the sequence length.

### Interpretation

The data suggests that the "Mless" and "Mful" methods improve accuracy on the Math-500 dataset for all three models. Based on the approximate bar heights, "Mful" outperforms the other configurations for every model, although the exact margins cannot be read precisely from the chart.

The line graphs show how Head 14's attention is distributed along the sequence. The fluctuations in attention weight indicate that the head attends more strongly to some positions than to others. The differences between the "None", "Mless", and "Mful" lines suggest that these methods influence the attention mechanism, potentially by shifting attention toward more relevant parts of the sequence.

That "None" sits above the other curves in the first graph could indicate that the "Mless" and "Mful" methods suppress this head's attention in certain regions, potentially reducing noise or irrelevant information. In the second graph, "Mless" trends upward toward the end of the sequence, which may mean its effect on this head grows at longer sequence lengths; "Mful" shows larger peaks and valleys rather than a clear trend.

The two line graphs cover different sequence lengths (0 to 60 and 0 to 120) and different sets of configurations (the second omits "None"), so their curves are not directly comparable. A deeper investigation into the interplay between sequence length, attention, and model performance is warranted.