EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

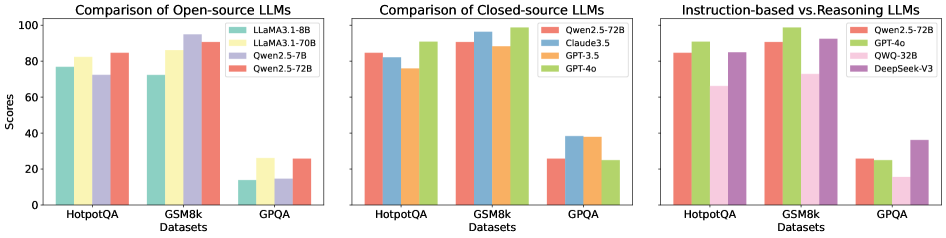

## Bar Charts: LLM Performance Comparison

### Overview

The image presents three bar charts comparing the performance of different Large Language Models (LLMs) on three datasets: HotpotQA, GSM8k, and GPQA. The charts are grouped by LLM type: Open-source, Closed-source, and Instruction-based vs. Reasoning. The y-axis represents scores, presumably accuracy or a similar metric, ranging from 0 to 100.

### Components/Axes

* **Titles:**

* Left Chart: "Comparison of Open-source LLMs"

* Middle Chart: "Comparison of Closed-source LLMs"

* Right Chart: "Instruction-based vs. Reasoning LLMs"

* **Y-axis:**

* Label: "Scores"

* Scale: 0 to 100, with tick marks at 20, 40, 60, 80, and 100.

* **X-axis:**

* Label: "Datasets"

* Categories: HotpotQA, GSM8k, GPQA

* **Legends:**

* **Left Chart (Open-source):** Located at the top-right of the chart.

* Light Green: "LLaMA3.1-8B"

* Yellow: "LLaMA3.1-70B"

* Lavender: "Qwen2.5-7B"

* Salmon: "Qwen2.5-72B"

* **Middle Chart (Closed-source):** Located at the top-right of the chart.

* Salmon: "Qwen2.5-72B"

* Light Blue: "Claude3.5"

* Orange: "GPT-3.5"

* Light Green: "GPT-4o"

* **Right Chart (Instruction-based vs. Reasoning):** Located at the top-right of the chart.

* Salmon: "Qwen2.5-72B"

* Light Green: "GPT-4o"

* Pink: "QWQ-32B"

* Lavender: "DeepSeek-V3"

### Detailed Analysis

**Left Chart: Comparison of Open-source LLMs**

* **HotpotQA Dataset:**

* LLaMA3.1-8B (Light Green): Approximately 77

* LLaMA3.1-70B (Yellow): Approximately 82

* Qwen2.5-7B (Lavender): Approximately 73

* Qwen2.5-72B (Salmon): Approximately 85

* **GSM8k Dataset:**

* LLaMA3.1-8B (Light Green): Approximately 73

* LLaMA3.1-70B (Yellow): Approximately 87

* Qwen2.5-7B (Lavender): Approximately 95

* Qwen2.5-72B (Salmon): Approximately 91

* **GPQA Dataset:**

* LLaMA3.1-8B (Light Green): Approximately 10

* LLaMA3.1-70B (Yellow): Approximately 25

* Qwen2.5-7B (Lavender): Approximately 10

* Qwen2.5-72B (Salmon): Approximately 25

**Middle Chart: Comparison of Closed-source LLMs**

* **HotpotQA Dataset:**

* Qwen2.5-72B (Salmon): Approximately 85

* Claude3.5 (Light Blue): Approximately 82

* GPT-3.5 (Orange): Approximately 91

* GPT-4o (Light Green): Approximately 92

* **GSM8k Dataset:**

* Qwen2.5-72B (Salmon): Approximately 98

* Claude3.5 (Light Blue): Approximately 92

* GPT-3.5 (Orange): Approximately 98

* GPT-4o (Light Green): Approximately 98

* **GPQA Dataset:**

* Qwen2.5-72B (Salmon): Approximately 25

* Claude3.5 (Light Blue): Approximately 37

* GPT-3.5 (Orange): Approximately 25

* GPT-4o (Light Green): Approximately 25

**Right Chart: Instruction-based vs. Reasoning LLMs**

* **HotpotQA Dataset:**

* Qwen2.5-72B (Salmon): Approximately 85

* GPT-4o (Light Green): Approximately 92

* QWQ-32B (Pink): Approximately 82

* DeepSeek-V3 (Lavender): Approximately 85

* **GSM8k Dataset:**

* Qwen2.5-72B (Salmon): Approximately 98

* GPT-4o (Light Green): Approximately 98

* QWQ-32B (Pink): Approximately 92

* DeepSeek-V3 (Lavender): Approximately 95

* **GPQA Dataset:**

* Qwen2.5-72B (Salmon): Approximately 25

* GPT-4o (Light Green): Approximately 25

* QWQ-32B (Pink): Approximately 15

* DeepSeek-V3 (Lavender): Approximately 32

### Key Observations

* **General Performance:** All models perform significantly better on HotpotQA and GSM8k datasets compared to GPQA.

* **Open-source Models:** Qwen2.5-72B leads or ties the LLaMA3.1 models on every dataset (it ties LLaMA3.1-70B at ~25 on GPQA), although Qwen2.5-7B posts the top open-source GSM8k score (~95).

* **Closed-source Models:** GPT-4o and GPT-3.5 lead on HotpotQA (~92 and ~91) and reach ~98 on GSM8k, while Claude3.5 posts the highest GPQA score (~37).

* **Instruction-based vs. Reasoning:** GPT-4o and Qwen2.5-72B show similar performance. QWQ-32B scores lower on all three datasets, while DeepSeek-V3 scores slightly lower than GPT-4o on HotpotQA and GSM8k but highest on GPQA (~32).

* **GPQA Challenge:** All models struggle with the GPQA dataset, indicating it is a more challenging benchmark.
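As a quick arithmetic check of the dataset-difficulty observations, the Python sketch below pools the approximate readings listed above and summarizes them per dataset. The `READINGS` layout and the `scores_by_dataset` helper are illustrative assumptions, not part of the source figure, and Qwen2.5-72B and GPT-4o are counted once for each chart in which they appear.

```python
from statistics import mean

# Approximate bar heights as read in the description above:
# chart -> dataset -> {model: score}. Values are visual estimates, not reported numbers.
READINGS = {
    "open-source": {
        "HotpotQA": {"LLaMA3.1-8B": 77, "LLaMA3.1-70B": 82, "Qwen2.5-7B": 73, "Qwen2.5-72B": 85},
        "GSM8k": {"LLaMA3.1-8B": 73, "LLaMA3.1-70B": 87, "Qwen2.5-7B": 95, "Qwen2.5-72B": 91},
        "GPQA": {"LLaMA3.1-8B": 10, "LLaMA3.1-70B": 25, "Qwen2.5-7B": 10, "Qwen2.5-72B": 25},
    },
    "closed-source": {
        "HotpotQA": {"Qwen2.5-72B": 85, "Claude3.5": 82, "GPT-3.5": 91, "GPT-4o": 92},
        "GSM8k": {"Qwen2.5-72B": 98, "Claude3.5": 92, "GPT-3.5": 98, "GPT-4o": 98},
        "GPQA": {"Qwen2.5-72B": 25, "Claude3.5": 37, "GPT-3.5": 25, "GPT-4o": 25},
    },
    "instruction-vs-reasoning": {
        "HotpotQA": {"Qwen2.5-72B": 85, "GPT-4o": 92, "QWQ-32B": 82, "DeepSeek-V3": 85},
        "GSM8k": {"Qwen2.5-72B": 98, "GPT-4o": 98, "QWQ-32B": 92, "DeepSeek-V3": 95},
        "GPQA": {"Qwen2.5-72B": 25, "GPT-4o": 25, "QWQ-32B": 15, "DeepSeek-V3": 32},
    },
}


def scores_by_dataset(readings):
    """Pool every bar from every chart into one list of scores per dataset."""
    pooled = {}
    for chart in readings.values():
        for dataset, bars in chart.items():
            pooled.setdefault(dataset, []).extend(bars.values())
    return pooled


pooled = scores_by_dataset(READINGS)
for dataset, scores in pooled.items():
    print(f"{dataset}: mean {mean(scores):.2f}, min {min(scores)}, max {max(scores)}")

# True if every GPQA bar is lower than every HotpotQA and GSM8k bar.
print(max(pooled["GPQA"]) < min(pooled["HotpotQA"] + pooled["GSM8k"]))
```

With these readings the sketch prints means of 84.25 (HotpotQA), about 92.92 (GSM8k), and 23.25 (GPQA), and `True` for the final check, since the highest GPQA bar (~37) sits below the lowest HotpotQA or GSM8k bar (~73).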

### Interpretation

The charts provide a comparative analysis of various LLMs across different datasets. The data suggests that:

* **Dataset Difficulty:** GPQA is a significantly more challenging dataset for all models compared to HotpotQA and GSM8k. This could be due to the nature of the questions or the complexity of reasoning required.

* **Model Strengths:** Among the closed-source models, GPT-4o and GPT-3.5 post the highest HotpotQA scores (~92 and ~91) and tie Qwen2.5-72B at ~98 on GSM8k, suggesting they are well-optimized for these types of tasks.

* **Open-source Advancements:** Qwen2.5-72B is the strongest open-source model on HotpotQA (~85) and is competitive with the closed-source models, tying GPT-4o and GPT-3.5 at ~98 on GSM8k, which suggests progress in open-source LLM development.

* **Reasoning vs. Instruction:** In the Instruction-based vs. Reasoning chart, QWQ-32B trails on every dataset while DeepSeek-V3 leads on GPQA, suggesting that these two models have different strengths or weaknesses in instruction following or reasoning than GPT-4o and Qwen2.5-72B.

The low scores on GPQA across all model categories highlight the need for further research and development in areas such as complex reasoning and knowledge integration.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

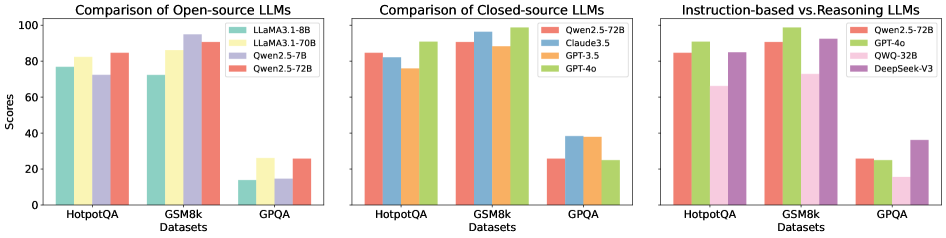

## Bar Chart: LLM Performance Comparison

### Overview

The image presents three bar charts comparing the performance of various Large Language Models (LLMs) across three datasets: HotpotQA, GSM8k, and GPQA. The charts are arranged horizontally, with the first comparing open-source LLMs, the second comparing closed-source LLMs, and the third comparing instruction-based and reasoning LLMs. The y-axis represents "Scores," ranging from 0 to 100. The x-axis represents the datasets.

### Components/Axes

* **Y-axis Title:** "Scores" (Scale: 0 to 100, increments of 20)

* **X-axis Title:** "Datasets" (Categories: HotpotQA, GSM8k, GPQA)

* **Chart 1 (Open-source LLMs):**

* **Legend:**

* LLaMA3-8B (Light Blue)

* LLaMA3-70B (Yellow)

* Qwen2-7B (Orange)

* Qwen2.5-72B (Red)

* **Chart 2 (Closed-source LLMs):**

* **Legend:**

* Qwen2.5-72B (Orange)

* Claude3.5 (Light Green)

* GPT-3.5 (Yellow)

* GPT-4o (Brown)

* **Chart 3 (Instruction-based vs. Reasoning LLMs):**

* **Legend:**

* Qwen2.5-72B (Orange)

* GPT-4o (Brown)

* QWQ-32B (Purple)

* DeepSeek-V3 (Green)

### Detailed Analysis

**Chart 1: Comparison of Open-source LLMs**

* **HotpotQA:**

* LLaMA3-8B: Approximately 72

* LLaMA3-70B: Approximately 84

* Qwen2-7B: Approximately 78

* Qwen2.5-72B: Approximately 82

* **GSM8k:**

* LLaMA3-8B: Approximately 76

* LLaMA3-70B: Approximately 96

* Qwen2-7B: Approximately 72

* Qwen2.5-72B: Approximately 88

* **GPQA:**

* LLaMA3-8B: Approximately 12

* LLaMA3-70B: Approximately 24

* Qwen2-7B: Approximately 16

* Qwen2.5-72B: Approximately 28

**Chart 2: Comparison of Closed-source LLMs**

* **HotpotQA:**

* Qwen2.5-72B: Approximately 86

* Claude3.5: Approximately 82

* GPT-3.5: Approximately 80

* GPT-4o: Approximately 88

* **GSM8k:**

* Qwen2.5-72B: Approximately 92

* Claude3.5: Approximately 88

* GPT-3.5: Approximately 84

* GPT-4o: Approximately 94

* **GPQA:**

* Qwen2.5-72B: Approximately 26

* Claude3.5: Approximately 22

* GPT-3.5: Approximately 24

* GPT-4o: Approximately 30

**Chart 3: Instruction-based vs. Reasoning LLMs**

* **HotpotQA:**

* Qwen2.5-72B: Approximately 86

* GPT-4o: Approximately 88

* QWQ-32B: Approximately 82

* DeepSeek-V3: Approximately 84

* **GSM8k:**

* Qwen2.5-72B: Approximately 92

* GPT-4o: Approximately 94

* QWQ-32B: Approximately 88

* DeepSeek-V3: Approximately 90

* **GPQA:**

* Qwen2.5-72B: Approximately 26

* GPT-4o: Approximately 28

* QWQ-32B: Approximately 22

* DeepSeek-V3: Approximately 24

### Key Observations

* **LLaMA3-70B consistently outperforms LLaMA3-8B** across all datasets.

* **GPT-4o generally achieves the highest scores** among the closed-source and instruction-based/reasoning LLMs, particularly on GSM8k.

* **GPQA consistently yields the lowest scores** across all models, indicating it is the most challenging dataset.

* **Qwen2.5-72B performs strongly** and is competitive with GPT-4o in many cases.

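* Performance differences between models are most pronounced on GSM8k in the open-source chart (~72 to ~96) and the closed-source chart (~84 to ~94); in the instruction-based/reasoning chart the gap between the best and worst model is about 6 points on every dataset.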
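To check where model differences are most pronounced, the following Python sketch computes the gap between the best and worst bar for each dataset from the approximate readings above. The `READINGS` structure and the `spreads` helper are hypothetical names chosen for illustration, and the bars are listed in each chart's legend order.

```python
# Approximate scores read in the description above: chart -> dataset -> bars
# listed in legend order. Values are visual estimates, not reported numbers.
READINGS = {
    "Open-source": {
        "HotpotQA": [72, 84, 78, 82],
        "GSM8k": [76, 96, 72, 88],
        "GPQA": [12, 24, 16, 28],
    },
    "Closed-source": {
        "HotpotQA": [86, 82, 80, 88],
        "GSM8k": [92, 88, 84, 94],
        "GPQA": [26, 22, 24, 30],
    },
    "Instruction vs. Reasoning": {
        "HotpotQA": [86, 88, 82, 84],
        "GSM8k": [92, 94, 88, 90],
        "GPQA": [26, 28, 22, 24],
    },
}


def spreads(chart):
    """Gap between the best and worst bar for each dataset in one chart."""
    return {dataset: max(bars) - min(bars) for dataset, bars in chart.items()}


for name, chart in READINGS.items():
    print(name, spreads(chart))
```

With these readings GSM8k has the widest spread in the open-source (24 points) and closed-source (10 points) charts, while the third chart shows the same 6-point spread on every dataset.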

### Interpretation

The data suggests a hierarchy in LLM performance in which larger models generally outperform smaller ones; for example, LLaMA3-70B beats LLaMA3-8B on every dataset. The choice of dataset significantly affects performance, and GSM8k is the most discriminating benchmark in the open-source chart, where scores range from about 72 to 96. GPT-4o posts the highest scores in both the closed-source and the instruction-based vs. reasoning charts, but the open-source Qwen2.5-72B trails it by only 2 to 4 points on each dataset, so the closed-source edge in these readings is small. All four models in the instruction-based vs. reasoning chart (Qwen2.5-72B, GPT-4o, QWQ-32B, DeepSeek-V3) score at least 82 on HotpotQA and at least 88 on GSM8k. The consistently low GPQA scores (roughly 12 to 30) suggest that this dataset presents challenges that current LLM architectures or training methods do not yet address well. The data highlights the importance of evaluating models across a diverse range of datasets to gain a comprehensive understanding of their capabilities.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## Bar Charts: LLM Performance Comparison Across Datasets

### Overview

The image displays three side-by-side bar charts comparing the performance of various Large Language Models (LLMs) on three benchmark datasets: HotpotQA, GSM8k, and GPQA. The charts are categorized by model type: open-source, closed-source, and instruction-based vs. reasoning-based. The y-axis for all charts represents "Scores" on a scale from 0 to 100.

### Components/Axes

* **Common Elements:**

* **Y-axis:** Label: "Scores". Scale: 0 to 100, with major ticks at 0, 20, 40, 60, 80, 100.

* **X-axis:** Label: "Datasets". Categories: "HotpotQA", "GSM8k", "GPQA".

* **Chart Titles:** Located above each chart.

* **Legends:** Positioned in the top-right corner of each chart's plot area.

* **Chart 1 (Left): "Comparison of Open-source LLMs"**

* **Legend (Top-Right):**

* Teal bar: `LLaMA3.1-8B`

* Yellow bar: `LLaMA3.1-70B`

* Light Purple bar: `Qwen2.5-7B`

* Salmon bar: `Qwen2.5-72B`

* **Chart 2 (Middle): "Comparison of Closed-source LLMs"**

* **Legend (Top-Right):**

* Salmon bar: `Qwen2.5-72B`

* Blue bar: `Claude3.5`

* Orange bar: `GPT-3.5`

* Green bar: `GPT-4o`

* **Chart 3 (Right): "Instruction-based vs. Reasoning LLMs"**

* **Legend (Top-Right):**

* Salmon bar: `Qwen2.5-72B`

* Green bar: `GPT-4o`

* Pink bar: `QWQ-32B`

* Purple bar: `DeepSeek-V3`

### Detailed Analysis

**Chart 1: Comparison of Open-source LLMs**

* **HotpotQA:** `Qwen2.5-72B` (salmon) leads with a score of ~85. `LLaMA3.1-70B` (yellow) is next at ~82, followed by `LLaMA3.1-8B` (teal) at ~78, and `Qwen2.5-7B` (light purple) at ~72.

* **GSM8k:** Three of the four models score higher than on HotpotQA. `Qwen2.5-7B` (light purple) achieves the highest score of ~95. `LLaMA3.1-70B` (yellow) is close at ~92, `Qwen2.5-72B` (salmon) at ~90, and `LLaMA3.1-8B` (teal) at ~72, slightly below its HotpotQA score.

* **GPQA:** Performance drops drastically for all models. `LLaMA3.1-70B` (yellow) and `Qwen2.5-72B` (salmon) tie for the lead at ~25. `LLaMA3.1-8B` (teal) and `Qwen2.5-7B` (light purple) are lower at ~15.

**Chart 2: Comparison of Closed-source LLMs**

* **HotpotQA:** `GPT-4o` (green) leads at ~90. `Qwen2.5-72B` (salmon) is at ~85, `Claude3.5` (blue) at ~82, and `GPT-3.5` (orange) at ~78.

* **GSM8k:** `GPT-4o` (green) achieves the highest score across all charts at ~98. `Claude3.5` (blue) is at ~95, `Qwen2.5-72B` (salmon) at ~90, and `GPT-3.5` (orange) at ~85.

* **GPQA:** `Claude3.5` (blue) and `GPT-3.5` (orange) tie for the lead at ~38. `Qwen2.5-72B` (salmon) and `GPT-4o` (green) are lower at ~25.

**Chart 3: Instruction-based vs. Reasoning LLMs**

* **HotpotQA:** `GPT-4o` (green) leads at ~90. `Qwen2.5-72B` (salmon) and `DeepSeek-V3` (purple) are tied at ~85. `QWQ-32B` (pink) is lower at ~65.

* **GSM8k:** `GPT-4o` (green) again leads at ~98. `DeepSeek-V3` (purple) is very close at ~95, `Qwen2.5-72B` (salmon) at ~90, and `QWQ-32B` (pink) at ~72.

* **GPQA:** `DeepSeek-V3` (purple) leads this category at ~35. `Qwen2.5-72B` (salmon) and `GPT-4o` (green) are at ~25, while `QWQ-32B` (pink) is lowest at ~15.

### Key Observations

1. **Dataset Difficulty:** GPQA is consistently the most challenging dataset, with all models scoring below 40. GSM8k is the easiest, with several models scoring above 90.

2. **Model Scaling:** In the open-source chart, the 70B/72B parameter models (`LLaMA3.1-70B`, `Qwen2.5-72B`) generally outperform their smaller 7B/8B counterparts, especially on HotpotQA and GPQA.

3. **Top Performers:** `GPT-4o` (green) is the top performer on HotpotQA and GSM8k in the closed-source and instruction/reasoning charts. `Claude3.5` (blue) is second on GSM8k (~95) and shares the top GPQA score (~38) with `GPT-3.5` (orange).

4. **Specialization:** `QWQ-32B` (pink), labeled as a reasoning model, shows a notable performance drop on HotpotQA compared to GSM8k, suggesting potential specialization.

5. **Open vs. Closed:** The top open-source model (`Qwen2.5-72B`) is competitive with the top closed-source models but does not surpass `GPT-4o` on any dataset (they tie at ~25 on GPQA). It does edge out `Claude3.5` on HotpotQA (~85 vs. ~82).
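The open vs. closed comparison rests on pairwise comparisons, which the Python sketch below makes explicit using the approximate Chart 2 readings above. `CLOSED_CHART` and `head_to_head` are illustrative names, not part of the source, and equal readings are treated as ties even though the underlying bars may differ slightly.

```python
# Approximate Chart 2 (closed-source) readings from the description above.
# Values are visual estimates, not reported numbers.
CLOSED_CHART = {
    "HotpotQA": {"Qwen2.5-72B": 85, "Claude3.5": 82, "GPT-3.5": 78, "GPT-4o": 90},
    "GSM8k": {"Qwen2.5-72B": 90, "Claude3.5": 95, "GPT-3.5": 85, "GPT-4o": 98},
    "GPQA": {"Qwen2.5-72B": 25, "Claude3.5": 38, "GPT-3.5": 38, "GPT-4o": 25},
}


def head_to_head(chart, model):
    """For each dataset, split the other models into those `model` beats, ties, or trails."""
    result = {}
    for dataset, bars in chart.items():
        mine = bars[model]
        others = {m: s for m, s in bars.items() if m != model}
        result[dataset] = {
            "beats": sorted(m for m, s in others.items() if mine > s),
            "ties": sorted(m for m, s in others.items() if mine == s),
            "trails": sorted(m for m, s in others.items() if mine < s),
        }
    return result


for dataset, outcome in head_to_head(CLOSED_CHART, "Qwen2.5-72B").items():
    print(dataset, outcome)
```

With these readings Qwen2.5-72B trails GPT-4o on HotpotQA and GSM8k, ties it on GPQA, and outscores Claude3.5 only on HotpotQA.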

### Interpretation

The data illustrates a clear hierarchy in LLM capabilities across different types of reasoning tasks. The consistent struggle on GPQA suggests it tests a form of reasoning (likely complex, multi-step, or specialized knowledge) that remains a significant challenge for current models, regardless of size or training paradigm.

The strong performance of `GPT-4o` on both GSM8k (mathematical reasoning) and HotpotQA (multi-hop factual reasoning), together with `Claude3.5`'s ~95 on GSM8k, indicates that these closed-source models have robust general reasoning abilities. The fact that the open-source `Qwen2.5-72B` is competitive but never ahead of `GPT-4o` suggests a potential "performance ceiling" that may require different architectural or training innovations to break, not just scaling.

The third chart hints at a potential trade-off between instruction-following and reasoning, although in these readings the reasoning model `QWQ-32B` trails the other three models on every dataset. `DeepSeek-V3`'s lead on GPQA (~35) stands out in that chart, suggesting its training may have prepared it particularly well for that challenge. Overall, the charts depict a landscape where model specialization and task difficulty are critical factors in performance evaluation.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Bar Charts: Comparison of LLMs Across Datasets

### Overview

The image contains three grouped bar charts comparing the performance of various large language models (LLMs) across three datasets: HotpotQA, GSM8k, and GPQA. Each chart focuses on a different category of LLMs: open-source, closed-source, and instruction-based vs. reasoning models. Scores range from 0 to 100 on the y-axis, with datasets on the x-axis.

---

### Components/Axes

#### Labels and Legends

1. **First Chart (Open-source LLMs):**

- **Legend:**

- LLaMA 3.1-8B (green)

- LLaMA 3.1-70B (yellow)

- Qwen2.5-7B (purple)

- Qwen2.5-72B (red)

- **X-axis:** HotpotQA, GSM8k, GPQA

- **Y-axis:** Scores (0–100)

2. **Second Chart (Closed-source LLMs):**

- **Legend:**

- Qwen2.5-72B (red)

- Claude3.5 (blue)

- GPT-3.5 (orange)

- GPT-4o (green)

- **X-axis:** HotpotQA, GSM8k, GPQA

- **Y-axis:** Scores (0–100)

3. **Third Chart (Instruction-based vs. Reasoning LLMs):**

- **Legend:**

- Qwen2.5-72B (red)

- GPT-4o (green)

- QWQ-32B (pink)

- DeepSeek-V3 (purple)

- **X-axis:** HotpotQA, GSM8k, GPQA

- **Y-axis:** Scores (0–100)

---

### Detailed Analysis

#### First Chart (Open-source LLMs)

- **HotpotQA:**

- LLaMA 3.1-70B: ~85

- Qwen2.5-72B: ~83

- LLaMA 3.1-8B: ~78

- Qwen2.5-7B: ~72

- **GSM8k:**

- LLaMA 3.1-70B: ~95

- Qwen2.5-72B: ~90

- LLaMA 3.1-8B: ~75

- Qwen2.5-7B: ~15

- **GPQA:**

- LLaMA 3.1-70B: ~25

- Qwen2.5-72B: ~25

- Qwen2.5-7B: ~25

- LLaMA 3.1-8B: ~15

#### Second Chart (Closed-source LLMs)

- **HotpotQA:**

- Qwen2.5-72B: ~85

- GPT-4o: ~80

- Claude3.5: ~75

- GPT-3.5: ~70

- **GSM8k:**

- Qwen2.5-72B: ~95

- GPT-4o: ~90

- Claude3.5: ~85

- GPT-3.5: ~80

- **GPQA:**

- Qwen2.5-72B: ~25

- GPT-4o: ~25

- Claude3.5: ~25

- GPT-3.5: ~25

#### Third Chart (Instruction-based vs. Reasoning LLMs)

- **HotpotQA:**

- Qwen2.5-72B: ~85

- GPT-4o: ~80

- QWQ-32B: ~70

- DeepSeek-V3: ~65

- **GSM8k:**

- Qwen2.5-72B: ~95

- GPT-4o: ~90

- DeepSeek-V3: ~85

- QWQ-32B: ~70

- **GPQA:**

- Qwen2.5-72B: ~25

- GPT-4o: ~25

- DeepSeek-V3: ~35

- QWQ-32B: ~20

---

### Key Observations

1. **Open-source LLMs:**

- Larger models (e.g., LLaMA 3.1-70B) outperform smaller variants (e.g., LLaMA 3.1-8B) across all datasets.

- Qwen2.5-72B outperforms Qwen2.5-7B on HotpotQA and, by a wide margin, on GSM8k (~90 vs. ~15); on GPQA the two tie at ~25.

2. **Closed-source LLMs:**

- Qwen2.5-72B and GPT-4o lead this chart, with Qwen2.5-72B ahead on HotpotQA and GSM8k; on GPQA all four models tie at ~25.

- GPT-3.5 and Claude3.5 show similar scores but lag behind Qwen2.5-72B and GPT-4o.

3. **Instruction-based vs. Reasoning LLMs:**

- Instruction-based models (Qwen2.5-72B, GPT-4o) outperform reasoning models (DeepSeek-V3, QWQ-32B) in HotpotQA and GSM8k.

- DeepSeek-V3 (~35) scores highest of all four models on GPQA, suggesting reasoning models may excel in specific tasks.
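The size comparisons for the open-source chart can be made concrete by subtracting each family's small-model score from its large-model score. The Python sketch below does this with the approximate first-chart readings; `OPEN_CHART`, `FAMILIES`, and `scaling_gains` are hypothetical names chosen for illustration.

```python
# Approximate first-chart (open-source) readings from the description above.
# Values are visual estimates, not reported numbers.
OPEN_CHART = {
    "HotpotQA": {"LLaMA 3.1-8B": 78, "LLaMA 3.1-70B": 85, "Qwen2.5-7B": 72, "Qwen2.5-72B": 83},
    "GSM8k": {"LLaMA 3.1-8B": 75, "LLaMA 3.1-70B": 95, "Qwen2.5-7B": 15, "Qwen2.5-72B": 90},
    "GPQA": {"LLaMA 3.1-8B": 15, "LLaMA 3.1-70B": 25, "Qwen2.5-7B": 25, "Qwen2.5-72B": 25},
}

# (small model, large model) pair for each family.
FAMILIES = {
    "LLaMA 3.1": ("LLaMA 3.1-8B", "LLaMA 3.1-70B"),
    "Qwen2.5": ("Qwen2.5-7B", "Qwen2.5-72B"),
}


def scaling_gains(chart, families):
    """Score gained by the large model over the small one, per family and dataset."""
    return {
        family: {dataset: bars[large] - bars[small] for dataset, bars in chart.items()}
        for family, (small, large) in families.items()
    }


for family, gains in scaling_gains(OPEN_CHART, FAMILIES).items():
    print(family, gains)
```

With these readings both families gain the most on GSM8k (20 points for LLaMA 3.1, 75 for Qwen2.5), and the Qwen2.5 pair shows no gain on GPQA.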

---

### Interpretation

- **Model Size Matters:** Larger models (e.g., 70B parameters) generally achieve higher scores. In these readings the largest gains from scale appear on GSM8k, while on GPQA only the LLaMA 3.1 pair shows a gap (~25 vs. ~15).

- **Closed-source Comparison:** In the closed-source chart, Qwen2.5-72B (which also appears in the open-source chart) matches or exceeds GPT-4o, Claude3.5, and GPT-3.5 on every dataset, so these readings do not show a clear advantage for proprietary models.

- **Instruction-tuning Impact:** Instruction-based models (Qwen2.5-72B, GPT-4o) score highest on HotpotQA and GSM8k, while reasoning models (DeepSeek-V3) show niche strengths in GPQA.

- **Anomalies:** Qwen2.5-7B underperforms significantly in GSM8k (~15 vs. ~95 for LLaMA 3.1-70B), suggesting task-specific limitations.

The data underscores the importance of model scale, architecture, and training methodology in LLM performance across diverse benchmarks.
