## Bar Chart: Computational Cost Comparison in LLaMA-7B

### Overview

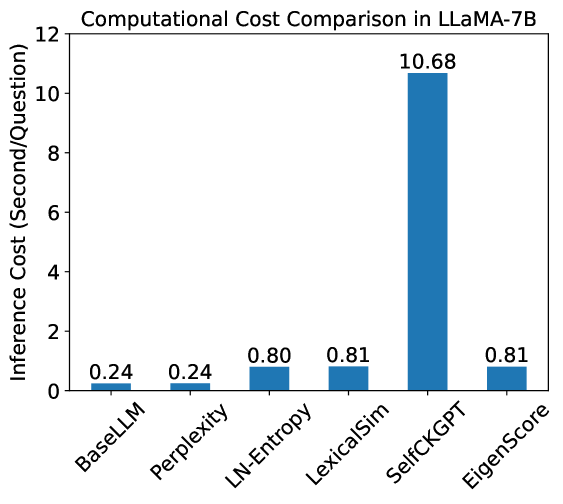

This vertical bar chart compares inference cost, measured in seconds per question, for six methods run on the LLaMA-7B large language model. One method, SelfCKGPT, costs far more than the other five.

### Components/Axes

* **Chart Title:** "Computational Cost Comparison in LLaMA-7B" (positioned at the top center).

* **Y-Axis (Vertical):**

  * **Label:** "Inference Cost (Second/Question)"

  * **Scale:** Linear scale from 0 to 12, with major tick marks at intervals of 2 (0, 2, 4, 6, 8, 10, 12).

* **X-Axis (Horizontal):**

  * **Categories (from left to right):** BaseLLM, Perplexity, LN-Entropy, LexicalSim, SelfCKGPT, EigenScore.

  * **Label Orientation:** The category labels are rotated approximately 45 degrees for readability.

* **Data Series:** A single series represented by blue bars. Each bar's height corresponds to the inference cost for its respective method. The exact numerical value is annotated directly above each bar.

### Detailed Analysis

The chart presents the following data points for inference cost (seconds/question):

1. **BaseLLM:** 0.24

2. **Perplexity:** 0.24

3. **LN-Entropy:** 0.80

4. **LexicalSim:** 0.81

5. **SelfCKGPT:** 10.68

6. **EigenScore:** 0.81

**Visual Trend:** Reading left to right, the first two bars (BaseLLM, Perplexity) are flat and low, the next two (LN-Entropy, LexicalSim) step up to a moderate level, the fifth bar (SelfCKGPT) spikes to more than ten times the height of any other bar, and the sixth (EigenScore) returns to the moderate level.
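The chart described above can be approximated from the annotated values. The following is a minimal sketch, assuming matplotlib 3.4 or later is installed (for `Axes.bar_label`); the function name `plot_costs`, the output file name, the figure size, and the exact shade of blue are illustrative choices, not details taken from the original figure.

```python
# Values transcribed from the bar annotations (seconds per question),
# listed in the same left-to-right order as the x-axis categories.
COSTS = {
    "BaseLLM": 0.24,
    "Perplexity": 0.24,
    "LN-Entropy": 0.80,
    "LexicalSim": 0.81,
    "SelfCKGPT": 10.68,
    "EigenScore": 0.81,
}


def plot_costs(costs, path="cost_comparison.png"):
    """Redraw the bar chart and save it to `path` (hypothetical file name)."""
    import matplotlib
    matplotlib.use("Agg")  # render to a file; no display needed
    import matplotlib.pyplot as plt

    fig, ax = plt.subplots(figsize=(6, 4))
    bars = ax.bar(list(costs), list(costs.values()), color="tab:blue")
    ax.bar_label(bars, fmt="%.2f")  # value annotation above each bar

    ax.set_title("Computational Cost Comparison in LLaMA-7B")
    ax.set_ylabel("Inference Cost (Second/Question)")
    ax.set_ylim(0, 12)
    ax.set_yticks(range(0, 13, 2))  # major ticks at 0, 2, ..., 12
    ax.tick_params(axis="x", labelrotation=45)

    fig.tight_layout()
    fig.savefig(path)
    plt.close(fig)
    return path


if __name__ == "__main__":
    print("saved", plot_costs(COSTS))
```

Keeping the dictionary in x-axis order means the redrawn chart places SelfCKGPT fifth and EigenScore sixth, as in the original.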

### Key Observations

1. **Dominant Outlier:** **SelfCKGPT** has by far the highest computational cost (10.68 s/question). That is approximately 13 times the cost of the next highest group (LN-Entropy, LexicalSim, EigenScore at ~0.8 s/question) and about 44 times the cost of the two cheapest entries (BaseLLM, Perplexity at 0.24 s/question).

2. **Clustering of Costs:** The methods fall into three distinct cost clusters:

   * **Lowest Cost (~0.24 s):** BaseLLM and Perplexity.

   * **Moderate Cost (~0.80-0.81 s):** LN-Entropy, LexicalSim, and EigenScore. The costs for LexicalSim and EigenScore are identical (0.81).

   * **Very High Cost (10.68 s):** SelfCKGPT.

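3. **Precision of Values:** The annotated values are reported to two decimal places. This is the precision of the labels, not evidence about measurement accuracy; values that appear identical (such as 0.81 for LexicalSim and EigenScore) may differ beyond the second decimal.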
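The ratios and the three clusters noted in these observations can be checked with a few lines of arithmetic. This is a minimal sketch: the helper `cluster_by_gap` and its `factor=2.0` threshold are assumptions introduced here for illustration (a new cluster starts whenever a cost is more than twice the previous one), not a method used by the chart's authors.

```python
# Values transcribed from the bar annotations (seconds per question).
COSTS = {
    "BaseLLM": 0.24,
    "Perplexity": 0.24,
    "LN-Entropy": 0.80,
    "LexicalSim": 0.81,
    "SelfCKGPT": 10.68,
    "EigenScore": 0.81,
}

# Cost of the most expensive method relative to each of the others.
slowest = max(COSTS, key=COSTS.get)
ratios = {
    name: COSTS[slowest] / cost
    for name, cost in COSTS.items()
    if name != slowest
}


def cluster_by_gap(costs, factor=2.0):
    """Group methods by cost; start a new group at each jump larger than `factor`x."""
    ordered = sorted(costs.items(), key=lambda item: item[1])
    clusters = [[ordered[0]]]
    for name, cost in ordered[1:]:
        previous_cost = clusters[-1][-1][1]
        if cost > factor * previous_cost:
            clusters.append([])
        clusters[-1].append((name, cost))
    return clusters


clusters = cluster_by_gap(COSTS)

if __name__ == "__main__":
    for name, ratio in ratios.items():
        print(f"{slowest} / {name}: {ratio:.1f}x")
    for group in clusters:
        print([name for name, _ in group])
```

With only six data points, any threshold between the small within-group differences and the large between-group jumps yields the same three groups, so the choice of 2.0 is not critical here.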

### Interpretation

This chart quantifies the computational overhead introduced by different evaluation or analysis techniques when applied to the LLaMA-7B model. The costs separate into three tiers:

* **BaseLLM and Perplexity** represent the baseline inference cost of the model itself or a very lightweight metric, serving as a reference point.

* **LN-Entropy, LexicalSim, and EigenScore** introduce a consistent, moderate overhead (adding roughly 0.56-0.57 seconds per question). This indicates these methods involve additional computation but are of similar complexity to each other.

* **SelfCKGPT** has a dramatically different computational profile. A cost this high suggests a considerably more complex process (potentially iterative generation, multi-step reasoning, or the use of a much larger auxiliary model), although the chart itself does not say which. Relative to the other methods, it is poorly suited to scenarios that require high-throughput or low-latency inference.

The chart reports cost only, so it cannot show whether SelfCKGPT's extra computation buys any qualitative advantage. What it does show is that SelfCKGPT carries a severe computational cost, whereas methods like EigenScore or LexicalSim occupy a middle ground with a moderate overhead over the baseline.
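To make the overhead figures concrete, the sketch below subtracts the BaseLLM cost from each method and scales the per-question cost to a batch. The batch size of 1,000 questions is a hypothetical number chosen for illustration, and the estimate assumes questions are processed one after another at a constant per-question cost, which the chart does not state.

```python
# Values transcribed from the bar annotations (seconds per question).
COSTS = {
    "BaseLLM": 0.24,
    "Perplexity": 0.24,
    "LN-Entropy": 0.80,
    "LexicalSim": 0.81,
    "SelfCKGPT": 10.68,
    "EigenScore": 0.81,
}

BASELINE = COSTS["BaseLLM"]  # plain inference, used as the reference point
N_QUESTIONS = 1_000          # hypothetical batch size, not from the chart

# Extra seconds per question that each method adds on top of the baseline.
overheads = {name: cost - BASELINE for name, cost in COSTS.items()}

# Sequential wall-clock estimate for the whole batch, in minutes.
batch_minutes = {name: cost * N_QUESTIONS / 60 for name, cost in COSTS.items()}

if __name__ == "__main__":
    for name in COSTS:
        print(
            f"{name:<11} overhead {overheads[name]:5.2f} s/question, "
            f"{batch_minutes[name]:6.1f} min per {N_QUESTIONS} questions"
        )
```

Under these assumptions the moderate-cost methods finish the batch in under a quarter of an hour, while SelfCKGPT needs close to three hours.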