## Pie Charts: KG vs LLM Performance on Question Answering Datasets

### Overview

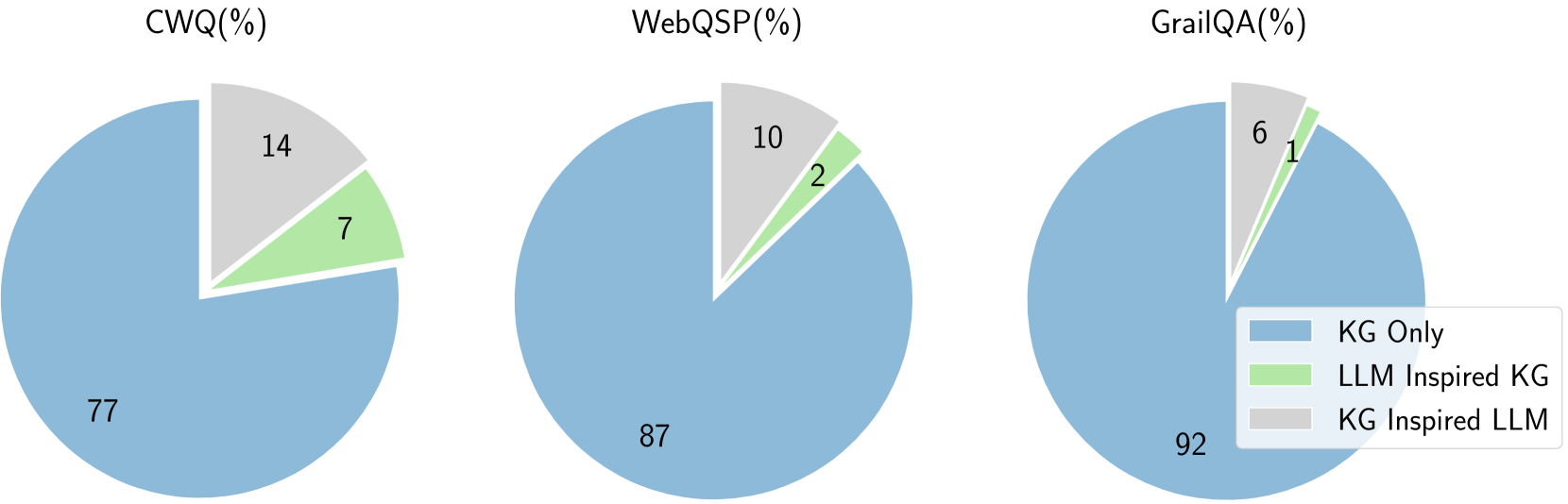

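The image presents three pie charts, one for each of three question answering datasets (CWQ, WebQSP, and GrailQA). They compare a Knowledge Graph (KG) only approach with two hybrids that combine a KG with a Large Language Model (LLM): LLM Inspired KG and KG Inspired LLM. According to the chart labels, each segment gives the percentage of questions answered correctly by that approach. Because the three segments in each chart add up to roughly 100%, they are best read as shares of a single whole rather than as three independent accuracy scores.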

### Components/Axes

* **Titles:** CWQ(%), WebQSP(%), and GrailQA(%), naming the dataset shown in each pie chart; the "(%)" suffix indicates that the values are percentages.

* **Pie Chart Segments:** Each pie chart is divided into three segments, each representing a different approach to question answering.

* **Legend (Bottom-Right):**

    * Blue: KG Only

    * Light Green: LLM Inspired KG

    * Light Gray: KG Inspired LLM

* **Values:** Percentage values are displayed within each segment of the pie charts.

### Detailed Analysis

**CWQ(%)**

* KG Only (Blue): 77%

* LLM Inspired KG (Light Green): 7%

* KG Inspired LLM (Light Gray): 14%

**WebQSP(%)**

* KG Only (Blue): 87%

* LLM Inspired KG (Light Green): 2%

* KG Inspired LLM (Light Gray): 10%

**GrailQA(%)**

* KG Only (Blue): 92%

* LLM Inspired KG (Light Green): 1%

* KG Inspired LLM (Light Gray): 6%
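As a quick arithmetic check on the values above, the sketch below stores the segment percentages read from the charts in a plain dictionary and sums each chart. The `SHARES` name, its layout, and the `segment_totals` helper are illustrative assumptions, not part of the source. The totals come to 98% for CWQ and 99% for WebQSP and GrailQA; the missing one or two points are most likely rounding in the displayed labels, which is consistent with each chart being a breakdown of a single whole.

```python
# Segment percentages as read from the three pie charts.
# The dictionary layout is a hypothetical representation chosen for illustration.
SHARES: dict[str, dict[str, int]] = {
    "CWQ": {"KG Only": 77, "LLM Inspired KG": 7, "KG Inspired LLM": 14},
    "WebQSP": {"KG Only": 87, "LLM Inspired KG": 2, "KG Inspired LLM": 10},
    "GrailQA": {"KG Only": 92, "LLM Inspired KG": 1, "KG Inspired LLM": 6},
}


def segment_totals(shares: dict[str, dict[str, int]]) -> dict[str, int]:
    """Sum the labeled segments of each chart; a complete pie should total about 100."""
    return {dataset: sum(parts.values()) for dataset, parts in shares.items()}


if __name__ == "__main__":
    for dataset, total in segment_totals(SHARES).items():
        # Report the total and the remainder that label rounding would have to explain.
        print(f"{dataset}: {total}% (unlabeled remainder: {100 - total}%)")
```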

### Key Observations

* Across all three datasets, KG Only has by far the largest segment (77% on CWQ, 87% on WebQSP, 92% on GrailQA).

* LLM Inspired KG has the smallest segment on every dataset (7%, 2%, and 1%, respectively).

* The gap between KG Only and the other two approaches combined is widest on GrailQA (92% vs. 7%), compared with WebQSP (87% vs. 12%) and CWQ (77% vs. 21%).
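These observations can be checked mechanically against the values in the Detailed Analysis. The sketch below repeats those values and, for each dataset, finds the largest and smallest segment and the gap in percentage points between KG Only and the other two segments combined. The `SHARES` dictionary and the helper names (`largest`, `smallest`, `kg_only_gap`) are hypothetical, introduced only for this illustration.

```python
# Segment percentages from the three pie charts (hypothetical data layout).
SHARES: dict[str, dict[str, int]] = {
    "CWQ": {"KG Only": 77, "LLM Inspired KG": 7, "KG Inspired LLM": 14},
    "WebQSP": {"KG Only": 87, "LLM Inspired KG": 2, "KG Inspired LLM": 10},
    "GrailQA": {"KG Only": 92, "LLM Inspired KG": 1, "KG Inspired LLM": 6},
}


def largest(parts: dict[str, int]) -> str:
    """Name of the approach with the biggest segment."""
    return max(parts, key=lambda name: parts[name])


def smallest(parts: dict[str, int]) -> str:
    """Name of the approach with the smallest segment."""
    return min(parts, key=lambda name: parts[name])


def kg_only_gap(parts: dict[str, int]) -> int:
    """KG Only share minus the combined share of the two hybrid approaches."""
    others = sum(parts.values()) - parts["KG Only"]
    return parts["KG Only"] - others


if __name__ == "__main__":
    gaps = {dataset: kg_only_gap(parts) for dataset, parts in SHARES.items()}
    for dataset, parts in SHARES.items():
        print(f"{dataset}: largest={largest(parts)}, "
              f"smallest={smallest(parts)}, gap={gaps[dataset]} points")
    # The dataset where KG Only leads the hybrids by the most points.
    print("widest gap:", max(gaps, key=lambda d: gaps[d]))
```

With these values the gaps are 56, 75, and 85 points for CWQ, WebQSP, and GrailQA, so the widest gap falls on GrailQA, matching the third observation.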

### Interpretation

The data suggests that on these question answering datasets, the pure Knowledge Graph approach is more effective than approaches that bring in a language model, whether the LLM guides the KG (LLM Inspired KG) or the KG guides the LLM (KG Inspired LLM). Because the segments are shares of one whole, this is a comparison of relative contribution rather than of separate accuracy scores. The dominance of KG Only, especially on GrailQA (92%), indicates that the structure and content of the knowledge graph are well suited to the questions in these datasets. The small share of LLM Inspired KG (1% to 7%) may reflect difficulty in integrating language model insights into the knowledge graph structure. KG Inspired LLM takes a larger share than LLM Inspired KG on every dataset (14% vs. 7% on CWQ, 10% vs. 2% on WebQSP, 6% vs. 1% on GrailQA) but remains far behind KG Only. This suggests that while language models can benefit from knowledge graphs, they do not necessarily outperform a well-structured KG on these specific tasks.