## Neural Network Architecture Diagram: Spatio-Temporal Transformer Block

### Overview

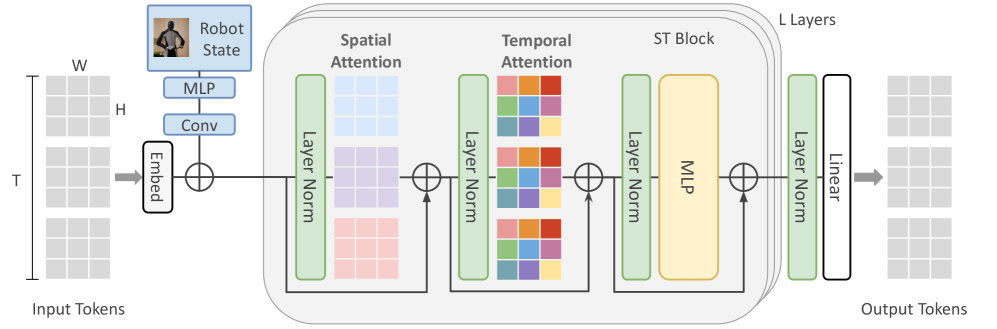

The image displays a detailed architectural diagram of a neural network model designed for processing sequential spatio-temporal data, likely for tasks involving video understanding or robotics. The diagram illustrates the data flow from input tokens, through a series of processing blocks involving attention mechanisms and normalization layers, to output tokens. A key feature is the integration of a "Robot State" input early in the pipeline.

### Components

The diagram is organized horizontally, representing the flow of data from left (input) to right (output).

**1. Input Section (Left):**

* **Label:** `Input Tokens`

* **Structure:** A 3D tensor represented as a stack of grids.

* **Dimensions:** Labeled with `T` (vertical axis, likely Time/Sequence length), `H` (Height), and `W` (Width).
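The diagram does not say whether the input tokens are discrete IDs (for example, from a learned video tokenizer) or continuous patch features. Assuming discrete IDs, the `Embed` step that appears in the data flow would be a table lookup that turns the `T × H × W` grid of IDs into a `T × H × W × D` grid of vectors. The sizes `V` (vocabulary) and `D` (embedding width) below are hypothetical.

```python
import numpy as np

# Hypothetical sizes: T frames, an H x W token grid per frame, a vocabulary
# of V discrete token IDs, and embedding width D (none are given in the diagram).
T, H, W, V, D = 4, 8, 8, 1024, 64

rng = np.random.default_rng(0)

# Input tokens: one integer ID per (t, h, w) cell, shape (T, H, W).
input_tokens = rng.integers(0, V, size=(T, H, W))

# Embed (assumed to be a lookup table of shape (V, D)): indexing the table
# with the whole token grid gathers one D-dimensional vector per cell.
embedding_table = rng.normal(0.0, 0.02, size=(V, D))
x = embedding_table[input_tokens]

print(input_tokens.shape, "->", x.shape)  # (4, 8, 8) -> (4, 8, 8, 64)
```

If the tokens were continuous patch features instead, `Embed` would be a linear projection applied to the last axis rather than a lookup.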

**2. Robot State Integration (Top-Left):**

* **Label:** `Robot State` (accompanied by a small image of a humanoid robot).

* **Processing Path:** The Robot State data flows through:

  * `MLP` (Multi-Layer Perceptron)

  * `Conv` (Convolutional layer)

* **Integration Point:** The processed Robot State is combined with the embedded input tokens via a summation operation (⊕ symbol).
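The diagram names the operations on the robot state (`MLP`, then `Conv`, then ⊕) but not their shapes. The sketch below is one plausible reading, with every shape assumed: the robot state is a sequence of `T` vectors of size `S` (one per timestep, e.g. joint angles), the MLP maps each to width `D`, the `Conv` is a same-padded 1D convolution along time, and the ⊕ broadcasts each timestep's feature over that frame's `H × W` token grid.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes: T timesteps, S-dimensional robot state per timestep,
# H x W token grid, embedding width D.
T, S, H, W, D = 4, 12, 8, 8, 64

def mlp(z, w1, b1, w2, b2):
    """Two-layer perceptron with a ReLU, applied to the last axis."""
    return np.maximum(z @ w1 + b1, 0.0) @ w2 + b2

def conv1d_time(z, kernel):
    """Same-padded 1D convolution over the time axis.

    z: (T, D) features; kernel: (K, D, D) with odd K.
    Assumption: this is only one possible reading of the diagram's `Conv`.
    """
    k = kernel.shape[0]
    pad = k // 2
    zp = np.pad(z, ((pad, pad), (0, 0)))
    return np.stack([
        sum(zp[t + i] @ kernel[i] for i in range(k))
        for t in range(z.shape[0])
    ])

robot_state = rng.normal(size=(T, S))
tokens = rng.normal(size=(T, H, W, D))  # stand-in for the embedded input tokens

w1, b1 = rng.normal(0, 0.1, (S, D)), np.zeros(D)
w2, b2 = rng.normal(0, 0.1, (D, D)), np.zeros(D)
kernel = rng.normal(0, 0.1, (3, D, D))

state_feat = conv1d_time(mlp(robot_state, w1, b1, w2, b2), kernel)  # (T, D)

# The ⊕: add each timestep's state feature to every cell of its frame.
fused = tokens + state_feat[:, None, None, :]
print(fused.shape)  # (4, 8, 8, 64)
```

Broadcasting keeps the fusion cheap: the state is processed once per timestep rather than once per token.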

**3. Core Processing Block (Center):**

This main section is enclosed in a rounded rectangle and is stacked `L` times (indicated by the `L Layers` label at the top-right of the block). Each layer contains:

* **Spatial Attention Sub-block:**

  * The input goes through a `Layer Norm` (green vertical bar).

  * The core is a `Spatial Attention` mechanism, visualized as a grid with colored cells (blue, purple, and pink gradients).

  * A residual connection (an arrow bypassing the sub-block) adds the sub-block's input to the attention output via a summation (⊕).

* **Temporal Attention Sub-block:**

  * The output of the spatial sub-block goes through another `Layer Norm`.

  * The core is a `Temporal Attention` mechanism, visualized as a grid with a different color pattern (orange, red, blue, green, and purple).

  * Another residual connection and summation (⊕) follow.

* **ST Block (Spatio-Temporal Block):**

  * The output goes through a third `Layer Norm`.

  * The core is an `MLP` (yellow block).

  * A final residual connection and summation (⊕) close the layer.
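One layer of the stack described above can be sketched in NumPy as three pre-norm residual sub-blocks. Several details are assumptions not shown in the diagram: single-head attention, no attention masking, a ReLU MLP of hidden width `F`, and Layer Norms without learned scale and shift. Spatial attention runs over the `H·W` tokens of each frame; temporal attention runs over the `T` frames at each spatial position.

```python
import numpy as np

rng = np.random.default_rng(0)
T, H, W, D, F = 4, 8, 8, 64, 256  # hypothetical sizes; F = MLP hidden width

def layer_norm(x, eps=1e-5):
    """LayerNorm over the feature axis (learned scale/shift omitted)."""
    mu = x.mean(-1, keepdims=True)
    var = x.var(-1, keepdims=True)
    return (x - mu) / np.sqrt(var + eps)

def softmax(a):
    a = a - a.max(-1, keepdims=True)
    e = np.exp(a)
    return e / e.sum(-1, keepdims=True)

def attention(x, wq, wk, wv, wo):
    """Single-head self-attention over axis -2 of x with shape (..., N, D)."""
    q, k, v = x @ wq, x @ wk, x @ wv
    scores = q @ np.swapaxes(k, -1, -2) / np.sqrt(q.shape[-1])
    return softmax(scores) @ v @ wo

def init_attn():
    # Q, K, V and output projections, each D x D.
    return [rng.normal(0, D ** -0.5, (D, D)) for _ in range(4)]

sp, tp = init_attn(), init_attn()  # separate weights for spatial / temporal
w_in = rng.normal(0, D ** -0.5, (D, F))
w_out = rng.normal(0, F ** -0.5, (F, D))

def st_layer(x):
    """One of the L layers; x has shape (T, H, W, D)."""
    t, h, w, d = x.shape
    # Spatial attention: tokens attend within their own frame (H*W tokens).
    s = layer_norm(x).reshape(t, h * w, d)
    x = x + attention(s, *sp).reshape(t, h, w, d)
    # Temporal attention: each (h, w) position attends across the T frames.
    z = layer_norm(x).reshape(t, h * w, d).transpose(1, 0, 2)  # (H*W, T, D)
    x = x + attention(z, *tp).transpose(1, 0, 2).reshape(t, h, w, d)
    # MLP applied to every token independently, with its own residual.
    x = x + np.maximum(layer_norm(x) @ w_in, 0.0) @ w_out
    return x

x = rng.normal(size=(T, H, W, D))
y = st_layer(x)
print(y.shape)  # (4, 8, 8, 64)
```

The two attention sub-blocks differ only in how the token grid is reshaped before attention, which is what makes the factorization inexpensive to implement.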

**4. Output Section (Right):**

* After the `L` repeated layers, the data passes through a final `Layer Norm` and a `Linear` layer.

* **Final Output:** A 3D tensor labeled `Output Tokens`, with a structure mirroring the input.
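Assuming the output tokens are discrete IDs like the (assumed) input tokens, the final `Layer Norm` and `Linear` could act as a per-cell classification head: the `Linear` maps each `D`-dimensional vector to logits over a vocabulary of size `V`, and taking the most likely ID per cell yields a grid with the same `T × H × W` layout as the input. The sizes and the argmax decoding are illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)
T, H, W, D, V = 4, 8, 8, 64, 1024  # hypothetical sizes; V = token vocabulary

def layer_norm(x, eps=1e-5):
    """LayerNorm over the feature axis (learned scale/shift omitted)."""
    mu = x.mean(-1, keepdims=True)
    return (x - mu) / np.sqrt(x.var(-1, keepdims=True) + eps)

h = rng.normal(size=(T, H, W, D))          # stand-in for the output of the L layers
w_head = rng.normal(0, D ** -0.5, (D, V))  # the final `Linear`
b_head = np.zeros(V)

logits = layer_norm(h) @ w_head + b_head   # (T, H, W, V)
output_tokens = logits.argmax(-1)          # one ID per cell, shape (T, H, W)
print(logits.shape, output_tokens.shape)   # (4, 8, 8, 1024) (4, 8, 8)
```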

### Detailed Analysis

* **Data Flow:** The primary path is `Input Tokens` -> `Embed` -> [Integration with processed `Robot State`] -> `L x (Spatial Attention -> Temporal Attention -> ST Block/MLP)` -> `Layer Norm` -> `Linear` -> `Output Tokens`.

* **Key Operations:** The diagram explicitly labels the following operations: `Embed`, `MLP`, `Conv`, `Layer Norm`, `Spatial Attention`, `Temporal Attention`, `Linear`, and summation (⊕) for residual connections.

* **Visual Coding:**

  * **Layer Norm:** Consistently represented as vertical green bars.

  * **Attention Mechanisms:** Represented by colored grids. The Spatial Attention grid uses a blue-to-pink vertical gradient. The Temporal Attention grid uses a more complex, multi-colored checkerboard pattern.

  * **MLP:** Represented as a solid yellow block within the ST Block.

  * **Residual Connections:** Represented by black arrows that bypass the main processing blocks and connect to summation circles (⊕).

### Key Observations

1. **Dual Attention Mechanism:** The architecture explicitly separates `Spatial Attention` and `Temporal Attention` into sequential sub-blocks within each layer. This factorized design models spatial relationships (within a frame) and temporal relationships (across frames) in separate steps; because the steps alternate and are stacked, information can still propagate across both space and time.

2. **Early Fusion of Robot State:** The `Robot State` is processed and injected into the network at the very beginning, right after the initial token embedding. This suggests that proprioceptive or state information from the robot is meant to condition all subsequent processing, rather than being added only at the output.

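3. **Residual Learning Framework:** Every major sub-block (Spatial Attention, Temporal Attention, MLP) is wrapped in a residual connection, with a `Layer Norm` applied to the sub-block's input (a pre-norm arrangement, consistent with the extra `Layer Norm` after the final layer). This is a standard technique for training deep networks: the identity branch gives gradients a direct path through every layer.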

4. **Layer Stacking:** The label `L Layers` indicates that the entire block containing Spatial Attention, Temporal Attention, and the ST Block is stacked `L` times. By the usual convention in Transformer diagrams, each of the `L` layers has its own weights; the diagram gives no indication of parameter sharing across layers.
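The separation described in the Dual Attention observation can be checked numerically: spatial attention is equivalent to full attention over all `T·H·W` tokens with a mask that only allows pairs in the same frame, and temporal attention is equivalent to a mask that only allows pairs at the same spatial position. The sketch below omits the learned projections (queries, keys, and values are the raw tokens) so that only the masking pattern is being compared; the tiny sizes are arbitrary.

```python
import numpy as np

rng = np.random.default_rng(0)
T, H, W, D = 3, 2, 2, 8
N = H * W  # spatial positions per frame

def softmax(a):
    e = np.exp(a - a.max(-1, keepdims=True))
    return e / e.sum(-1, keepdims=True)

def attend(q, k, v, mask=None):
    """Scaled dot-product attention; mask[i, j] = False blocks query i from key j."""
    s = q @ np.swapaxes(k, -1, -2) / np.sqrt(q.shape[-1])
    if mask is not None:
        s = np.where(mask, s, -np.inf)
    return softmax(s) @ v

x = rng.normal(size=(T, N, D))  # tokens grouped by frame, positions flattened

# Factorized form: spatial attention treats each frame as its own batch item.
spatial = attend(x, x, x)                          # (T, N, D)
# Temporal attention treats each spatial position as its own batch item.
xt = x.transpose(1, 0, 2)                          # (N, T, D)
temporal = attend(xt, xt, xt).transpose(1, 0, 2)   # (T, N, D)

# Equivalent masked form over all T*N tokens at once.
flat = x.reshape(T * N, D)
frame = np.repeat(np.arange(T), N)   # frame index of each flattened token
pos = np.tile(np.arange(N), T)       # spatial position of each flattened token
same_frame = frame[:, None] == frame[None, :]
same_pos = pos[:, None] == pos[None, :]

spatial_masked = attend(flat, flat, flat, same_frame).reshape(T, N, D)
temporal_masked = attend(flat, flat, flat, same_pos).reshape(T, N, D)
print(np.allclose(spatial, spatial_masked), np.allclose(temporal, temporal_masked))  # True True
```

Neither mask alone connects a token to a different frame at a different position; such interactions arise only by composing the two steps.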

### Interpretation

This diagram depicts a **Spatio-Temporal Transformer** variant, apparently tailored for embodied AI or robotics applications. The architecture is designed to process a sequence of observations (e.g., video frames or a history of sensor readings, represented as `Input Tokens` with dimensions T, H, W) while simultaneously conditioning on the robot's own state.

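The separation of spatial and temporal attention is a deliberate design choice. It lets the model first relate "what is where" within each individual observation (spatial attention) and then relate "how things change over time" at each position (temporal attention). It is also cheaper than a single joint spatio-temporal attention: joint attention over all T·H·W tokens grows quadratically in T·H·W, whereas the factorized form is quadratic only in H·W within each frame and in T at each position. The separated attention maps may also be easier to inspect.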
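To make the efficiency argument concrete, the sketch below counts query-key score entries for one attention pass over a `T × H × W` token grid, comparing joint attention with the spatial-plus-temporal factorization. It counts score entries only (one head, no projections), and the 16 × 16 × 16 grid is an arbitrary example, not a size taken from the diagram.

```python
def attention_pairs(t, h, w):
    """Count query-key score entries per attention pass (one head, one layer)."""
    n = h * w
    joint = (t * n) ** 2   # every token attends to every token
    spatial = t * n ** 2   # within each of the t frames
    temporal = n * t ** 2  # across time at each of the n positions
    return joint, spatial + temporal

joint, factorized = attention_pairs(16, 16, 16)
print(joint, factorized, round(joint / factorized, 1))  # 16777216 1114112 15.1
```

In general the ratio is `T·N / (T + N)` with `N = H·W`, so the saving grows with both the number of frames and the grid size.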

The early fusion of the `Robot State` plausibly serves to ground the visual or sensory processing in the robot's own configuration (e.g., joint angles, position), which should help the model make predictions or decisions that are consistent with the robot's immediate situation. The stacked `L` layers allow the model to build increasingly abstract and integrated representations of the scene and its dynamics. Because the `Output Tokens` mirror the input's T × H × W layout, the most direct reading is a per-position prediction, such as tokens for upcoming observations; downstream uses could include action prediction or control signal generation. The overall design emphasizes hierarchical feature extraction and the integration of multimodal (exteroceptive and proprioceptive) information.
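How much capacity the `L` stacked layers add depends on whether their weights are independent or shared. The sketch below counts parameters for both readings under assumed standard shapes: Q, K, V, and output projections of size `d × d` with biases for each attention, a `d → f → d` MLP with biases, and a scale and shift for each of the three Layer Norms per layer. The widths `d`, `f` and the depth `L` are hypothetical, and the robot-state, embedding, and output-head parameters are not included.

```python
def st_layer_params(d, f):
    """Parameter count of one layer, under assumed standard shapes."""
    attention = 4 * (d * d + d)      # Q, K, V, output projections with biases
    mlp = d * f + f + f * d + d      # d -> f -> d with biases
    norms = 3 * 2 * d                # three Layer Norms, scale + shift each
    return 2 * attention + mlp + norms  # spatial + temporal attention, MLP, norms

def stack_params(num_layers, d, f, shared=False):
    """Total parameters for the `L Layers` stack."""
    per_layer = st_layer_params(d, f)
    return per_layer if shared else num_layers * per_layer

d, f, L = 512, 2048, 12
print(st_layer_params(d, f))        # 4204032
print(stack_params(L, d, f))        # 50448384  (independent weights per layer)
print(stack_params(L, d, f, True))  # 4204032   (one shared set of weights)
```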